mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-07-21 04:50:39 +08:00

Compare commits

1124 Commits

zmeir-publ

...

example-pr

| Author | SHA1 | Date | |

|---|---|---|---|

| 7005a0466a | |||

| 648dd3299f | |||

| 77a6fafdfc | |||

| ea7511e3c8 | |||

| 512c92fe51 | |||

| 1853b4ef47 | |||

| 2f10b4f3c5 | |||

| 73a20076eb | |||

| afb633811f | |||

| 81da328ae3 | |||

| 729f5e9c8e | |||

| fdc776887d | |||

| cb64f92cce | |||

| f3ad0e1d2a | |||

| 480e2ee678 | |||

| 9b97073174 | |||

| 4271bb7e52 | |||

| e9bf8574a8 | |||

| 2ce4af16cb | |||

| 2c1dfe7f3f | |||

| f7a6348401 | |||

| 02c0c89b13 | |||

| b8cc110cbe | |||

| 2b1e841ef1 | |||

| a247fc3263 | |||

| 654938f27c | |||

| a7a0de764c | |||

| 1b22e59b4b | |||

| f908d02ab4 | |||

| 7d2a35e32c | |||

| e351428848 | |||

| 4cd6649a44 | |||

| e62acef6d2 | |||

| a043eb939b | |||

| 73eafa2c3d | |||

| a61e492fe1 | |||

| 243f0f2b21 | |||

| 93b6d31505 | |||

| 429aed04b1 | |||

| eeb20b055a | |||

| 4b073b32a5 | |||

| f629755a9a | |||

| c1ed3ee511 | |||

| a4e6c99c82 | |||

| 0b70e07b8c | |||

| 862c236076 | |||

| 5c2f81a928 | |||

| b76bc390f1 | |||

| 25d1e84b7f | |||

| 70a409abf1 | |||

| cf3401536a | |||

| 2feaee4306 | |||

| 863eb0105d | |||

| 21a7a0f136 | |||

| d2a129fe30 | |||

| fe796245a3 | |||

| 10bc84eb5b | |||

| 06c0a35a65 | |||

| 082bcd00a1 | |||

| 71b421efa3 | |||

| 317fec0536 | |||

| 4dcbce41c8 | |||

| b3fa654446 | |||

| e09439fc1b | |||

| 324e481ce7 | |||

| abfad088e3 | |||

| f30789e6c8 | |||

| 5c01f97f54 | |||

| 2d726edbe4 | |||

| 526ad00812 | |||

| 37812dfede | |||

| c9debc38f2 | |||

| fe7d2bb924 | |||

| 586785ffde | |||

| c21e606eee | |||

| a658766046 | |||

| 3f2175e548 | |||

| 3ff30fcc92 | |||

| 07abf4788c | |||

| 492dd3c281 | |||

| d2fb1cfce5 | |||

| 24fe5a572a | |||

| 3af9c3bfb9 | |||

| c22084c7ac | |||

| 96b91c9daa | |||

| 55464d5c5b | |||

| f6048e8157 | |||

| e474982485 | |||

| 18f06cc670 | |||

| 574e3b9d32 | |||

| f7410da330 | |||

| 76f3d54519 | |||

| 59f117d916 | |||

| 4a71259be7 | |||

| b90dde48c0 | |||

| c57807e53a | |||

| 0e54a13272 | |||

| ddc6c02018 | |||

| b67d06ae59 | |||

| ca1289af03 | |||

| 5e642c10fa | |||

| b5a643d67a | |||

| 580eede021 | |||

| ea56910a2f | |||

| 51e1278cd7 | |||

| ddeb4b598d | |||

| 7e029ead45 | |||

| f8f57419c4 | |||

| 917f4b6a01 | |||

| 97d6fb999a | |||

| 1373ca23fc | |||

| 4521077433 | |||

| 6264624c05 | |||

| 5f6fa5a082 | |||

| 2dcee63df5 | |||

| c6cc676275 | |||

| b8e4d10b9d | |||

| 70a957caf0 | |||

| 5ff9aaedfd | |||

| fc8865f8dc | |||

| 466af37675 | |||

| 2202ff1cdf | |||

| 2022018d4c | |||

| b1c374808d | |||

| 20978402ea | |||

| 8f615e17a3 | |||

| 5cbbaf44c9 | |||

| f96d4924e7 | |||

| f36b672eaa | |||

| f104b70703 | |||

| d4e979cb02 | |||

| 668041c09f | |||

| aa73eb2841 | |||

| 14d4ca8c74 | |||

| 690c113603 | |||

| 1a28c77783 | |||

| 0326b7e4ac | |||

| d8ae32fc55 | |||

| 8db2e3b2a0 | |||

| 9465b7b577 | |||

| d7df4287f8 | |||

| b3238e90f2 | |||

| fdfd6247fb | |||

| 46d4d04e94 | |||

| 0f6564f42d | |||

| cddf183e03 | |||

| e80a0ed9c8 | |||

| d6d362b51e | |||

| 4eff0282a1 | |||

| 8fc07df6ef | |||

| 84e4b607cc | |||

| 613ccb4c34 | |||

| e95a6a8b07 | |||

| 2add584fbc | |||

| 54d7d59177 | |||

| b3129c7dd9 | |||

| 3f76d95495 | |||

| 1b600cd85f | |||

| 26cc26129c | |||

| d1d7903e39 | |||

| dff4d1befc | |||

| 3504a64269 | |||

| 83247cadec | |||

| 5ca1748b93 | |||

| c7a681038d | |||

| eb977b4c24 | |||

| b62e0967d5 | |||

| 14a934b146 | |||

| 26dc2e9d21 | |||

| d78a71184d | |||

| eae30c32a2 | |||

| bc28d657b2 | |||

| 416a5495da | |||

| a2b27dcac8 | |||

| d8e4e2e8fd | |||

| 3fae5cbd8d | |||

| 896a81d173 | |||

| b216af8f04 | |||

| 388cc740b6 | |||

| 6214494c84 | |||

| 762a6981e1 | |||

| b362c406bc | |||

| 7a342d3312 | |||

| 2e95988741 | |||

| 9478447141 | |||

| 082293b48c | |||

| e1d92206f3 | |||

| 557ec72bfe | |||

| 6b4b16dcf9 | |||

| c4899a6c54 | |||

| 24d82e65cb | |||

| 2567a6cf27 | |||

| 94cb6b9795 | |||

| e878bbbe36 | |||

| 172b5f0787 | |||

| 0df0542958 | |||

| 7d89b82967 | |||

| c5f9bbbf92 | |||

| a5e5a82952 | |||

| ccbb62b50a | |||

| a8dddd1999 | |||

| f5c6dd55b8 | |||

| 0e932af2e3 | |||

| 1df36c6a44 | |||

| e9891fc530 | |||

| 9e5e9afe92 | |||

| 5e43c202dd | |||

| 727eea2b62 | |||

| 37e6608e68 | |||

| f64d5f1e2a | |||

| 8fdf174dec | |||

| 29d4f98b19 | |||

| 737792d83c | |||

| 7e5889061c | |||

| 755e04cf65 | |||

| 44d6c95714 | |||

| 14610d5375 | |||

| f9c832d6cb | |||

| c2bec614e5 | |||

| 49725e92f2 | |||

| a1e32d8331 | |||

| 0293412a42 | |||

| 10ec0a1812 | |||

| 69b68b78f5 | |||

| c5bc4b44ff | |||

| 39e5102a2e | |||

| f0991526b5 | |||

| 6c82bc9a3e | |||

| 54f41dd603 | |||

| 094f641fb5 | |||

| a35a75eb34 | |||

| 5a7c118b56 | |||

| cf9e0fbbc5 | |||

| ef9af261ed | |||

| ff79776410 | |||

| ec3f2fb485 | |||

| 94a2a5e527 | |||

| ea4bc548fc | |||

| 1eefd3365b | |||

| db37ee819a | |||

| e352c98ce8 | |||

| e96b03da57 | |||

| 1d2aedf169 | |||

| 4c484f8e86 | |||

| 8a79114ed9 | |||

| cd69f43c77 | |||

| 6d6d864417 | |||

| b286c8ed20 | |||

| 7238c81f0c | |||

| 62412f8cd4 | |||

| 5d2bdadb45 | |||

| 06d030637c | |||

| 8e3fa3926a | |||

| 92071fcf1c | |||

| fed1c160eb | |||

| e37daf6987 | |||

| 8fc663911f | |||

| bb2760ae41 | |||

| 3548b88463 | |||

| c917e48098 | |||

| e6ef123ce5 | |||

| 194bfe1193 | |||

| e456cf36aa | |||

| fe3527de3c | |||

| b99c769b53 | |||

| 60bdfb78df | |||

| c0b3c76884 | |||

| e1370a8385 | |||

| c623c3baf4 | |||

| d0f3a4139d | |||

| 3ddc7e79d1 | |||

| 3e14edfd4e | |||

| 15573e2286 | |||

| ce64877063 | |||

| 6666a128ee | |||

| 9fbf89670d | |||

| ad1c51c536 | |||

| 9ab7ccd20d | |||

| c907f93ab8 | |||

| 29a8cf8357 | |||

| 7b6a6c7164 | |||

| cf4d007737 | |||

| a751bb0ef0 | |||

| 26d6280a20 | |||

| 32a19fdab6 | |||

| 775ccb3f25 | |||

| a1c6c57f7b | |||

| 73bb70fef4 | |||

| dcac6c145c | |||

| 4bda9dfe04 | |||

| 66644f0224 | |||

| e74bb80668 | |||

| e06fb534d3 | |||

| 71a341855e | |||

| 7d949ad6e2 | |||

| 4b5f86fcf0 | |||

| cd11f51df0 | |||

| b40c0b9b23 | |||

| 816ddeeb9e | |||

| 11f01a226c | |||

| b57ec301e8 | |||

| 71da20ea7e | |||

| c895657310 | |||

| eda20ccca9 | |||

| aed113cd79 | |||

| 0ab07a46c6 | |||

| 5f32e28933 | |||

| 7538c4dd2f | |||

| e3845283f8 | |||

| a85921d3c5 | |||

| 27b64fbcaf | |||

| 8d50f2ae82 | |||

| e97a03f522 | |||

| 2e3344b5b0 | |||

| e1b51eace7 | |||

| 49e3d5ec5f | |||

| afa78ed3fb | |||

| 72d5e4748e | |||

| 61d3e1ebf4 | |||

| 055b5ea700 | |||

| 3434296792 | |||

| ae375c2ff0 | |||

| 3d5efdf4f3 | |||

| 9a585de364 | |||

| c27dc436c4 | |||

| e83747300d | |||

| 7374243d0b | |||

| 5c568bc0c5 | |||

| 22c196cb3b | |||

| d2cc856cfc | |||

| 013a689b33 | |||

| d772213cfc | |||

| 638db96311 | |||

| 4dffabf397 | |||

| 6f2bbd3baa | |||

| 9e41f3780c | |||

| f53ec1d0cc | |||

| f7666cb59a | |||

| a7cb59ca8b | |||

| ca0ea77415 | |||

| 0cf27e5fee | |||

| f3bdbfc103 | |||

| 20e3acdd86 | |||

| f965b09571 | |||

| e6bea76eee | |||

| 414f2b6767 | |||

| 6541575a0e | |||

| 02570ea797 | |||

| b8583c998d | |||

| 726594600b | |||

| c77cc1d6ed | |||

| b6c9e01a59 | |||

| ec673214c8 | |||

| 16777a5334 | |||

| 65bb70a1dd | |||

| 1a89c7eadf | |||

| 07617eab5a | |||

| f9e4c2b098 | |||

| fa24413201 | |||

| b6cabda586 | |||

| abbce60f18 | |||

| 5daaaf2c1d | |||

| e8f207691e | |||

| b0dce4ceae | |||

| fc494296d7 | |||

| 67b4069540 | |||

| e6defcc846 | |||

| 096fcbbc17 | |||

| eb7add1c77 | |||

| 1b6fb3ea53 | |||

| c57b70f1d4 | |||

| a2c3db463a | |||

| 193da1c356 | |||

| 5bc26880b3 | |||

| 21a1cc970e | |||

| 954727ad67 | |||

| 1314898cbf | |||

| ff04d459d7 | |||

| 88ca501c0c | |||

| fe284a8f91 | |||

| d41fe0cf79 | |||

| 3673924fe9 | |||

| d5c098de73 | |||

| 9f5c0daa8e | |||

| bce2262d4e | |||

| e6f1e0520a | |||

| d8de89ae33 | |||

| 428c38e3d9 | |||

| 7ffdf8de37 | |||

| 83e670c5df | |||

| c324d88be3 | |||

| 41166dc271 | |||

| e7258e732b | |||

| 18ee9d66b0 | |||

| 8755f635b4 | |||

| e2417ebe88 | |||

| 264dea2a8b | |||

| da98fd712f | |||

| e6548f4fe1 | |||

| e66fed2468 | |||

| 1b3fb49f9c | |||

| 66a5f06b45 | |||

| 51c817ba29 | |||

| 8f9f09ecbf | |||

| d77a71bf47 | |||

| 70c0ef5ce1 | |||

| 92e9012fb6 | |||

| 43dc648b05 | |||

| 6dee18b24a | |||

| baa0e95227 | |||

| b27f57d05d | |||

| fd8c90041c | |||

| ea6253e2e8 | |||

| 2a270886ea | |||

| 2945c36899 | |||

| 1bab26f1c5 | |||

| 72eecbbf61 | |||

| 989c56220b | |||

| e387086890 | |||

| 088f256415 | |||

| d92f5284df | |||

| f3e794e50b | |||

| 44239f1a79 | |||

| 428e6382bd | |||

| d13a92515b | |||

| eaf7cfbcf2 | |||

| abb633db0f | |||

| ca11cfa54e | |||

| 8df57941c6 | |||

| af45dcc7df | |||

| 18c33ae6fc | |||

| 896d65a43a | |||

| 02ea2c7a40 | |||

| fdbb7f176c | |||

| 5386ec359d | |||

| 85f64ad895 | |||

| 589d329a3c | |||

| 3585a4ebff | |||

| 706f6bf44d | |||

| 54f29fcf38 | |||

| e941fa9ec0 | |||

| 4479c5f11b | |||

| b2369c66d8 | |||

| ab5ac8ffa8 | |||

| ccc7f1e10a | |||

| 8d075b76ae | |||

| 32a8b0e9bc | |||

| 69c6acf89d | |||

| e07412c098 | |||

| 8cec3ffde3 | |||

| 25bc54785b | |||

| 8b033ccc94 | |||

| 2003b6915b | |||

| 175218c779 | |||

| f96c6cbc95 | |||

| 4dbb70c9d5 | |||

| 73cc7c2d71 | |||

| 902975b660 | |||

| 813fa8571e | |||

| b5c505f727 | |||

| 26b9e8a235 | |||

| c90e72cb75 | |||

| 55f022d93e | |||

| 71b532d4d5 | |||

| c18ae77299 | |||

| 2f0fa246c0 | |||

| a371f7ab84 | |||

| e1149862b2 | |||

| b8f93516ce | |||

| d15d374cdc | |||

| cae0f627e2 | |||

| 7fbdc3aead | |||

| 0551922839 | |||

| 043d453cab | |||

| 663ae92bdf | |||

| 96824aa9e2 | |||

| 5cca299b16 | |||

| cd3527f7d4 | |||

| bb12c75431 | |||

| 4accddcaa7 | |||

| bb8a0f10f4 | |||

| c3cbaaf09e | |||

| a6e65e867f | |||

| 0df8071673 | |||

| b17a4d9551 | |||

| dc7db4cbdd | |||

| 4e94fcc372 | |||

| 4c72cfbff4 | |||

| ac89867ac7 | |||

| 34ed598c20 | |||

| e7aee84ea8 | |||

| 388684e2e8 | |||

| 8f81c18647 | |||

| ba78475944 | |||

| 75dd5688fa | |||

| aa32024078 | |||

| 9167c20512 | |||

| a7fb5d98b1 | |||

| fda47bb5cf | |||

| 9c4f849066 | |||

| 3e2e2d6c6e | |||

| 56cc804fcf | |||

| 62746294e3 | |||

| d384b0644e | |||

| 3e07fe618f | |||

| be54fb5bf8 | |||

| 46ec3c0754 | |||

| 5e608cc7e7 | |||

| 04162564ca | |||

| 992f51a019 | |||

| 2bc25b7435 | |||

| fcd9821d10 | |||

| 911ad299e2 | |||

| fbfa186733 | |||

| 7545b25823 | |||

| 1370a051f1 | |||

| dcbd3132d1 | |||

| 497f84b3bd | |||

| c2fe2fc657 | |||

| f7abdc6ae8 | |||

| d327245edf | |||

| 632de3f186 | |||

| de14b0e4c0 | |||

| f010d1389b | |||

| 4411f6d88a | |||

| a2ca43afcd | |||

| 1f62520606 | |||

| c0511c954e | |||

| 818ab5a9e8 | |||

| 291ffdd6ae | |||

| 4fbe7d14b5 | |||

| ea91a38541 | |||

| caaee4e43d | |||

| 43af4aa182 | |||

| e343ce8468 | |||

| 7b2c01181b | |||

| 978c56c128 | |||

| 4043dfff9e | |||

| 279d45996f | |||

| 01aa038ad6 | |||

| 084256b923 | |||

| dc42713217 | |||

| 99f17666c5 | |||

| bba22667f1 | |||

| 1b8349b0ef | |||

| b94e3521d1 | |||

| 32931f0bc0 | |||

| 72ac8e8091 | |||

| 33045e6898 | |||

| 069c3a8e5c | |||

| 9c0656c296 | |||

| 228ee26541 | |||

| f8d548367f | |||

| d3f466f59b | |||

| 6b45940128 | |||

| a52e94fcbc | |||

| ee3874f0aa | |||

| 31ba7acf49 | |||

| b7a2551cab | |||

| d4eb100cbc | |||

| 21feb92b75 | |||

| 2f6178306f | |||

| 36e7c1c22f | |||

| c31baa5aea | |||

| 67052aa714 | |||

| caee7cbf50 | |||

| 9bee3055c2 | |||

| 901eda2f10 | |||

| 8cf7d2d0b1 | |||

| d7f43d6ee0 | |||

| 9bd5140ea4 | |||

| 12bd9e8b42 | |||

| ca8997b616 | |||

| 8e42162b5e | |||

| 98d0835c48 | |||

| 2aef9dfe55 | |||

| 115b513c9b | |||

| fd63fe4c95 | |||

| d40285e4d3 | |||

| 517658fb37 | |||

| f9f0f220c2 | |||

| 6382b8a68b | |||

| e371b217ec | |||

| 7dec7b0583 | |||

| bf6a235add | |||

| 1d9489c734 | |||

| bd588b4509 | |||

| 245f29e58a | |||

| 7f5f2d2d1a | |||

| fe500845b7 | |||

| b42b2536b5 | |||

| 498ad3d19c | |||

| 892dbe458e | |||

| 1b098aea13 | |||

| ed1816a2d7 | |||

| e90c9e5853 | |||

| e4f28b157f | |||

| 6fb8a882af | |||

| 9889d26d3e | |||

| b23a4c0535 | |||

| 0f7a481eaa | |||

| 3fc88b2bc4 | |||

| ed5aaaab45 | |||

| 145b5db458 | |||

| 8321792a8d | |||

| 8af8fd8e5d | |||

| 753ea3e44c | |||

| 660601f7c5 | |||

| 4e7f67f596 | |||

| e486addb8f | |||

| 4a5310e2a1 | |||

| 8962c9cf8a | |||

| bc95cf5b8e | |||

| dcd8196b94 | |||

| 901c1dc3f0 | |||

| adb9964823 | |||

| 335877c4a7 | |||

| 5da6a0147c | |||

| cd1ae55f4f | |||

| ca50724952 | |||

| 460b315b53 | |||

| 00ff516e8a | |||

| 55b3c3fe5c | |||

| 1443df7227 | |||

| 739b63f73b | |||

| 4a54532b6a | |||

| 0dbe64e401 | |||

| c0b23e1091 | |||

| 704c169181 | |||

| 746140b26e | |||

| 53ce609266 | |||

| 7584ec84ce | |||

| 140760c517 | |||

| 56e9493f7a | |||

| 958ecf333a | |||

| ae3d7067d3 | |||

| a49e81d959 | |||

| 916d7c236e | |||

| 6343d35616 | |||

| 0203086aac | |||

| 0066156aca | |||

| 544bac7010 | |||

| 34090b078b | |||

| 9567199bb2 | |||

| 1f7a833a54 | |||

| 990f69a95d | |||

| 2b8a8ce824 | |||

| 6585854c85 | |||

| 98019fe97f | |||

| d52c11b907 | |||

| e79bcbed93 | |||

| 690c819479 | |||

| 630d1d9e03 | |||

| 20c32375e1 | |||

| 44b790567b | |||

| 4d6d6c4812 | |||

| 7f6493009c | |||

| 7a6efbcb55 | |||

| 777c773a90 | |||

| f7c698ff54 | |||

| 1b780c0496 | |||

| 2e095807b7 | |||

| ae98cfe17b | |||

| 35a6eb2e52 | |||

| 8b477c694c | |||

| 1254ad1727 | |||

| eeea38dab3 | |||

| 8983fd9071 | |||

| 918ae25654 | |||

| de39595522 | |||

| 4c6595148b | |||

| 02e0f958e7 | |||

| be19b64542 | |||

| 24900305d6 | |||

| 06d00032df | |||

| 244cbbd27f | |||

| 970a7896e9 | |||

| 8263bf5f9c | |||

| 8823d8c0e9 | |||

| 5cbcef276c | |||

| ce9014073c | |||

| 376c4523dd | |||

| e0ca594a69 | |||

| 48233fde23 | |||

| 9c05a6b1b5 | |||

| da848d7e39 | |||

| c6c97ac98a | |||

| 92e23ff260 | |||

| aa03654ffc | |||

| 85130c0d30 | |||

| 3c27432f50 | |||

| eec62c14dc | |||

| ad6dd38fe3 | |||

| 307b3b4bf7 | |||

| 8e7e13ab62 | |||

| bd085e610a | |||

| d64b1f80da | |||

| f26264daf1 | |||

| 2aaa722102 | |||

| edaeb99b43 | |||

| ce54a7b79e | |||

| f14c5d296a | |||

| 18d46fb655 | |||

| 07bd926678 | |||

| d3c7dcc407 | |||

| f5dd7207dc | |||

| e5e10d5ec5 | |||

| 314d13e25f | |||

| 2dc2a45e4b | |||

| 39522abc03 | |||

| 3051dc50fb | |||

| e776cebc33 | |||

| 33ef23289f | |||

| 85bc307186 | |||

| a0f53d23af | |||

| 82ac9d447b | |||

| 9286e61753 | |||

| 56828f0170 | |||

| 9e878d0d9a | |||

| 0e42634da4 | |||

| b94ed61219 | |||

| ceaff2a269 | |||

| 12167bc3a1 | |||

| 355abfc39a | |||

| c163d47a63 | |||

| 5d529a71ad | |||

| 5079daa4ad | |||

| f0dc485305 | |||

| db6bf41051 | |||

| 123741faf3 | |||

| 67ff50583a | |||

| 01d1cf98f4 | |||

| 52ba2793cd | |||

| fd39c64bed | |||

| 49c58f997a | |||

| 16150e9c84 | |||

| 6599cbc7f2 | |||

| 2dfad0bb20 | |||

| 53108a9b20 | |||

| f2ab623e76 | |||

| 3a93dcd6a7 | |||

| d31b66b656 | |||

| f17b4fcc9e | |||

| 5582a901ff | |||

| 412c86593d | |||

| 04be1573d5 | |||

| 3d771e28ce | |||

| a9a7a55f02 | |||

| 62fe1de12d | |||

| 4184f81090 | |||

| 635b243280 | |||

| cbe0a695d8 | |||

| 782c170883 | |||

| 9157fa670e | |||

| 36e5e5a17e | |||

| f4f040bf8d | |||

| 82fb611a26 | |||

| 580af44e7d | |||

| 09ef809080 | |||

| 2b22f712fb | |||

| b85679e5e4 | |||

| dcad490513 | |||

| fb9335f424 | |||

| 81c38f9646 | |||

| b1a2e3e323 | |||

| 542bc9586a | |||

| b3749d08e2 | |||

| 31e91edebc | |||

| 6693aa3cbc | |||

| fda98643c2 | |||

| 2bbb25d59c | |||

| 08afeb9759 | |||

| 2d5b0fa37f | |||

| 99f5a2ab0f | |||

| d7dcecfe00 | |||

| c6f8d985c2 | |||

| 532dfd223e | |||

| 9770f4709a | |||

| 35afe758e9 | |||

| 50125ae57f | |||

| 6595c3e0c9 | |||

| fdd16f6c75 | |||

| 7b7e913195 | |||

| 5477469a91 | |||

| dff4646920 | |||

| 6e7622822e | |||

| 631fb93b28 | |||

| dee1f168f8 | |||

| bb18e32c56 | |||

| 7803d8eec4 | |||

| 9a84b4b184 | |||

| 70286e9574 | |||

| 3f60d12a9a | |||

| 164b340c29 | |||

| 4bb035ec0f | |||

| 23a79bc8fe | |||

| 1db53ae1ad | |||

| cca951d787 | |||

| 230d684cd3 | |||

| 0a02fa8597 | |||

| f82b9620af | |||

| 524faadffb | |||

| 82710c2d15 | |||

| ce29d9eb49 | |||

| b7b650eb05 | |||

| 6ca0655517 | |||

| edcf89a456 | |||

| 7762a67250 | |||

| 7049c73790 | |||

| cc7be0811a | |||

| d3a5aea89e | |||

| dd87df49f5 | |||

| e85bcf3a17 | |||

| abb754b16b | |||

| bb5878c99a | |||

| 273a9e35d9 | |||

| fcc208d09f | |||

| 20bbdac135 | |||

| ceedf2bf83 | |||

| 2d6b947292 | |||

| 2e13b12fe6 | |||

| 2d56c88291 | |||

| cf9c6a872d | |||

| 0bb8ab70a4 | |||

| 4a47b78a90 | |||

| 3e542cd88b | |||

| 17ed050ca7 | |||

| e24c5e3501 | |||

| b206b1c5ff | |||

| 0270306d3c | |||

| 3e09b9ac37 | |||

| 725ac9e85d | |||

| e00500b90c | |||

| f1f271fa00 | |||

| d38c5236dd | |||

| 49a3a1e511 | |||

| 1b0b90e51d | |||

| 64481e2d84 | |||

| e0f295659d | |||

| fe75e3f2ec | |||

| e3274af831 | |||

| 95b6abef09 | |||

| 7f1849a867 | |||

| 7760f37dee | |||

| ebbe655c40 | |||

| 164ed77d72 | |||

| b1148e5f7a | |||

| 2012e25596 | |||

| a75253097b | |||

| 079d62af56 | |||

| 6c4a5bae52 | |||

| 886139c6b5 | |||

| 8f751f7371 | |||

| 43297b851f | |||

| 4f39239e73 | |||

| 00e1925927 | |||

| 7189b3ab41 | |||

| a00038fbd8 | |||

| a45343793a | |||

| 703215fe83 | |||

| 0f975ccf4a | |||

| 7367c62cf9 | |||

| fed0ea349a | |||

| bd86266a4b | |||

| bd07a0cd7f | |||

| ed8554699b | |||

| 749ae1be79 | |||

| 0e3dbbd0f2 | |||

| 7a57db5d88 | |||

| 102edcdcf1 | |||

| c92648cbd5 | |||

| 26b008565b | |||

| 0dec24aa37 | |||

| 68a2f2a27d | |||

| cfa14178f8 | |||

| b97c4b6114 | |||

| 3d43cecbea | |||

| eb143ec851 | |||

| 3e94a71dcd | |||

| dd14423b07 | |||

| 8e47fdc284 | |||

| ab607d74be | |||

| bfe7304449 | |||

| e12874b696 | |||

| 696e2bd6ff | |||

| 450f410e3c | |||

| 08a3f033cb | |||

| c5a79ceedd | |||

| 13547afc58 | |||

| 8ae936e504 | |||

| e577d27f9b | |||

| dfb73c963a | |||

| 8c0370a166 | |||

| d7b77764c3 | |||

| 6605f9c444 | |||

| 2a8adcbbd6 | |||

| 0b22c8d427 | |||

| dfa0d9fd43 | |||

| c8470645e2 | |||

| 5a181e52d5 | |||

| 0ad8dcd2aa | |||

| e2d015a20c | |||

| a0cfe4b48a | |||

| a6ba8b614a | |||

| 4f0fabd2ca | |||

| 42b047a14e | |||

| 3daf94954a | |||

| b564d8ac32 | |||

| d8e6da74db | |||

| 278f1883fd | |||

| ef71a7049e | |||

| 6fde87b3bd | |||

| 07fe91e57b | |||

| 01e2f3f0cd | |||

| 63a703c000 | |||

| 4664d91844 | |||

| 8f16c46012 | |||

| a8780f722d | |||

| 1a8fce1505 | |||

| 8519b106f9 | |||

| d375dd62fe | |||

| 3770bf8031 | |||

| 5c527eca66 | |||

| b4ca52c7d8 | |||

| a78d741292 | |||

| 42388b1f8d | |||

| 0167003bbc | |||

| 2ce91fbdf5 | |||

| aa7659d6bf | |||

| 4aa54b9bd4 | |||

| c6d0bacc08 | |||

| 99ed9b22a1 | |||

| eee6d51b40 | |||

| a50e137bba | |||

| 92c0522f4d | |||

| 6a72df2981 | |||

| 808ca48605 | |||

| c827cbc0ae | |||

| 48fcb46d4f | |||

| 66b94599ec | |||

| 231efb33c1 | |||

| eb798dae6f | |||

| 52576c79b3 | |||

| cce2a79a1f | |||

| 413e5f6d77 | |||

| 09ca848d4c | |||

| 801923789b | |||

| cfb696dfd5 | |||

| 2e7a0a88fa | |||

| 1dbbafc30a | |||

| d8eae7faab | |||

| 14eceb6e61 | |||

| 884317c4f7 | |||

| c5f4b229b8 | |||

| 5a2a17ec25 | |||

| 1bd47b0d53 | |||

| 7531ccd31f | |||

| 3b19827ae2 | |||

| ea6e1811c1 | |||

| bc2cf75b76 | |||

| 9e1e0766b7 | |||

| ccde68293f | |||

| 99d53af28d | |||

| 5ea607be58 | |||

| e3846a480e | |||

| a60a58794c | |||

| 8ae5faca53 | |||

| 28d6adf62a | |||

| 1229fba346 | |||

| 59a59ebf66 | |||

| 36ab12c486 | |||

| 0254e3d04a | |||

| f6036e936e | |||

| 10a07e497d | |||

| 3b334805ee | |||

| b6f6c903a0 | |||

| 55637a5620 | |||

| 404cc0a00e | |||

| 0815e2024c | |||

| 41dcb75e8e | |||

| d23daf880f | |||

| d1a8a610e9 | |||

| 918549a4fc | |||

| 8f482cd41a | |||

| 34096059ff | |||

| 2dfbfec8c2 | |||

| 6170995665 | |||

| ca42a54bc3 | |||

| c0610afe2a | |||

| d4cbcc465c | |||

| adb3f17258 | |||

| 2c03a67312 | |||

| 55eb741965 | |||

| 8e6518f071 | |||

| c9c95d60d4 | |||

| 02ecaa340f | |||

| cca809e91c | |||

| 57ff46ecc1 | |||

| 3819d52eb0 | |||

| 3072325d2c | |||

| abca2fdcb7 | |||

| 4d84f76948 | |||

| dd8f6eb923 | |||

| b9c25e487a | |||

| 1bf27c38a7 | |||

| 1f987380ed | |||

| cd8bbbf889 | |||

| 8e5498ee97 | |||

| 0412d7aca0 | |||

| 1eac3245d9 | |||

| cd51bef7f7 | |||

| e8aa33fa0b | |||

| 54b021b02c | |||

| 32151e3d9a | |||

| 32358678e6 | |||

| 42e32664a1 | |||

| 1e97236a15 | |||

| 321f7bce46 | |||

| 02a1d8dbfc | |||

| e34f9d8d1c | |||

| 35dac012bd | |||

| 21ced18f50 | |||

| fca78cf395 | |||

| d1b91b0ea3 | |||

| 76e00acbdb | |||

| 2f83e7738c | |||

| f4a226b0f7 | |||

| f5e2838fc3 | |||

| bbdfd2c3d4 | |||

| 74572e1768 | |||

| f0a17b863c | |||

| 86fd84e113 | |||

| d5b9be23d3 | |||

| 057bb3932f | |||

| 05f29cc406 | |||

| 63c4c7e584 | |||

| 1ea23cab96 | |||

| e99f9fd59f | |||

| fdf6a3e833 | |||

| 79cb94b4c2 | |||

| 9adec7cc10 | |||

| 1f0df47b4d | |||

| a71a12791b | |||

| 23fa834721 | |||

| 9f67d07156 | |||

| 6731a7643e | |||

| f87fdd88ad | |||

| f825f6b90a | |||

| f5d5008a24 | |||

| 0b63d4cde5 | |||

| 2e246869d0 | |||

| 2f9546e144 | |||

| 6134c2ff61 | |||

| 3cfbba74f8 | |||

| 050bb60671 | |||

| 12a7e1ce6e | |||

| cd0438005b | |||

| 7c3188ae06 | |||

| 6cd38a37cd | |||

| 12e51bb6aa | |||

| e2a4cd6b03 | |||

| 329e228aa2 | |||

| 3d5d517f2a | |||

| a2eb2e4dac | |||

| d89792d379 | |||

| 23ed2553c4 | |||

| fe29ce2911 | |||

| df25a3ede2 | |||

| 4c36fb4df2 | |||

| 67c61e0ac8 | |||

| 0985db4e36 | |||

| ee2c00abeb | |||

| 577f24d107 | |||

| fc24b34c2b | |||

| 1e962476da | |||

| 3326327572 | |||

| 36be79ea38 | |||

| 523839be7d | |||

| d1586ddd77 | |||

| 3420853923 | |||

| 1f373d7b0a | |||

| 7fdbd6a680 | |||

| 17b40a1fa1 | |||

| c47e74c5c7 | |||

| 7abbe08ff1 | |||

| 8038b6ab99 | |||

| 6e26ad0966 | |||

| 7e2449b228 | |||

| 97bfee47a3 | |||

| 3b27c834a4 | |||

| 5bc2ef1eff | |||

| 2f558006bf | |||

| 8868c92141 | |||

| 370520df51 | |||

| e17dd66dce | |||

| fc8494d696 | |||

| f8aea909b4 | |||

| 2e832b8fb4 | |||

| ccddbeccad | |||

| a47fa342cb | |||

| f73cddcb93 | |||

| 5f36f0d753 | |||

| dc4bf13d39 | |||

| bdf7eff7cd | |||

| dc67e6a66e | |||

| 6d91f44634 | |||

| 0396e10706 | |||

| 77f243b7ab | |||

| c507785475 | |||

| 5c5015b267 | |||

| 3efe08d619 | |||

| 2e36fce4eb | |||

| d6d4427545 | |||

| 5d45632247 | |||

| 90c045e3d0 | |||

| 7f0a96d8f7 | |||

| 8fb9affef3 | |||

| 6c42a471e1 | |||

| f2b74b6970 | |||

| ffd11aeffc | |||

| e5a8ed205e | |||

| 90f97b0226 | |||

| 978348240b | |||

| 4d92e7d9c2 |

@@ -1,3 +1,5 @@
venv/
pr_agent/settings/.secrets.toml
pics/
pics/
pr_agent.egg-info/
build/

38

.github/workflows/build-and-test.yaml

vendored

Normal file

@@ -0,0 +1,38 @@
name: Build-and-test

on:
  push:
  pull_request:
    types: [ opened, reopened ]

jobs:
  build-and-test:
    runs-on: ubuntu-latest

    steps:
      - id: checkout
        uses: actions/checkout@v2

      - id: dockerx
        name: Setup Docker Buildx
        uses: docker/setup-buildx-action@v2

      - id: build
        name: Build dev docker
        uses: docker/build-push-action@v2
        with:
          context: .
          file: ./docker/Dockerfile
          push: false
          load: true
          tags: codiumai/pr-agent:test
          cache-from: type=gha,scope=dev
          cache-to: type=gha,mode=max,scope=dev
          target: test

      - id: test
        name: Test dev docker
        run: |
          docker run --rm codiumai/pr-agent:test pytest -v

32

.github/workflows/pr-agent-review.yaml

vendored

Normal file

@@ -0,0 +1,32 @@
# This workflow enables developers to call PR-Agents `/[actions]` in PR's comments and upon PR creation.
# Learn more at https://www.codium.ai/pr-agent/
# This is v0.2 of this workflow file

name: PR-Agent

on:
  pull_request:
  issue_comment:

permissions:
  issues: write
  pull-requests: write

jobs:
  pr_agent_job:
    runs-on: ubuntu-latest
    name: Run pr agent on every pull request
    steps:
      - name: PR Agent action step
        id: pragent
        uses: Codium-ai/pr-agent@main
        env:
          OPENAI_KEY: ${{ secrets.OPENAI_KEY }}
          OPENAI_ORG: ${{ secrets.OPENAI_ORG }} # optional
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
          PINECONE.API_KEY: ${{ secrets.PINECONE_API_KEY }}
          PINECONE.ENVIRONMENT: ${{ secrets.PINECONE_ENVIRONMENT }}
          GITHUB_ACTION.AUTO_REVIEW: true
          GITHUB_ACTION.AUTO_IMPROVE: true

16

.github/workflows/review.yaml

vendored

@@ -1,16 +0,0 @@
on:
  pull_request:
  issue_comment:
jobs:
  pr_agent_job:
    runs-on: ubuntu-latest
    name: Run pr agent on every pull request
    steps:
      - name: PR Agent action step
        id: pragent
        uses: Codium-ai/pr-agent@main
        env:
          OPENAI_KEY: ${{ secrets.OPENAI_KEY }}
          OPENAI_ORG: ${{ secrets.OPENAI_ORG }} # optional
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

6

.gitignore

vendored

@@ -1,4 +1,8 @@
.idea/
venv/
pr_agent/settings/.secrets.toml
__pycache__
__pycache__
dist/
*.egg-info/
build/
review.md

6

.pr_agent.toml

Normal file

@@ -0,0 +1,6 @@
[pr_reviewer]
enable_review_labels_effort = true


[pr_code_suggestions]
summarize=true

45

CHANGELOG.md

Normal file

@@ -0,0 +1,45 @@
## 2023-08-03

### Optimized
- Optimized PR diff processing by introducing caching for diff files, reducing the number of API calls.
- Refactored `load_large_diff` function to generate a patch only when necessary.
- Fixed a bug in the GitLab provider where the new file was not retrieved correctly.

## 2023-08-02

### Enhanced
- Updated several tools in the `pr_agent` package to use commit messages in their functionality.
- Commit messages are now retrieved and stored in the `vars` dictionary for each tool.
- Added a section to display the commit messages in the prompts of various tools.

## 2023-08-01

### Enhanced
- Introduced the ability to retrieve commit messages from pull requests across different git providers.
- Implemented commit messages retrieval for GitHub and GitLab providers.
- Updated the PR description template to include a section for commit messages if they exist.
- Added support for repository-specific configuration files (.pr_agent.yaml) for the PR Agent.
- Implemented this feature for both GitHub and GitLab providers.
- Added a new configuration option 'use_repo_settings_file' to enable or disable the use of a repo-specific settings file.


## 2023-07-30

### Enhanced
- Added the ability to modify any configuration parameter from 'configuration.toml' on-the-fly.
- Updated the command line interface and bot commands to accept configuration changes as arguments.
- Improved the PR agent to handle additional arguments for each action.

## 2023-07-28

### Improved
- Enhanced error handling and logging in the GitLab provider.
- Improved handling of inline comments and code suggestions in GitLab.
- Fixed a bug where an additional unneeded line was added to code suggestions in GitLab.

## 2023-07-26

### Added
- New feature for updating the CHANGELOG.md based on the contents of a PR.
- Added support for this feature for the Github provider.
- New configuration settings and prompts for the changelog update feature.

@@ -1,18 +0,0 @@
## Configuration

The different tools and sub-tools used by CodiumAI pr-agent are easily configurable via the configuration file: `/pr-agent/settings/configuration.toml`.
##### Git Provider:
You can select your git_provider with the flag `git_provider` in the `config` section

##### PR Reviewer:

You can enable/disable the different PR Reviewer abilities with the following flags (`pr_reviewer` section):
```
require_focused_review=true
require_tests_review=true
require_security_review=true
```
You can control the number of suggestions returned by the PR Reviewer with the following flag:
```inline_code_comments=3```
And enable/disable the inline code suggestions with the following flag:
```inline_code_comments=true```

@@ -1,8 +1,9 @@
FROM python:3.10 as base

WORKDIR /app
ADD pyproject.toml .
ADD requirements.txt .
RUN pip install -r requirements.txt && rm requirements.txt
RUN pip install . && rm pyproject.toml requirements.txt
ENV PYTHONPATH=/app
ADD pr_agent pr_agent
ADD github_action/entrypoint.sh /

408

INSTALL.md

@ -1,80 +1,77 @@

|

||||

|

||||

## Installation

|

||||

|

||||

To get started with PR-Agent quickly, you first need to acquire two tokens:

|

||||

|

||||

1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.

|

||||

2. A GitHub\GitLab\BitBucket personal access token (classic) with the repo scope.

|

||||

|

||||

There are several ways to use PR-Agent:

|

||||

|

||||

**Locally**

|

||||

- [Using Docker image (no installation required)](INSTALL.md#use-docker-image-no-installation-required)

|

||||

- [Run from source](INSTALL.md#run-from-source)

|

||||

|

||||

**GitHub specific methods**

|

||||

- [Run as a GitHub Action](INSTALL.md#run-as-a-github-action)

|

||||

- [Run as a polling server](INSTALL.md#run-as-a-polling-server)

|

||||

- [Run as a GitHub App](INSTALL.md#run-as-a-github-app)

|

||||

- [Deploy as a Lambda Function](INSTALL.md#deploy-as-a-lambda-function)

|

||||

- [AWS CodeCommit](INSTALL.md#aws-codecommit-setup)

|

||||

|

||||

**GitLab specific methods**

|

||||

- [Run a GitLab webhook server](INSTALL.md#run-a-gitlab-webhook-server)

|

||||

|

||||

**BitBucket specific methods**

|

||||

- [Run as a Bitbucket Pipeline](INSTALL.md#run-as-a-bitbucket-pipeline)

|

||||

- [Run on a hosted app](INSTALL.md#run-on-a-hosted-bitbucket-app)

|

||||

- [Bitbucket server and data center](INSTALL.md#bitbucket-server-and-data-center)

|

||||

---

|

||||

|

||||

#### Method 1: Use Docker image (no installation required)

|

||||

### Use Docker image (no installation required)

|

||||

|

||||

To request a review for a PR, or ask a question about a PR, you can run directly from the Docker image. Here's how:

|

||||

A list of the relevant tools can be found in the [tools guide](./docs/TOOLS_GUIDE.md).

|

||||

|

||||

1. To request a review for a PR, run the following command:

|

||||

To invoke a tool (for example `review`), you can run directly from the Docker image. Here's how:

|

||||

|

||||

- For GitHub:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr_url> review

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

2. To ask a question about a PR, run the following command:

|

||||

|

||||

- For GitLab:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr_url> ask "<your question>"

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

Possible questions you can ask include:

|

||||

Note: If you have a dedicated GitLab instance, you need to specify the custom url as variable:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> -e GITLAB.URL=<your gitlab instance url> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

- What is the main theme of this PR?

|

||||

- Is the PR ready for merge?

|

||||

- What are the main changes in this PR?

|

||||

- Should this PR be split into smaller parts?

|

||||

- Can you compose a rhymed song about this PR?

|

||||

- For BitBucket:

|

||||

```

|

||||

docker run --rm -it -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=<pr_url> review

|

||||

```

|

||||

|

||||

For other git providers, update CONFIG.GIT_PROVIDER accordingly, and check the `pr_agent/settings/.secrets_template.toml` file for the expected names and values of the environment variables.

|

||||

|

||||

---

|

||||

|

||||

#### Method 2: Run as a GitHub Action

|

||||

|

||||

You can use our pre-built Github Action Docker image to run PR-Agent as a Github Action.

|

||||

|

||||

1. Add the following file to your repository under `.github/workflows/pr_agent.yml`:

|

||||

|

||||

```yaml

|

||||

on:

|

||||

pull_request:

|

||||

issue_comment:

|

||||

jobs:

|

||||

pr_agent_job:

|

||||

runs-on: ubuntu-latest

|

||||

name: Run pr agent on every pull request, respond to user comments

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: Codium-ai/pr-agent@main

|

||||

env:

|

||||

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

If you want to ensure you're running a specific version of the Docker image, consider using the image's digest:

|

||||

```bash

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent@sha256:71b5ee15df59c745d352d84752d01561ba64b6d51327f97d46152f0c58a5f678 --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

2. Add the following secret to your repository under `Settings > Secrets`:

|

||||

|

||||

Or you can run a [specific released version](./RELEASE_NOTES.md) of pr-agent, for example:

|

||||

```

|

||||

OPENAI_KEY: <your key>

|

||||

```

|

||||

|

||||

The GITHUB_TOKEN secret is automatically created by GitHub.

|

||||

|

||||

3. Merge this change to your main branch.

|

||||

When you open your next PR, you should see a comment from `github-actions` bot with a review of your PR, and instructions on how to use the rest of the tools.

|

||||

|

||||

4. You may configure PR-Agent by adding environment variables under the env section corresponding to any configurable property in the [configuration](./CONFIGURATION.md) file. Some examples:

|

||||

```yaml

|

||||

env:

|

||||

# ... previous environment values

|

||||

OPENAI.ORG: "<Your organization name under your OpenAI account>"

|

||||

PR_REVIEWER.REQUIRE_TESTS_REVIEW: "false" # Disable tests review

|

||||

PR_CODE_SUGGESTIONS.NUM_CODE_SUGGESTIONS: 6 # Increase number of code suggestions

|

||||

codiumai/pr-agent@v0.9

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

#### Method 3: Run from source

|

||||

### Run from source

|

||||

|

||||

1. Clone this repository:

|

||||

|

||||

@ -92,24 +89,102 @@ pip install -r requirements.txt

|

||||

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||

chmod 600 pr_agent/settings/.secrets.toml

|

||||

# Edit .secrets.toml file

|

||||

```

|

||||

|

||||

4. Run the appropriate Python scripts from the scripts folder:

|

||||

4. Add the pr_agent folder to your PYTHONPATH, then run the cli.py script:

|

||||

|

||||

```

|

||||

python pr_agent/cli.py --pr_url <pr_url> review

|

||||

python pr_agent/cli.py --pr_url <pr_url> ask <your question>

|

||||

python pr_agent/cli.py --pr_url <pr_url> describe

|

||||

python pr_agent/cli.py --pr_url <pr_url> improve

|

||||

export PYTHONPATH=[$PYTHONPATH:]<PATH to pr_agent folder>

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> review

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> ask <your question>

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> describe

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> improve

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> add_docs

|

||||

python3 -m pr_agent.cli --pr_url <pr_url> generate_labels

|

||||

python3 -m pr_agent.cli --issue_url <issue_url> similar_issue

|

||||

...

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

#### Method 4: Run as a polling server

|

||||

Request reviews by tagging your Github user on a PR

|

||||

### Run as a GitHub Action

|

||||

|

||||

You can use our pre-built GitHub Action Docker image to run PR-Agent as a GitHub Action.

|

||||

|

||||

1. Add the following file to your repository under `.github/workflows/pr_agent.yml`:

|

||||

|

||||

```yaml

|

||||

on:

|

||||

pull_request:

|

||||

issue_comment:

|

||||

jobs:

|

||||

pr_agent_job:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

issues: write

|

||||

pull-requests: write

|

||||

contents: write

|

||||

name: Run pr agent on every pull request, respond to user comments

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: Codium-ai/pr-agent@main

|

||||

env:

|

||||

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

```

|

||||

Note: if you want to pin your action to a specific release (v0.7 for example) for stability reasons, use:

|

||||

```yaml

|

||||

on:

|

||||

pull_request:

|

||||

issue_comment:

|

||||

|

||||

jobs:

|

||||

pr_agent_job:

|

||||

runs-on: ubuntu-latest

|

||||

permissions:

|

||||

issues: write

|

||||

pull-requests: write

|

||||

contents: write

|

||||

name: Run pr agent on every pull request, respond to user comments

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: Codium-ai/pr-agent@v0.7

|

||||

env:

|

||||

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

```

|

||||

2. Add the following secret to your repository under `Settings > Secrets and variables > Actions > New repository secret > Add secret`:

|

||||

|

||||

```

|

||||

Name = OPENAI_KEY

|

||||

Secret = <your key>

|

||||

```

|

||||

|

||||

The GITHUB_TOKEN secret is automatically created by GitHub.

|

||||

|

||||

3. Merge this change to your main branch.

|

||||

When you open your next PR, you should see a comment from `github-actions` bot with a review of your PR, and instructions on how to use the rest of the tools.

|

||||

|

||||

4. You may configure PR-Agent by adding environment variables under the env section corresponding to any configurable property in the [configuration](pr_agent/settings/configuration.toml) file. Some examples:

|

||||

```yaml

|

||||

env:

|

||||

# ... previous environment values

|

||||

OPENAI.ORG: "<Your organization name under your OpenAI account>"

|

||||

PR_REVIEWER.REQUIRE_TESTS_REVIEW: "false" # Disable tests review

|

||||

PR_CODE_SUGGESTIONS.NUM_CODE_SUGGESTIONS: 6 # Increase number of code suggestions

|

||||

```
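The naming convention in the examples above (`SECTION.KEY`, upper-cased) can be illustrated with a minimal sketch. This is not PR-Agent's actual settings loader; the function name `apply_env_overrides` and the plain-dict representation of the configuration are assumptions made only to show how a dotted variable name lines up with a section and key in the configuration file.

```python
def apply_env_overrides(settings, environ):
    """Return a copy of `settings` with SECTION.KEY entries from `environ` applied.

    `settings` is a dict of dicts mirroring the TOML sections (hypothetical layout).
    Values are kept as strings; a real loader may coerce types.
    """
    merged = {section: dict(values) for section, values in settings.items()}
    for name, value in environ.items():
        if "." not in name:
            continue  # not a SECTION.KEY style variable, ignore it
        section, key = name.lower().split(".", 1)
        merged.setdefault(section, {})[key] = value
    return merged


defaults = {"pr_reviewer": {"require_tests_review": True}}
env = {"PR_REVIEWER.REQUIRE_TESTS_REVIEW": "false", "PATH": "/usr/bin"}
overridden = apply_env_overrides(defaults, env)
print(overridden["pr_reviewer"]["require_tests_review"])
```

In this sketch, variables without a dot (such as `PATH`) are left alone, and the original defaults are not mutated.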

|

||||

|

||||

---

|

||||

|

||||

### Run as a polling server

|

||||

Request reviews by tagging your GitHub user on a PR

|

||||

|

||||

Follow [steps 1-3](#run-as-a-github-action) of the GitHub Action setup.

|

||||

|

||||

Follow steps 1-3 of method 2.

|

||||

Run the following command to start the server:

|

||||

|

||||

```

|

||||

@ -118,7 +193,7 @@ python pr_agent/servers/github_polling.py

|

||||

|

||||

---

|

||||

|

||||

#### Method 5: Run as a GitHub App

|

||||

### Run as a GitHub App

|

||||

This allows you to automate the review process on your private or public repositories.

|

||||

|

||||

1. Create a GitHub App from the [Github Developer Portal](https://docs.github.com/en/developers/apps/creating-a-github-app).

|

||||

@ -127,9 +202,11 @@ Allowing you to automate the review process on your private or public repositori

|

||||

- Pull requests: Read & write

|

||||

- Issue comment: Read & write

|

||||

- Metadata: Read-only

|

||||

- Contents: Read-only

|

||||

- Set the following events:

|

||||

- Issue comment

|

||||

- Pull request

|

||||

- Push (if you need to enable triggering on PR update)

|

||||

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

|

||||

@ -149,17 +226,36 @@ git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

5. Copy the secrets template file and fill in the following:

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||

# Edit .secrets.toml file

|

||||

```

|

||||

- Your OpenAI key.

|

||||

- Set deployment_type to 'app'

|

||||

- Copy your app's private key to the private_key field.

|

||||

- Copy your app's ID to the app_id field.

|

||||

- Copy your app's webhook secret to the webhook_secret field.

|

||||

- Set deployment_type to 'app' in [configuration.toml](./pr_agent/settings/configuration.toml)

|

||||

|

||||

> The .secrets.toml file is not copied to the Docker image by default, and is only used for local development.

|

||||

> If you want to use the .secrets.toml file in your Docker image, you can remove it from the .dockerignore file.

|

||||

> In most production environments, you would inject the secrets file as environment variables or as mounted volumes.

|

||||

> For example, in order to inject a secrets file as a volume in a Kubernetes environment you can update your pod spec to include the following,

|

||||

> assuming you have a secret named `pr-agent-settings` with a key named `.secrets.toml`:

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||

# Edit .secrets.toml file

|

||||

volumes:

|

||||

- name: settings-volume

|

||||

secret:

|

||||

secretName: pr-agent-settings

|

||||

# ...

|

||||

containers:

|

||||

# ...

|

||||

volumeMounts:

|

||||

- mountPath: /app/pr_agent/settings_prod

|

||||

name: settings-volume

|

||||

```

|

||||

|

||||

> Another option is to set the secrets as environment variables in your deployment environment, for example `OPENAI.KEY` and `GITHUB.USER_TOKEN`.

|

||||

|

||||

6. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||

|

||||

```

|

||||

@ -169,6 +265,7 @@ docker push codiumai/pr-agent:github_app # Push to your Docker repository

|

||||

|

||||

7. Host the app using a server, serverless function, or container environment. Alternatively, for development and

|

||||

debugging, you may use tools like smee.io to forward webhooks to your local machine.

|

||||

You can check [Deploy as a Lambda Function](#deploy-as-a-lambda-function)

|

||||

|

||||

8. Go back to your app's settings, and set the following:

|

||||

|

||||

@ -177,4 +274,189 @@ docker push codiumai/pr-agent:github_app # Push to your Docker repository

|

||||

|

||||

9. Install the app by navigating to the "Install App" tab and selecting your desired repositories.

|

||||

|

||||

> **Note:** When running PR-Agent from GitHub App, the default configuration file (configuration.toml) will be loaded.<br>

|

||||

> However, you can override the default tool parameters by uploading a local configuration file `.pr_agent.toml`<br>

|

||||

> For more information please check out the [USAGE GUIDE](./Usage.md#working-with-github-app)

|

||||

---

|

||||

|

||||

### Deploy as a Lambda Function

|

||||

|

||||

1. Follow steps 1-5 of [Method 5](#run-as-a-github-app).

|

||||

2. Build a docker image that can be used as a lambda function

|

||||

```shell

|

||||

docker buildx build --platform=linux/amd64 . -t codiumai/pr-agent:serverless -f docker/Dockerfile.lambda

|

||||

```

|

||||

3. Push image to ECR

|

||||

```shell

|

||||

docker tag codiumai/pr-agent:serverless <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||

docker push <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||

```

|

||||

4. Create a lambda function that uses the uploaded image. Set the lambda timeout to be at least 3m.

|

||||

5. Configure the lambda function to have a Function URL.

|

||||

6. In the environment variables of the Lambda function, specify `AZURE_DEVOPS_CACHE_DIR` to a writable location such as /tmp. (see [link](https://github.com/Codium-ai/pr-agent/pull/450#issuecomment-1840242269))

|

||||

7. Go back to steps 8-9 of [Method 5](#run-as-a-github-app) with the function url as your Webhook URL.

|

||||

The Webhook URL would look like `https://<LAMBDA_FUNCTION_URL>/api/v1/github_webhooks`

|

||||

|

||||

---

|

||||

|

||||

### AWS CodeCommit Setup

|

||||

|

||||

Not all features have been added to CodeCommit yet. As of right now, CodeCommit has been implemented to run the pr-agent CLI on the command line, using AWS credentials stored in environment variables. (More features will be added in the future.) The following is a set of instructions to have pr-agent do a review of your CodeCommit pull request from the command line:

|

||||

|

||||

1. Create an IAM user that you will use to read CodeCommit pull requests and post comments

|

||||

* Note: That user should have CLI access only, not Console access

|

||||

2. Add IAM permissions to that user, to allow access to CodeCommit (see IAM Role example below)

|

||||

3. Generate an Access Key for your IAM user

|

||||

4. Set the Access Key and Secret using environment variables (see Access Key example below)

|

||||

5. Set the `git_provider` value to `codecommit` in the `pr_agent/settings/configuration.toml` settings file

|

||||

6. Set the `PYTHONPATH` to include your `pr-agent` project directory

|

||||

* Option A: Add `PYTHONPATH="/PATH/TO/PROJECTS/pr-agent"` to your `.env` file

|

||||

* Option B: Set `PYTHONPATH` and run the CLI in one command, for example:

|

||||

* `PYTHONPATH="/PATH/TO/PROJECTS/pr-agent" python pr_agent/cli.py [--ARGS]`

|

||||

|

||||

##### AWS CodeCommit IAM Role Example

|

||||

|

||||

Example IAM permissions to that user to allow access to CodeCommit:

|

||||

|

||||

* Note: The following is a working example of IAM permissions that has read access to the repositories and write access to allow posting comments

|

||||

* Note: If you only want pr-agent to review your pull requests, you can tighten the IAM permissions further, however this IAM example will work, and allow the pr-agent to post comments to the PR

|

||||

* Note: You may want to replace the `"Resource": "*"` with your list of repos, to limit access to only those repos

|

||||

|

||||

```

|

||||

{

|

||||

"Version": "2012-10-17",

|

||||

"Statement": [

|

||||

{

|

||||

"Effect": "Allow",

|

||||

"Action": [

|

||||

"codecommit:BatchDescribe*",

|

||||

"codecommit:BatchGet*",

|

||||

"codecommit:Describe*",

|

||||

"codecommit:EvaluatePullRequestApprovalRules",

|

||||

"codecommit:Get*",

|

||||

"codecommit:List*",

|

||||

"codecommit:PostComment*",

|

||||

"codecommit:PutCommentReaction",

|

||||

"codecommit:UpdatePullRequestDescription",

|

||||

"codecommit:UpdatePullRequestTitle"

|

||||

],

|

||||

"Resource": "*"

|

||||

}

|

||||

]

|

||||

}

|

||||

```

|

||||

|

||||

##### AWS CodeCommit Access Key and Secret

|

||||

|

||||

Example setting the Access Key and Secret using environment variables

|

||||

|

||||

```sh

|

||||

export AWS_ACCESS_KEY_ID="XXXXXXXXXXXXXXXX"

|

||||

export AWS_SECRET_ACCESS_KEY="XXXXXXXXXXXXXXXX"

|

||||

export AWS_DEFAULT_REGION="us-east-1"

|

||||

```

|

||||

|

||||

##### AWS CodeCommit CLI Example

|

||||

|

||||

After you set up AWS CodeCommit using the instructions above, here is an example CLI run that tells pr-agent to **review** a given pull request.

|

||||

(Replace the PYTHONPATH and PR URL in the example with your own.)

|

||||

|

||||

```sh

|

||||

PYTHONPATH="/PATH/TO/PROJECTS/pr-agent" python pr_agent/cli.py \

|

||||

--pr_url https://us-east-1.console.aws.amazon.com/codesuite/codecommit/repositories/MY_REPO_NAME/pull-requests/321 \

|

||||

review

|

||||

```
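The `--pr_url` value above encodes the region, the repository name, and the pull request number. As a rough sketch of how such a URL can be taken apart (the helper name `parse_codecommit_pr_url` is hypothetical and is not necessarily how pr-agent parses it), assuming the console URL shape shown in the example:

```python
import re
from urllib.parse import urlparse


def parse_codecommit_pr_url(pr_url):
    """Split a CodeCommit console PR URL into (region, repo_name, pr_number)."""
    parsed = urlparse(pr_url)
    # The host looks like "<region>.console.aws.amazon.com"
    region = parsed.netloc.split(".")[0]
    match = re.search(r"/repositories/([^/]+)/pull-requests/(\d+)", parsed.path)
    if match is None:
        raise ValueError(f"Not a CodeCommit pull request URL: {pr_url}")
    return region, match.group(1), int(match.group(2))


example = (
    "https://us-east-1.console.aws.amazon.com/codesuite/codecommit/"
    "repositories/MY_REPO_NAME/pull-requests/321"
)
print(parse_codecommit_pr_url(example))
```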

|

||||

|

||||

---

|

||||

|

||||

### Run a GitLab webhook server

|

||||

|

||||

1. From the GitLab workspace or group, create an access token. Enable the "api" scope only.

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

|

||||

```

|

||||

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

```

|

||||

3. Follow the instructions to build the Docker image, setup a secrets file and deploy on your own server from [Method 5](#run-as-a-github-app) steps 4-7.

|

||||

4. In the secrets file, fill in the following:

|

||||

- Your OpenAI key.

|

||||

- In the [gitlab] section, fill in personal_access_token and shared_secret. The access token can be a personal access token, or a group or project access token.

|

||||

- Set deployment_type to 'gitlab' in [configuration.toml](./pr_agent/settings/configuration.toml)

|

||||

5. Create a webhook in GitLab. Set the URL to the URL of your app's server. Set the secret token to the generated secret from step 2.

|

||||

In the "Trigger" section, check the ‘comments’ and ‘merge request events’ boxes.

|

||||

6. Test your installation by opening a merge request or commenting on a merge request using one of CodiumAI's commands.
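GitLab sends the webhook's secret token in the `X-Gitlab-Token` request header. The following is a minimal sketch of how a server could compare it with the secret generated in step 2; the function name `is_valid_gitlab_token` and the plain-dict headers are assumptions for illustration, not the pr-agent implementation.

```python
import hmac


def is_valid_gitlab_token(headers, expected_secret):
    """Check the X-Gitlab-Token header against the configured shared secret."""
    received = headers.get("X-Gitlab-Token", "")
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(received.encode(), expected_secret.encode())


print(is_valid_gitlab_token({"X-Gitlab-Token": "abc123"}, "abc123"))
```

A request with a missing or different token should be rejected before any command is processed.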

|

||||

|

||||

|

||||

|

||||

### Run as a Bitbucket Pipeline

|

||||

|

||||

|

||||

You can use the Bitbucket Pipeline system to run PR-Agent whenever a pull request is opened or updated.

|

||||

|

||||

1. Add the following file to your repository as `bitbucket-pipelines.yml`:

|

||||

|

||||

```yaml

|

||||

pipelines:

|

||||

pull-requests:

|

||||

'**':

|

||||

- step:

|

||||

name: PR Agent Review

|

||||

image: python:3.10

|

||||

services:

|

||||

- docker

|

||||

script:

|

||||

- docker run -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=https://bitbucket.org/$BITBUCKET_WORKSPACE/$BITBUCKET_REPO_SLUG/pull-requests/$BITBUCKET_PR_ID review

|

||||

```

|

||||

|

||||

2. Add the following secure variables to your repository under Repository settings > Pipelines > Repository variables.

|

||||

OPENAI_API_KEY: <your key>

|

||||

BITBUCKET_BEARER_TOKEN: <your token>

|

||||

|

||||

You can get a Bitbucket token for your repository by following Repository Settings -> Security -> Access Tokens.

|

||||

|

||||

Note that comments on a PR are not supported in Bitbucket Pipeline.

|

||||

|

||||

|

||||

### Run using CodiumAI-hosted Bitbucket app

|

||||

|

||||

Please contact <support@codium.ai> or visit [CodiumAI pricing page](https://www.codium.ai/pricing/) if you're interested in a hosted BitBucket app solution that provides full functionality including PR reviews and comment handling. It's based on the [bitbucket_app.py](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/git_providers/bitbucket_provider.py) implementation.

|

||||

|

||||

|

||||

### Bitbucket Server and Data Center

|

||||

|

||||

Log in to your on-prem instance of Bitbucket with your service account username and password.

|

||||

Navigate to `Manage account`, `HTTP Access tokens`, `Create Token`.

|

||||

Generate the token and add it to `.secrets.toml` under the `bitbucket_server` section:

|

||||

|

||||

```toml

|

||||

[bitbucket_server]

|

||||

bearer_token = "<your key>"

|

||||

```

|

||||

|

||||

#### Run it as CLI

|

||||

|

||||

Modify `configuration.toml`:

|

||||

|

||||

```toml

|

||||

git_provider="bitbucket_server"

|

||||

```

|

||||

|

||||

and pass the Pull request URL:

|

||||

```shell

|

||||

python cli.py --pr_url https://git.onpreminstanceofbitbucket.com/projects/PROJECT/repos/REPO/pull-requests/1 review

|

||||

```

|

||||

|

||||

#### Run it as service

|

||||

|

||||

To run pr-agent as a webhook, build the Docker image:

|

||||

```

|

||||

docker build . -t codiumai/pr-agent:bitbucket_server_webhook --target bitbucket_server_webhook -f docker/Dockerfile

|

||||

docker push codiumai/pr-agent:bitbucket_server_webhook # Push to your Docker repository

|

||||

```

|

||||

|

||||

Navigate to `Projects` or `Repositories`, `Settings`, `Webhooks`, `Create Webhook`.

|

||||

Fill in the name and URL, set Authentication to None, and select the Pull Request Opened checkbox to receive that event as a webhook.

|

||||

|

||||

The URL should end with `/webhook`, for example: https://domain.com/webhook

|

||||

|

||||

=======

|

||||

|

||||

@ -1,4 +1,4 @@

|

||||

# Git Patch Logic

|

||||

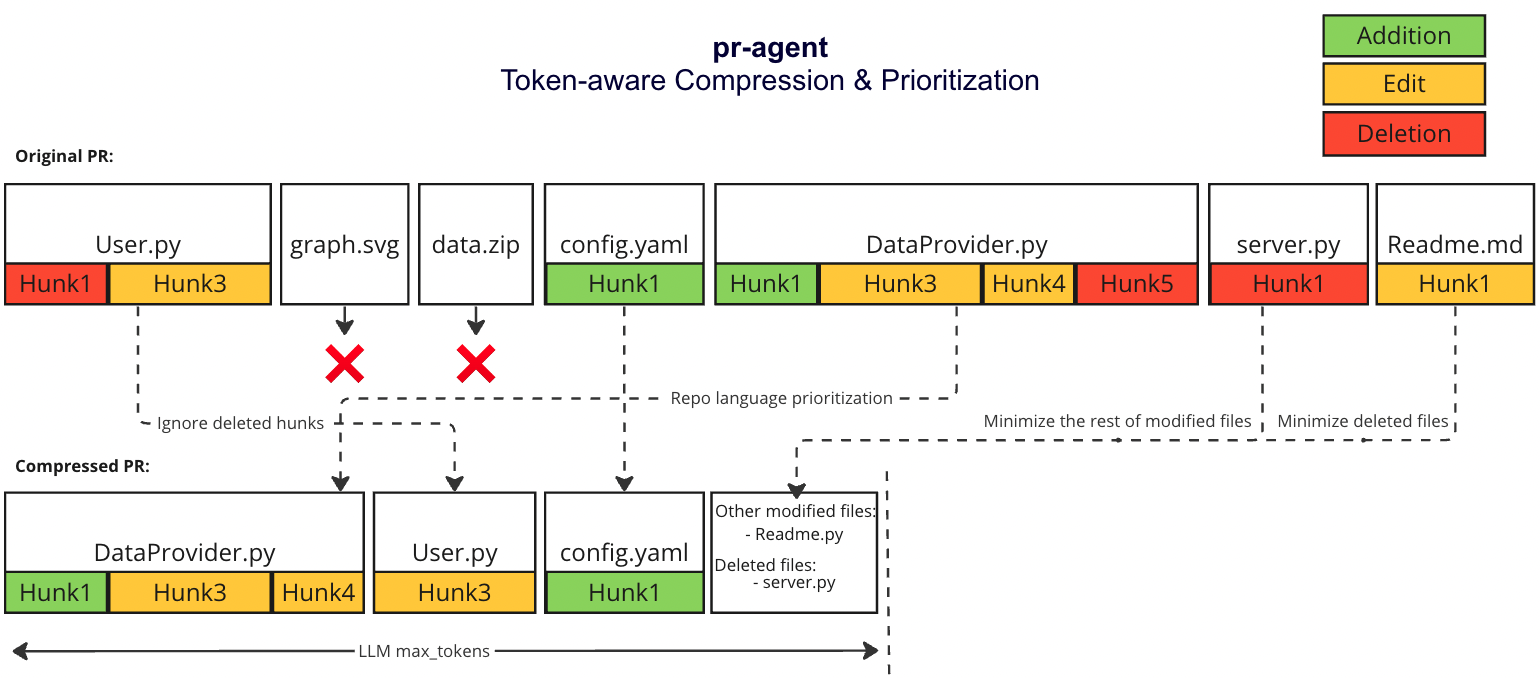

# PR Compression Strategy

|

||||

There are two scenarios:

|

||||

1. The PR is small enough to fit in a single prompt (including system and user prompt)

|

||||

2. The PR is too large to fit in a single prompt (including system and user prompt)

|

||||

@ -16,7 +16,7 @@ We prioritize the languages of the repo based on the following criteria:

|

||||

## Small PR

|

||||

In this case, we can fit the entire PR in a single prompt:

|

||||

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

||||

2. We Expand the surrounding context of each patch to 6 lines above and below the patch

|

||||

2. We expand the surrounding context of each patch to 3 lines above and below the patch

|

||||

## Large PR

|

||||

|

||||

### Motivation

|

||||

@ -25,13 +25,13 @@ We want to be able to pack as much information as possible in a single LMM promp

|

||||

|

||||

|

||||

|

||||

#### PR compression strategy

|

||||

#### Compression strategy

|

||||

We prioritize additions over deletions:

|

||||

- Combine all deleted files into a single list (`deleted files`)

|

||||

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch
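The second rule above can be sketched as follows. This is an illustration only, under the assumption that a file patch is a unified-diff string made of hunks that each start with an `@@` header (no file header lines); the function names are hypothetical and not taken from the pr-agent code.

```python
def split_hunks(patch):
    """Split a unified-diff patch into hunks, each beginning with an '@@' header."""
    hunks, current = [], []
    for line in patch.splitlines():
        if line.startswith("@@") and current:
            hunks.append(current)
            current = []
        current.append(line)
    if current:
        hunks.append(current)
    return hunks


def is_deletion_only(body_lines):
    """True if the hunk body removes lines but adds none."""
    has_deletion = any(line.startswith("-") for line in body_lines)
    has_addition = any(line.startswith("+") for line in body_lines)
    return has_deletion and not has_addition


def drop_deletion_only_hunks(patch):
    """Return the patch with deletion-only hunks removed."""
    kept = [h for h in split_hunks(patch) if not is_deletion_only(h[1:])]
    return "\n".join("\n".join(h) for h in kept)


patch = "@@ -1,2 +1,1 @@\n a\n-b\n@@ -10,1 +9,2 @@\n c\n+d"
print(drop_deletion_only_hunks(patch))
```

Hunks that mix additions and deletions are kept whole in this sketch, since only hunks of type deletion-only are removed.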

|

||||

#### Adaptive and token-aware file patch fitting

|

||||

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

||||

1. Withing each language we sort the files by the number of tokens in the file (in descending order):

|

||||

1. Within each language we sort the files by the number of tokens in the file (in descending order):

|

||||

* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

||||

2. Iterate through the patches in the order described above

|

||||

2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

||||

@ -39,4 +39,4 @@ We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches af

|

||||

4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.
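The fitting strategy above can be sketched roughly as below. Several things here are assumptions rather than the project's code: `count_tokens` is a whitespace-based stand-in for tiktoken, the names `fit_patches`, `max_tokens` and `buffer_tokens` are hypothetical, and a patch that does not fit is skipped while smaller ones are still tried.

```python
def count_tokens(text):
    # Stand-in tokenizer (assumption): the source uses tiktoken instead.
    return len(text.split())


def fit_patches(files_by_language, deleted_files, max_tokens, buffer_tokens):
    """Pick patches that fit in the prompt.

    files_by_language: list of lists of (filename, patch), languages already
    in priority order. Returns (selected, skipped, listed_deleted) file names.
    """
    selected, skipped, used = [], [], 0
    soft_limit = max_tokens - buffer_tokens  # stop adding patches before the hard limit
    for language_files in files_by_language:
        # Within each language, largest patches first
        ordered = sorted(language_files, key=lambda f: count_tokens(f[1]), reverse=True)
        for name, patch in ordered:
            cost = count_tokens(patch)
            if used + cost > soft_limit:
                skipped.append(name)
                continue
            selected.append(name)
            used += cost
    listed_deleted = []
    for name in deleted_files:
        cost = count_tokens(name)
        if used + cost > max_tokens:  # hard stop
            break
        listed_deleted.append(name)
        used += cost
    return selected, skipped, listed_deleted


files = [[("file.py", "a b"), ("file2.py", "a b c d")], [("readme.md", "x")]]
print(fit_patches(files, ["old.py"], max_tokens=8, buffer_tokens=2))
```

The buffer leaves room for the deleted-files list and other trailing prompt content, which is why the patches stop at `max_tokens - buffer_tokens` while the deleted files may run up to `max_tokens`.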

|

||||

|

||||

### Example

|

||||

|

||||

<kbd><img src=https://codium.ai/images/git_patch_logic.png width="768"></kbd>

|

||||

|

||||

254

README.md

@ -2,160 +2,242 @@

|

||||

|

||||

<div align="center">

|

||||

|

||||

<img src="./pics/logo-dark.png#gh-dark-mode-only" width="250"/>

|

||||

<img src="./pics/logo-light.png#gh-light-mode-only" width="250"/>

|

||||

|

||||

<picture>

|

||||

<source media="(prefers-color-scheme: dark)" srcset="https://codium.ai/images/pr_agent/logo-dark.png" width="330">

|

||||

<source media="(prefers-color-scheme: light)" srcset="https://codium.ai/images/pr_agent/logo-light.png" width="330">

|

||||

<img alt="logo">

|

||||

</picture>

|

||||

<br/>

|

||||

Making pull requests less painful with an AI agent

|

||||

</div>

|

||||

|

||||

[](https://github.com/Codium-ai/pr-agent/blob/main/LICENSE)

|

||||

[](https://discord.com/channels/1057273017547378788/1126104260430528613)

|

||||

[](https://twitter.com/codiumai)

|

||||

<a href="https://github.com/Codium-ai/pr-agent/commits/main">

|

||||

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

|

||||

</a>

|

||||

</div>

|

||||

<div style="text-align:left;">

|

||||

|

||||

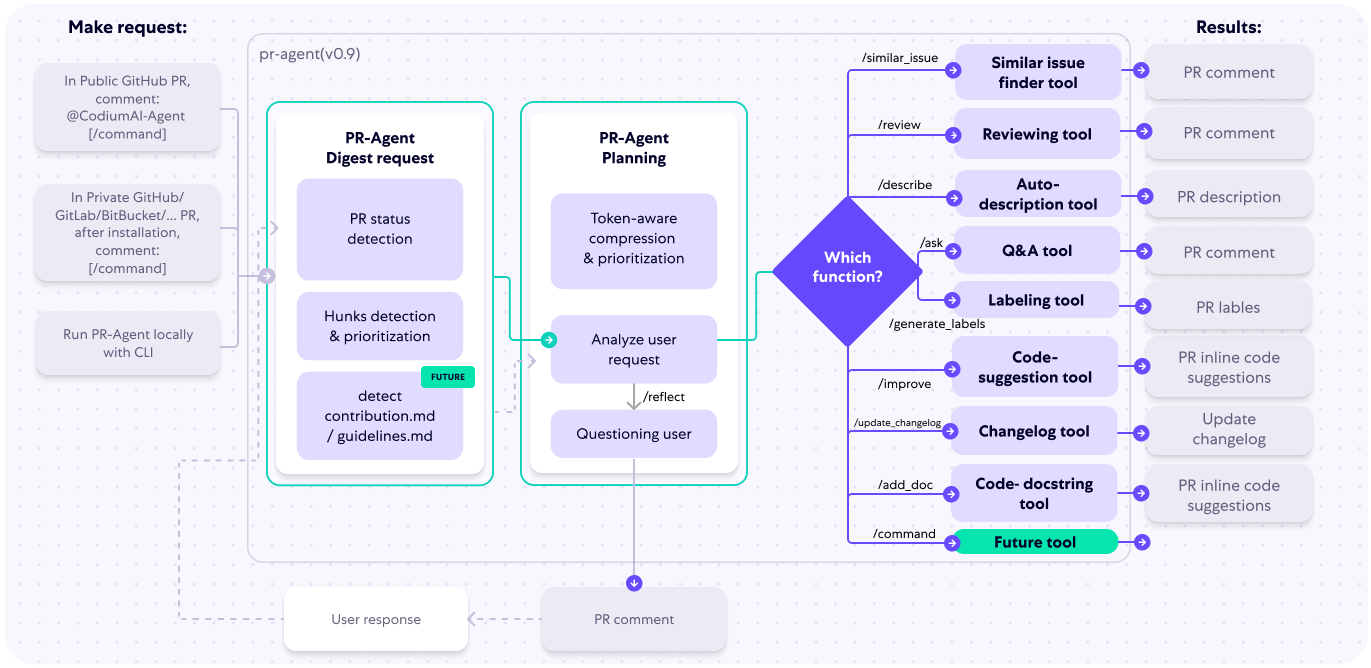

CodiumAI `PR-Agent` is an open-source tool aiming to help developers review PRs faster and more efficiently. It automatically analyzes the PR and can provide several types of feedback:

|

||||

CodiumAI `PR-Agent` is an open-source tool for efficient pull request reviewing and handling. It automatically analyzes the pull request and can provide several types of commands:

|

||||

|

||||

**Auto-Description**: Automatically generating PR description - name, type, summary, and code walkthrough.

|

||||

‣ **Auto Description ([`/describe`](./docs/DESCRIBE.md))**: Automatically generating PR description - title, type, summary, code walkthrough and labels.

|

||||

\

|

||||

**PR Review**: Feedback about the PR main theme, type, relevant tests, security issues, focused PR, and various suggestions for the PR content.

|

||||

‣ **Auto Review ([`/review`](./docs/REVIEW.md))**: Adjustable feedback about the PR main theme, type, relevant tests, security issues, score, and various suggestions for the PR content.

|

||||

\

|

||||

**Question Answering**: Answering free-text questions about the PR.

|

||||

‣ **Question Answering ([`/ask ...`](./docs/ASK.md))**: Answering free-text questions about the PR.

|

||||

\

|

||||

**Code Suggestion**: Committable code suggestions for improving the PR.

|

||||

‣ **Code Suggestions ([`/improve`](./docs/IMPROVE.md))**: Committable code suggestions for improving the PR.

|

||||

\

|

||||

‣ **Update Changelog ([`/update_changelog`](./docs/UPDATE_CHANGELOG.md))**: Automatically updating the CHANGELOG.md file with the PR changes.

|

||||

\

|

||||

‣ **Find Similar Issue ([`/similar_issue`](./docs/SIMILAR_ISSUE.md))**: Automatically retrieves and presents similar issues.

|

||||

\

|

||||

‣ **Add Documentation ([`/add_docs`](./docs/ADD_DOCUMENTATION.md))**: Automatically adds documentation to un-documented functions/classes in the PR.

|

||||

\

|

||||

‣ **Generate Custom Labels ([`/generate_labels`](./docs/GENERATE_CUSTOM_LABELS.md))**: Automatically suggests custom labels based on the PR code changes.

|

||||

|

||||

<h3>Example results:</h2>

|

||||

See the [Installation Guide](./INSTALL.md) for instructions on installing and running the tool on different git platforms.

|

||||

|

||||

See the [Usage Guide](./Usage.md) for running the PR-Agent commands via different interfaces, including _CLI_, _online usage_, or by _automatically triggering_ them when a new PR is opened.

|

||||

|

||||

See the [Tools Guide](./docs/TOOLS_GUIDE.md) for detailed description of the different tools (tools are run via the commands).

|

||||

|

||||

<h3>Example results:</h3>

|

||||

</div>

|

||||

<h4>Describe:</h4>

|

||||