Mirror of https://github.com/qodo-ai/pr-agent.git, synced 2025-07-05 21:30:40 +08:00

Compare commits

81 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | c3f8ef939c | | |
| | 34cc434459 | | |
| | a3d52f9cc7 | | |
| | f56728fbca | | |
| | 19ddf1b2e4 | | |
| | 23ce79589c | | |
| | 8cd82b5dbf | | |
| | dba6846a04 | | |
| | 317eb65cc2 | | |
| | 9817602ab5 | | |
| | 8a7b37ab4c | | |
| | 3b071ccb4e | | |
| | 822a253eb5 | | |
| | aeb1bd8dbc | | |
| | df8290a290 | | |
| | 9e20373cb0 | | |
| | 6dc38e5bca | | |
| | f7efa2c7c7 | | |
| | d77d2f86da | | |
| | 2276caba39 | | |
| | 12d3d6cc0b | | |
| | 630712e24c | | |
| | e1a112d26e | | |
| | 1b46d64d71 | | |
| | 38eda2f7b6 | | |
| | 53b9c8ec97 | | |
| | 7e8e95b748 | | |
| | 7f51661e64 | | |
| | 70023d2c4f | | |
| | c5d34f5ad5 | | |
| | 8d3e51c205 | | |
| | b213753420 | | |
| | 2eb8019325 | | |
| | 9115cb7d31 | | |
| | 62adad8f12 | | |
| | 56f7ae0b46 | | |
| | 446c1fb49a | | |
| | 7d50625bd6 | | |
| | bd9ddc8b86 | | |
| | dd4fe4dcb4 | | |
| | 1c174f263f | | |
| | d860e17b3b | | |
| | f83970bc6b | | |
| | 87a245bf9c | | |
| | 2d1afc634e | | |
| | e4f477dae0 | | |
| | 8e210f8ea0 | | |
| | 9c87056263 | | |
| | 3251f19a19 | | |
| | 299a2c89d1 | | |
| | c7241ca093 | | |
| | 1a00e61239 | | |
| | f166e7f497 | | |
| | 8dc08e4596 | | |
| | ead2c9273f | | |
| | 5062543325 | | |
| | 35e865bfb6 | | |
| | abb576c84f | | |
| | 2d61ff7b88 | | |
| | e75b863f3b | | |
| | 849cb2ea5a | | |
| | ab80677e3a | | |
| | bd7017d630 | | |
| | 6e2bc01294 | | |
| | 22c16f586b | | |
| | a42e3331d8 | | |
| | e14834c84e | | |
| | 915a1c563b | | |
| | bc99cf83dd | | |
| | d00cbd4da7 | | |
| | 721ff18a63 | | |
| | 1a003fe4d3 | | |
| | 68f78e1a30 | | |
| | 7759d1d3fc | | |
| | 738f9856a4 | | |
| | fbce8cd2f5 | | |
| | ea63c8e63a | | |
| | d8fea6afc4 | | |
| | ff16e1cd26 | | |
| | 9b5ae1a322 | | |
| | 8b8464163d | | |

52

README.md

@ -31,12 +31,12 @@ PR-Agent aims to help efficiently review and handle pull requests, by providing

|

|||||||

|

|

||||||

- [Getting Started](#getting-started)

|

- [Getting Started](#getting-started)

|

||||||

- [News and Updates](#news-and-updates)

|

- [News and Updates](#news-and-updates)

|

||||||

- [Overview](#overview)

|

- [Why Use PR-Agent?](#why-use-pr-agent)

|

||||||

|

- [Features](#features)

|

||||||

- [See It in Action](#see-it-in-action)

|

- [See It in Action](#see-it-in-action)

|

||||||

- [Try It Now](#try-it-now)

|

- [Try It Now](#try-it-now)

|

||||||

- [Qodo Merge 💎](#qodo-merge-)

|

- [Qodo Merge 💎](#qodo-merge-)

|

||||||

- [How It Works](#how-it-works)

|

- [How It Works](#how-it-works)

|

||||||

- [Why Use PR-Agent?](#why-use-pr-agent)

|

|

||||||

- [Data Privacy](#data-privacy)

|

- [Data Privacy](#data-privacy)

|

||||||

- [Contributing](#contributing)

|

- [Contributing](#contributing)

|

||||||

- [Links](#links)

|

- [Links](#links)

|

||||||

@ -57,24 +57,34 @@ Add automated PR reviews to your repository with a simple workflow file using [G

|

|||||||

### CLI Usage

|

### CLI Usage

|

||||||

Run PR-Agent locally on your repository via command line: [Local CLI setup guide](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli)

|

Run PR-Agent locally on your repository via command line: [Local CLI setup guide](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli)

|

||||||

|

|

||||||

### Discover Qodo Merge 💎

|

### Qodo Merge as post-commit in your local IDE

|

||||||

|

See [here](https://github.com/qodo-ai/agents/tree/main/agents/qodo-merge-post-commit)

|

||||||

|

|

||||||

|

### Discover Qodo Merge 💎

|

||||||

Zero-setup hosted solution with advanced features and priority support

|

Zero-setup hosted solution with advanced features and priority support

|

||||||

|

- **[FREE for Open Source](https://github.com/marketplace/qodo-merge-pro-for-open-source)**: Full features, zero cost for public repos

|

||||||

- [Intro and Installation guide](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/)

|

- [Intro and Installation guide](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/)

|

||||||

- [Plans & Pricing](https://www.qodo.ai/pricing/)

|

- [Plans & Pricing](https://www.qodo.ai/pricing/)

|

||||||

|

|

||||||

|

### Qodo Merge as a Post-commit in Your Local IDE

|

||||||

|

You can receive automatic feedback from Qodo Merge in your local IDE after each [commit](https://github.com/qodo-ai/agents/tree/main/agents/qodo-merge-post-commit).

|

||||||

|

|

||||||

|

|

||||||

## News and Updates

|

## News and Updates

|

||||||

|

|

||||||

|

## Jul 1, 2025

|

||||||

|

You can now receive automatic feedback from Qodo Merge in your local IDE after each commit. Read more about it [here](https://github.com/qodo-ai/agents/tree/main/agents/qodo-merge-post-commit).

|

||||||

|

|

||||||

|

## Jun 21, 2025

|

||||||

|

|

||||||

|

v0.30 was [released](https://github.com/qodo-ai/pr-agent/releases)

|

||||||

|

|

||||||

|

|

||||||

## Jun 3, 2025

|

## Jun 3, 2025

|

||||||

|

|

||||||

Qodo Merge now offers a simplified free tier 💎.

|

Qodo Merge now offers a simplified free tier 💎.

|

||||||

Organizations can use Qodo Merge at no cost, with a [monthly limit](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users) of 75 PR reviews per organization.

|

Organizations can use Qodo Merge at no cost, with a [monthly limit](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users) of 75 PR reviews per organization.

|

||||||

|

|

||||||

## May 17, 2025

|

|

||||||

|

|

||||||

- v0.29 was [released](https://github.com/qodo-ai/pr-agent/releases)

|

|

||||||

- `Qodo Merge Pull Request Benchmark` was [released](https://qodo-merge-docs.qodo.ai/pr_benchmark/). This benchmark evaluates and compares the performance of LLMs in analyzing pull request code.

|

|

||||||

- `Recent Updates and Future Roadmap` page was added to the [Qodo Merge Docs](https://qodo-merge-docs.qodo.ai/recent_updates/)

|

|

||||||

|

|

||||||

## Apr 30, 2025

|

## Apr 30, 2025

|

||||||

|

|

||||||

@ -92,11 +102,22 @@ New tool for Qodo Merge 💎 - `/scan_repo_discussions`.

|

|||||||

|

|

||||||

Read more about it [here](https://qodo-merge-docs.qodo.ai/tools/scan_repo_discussions/).

|

Read more about it [here](https://qodo-merge-docs.qodo.ai/tools/scan_repo_discussions/).

|

||||||

|

|

||||||

## Overview

|

## Why Use PR-Agent?

|

||||||

|

|

||||||

|

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

|

||||||

|

|

||||||

|

Here are some advantages of PR-Agent:

|

||||||

|

|

||||||

|

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single LLM call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

||||||

|

- Our [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) is a core ability that enables us to effectively tackle both short and long PRs.

|

||||||

|

- Our JSON prompting strategy enables us to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.

|

||||||

|

- We support **multiple git providers** (GitHub, GitLab, BitBucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT, Claude, Deepseek, ...)

|

||||||

|

|

||||||

|

## Features

|

||||||

|

|

||||||

<div style="text-align:left;">

|

<div style="text-align:left;">

|

||||||

|

|

||||||

Supported commands per platform:

|

PR-Agent and Qodo Merge offer comprehensive pull request functionalities integrated with various git providers:

|

||||||

|

|

||||||

| | | GitHub | GitLab | Bitbucket | Azure DevOps | Gitea |

|

| | | GitHub | GitLab | Bitbucket | Azure DevOps | Gitea |

|

||||||

|---------------------------------------------------------|---------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|:-----:|

|

|---------------------------------------------------------|---------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|:-----:|

|

||||||

@ -218,17 +239,6 @@ The following diagram illustrates PR-Agent tools and their flow:

|

|||||||

|

|

||||||

Check out the [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) page for more details on how we convert a code diff to a manageable LLM prompt

|

Check out the [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) page for more details on how we convert a code diff to a manageable LLM prompt

|

||||||

|

|

||||||

## Why Use PR-Agent?

|

|

||||||

|

|

||||||

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

|

|

||||||

|

|

||||||

Here are some advantages of PR-Agent:

|

|

||||||

|

|

||||||

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single LLM call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

|

||||||

- Our [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) is a core ability that enables us to effectively tackle both short and long PRs.

|

|

||||||

- Our JSON prompting strategy enables us to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.

|

|

||||||

- We support **multiple git providers** (GitHub, GitLab, BitBucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT, Claude, Deepseek, ...)

|

|

||||||

|

|

||||||

## Data Privacy

|

## Data Privacy

|

||||||

|

|

||||||

### Self-hosted PR-Agent

|

### Self-hosted PR-Agent

|

||||||

|

|||||||

@ -202,7 +202,23 @@ h1 {

|

|||||||

|

|

||||||

<script>

|

<script>

|

||||||

window.addEventListener('load', function() {

|

window.addEventListener('load', function() {

|

||||||

function displayResults(responseText) {

|

function extractText(responseText) {

|

||||||

|

try {

|

||||||

|

console.log('responseText: ', responseText);

|

||||||

|

const results = JSON.parse(responseText);

|

||||||

|

const msg = results.message;

|

||||||

|

|

||||||

|

if (!msg || msg.trim() === '') {

|

||||||

|

return "No results found";

|

||||||

|

}

|

||||||

|

return msg;

|

||||||

|

} catch (error) {

|

||||||

|

console.error('Error parsing results:', error);

|

||||||

|

throw new Error("Failed parsing response message");

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

function displayResults(msg) {

|

||||||

const resultsContainer = document.getElementById('results');

|

const resultsContainer = document.getElementById('results');

|

||||||

const spinner = document.getElementById('spinner');

|

const spinner = document.getElementById('spinner');

|

||||||

const searchContainer = document.querySelector('.search-container');

|

const searchContainer = document.querySelector('.search-container');

|

||||||

@ -214,8 +230,6 @@ window.addEventListener('load', function() {

|

|||||||

searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

|

searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

|

||||||

|

|

||||||

try {

|

try {

|

||||||

const results = JSON.parse(responseText);

|

|

||||||

|

|

||||||

marked.setOptions({

|

marked.setOptions({

|

||||||

breaks: true,

|

breaks: true,

|

||||||

gfm: true,

|

gfm: true,

|

||||||

@ -223,7 +237,7 @@ window.addEventListener('load', function() {

|

|||||||

sanitize: false

|

sanitize: false

|

||||||

});

|

});

|

||||||

|

|

||||||

const htmlContent = marked.parse(results.message);

|

const htmlContent = marked.parse(msg);

|

||||||

|

|

||||||

resultsContainer.className = 'markdown-content';

|

resultsContainer.className = 'markdown-content';

|

||||||

resultsContainer.innerHTML = htmlContent;

|

resultsContainer.innerHTML = htmlContent;

|

||||||

@ -242,7 +256,7 @@ window.addEventListener('load', function() {

|

|||||||

}, 100);

|

}, 100);

|

||||||

} catch (error) {

|

} catch (error) {

|

||||||

console.error('Error parsing results:', error);

|

console.error('Error parsing results:', error);

|

||||||

resultsContainer.innerHTML = '<div class="error-message">Error processing results</div>';

|

resultsContainer.innerHTML = '<div class="error-message">Cannot process results</div>';

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

@ -275,24 +289,25 @@ window.addEventListener('load', function() {

|

|||||||

body: JSON.stringify(data)

|

body: JSON.stringify(data)

|

||||||

};

|

};

|

||||||

|

|

||||||

// const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

//const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

||||||

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

||||||

|

|

||||||

const response = await fetch(API_ENDPOINT, options);

|

const response = await fetch(API_ENDPOINT, options);

|

||||||

|

const responseText = await response.text();

|

||||||

|

const msg = extractText(responseText);

|

||||||

|

|

||||||

if (!response.ok) {

|

if (!response.ok) {

|

||||||

throw new Error(`HTTP error! status: ${response.status}`);

|

throw new Error(`An error (${response.status}) occurred during search: "${msg}"`);

|

||||||

}

|

}

|

||||||

|

|

||||||

const responseText = await response.text();

|

displayResults(msg);

|

||||||

displayResults(responseText);

|

|

||||||

} catch (error) {

|

} catch (error) {

|

||||||

spinner.style.display = 'none';

|

spinner.style.display = 'none';

|

||||||

resultsContainer.innerHTML = `

|

const errorDiv = document.createElement('div');

|

||||||

<div class="error-message">

|

errorDiv.className = 'error-message';

|

||||||

An error occurred while searching. Please try again later.

|

errorDiv.textContent = `${error}`;

|

||||||

</div>

|

resultsContainer.value = "";

|

||||||

`;

|

resultsContainer.appendChild(errorDiv);

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

|||||||
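Read on its own, the new side of the hunks above separates parsing the response body from rendering it. The following is a minimal sketch of that parsing step, assuming the new-side lines are complete as shown. `extractText` follows the diff (console logging omitted so it runs outside a browser), while `interpretResponse` is a hypothetical helper, not part of the commit, that only reproduces the order in which the new handler parses the body and then checks the status.

```javascript
// Mirrors the new-side extractText from the diff, minus console logging.
function extractText(responseText) {
  try {
    const results = JSON.parse(responseText);
    const msg = results.message;
    // A missing or whitespace-only message is reported as "no results".
    if (!msg || msg.trim() === '') {
      return "No results found";
    }
    return msg;
  } catch (error) {
    // Any parsing failure is surfaced with the same message the diff uses.
    throw new Error("Failed parsing response message");
  }
}

// Hypothetical helper (not in the diff): applies the same ordering as the
// new-side search handler, where the body is parsed before the status check.
function interpretResponse(ok, status, responseText) {
  const msg = extractText(responseText);
  if (!ok) {
    throw new Error(`An error (${status}) occurred during search: "${msg}"`);
  }
  return msg;
}
```

One consequence of this ordering, at least in the sketch: an error response whose body is not JSON (for example an HTML error page) raises the parsing error before the status code is ever examined.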

@ -1,6 +1,6 @@

|

|||||||

# Incremental Update 💎

|

# Incremental Update 💎

|

||||||

|

|

||||||

`Supported Git Platforms: GitHub`

|

`Supported Git Platforms: GitHub, GitLab (Both cloud & server. For server: Version 17 and above)`

|

||||||

|

|

||||||

## Overview

|

## Overview

|

||||||

The Incremental Update feature helps users focus on feedback for their newest changes, making large PRs more manageable.

|

The Incremental Update feature helps users focus on feedback for their newest changes, making large PRs more manageable.

|

||||||

|

|||||||

@ -5,6 +5,7 @@ Qodo Merge utilizes a variety of core abilities to provide a comprehensive and e

|

|||||||

- [Auto approval](https://qodo-merge-docs.qodo.ai/core-abilities/auto_approval/)

|

- [Auto approval](https://qodo-merge-docs.qodo.ai/core-abilities/auto_approval/)

|

||||||

- [Auto best practices](https://qodo-merge-docs.qodo.ai/core-abilities/auto_best_practices/)

|

- [Auto best practices](https://qodo-merge-docs.qodo.ai/core-abilities/auto_best_practices/)

|

||||||

- [Chat on code suggestions](https://qodo-merge-docs.qodo.ai/core-abilities/chat_on_code_suggestions/)

|

- [Chat on code suggestions](https://qodo-merge-docs.qodo.ai/core-abilities/chat_on_code_suggestions/)

|

||||||

|

- [Chrome extension](https://qodo-merge-docs.qodo.ai/chrome-extension/)

|

||||||

- [Code validation](https://qodo-merge-docs.qodo.ai/core-abilities/code_validation/)

|

- [Code validation](https://qodo-merge-docs.qodo.ai/core-abilities/code_validation/)

|

||||||

- [Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/)

|

- [Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/)

|

||||||

- [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)

|

- [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)

|

||||||

|

|||||||

@ -24,7 +24,7 @@ To search the documentation site using natural language:

|

|||||||

|

|

||||||

## Features

|

## Features

|

||||||

|

|

||||||

PR-Agent and Qodo Merge offers extensive pull request functionalities across various git providers:

|

PR-Agent and Qodo Merge offer comprehensive pull request functionalities integrated with various git providers:

|

||||||

|

|

||||||

| | | GitHub | GitLab | Bitbucket | Azure DevOps | Gitea |

|

| | | GitHub | GitLab | Bitbucket | Azure DevOps | Gitea |

|

||||||

| ----- |---------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|:-----:|

|

| ----- |---------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|:-----:|

|

||||||

|

|||||||

@ -3,7 +3,8 @@

|

|||||||

[Qodo Merge](https://www.codium.ai/pricing/){:target="_blank"} is a hosted version of the open-source [PR-Agent](https://github.com/Codium-ai/pr-agent){:target="_blank"}.

|

[Qodo Merge](https://www.codium.ai/pricing/){:target="_blank"} is a hosted version of the open-source [PR-Agent](https://github.com/Codium-ai/pr-agent){:target="_blank"}.

|

||||||

It is designed for companies and teams that require additional features and capabilities.

|

It is designed for companies and teams that require additional features and capabilities.

|

||||||

|

|

||||||

Free users receive a monthly quota of 75 PR reviews per git organization, while unlimited usage requires a paid subscription. See [details](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users).

|

Free users receive a quota of 75 monthly PR feedbacks per git organization. Unlimited usage requires a paid subscription. See [details](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users).

|

||||||

|

|

||||||

|

|

||||||

Qodo Merge provides the following benefits:

|

Qodo Merge provides the following benefits:

|

||||||

|

|

||||||

|

|||||||

@ -3,15 +3,18 @@

|

|||||||

## Methodology

|

## Methodology

|

||||||

|

|

||||||

Qodo Merge PR Benchmark evaluates and compares the performance of Large Language Models (LLMs) in analyzing pull request code and providing meaningful code suggestions.

|

Qodo Merge PR Benchmark evaluates and compares the performance of Large Language Models (LLMs) in analyzing pull request code and providing meaningful code suggestions.

|

||||||

Our diverse dataset comprises of 400 pull requests from over 100 repositories, spanning various programming languages and frameworks to reflect real-world scenarios.

|

Our diverse dataset contains 400 pull requests from over 100 repositories, spanning various programming languages and frameworks to reflect real-world scenarios.

|

||||||

|

|

||||||

- For each pull request, we have pre-generated suggestions from [11](https://qodo-merge-docs.qodo.ai/pr_benchmark/#models-used-for-generating-the-benchmark-baseline) different top-performing models using the Qodo Merge `improve` tool. The prompt for response generation can be found [here](https://github.com/qodo-ai/pr-agent/blob/main/pr_agent/settings/code_suggestions/pr_code_suggestions_prompts_not_decoupled.toml).

|

- For each pull request, we have pre-generated suggestions from eleven different top-performing models using the Qodo Merge `improve` tool. The prompt for response generation can be found [here](https://github.com/qodo-ai/pr-agent/blob/main/pr_agent/settings/code_suggestions/pr_code_suggestions_prompts_not_decoupled.toml).

|

||||||

|

|

||||||

- To benchmark a model, we generate its suggestions for the same pull requests and ask a high-performing judge model to **rank** the new model's output against the 11 pre-generated baseline suggestions. We utilize OpenAI's `o3` model as the judge, though other models have yielded consistent results. The prompt for this ranking judgment is available [here](https://github.com/Codium-ai/pr-agent-settings/tree/main/benchmark).

|

- To benchmark a model, we generate its suggestions for the same pull requests and ask a high-performing judge model to **rank** the new model's output against the pre-generated baseline suggestions. We utilize OpenAI's `o3` model as the judge, though other models have yielded consistent results. The prompt for this ranking judgment is available [here](https://github.com/Codium-ai/pr-agent-settings/tree/main/benchmark).

|

||||||

|

|

||||||

- We aggregate ranking outcomes across all pull requests, calculating performance metrics for the evaluated model. We also analyze the qualitative feedback from the judge to identify the model's comparative strengths and weaknesses against the established baselines.

|

- We aggregate ranking outcomes across all pull requests, calculating performance metrics for the evaluated model.

|

||||||

|

|

||||||

|

- We also analyze the qualitative feedback from the judge to identify the model's comparative strengths and weaknesses against the established baselines.

|

||||||

This approach provides not just a quantitative score but also a detailed analysis of each model's strengths and weaknesses.

|

This approach provides not just a quantitative score but also a detailed analysis of each model's strengths and weaknesses.

|

||||||

|

|

||||||

|

A list of the models used for generating the baseline suggestions, and example results, can be found in the [Appendix](#appendix-example-results).
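The methodology text describes ranking a model against the baseline suggestions and aggregating the outcomes, but it does not state the formula behind the final score. The sketch below is therefore only an illustration under an assumed rule: each pull request contributes the fraction of baselines the evaluated model outranked, and the score is the mean of those fractions scaled to 0-100. The function name `aggregateScore` and its parameters are hypothetical.

```javascript
// Hypothetical scoring rule, NOT taken from the benchmark itself.
// ranks: the evaluated model's rank on each pull request (1 = best),
//        among itself plus `baselineCount` baseline answers.
function aggregateScore(ranks, baselineCount) {
  if (ranks.length === 0) {
    throw new Error("no rankings to aggregate");
  }
  const total = ranks.reduce((sum, rank) => {
    if (!Number.isInteger(rank) || rank < 1 || rank > baselineCount + 1) {
      throw new RangeError(`rank ${rank} outside 1..${baselineCount + 1}`);
    }
    // Fraction of baselines ranked below the evaluated model on this PR.
    return sum + (baselineCount + 1 - rank) / baselineCount;
  }, 0);
  return (100 * total) / ranks.length;
}
```

Under this assumed rule, a model that always ranks first against eleven baselines scores 100, and one that always ranks last scores 0.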

|

||||||

|

|

||||||

[//]: # (Note that this benchmark focuses on quality: the ability of an LLM to process complex pull request with multiple files and nuanced task to produce high-quality code suggestions.)

|

[//]: # (Note that this benchmark focuses on quality: the ability of an LLM to process complex pull request with multiple files and nuanced task to produce high-quality code suggestions.)

|

||||||

|

|

||||||

@ -19,7 +22,7 @@ This approach provides not just a quantitative score but also a detailed analysi

|

|||||||

|

|

||||||

[//]: # ()

|

[//]: # ()

|

||||||

|

|

||||||

## Results

|

## PR Benchmark Results

|

||||||

|

|

||||||

<table>

|

<table>

|

||||||

<thead>

|

<thead>

|

||||||

@ -67,6 +70,12 @@ This approach provides not just a quantitative score but also a detailed analysi

|

|||||||

<td style="text-align:left;"></td>

|

<td style="text-align:left;"></td>

|

||||||

<td style="text-align:center;"><b>39.0</b></td>

|

<td style="text-align:center;"><b>39.0</b></td>

|

||||||

</tr>

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td style="text-align:left;">Codex-mini</td>

|

||||||

|

<td style="text-align:left;">2025-06-20</td>

|

||||||

|

<td style="text-align:left;"><a href="https://platform.openai.com/docs/models/codex-mini-latest">unknown</a></td>

|

||||||

|

<td style="text-align:center;"><b>37.2</b></td>

|

||||||

|

</tr>

|

||||||

<tr>

|

<tr>

|

||||||

<td style="text-align:left;">Gemini-2.5-flash</td>

|

<td style="text-align:left;">Gemini-2.5-flash</td>

|

||||||

<td style="text-align:left;">2025-04-17</td>

|

<td style="text-align:left;">2025-04-17</td>

|

||||||

@ -196,7 +205,7 @@ weaknesses:

|

|||||||

- **Very low recall / shallow coverage:** In a large majority of cases it gives 0-1 suggestions and misses other evident, critical bugs highlighted by peer models, leading to inferior rankings.

|

- **Very low recall / shallow coverage:** In a large majority of cases it gives 0-1 suggestions and misses other evident, critical bugs highlighted by peer models, leading to inferior rankings.

|

||||||

- **Occasional incorrect or harmful fixes:** A noticeable subset of answers propose changes that break functionality or misunderstand the code (e.g. bad constant, wrong header logic, speculative rollbacks).

|

- **Occasional incorrect or harmful fixes:** A noticeable subset of answers propose changes that break functionality or misunderstand the code (e.g. bad constant, wrong header logic, speculative rollbacks).

|

||||||

- **Non-actionable placeholders:** Some “improved_code” sections contain comments or “…” rather than real patches, reducing practical value.

|

- **Non-actionable placeholders:** Some “improved_code” sections contain comments or “…” rather than real patches, reducing practical value.

|

||||||

-

|

|

||||||

### GPT-4.1

|

### GPT-4.1

|

||||||

|

|

||||||

Final score: **26.5**

|

Final score: **26.5**

|

||||||

@ -214,19 +223,57 @@ weaknesses:

|

|||||||

- **Occasional technical inaccuracies:** A noticeable subset of suggestions are wrong (mis-ordered assertions, harmful Bash `set` change, false dangling-reference claims) or carry metadata errors (mis-labeling files as “python”).

|

- **Occasional technical inaccuracies:** A noticeable subset of suggestions are wrong (mis-ordered assertions, harmful Bash `set` change, false dangling-reference claims) or carry metadata errors (mis-labeling files as “python”).

|

||||||

- **Repetitive / derivative fixes:** Many outputs duplicate earlier simplistic ideas (e.g., single null-check) without new insight, showing limited reasoning breadth.

|

- **Repetitive / derivative fixes:** Many outputs duplicate earlier simplistic ideas (e.g., single null-check) without new insight, showing limited reasoning breadth.

|

||||||

|

|

||||||

|

### OpenAI codex-mini

|

||||||

|

|

||||||

## Appendix - models used for generating the benchmark baseline

|

Final score: **37.2**

|

||||||

|

|

||||||

- anthropic_sonnet_3.7_v1:0

|

strengths:

|

||||||

- claude-4-opus-20250514

|

|

||||||

- claude-4-sonnet-20250514

|

|

||||||

- claude-4-sonnet-20250514_thinking_2048

|

|

||||||

- gemini-2.5-flash-preview-04-17

|

|

||||||

- gemini-2.5-pro-preview-05-06

|

|

||||||

- gemini-2.5-pro-preview-06-05_1024

|

|

||||||

- gemini-2.5-pro-preview-06-05_4096

|

|

||||||

- gpt-4.1

|

|

||||||

- o3

|

|

||||||

- o4-mini_medium

|

|

||||||

|

|

||||||

|

- **Can spot high-impact defects:** When it “locks on”, codex-mini often identifies the main runtime or security regression (e.g., race-conditions, logic inversions, blocking I/O, resource leaks) and proposes a minimal, direct patch that compiles and respects neighbouring style.

|

||||||

|

- **Produces concise, scoped fixes:** Valid answers usually stay within the allowed 3-suggestion limit, reference only the added lines, and contain clear before/after snippets that reviewers can apply verbatim.

|

||||||

|

- **Occasional broad coverage:** In a minority of cases the model catches multiple independent issues (logic + tests + docs) and outperforms every baseline answer, showing good contextual understanding of heterogeneous diffs.

|

||||||

|

|

||||||

|

weaknesses:

|

||||||

|

|

||||||

|

- **Output instability / format errors:** A very large share of responses are unusable—plain refusals, shell commands, or malformed/empty YAML—indicating brittle adherence to the required schema and tanking overall usefulness.

|

||||||

|

- **Critical-miss rate:** Even when the format is correct the model frequently overlooks the single most serious bug the diff introduces, instead focusing on stylistic nits or speculative refactors.

|

||||||

|

- **Introduces new problems:** Several suggestions add unsupported APIs, undeclared variables, wrong types, or break compilation, hurting trust in the recommendations.

|

||||||

|

- **Rule violations:** It often edits lines outside the diff, exceeds the 3-suggestion cap, or labels cosmetic tweaks as “critical”, showing inconsistent guideline compliance.

|

||||||

|

|

||||||

|

## Appendix - Example Results

|

||||||

|

|

||||||

|

Some examples of benchmarked PRs and their results:

|

||||||

|

|

||||||

|

- [Example 1](https://www.qodo.ai/images/qodo_merge_benchmark/example_results1.html)

|

||||||

|

- [Example 2](https://www.qodo.ai/images/qodo_merge_benchmark/example_results2.html)

|

||||||

|

- [Example 3](https://www.qodo.ai/images/qodo_merge_benchmark/example_results3.html)

|

||||||

|

- [Example 4](https://www.qodo.ai/images/qodo_merge_benchmark/example_results4.html)

|

||||||

|

|

||||||

|

### Models Used for Benchmarking

|

||||||

|

|

||||||

|

The following models were used for generating the benchmark baseline:

|

||||||

|

|

||||||

|

```markdown

|

||||||

|

(1) anthropic_sonnet_3.7_v1:0

|

||||||

|

|

||||||

|

(2) claude-4-opus-20250514

|

||||||

|

|

||||||

|

(3) claude-4-sonnet-20250514

|

||||||

|

|

||||||

|

(4) claude-4-sonnet-20250514_thinking_2048

|

||||||

|

|

||||||

|

(5) gemini-2.5-flash-preview-04-17

|

||||||

|

|

||||||

|

(6) gemini-2.5-pro-preview-05-06

|

||||||

|

|

||||||

|

(7) gemini-2.5-pro-preview-06-05_1024

|

||||||

|

|

||||||

|

(8) gemini-2.5-pro-preview-06-05_4096

|

||||||

|

|

||||||

|

(9) gpt-4.1

|

||||||

|

|

||||||

|

(10) o3

|

||||||

|

|

||||||

|

(11) o4-mini_medium

|

||||||

|

```

|

||||||

|

|

||||||

|

|||||||

@ -1,23 +1,21 @@

|

|||||||

# Recent Updates and Future Roadmap

|

# Recent Updates and Future Roadmap

|

||||||

|

|

||||||

`Page last updated: 2025-06-01`

|

`Page last updated: 2025-07-01`

|

||||||

|

|

||||||

This page summarizes recent enhancements to Qodo Merge (last three months).

|

This page summarizes recent enhancements to Qodo Merge (last three months).

|

||||||

|

|

||||||

It also outlines our development roadmap for the upcoming three months. Please note that the roadmap is subject to change, and features may be adjusted, added, or reprioritized.

|

It also outlines our development roadmap for the upcoming three months. Please note that the roadmap is subject to change, and features may be adjusted, added, or reprioritized.

|

||||||

|

|

||||||

=== "Recent Updates"

|

=== "Recent Updates"

|

||||||

|

- **Receiving Qodo Merge feedback locally**: You can receive automatic feedback from Qodo Merge in your local IDE after each commit. ([Learn more](https://github.com/qodo-ai/agents/tree/main/agents/qodo-merge-post-commit))

|

||||||

|

- **Mermaid Diagrams**: Qodo Merge now generates Mermaid diagrams for PRs by default, providing a visual representation of code changes. ([Learn more](https://qodo-merge-docs.qodo.ai/tools/describe/#sequence-diagram-support))

|

||||||

|

- **Best Practices Hierarchy**: Introducing support for structured best practices, such as for folders in monorepos or a unified best practice file for a group of repositories. ([Learn more](https://qodo-merge-docs.qodo.ai/tools/improve/#global-hierarchical-best-practices))

|

||||||

- **Simplified Free Tier**: Qodo Merge now offers a simplified free tier with a monthly limit of 75 PR reviews per organization, replacing the previous two-week trial. ([Learn more](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users))

|

- **Simplified Free Tier**: Qodo Merge now offers a simplified free tier with a monthly limit of 75 PR reviews per organization, replacing the previous two-week trial. ([Learn more](https://qodo-merge-docs.qodo.ai/installation/qodo_merge/#cloud-users))

|

||||||

- **CLI Endpoint**: A new Qodo Merge endpoint that accepts a list of before/after code changes, executes Qodo Merge commands, and returns the results. Currently available for enterprise customers. Contact [Qodo](https://www.qodo.ai/contact/) for more information.

|

- **CLI Endpoint**: A new Qodo Merge endpoint that accepts a list of before/after code changes, executes Qodo Merge commands, and returns the results. Currently available for enterprise customers. Contact [Qodo](https://www.qodo.ai/contact/) for more information.

|

||||||

- **Linear tickets support**: Qodo Merge now supports Linear tickets. ([Learn more](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#linear-integration))

|

- **Linear tickets support**: Qodo Merge now supports Linear tickets. ([Learn more](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#linear-integration))

|

||||||

- **Smart Update**: Upon PR updates, Qodo Merge will offer tailored code suggestions, addressing both the entire PR and the specific incremental changes since the last feedback ([Learn more](https://qodo-merge-docs.qodo.ai/core-abilities/incremental_update//))

|

- **Smart Update**: Upon PR updates, Qodo Merge will offer tailored code suggestions, addressing both the entire PR and the specific incremental changes since the last feedback ([Learn more](https://qodo-merge-docs.qodo.ai/core-abilities/incremental_update//))

|

||||||

- **Qodo Merge Pull Request Benchmark** - evaluating the performance of LLMs in analyzing pull request code ([Learn more](https://qodo-merge-docs.qodo.ai/pr_benchmark/))

|

|

||||||

- **Chat on Suggestions**: Users can now chat with code suggestions ([Learn more](https://qodo-merge-docs.qodo.ai/tools/improve/#chat-on-code-suggestions))

|

|

||||||

- **Scan Repo Discussions Tool**: A new tool that analyzes past code discussions to generate a `best_practices.md` file, distilling key insights and recommendations. ([Learn more](https://qodo-merge-docs.qodo.ai/tools/scan_repo_discussions/))

|

|

||||||

|

|

||||||

|

|

||||||

=== "Future Roadmap"

|

=== "Future Roadmap"

|

||||||

- **Best Practices Hierarchy**: Introducing support for structured best practices, such as for folders in monorepos or a unified best practice file for a group of repositories.

|

|

||||||

- **Enhanced `review` tool**: Enhancing the `review` tool to validate compliance across multiple categories, including security, tickets, and custom best practices.

|

- **Enhanced `review` tool**: Enhancing the `review` tool to validate compliance across multiple categories, including security, tickets, and custom best practices.

|

||||||

- **Smarter context retrieval**: Leverage AST and LSP analysis to gather relevant context from across the entire repository.

|

- **Smarter context retrieval**: Leverage AST and LSP analysis to gather relevant context from across the entire repository.

|

||||||

- **Enhanced portal experience**: Improved user experience in the Qodo Merge portal with new options and capabilities.

|

- **Enhanced portal experience**: Improved user experience in the Qodo Merge portal with new options and capabilities.

|

||||||

|

|||||||

@ -56,20 +56,27 @@ Everything below this marker is treated as previously auto-generated content and

|

|||||||

|

|

||||||

{width=512}

|

{width=512}

|

||||||

|

|

||||||

### Sequence Diagram Support

|

## Sequence Diagram Support

|

||||||

When the `enable_pr_diagram` option is enabled in your configuration, the `/describe` tool will include a `Mermaid` sequence diagram in the PR description.

|

The `/describe` tool includes a Mermaid sequence diagram showing component/function interactions.

|

||||||

|

|

||||||

This diagram represents interactions between components/functions based on the diff content.

|

This option is enabled by default via the `pr_description.enable_pr_diagram` param.

|

||||||

|

|

||||||

### How to enable

|

|

||||||

|

|

||||||

In your configuration:

|

[//]: # (### How to enable\disable)

|

||||||

|

|

||||||

```

|

[//]: # ()

|

||||||

toml

|

[//]: # (In your configuration:)

|

||||||

[pr_description]

|

|

||||||

enable_pr_diagram = true

|

[//]: # ()

|

||||||

```

|

[//]: # (```)

|

||||||

|

|

||||||

|

[//]: # (toml)

|

||||||

|

|

||||||

|

[//]: # ([pr_description])

|

||||||

|

|

||||||

|

[//]: # (enable_pr_diagram = true)

|

||||||

|

|

||||||

|

[//]: # (```)

|

||||||

|

|

||||||

## Configuration options

|

## Configuration options

|

||||||

|

|

||||||

@ -117,8 +124,8 @@ enable_pr_diagram = true

|

|||||||

<td>If set to true, the file list in the "Changes walkthrough" section will be collapsible. If set to "adaptive", the file list will be collapsible only if there are more than 8 files. Default is "adaptive".</td>

|

<td>If set to true, the file list in the "Changes walkthrough" section will be collapsible. If set to "adaptive", the file list will be collapsible only if there are more than 8 files. Default is "adaptive".</td>

|

||||||

</tr>

|

</tr>

|

||||||

<tr>

|

<tr>

|

||||||

<td><b>enable_large_pr_handling</b></td>

|

<td><b>enable_large_pr_handling 💎</b></td>

|

||||||

<td>Pro feature. If set to true, in case of a large PR the tool will make several calls to the AI and combine them to be able to cover more files. Default is true.</td>

|

<td>If set to true, in case of a large PR the tool will make several calls to the AI and combine them to be able to cover more files. Default is true.</td>

|

||||||

</tr>

|

</tr>

|

||||||

<tr>

|

<tr>

|

||||||

<td><b>enable_help_text</b></td>

|

<td><b>enable_help_text</b></td>

|

||||||

@ -126,7 +133,7 @@ enable_pr_diagram = true

|

|||||||

</tr>

|

</tr>

|

||||||

<tr>

|

<tr>

|

||||||

<td><b>enable_pr_diagram</b></td>

|

<td><b>enable_pr_diagram</b></td>

|

||||||

<td>If set to true, the tool will generate a horizontal Mermaid flowchart summarizing the main pull request changes. This field remains empty if not applicable. Default is false.</td>

|

<td>If set to true, the tool will generate a horizontal Mermaid flowchart summarizing the main pull request changes. This field remains empty if not applicable. Default is true.</td>

|

||||||

</tr>

|

</tr>

|

||||||

</table>

|

</table>

|

||||||

|

|

||||||

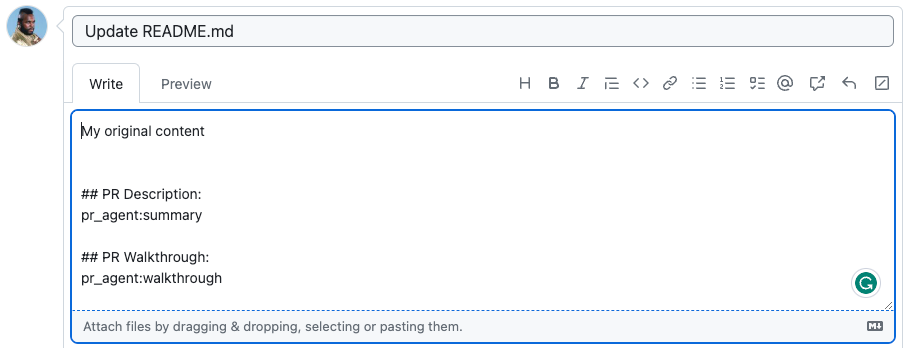

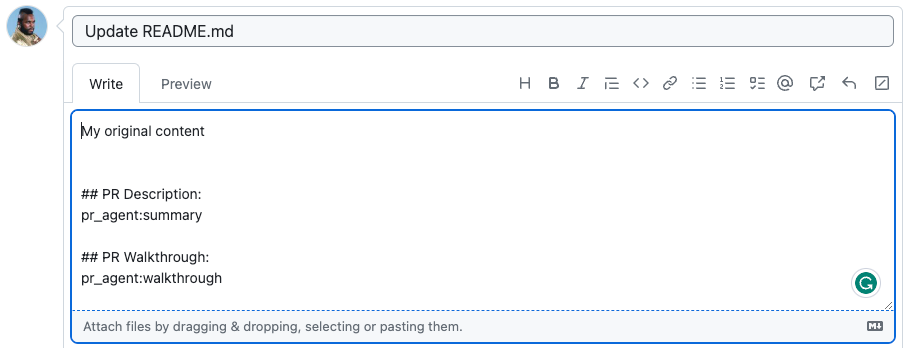

@ -170,9 +177,12 @@ pr_agent:summary

|

|||||||

|

|

||||||

## PR Walkthrough:

|

## PR Walkthrough:

|

||||||

pr_agent:walkthrough

|

pr_agent:walkthrough

|

||||||

|

|

||||||

|

## PR Diagram:

|

||||||

|

pr_agent:diagram

|

||||||

```

|

```

|

||||||

|

|

||||||

The marker `pr_agent:type` will be replaced with the PR type, `pr_agent:summary` will be replaced with the PR summary, and `pr_agent:walkthrough` will be replaced with the PR walkthrough.

|

The marker `pr_agent:type` will be replaced with the PR type, `pr_agent:summary` will be replaced with the PR summary, `pr_agent:walkthrough` will be replaced with the PR walkthrough, and `pr_agent:diagram` will be replaced with the sequence diagram (if enabled).
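
As a rough illustration of the marker substitution described above, the sketch below replaces each `pr_agent:<name>` marker in a description template with generated content. Only the marker names come from the documentation; the helper `fill_markers`, the sample values, and the choice to leave unknown markers untouched are assumptions, not PR-Agent's actual implementation.

```python
import re


def fill_markers(template: str, content: dict) -> str:
    """Replace every `pr_agent:<name>` marker with content[name] when available.

    Hypothetical helper for illustration only.
    """
    def substitute(match: re.Match) -> str:
        name = match.group(1)
        # Assumption: markers without generated content (for example the
        # diagram when it is disabled) are left as-is.
        return content.get(name, match.group(0))

    return re.sub(r"pr_agent:(\w+)", substitute, template)


template = "## PR Type:\npr_agent:type\n\n## PR Diagram:\npr_agent:diagram\n"
filled = fill_markers(template, {"type": "Enhancement"})
print(filled)
```

Here the `type` marker is filled in, while `pr_agent:diagram` stays in place because no diagram content was supplied.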

|

||||||

|

|

||||||

{width=512}

|

{width=512}

|

||||||

|

|

||||||

@ -184,6 +194,7 @@ becomes

|

|||||||

|

|

||||||

- `use_description_markers`: if set to true, the tool will use the markers template. It replaces every marker of the form `pr_agent:marker_name` with the relevant content. Default is false.

|

- `use_description_markers`: if set to true, the tool will use the markers template. It replaces every marker of the form `pr_agent:marker_name` with the relevant content. Default is false.

|

||||||

- `include_generated_by_header`: if set to true, the tool will add a dedicated header: 'Generated by PR Agent at ...' to any automatic content. Default is true.

|

- `include_generated_by_header`: if set to true, the tool will add a dedicated header: 'Generated by PR Agent at ...' to any automatic content. Default is true.

|

||||||

|

- `diagram`: if present as a marker, will be replaced by the PR sequence diagram (if enabled).

|

||||||

|

|

||||||

## Custom labels

|

## Custom labels

|

||||||

|

|

||||||

|

|||||||

@ -437,9 +437,26 @@ dual_publishing_score_threshold = x

|

|||||||

|

|

||||||

Where x represents the minimum score threshold (>=) for suggestions to be presented as committable PR comments in addition to the table. Default is -1 (disabled).

|

Where x represents the minimum score threshold (>=) for suggestions to be presented as committable PR comments in addition to the table. Default is -1 (disabled).
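
The threshold rule above can be sketched as a small predicate. This is a minimal sketch, assuming that any negative threshold (such as the default `-1`) means the feature is disabled; the function name `should_dual_publish` is hypothetical and does not come from the PR-Agent code base.

```python
def should_dual_publish(score: int, threshold: int = -1) -> bool:
    """Return True when a suggestion should also be posted as a committable comment.

    Assumption: a negative threshold (the documented default is -1) disables
    dual publishing; otherwise the score must be >= the threshold.
    """
    if threshold < 0:
        return False
    return score >= threshold


print(should_dual_publish(8, threshold=7))
print(should_dual_publish(8))
```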

|

||||||

|

|

||||||

|

### Controlling suggestions depth

|

||||||

|

|

||||||

|

> `💎 feature`

|

||||||

|

|

||||||

|

You can control the depth and comprehensiveness of the code suggestions by using the `pr_code_suggestions.suggestions_depth` parameter.

|

||||||

|

|

||||||

|

Available options:

|

||||||

|

|

||||||

|

- `selective` - Shows only suggestions above a score threshold of 6

|

||||||

|

- `regular` - Default mode with balanced suggestion coverage

|

||||||

|

- `exhaustive` - Provides maximum suggestion comprehensiveness

|

||||||

|

|

||||||

|

(Alternatively, use numeric values: 1, 2, or 3 respectively)

|

||||||

|

|

||||||

|

We recommend starting with `regular` mode, then exploring `exhaustive` mode, which can provide more comprehensive suggestions and enhanced bug detection.
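
To make the name/number equivalence concrete, the sketch below maps either form to a canonical mode name. The helper `normalize_depth` and its error handling are hypothetical; only the three mode names and their numeric aliases (1, 2, 3) come from the text above.

```python
# Numeric aliases as listed in the documentation: 1, 2, 3 respectively.
DEPTH_BY_NUMBER = {1: "selective", 2: "regular", 3: "exhaustive"}


def normalize_depth(value="regular") -> str:
    """Map a mode name or its numeric alias to the canonical mode name.

    Hypothetical helper for illustration; not part of PR-Agent.
    """
    if isinstance(value, int) or str(value).strip().isdigit():
        value = DEPTH_BY_NUMBER.get(int(value))
    else:
        value = str(value).strip().lower()
    if value not in DEPTH_BY_NUMBER.values():
        raise ValueError(f"unknown suggestions_depth: {value!r}")
    return value


print(normalize_depth(3))
print(normalize_depth("selective"))
```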

|

||||||

|

|

||||||

|

|

||||||

### Self-review

|

### Self-review

|

||||||

|

|

||||||

> `💎 feature` Platforms supported: GitHub, GitLab

|

> `💎 feature. Platforms supported: GitHub, GitLab`

|

||||||

|

|

||||||

If you set in a configuration file:

|

If you set in a configuration file:

|

||||||

|

|

||||||

@ -521,6 +538,10 @@ Note: Chunking is primarily relevant for large PRs. For most PRs (up to 600 line

|

|||||||

<td><b>enable_chat_in_code_suggestions</b></td>

|

<td><b>enable_chat_in_code_suggestions</b></td>

|

||||||

<td>If set to true, QM bot will interact with comments made on code changes it has proposed. Default is true.</td>

|

<td>If set to true, QM bot will interact with comments made on code changes it has proposed. Default is true.</td>

|

||||||

</tr>

|

</tr>

|

||||||

|

<tr>

|

||||||

|

<td><b>suggestions_depth 💎</b></td>

|

||||||

|

<td> Controls the depth of the suggestions. Can be set to 'selective', 'regular', or 'exhaustive'. Default is 'regular'.</td>

|

||||||

|

</tr>

|

||||||

<tr>

|

<tr>

|

||||||

<td><b>dual_publishing_score_threshold</b></td>

|

<td><b>dual_publishing_score_threshold</b></td>

|

||||||

<td>Minimum score threshold for suggestions to be presented as committable PR comments in addition to the table. Default is -1 (disabled).</td>

|

<td>Minimum score threshold for suggestions to be presented as committable PR comments in addition to the table. Default is -1 (disabled).</td>

|

||||||

|

|||||||

@ -250,3 +250,15 @@ Where the `ignore_pr_authors` is a list of usernames that you want to ignore.

|

|||||||

|

|

||||||

!!! note

|

!!! note

|

||||||

There is one specific case where bots will receive an automatic response - when they generated a PR with a _failed test_. In that case, the [`ci_feedback`](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) tool will be invoked.

|

There is one specific case where bots will receive an automatic response - when they generated a PR with a _failed test_. In that case, the [`ci_feedback`](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) tool will be invoked.

|

||||||

|

|

||||||

|

### Ignoring Generated Files by Language/Framework

|

||||||

|

|

||||||

|

To automatically exclude files generated by specific languages or frameworks, you can add the following to your `configuration.toml` file:

|

||||||

|

|

||||||

|

```

|

||||||

|

[config]

|

||||||

|

ignore_language_framework = ['protobuf', ...]

|

||||||

|

```

|

||||||

|

|

||||||

|

You can view the list of auto-generated file patterns in [`generated_code_ignore.toml`](https://github.com/qodo-ai/pr-agent/blob/main/pr_agent/settings/generated_code_ignore.toml).

|

||||||

|

Files matching these glob patterns will be automatically excluded from PR Agent analysis.
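
A minimal sketch of how such glob patterns can be turned into regular expressions and matched against file paths. `translate_globs_to_regexes` mirrors the helper added in this change set; the `is_ignored` wrapper and the `**/*.pb.go` pattern are illustrative assumptions and are not taken from `generated_code_ignore.toml`.

```python
import fnmatch
import re


def translate_globs_to_regexes(globs: list) -> list:
    """Translate glob patterns to regex strings.

    A pattern starting with '**/' is also translated without that prefix so
    that root-level files (which contain no '/') are covered as well.
    """
    regexes = []
    for pattern in globs:
        regexes.append(fnmatch.translate(pattern))
        if pattern.startswith("**/"):
            regexes.append(fnmatch.translate(pattern[3:]))
    return regexes


def is_ignored(path: str, globs: list) -> bool:
    """Hypothetical wrapper: True if the path matches any translated pattern."""
    return any(re.match(rx, path) for rx in translate_globs_to_regexes(globs))


patterns = ["**/*.pb.go"]  # illustrative pattern, an assumption
for path in ["api.pb.go", "src/gen/api.pb.go", "main.go"]:
    print(path, is_ignored(path, patterns))
```

The extra translation matters because `fnmatch.translate("**/*.pb.go")` alone requires a `/` in the path, so a root-level `api.pb.go` would otherwise slip through.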

|

||||||

@ -232,6 +232,14 @@ AWS_SECRET_ACCESS_KEY="..."

|

|||||||

AWS_REGION_NAME="..."

|

AWS_REGION_NAME="..."

|

||||||

```

|

```

|

||||||

|

|

||||||

|

You can also use the new Meta Llama 4 models available on Amazon Bedrock:

|

||||||

|

|

||||||

|

```toml

|

||||||

|

[config] # in configuration.toml

|

||||||

|

model="bedrock/us.meta.llama4-scout-17b-instruct-v1:0"

|

||||||

|

fallback_models=["bedrock/us.meta.llama4-maverick-17b-instruct-v1:0"]

|

||||||

|

```

|

||||||

|

|

||||||

See [litellm](https://docs.litellm.ai/docs/providers/bedrock#usage) documentation for more information about the environment variables required for Amazon Bedrock.

|

See [litellm](https://docs.litellm.ai/docs/providers/bedrock#usage) documentation for more information about the environment variables required for Amazon Bedrock.

|

||||||

|

|

||||||

### DeepSeek

|

### DeepSeek

|

||||||

|

|||||||

@ -46,6 +46,7 @@ nav:

|

|||||||

- Auto approval: 'core-abilities/auto_approval.md'

|

- Auto approval: 'core-abilities/auto_approval.md'

|

||||||

- Auto best practices: 'core-abilities/auto_best_practices.md'

|

- Auto best practices: 'core-abilities/auto_best_practices.md'

|

||||||

- Chat on code suggestions: 'core-abilities/chat_on_code_suggestions.md'

|

- Chat on code suggestions: 'core-abilities/chat_on_code_suggestions.md'

|

||||||

|

- Chrome extension: 'chrome-extension/index.md'

|

||||||

- Code validation: 'core-abilities/code_validation.md'

|

- Code validation: 'core-abilities/code_validation.md'

|

||||||

# - Compression strategy: 'core-abilities/compression_strategy.md'

|

# - Compression strategy: 'core-abilities/compression_strategy.md'

|

||||||

- Dynamic context: 'core-abilities/dynamic_context.md'

|

- Dynamic context: 'core-abilities/dynamic_context.md'

|

||||||

@ -57,11 +58,11 @@ nav:

|

|||||||

- RAG context enrichment: 'core-abilities/rag_context_enrichment.md'

|

- RAG context enrichment: 'core-abilities/rag_context_enrichment.md'

|

||||||

- Self-reflection: 'core-abilities/self_reflection.md'

|

- Self-reflection: 'core-abilities/self_reflection.md'

|

||||||

- Static code analysis: 'core-abilities/static_code_analysis.md'

|

- Static code analysis: 'core-abilities/static_code_analysis.md'

|

||||||

- Chrome Extension:

|

# - Chrome Extension:

|

||||||

- Qodo Merge Chrome Extension: 'chrome-extension/index.md'

|

# - Qodo Merge Chrome Extension: 'chrome-extension/index.md'

|

||||||

- Features: 'chrome-extension/features.md'

|

# - Features: 'chrome-extension/features.md'

|

||||||

- Data Privacy: 'chrome-extension/data_privacy.md'

|

# - Data Privacy: 'chrome-extension/data_privacy.md'

|

||||||

- Options: 'chrome-extension/options.md'

|

# - Options: 'chrome-extension/options.md'

|

||||||

- PR Benchmark:

|

- PR Benchmark:

|

||||||

- PR Benchmark: 'pr_benchmark/index.md'

|

- PR Benchmark: 'pr_benchmark/index.md'

|

||||||

- Recent Updates:

|

- Recent Updates:

|

||||||

|

|||||||

@ -51,7 +51,7 @@ class PRAgent:

|

|||||||

def __init__(self, ai_handler: partial[BaseAiHandler,] = LiteLLMAIHandler):

|

def __init__(self, ai_handler: partial[BaseAiHandler,] = LiteLLMAIHandler):

|

||||||

self.ai_handler = ai_handler # will be initialized in run_action

|

self.ai_handler = ai_handler # will be initialized in run_action

|

||||||

|

|

||||||

async def handle_request(self, pr_url, request, notify=None) -> bool:

|

async def _handle_request(self, pr_url, request, notify=None) -> bool:

|

||||||

# First, apply repo specific settings if exists

|

# First, apply repo specific settings if exists

|

||||||

apply_repo_settings(pr_url)

|

apply_repo_settings(pr_url)

|

||||||

|

|

||||||

@ -117,3 +117,10 @@ class PRAgent:

|

|||||||

else:

|

else:

|

||||||

return False

|

return False

|

||||||

return True

|

return True

|

||||||

|

|

||||||

|

async def handle_request(self, pr_url, request, notify=None) -> bool:

|

||||||

|

try:

|

||||||

|

return await self._handle_request(pr_url, request, notify)

|

||||||

|

except:

|

||||||

|

get_logger().exception("Failed to process the command.")

|

||||||

|

return False

|

||||||

|

|||||||

@ -62,19 +62,23 @@ MAX_TOKENS = {

|

|||||||

'vertex_ai/gemini-2.5-pro-preview-03-25': 1048576,

|

'vertex_ai/gemini-2.5-pro-preview-03-25': 1048576,

|

||||||

'vertex_ai/gemini-2.5-pro-preview-05-06': 1048576,

|

'vertex_ai/gemini-2.5-pro-preview-05-06': 1048576,

|

||||||

'vertex_ai/gemini-2.5-pro-preview-06-05': 1048576,

|

'vertex_ai/gemini-2.5-pro-preview-06-05': 1048576,

|

||||||

|

'vertex_ai/gemini-2.5-pro': 1048576,

|

||||||

'vertex_ai/gemini-1.5-flash': 1048576,

|

'vertex_ai/gemini-1.5-flash': 1048576,

|

||||||

'vertex_ai/gemini-2.0-flash': 1048576,

|

'vertex_ai/gemini-2.0-flash': 1048576,

|

||||||

'vertex_ai/gemini-2.5-flash-preview-04-17': 1048576,

|

'vertex_ai/gemini-2.5-flash-preview-04-17': 1048576,

|

||||||

'vertex_ai/gemini-2.5-flash-preview-05-20': 1048576,

|

'vertex_ai/gemini-2.5-flash-preview-05-20': 1048576,

|

||||||

|

'vertex_ai/gemini-2.5-flash': 1048576,

|

||||||

'vertex_ai/gemma2': 8200,

|

'vertex_ai/gemma2': 8200,

|

||||||

'gemini/gemini-1.5-pro': 1048576,

|

'gemini/gemini-1.5-pro': 1048576,

|

||||||

'gemini/gemini-1.5-flash': 1048576,

|

'gemini/gemini-1.5-flash': 1048576,

|

||||||

'gemini/gemini-2.0-flash': 1048576,

|

'gemini/gemini-2.0-flash': 1048576,

|

||||||

'gemini/gemini-2.5-flash-preview-04-17': 1048576,

|

'gemini/gemini-2.5-flash-preview-04-17': 1048576,

|

||||||

'gemini/gemini-2.5-flash-preview-05-20': 1048576,

|

'gemini/gemini-2.5-flash-preview-05-20': 1048576,

|

||||||

|

'gemini/gemini-2.5-flash': 1048576,

|

||||||

'gemini/gemini-2.5-pro-preview-03-25': 1048576,

|

'gemini/gemini-2.5-pro-preview-03-25': 1048576,

|

||||||

'gemini/gemini-2.5-pro-preview-05-06': 1048576,

|

'gemini/gemini-2.5-pro-preview-05-06': 1048576,

|

||||||

'gemini/gemini-2.5-pro-preview-06-05': 1048576,

|

'gemini/gemini-2.5-pro-preview-06-05': 1048576,

|

||||||

|

'gemini/gemini-2.5-pro': 1048576,

|

||||||

'codechat-bison': 6144,

|

'codechat-bison': 6144,

|

||||||

'codechat-bison-32k': 32000,

|

'codechat-bison-32k': 32000,

|

||||||

'anthropic.claude-instant-v1': 100000,

|

'anthropic.claude-instant-v1': 100000,

|

||||||

@ -109,6 +113,8 @@ MAX_TOKENS = {

|

|||||||

'claude-3-5-sonnet': 100000,

|

'claude-3-5-sonnet': 100000,

|

||||||

'groq/meta-llama/llama-4-scout-17b-16e-instruct': 131072,

|

'groq/meta-llama/llama-4-scout-17b-16e-instruct': 131072,

|

||||||

'groq/meta-llama/llama-4-maverick-17b-128e-instruct': 131072,

|

'groq/meta-llama/llama-4-maverick-17b-128e-instruct': 131072,

|

||||||

|

'bedrock/us.meta.llama4-scout-17b-instruct-v1:0': 128000,

|

||||||

|

'bedrock/us.meta.llama4-maverick-17b-instruct-v1:0': 128000,

|

||||||

'groq/llama3-8b-8192': 8192,

|

'groq/llama3-8b-8192': 8192,

|

||||||

'groq/llama3-70b-8192': 8192,

|

'groq/llama3-70b-8192': 8192,

|

||||||

'groq/llama-3.1-8b-instant': 8192,

|

'groq/llama-3.1-8b-instant': 8192,

|

||||||

|

|||||||

@ -2,6 +2,7 @@ import fnmatch

|

|||||||

import re

|

import re

|

||||||

|

|

||||||

from pr_agent.config_loader import get_settings

|

from pr_agent.config_loader import get_settings

|

||||||

|

from pr_agent.log import get_logger

|

||||||

|

|

||||||

|

|

||||||

def filter_ignored(files, platform = 'github'):

|

def filter_ignored(files, platform = 'github'):

|

||||||

@ -17,7 +18,17 @@ def filter_ignored(files, platform = 'github'):

|

|||||||

glob_setting = get_settings().ignore.glob

|

glob_setting = get_settings().ignore.glob

|

||||||

if isinstance(glob_setting, str): # --ignore.glob=[.*utils.py], --ignore.glob=.*utils.py

|

if isinstance(glob_setting, str): # --ignore.glob=[.*utils.py], --ignore.glob=.*utils.py

|

||||||

glob_setting = glob_setting.strip('[]').split(",")

|

glob_setting = glob_setting.strip('[]').split(",")

|

||||||

patterns += [fnmatch.translate(glob) for glob in glob_setting]

|

patterns += translate_globs_to_regexes(glob_setting)

|

||||||

|

|

||||||

|

code_generators = get_settings().config.get('ignore_language_framework', [])

|

||||||

|

if isinstance(code_generators, str):

|

||||||

|

get_logger().warning("'ignore_language_framework' should be a list. Skipping language framework filtering.")

|

||||||

|

code_generators = []

|

||||||

|

for cg in code_generators:

|

||||||

|

glob_patterns = get_settings().generated_code.get(cg, [])

|

||||||

|

if isinstance(glob_patterns, str):

|

||||||

|

glob_patterns = [glob_patterns]

|

||||||

|

patterns += translate_globs_to_regexes(glob_patterns)

|

||||||

|

|

||||||

# compile all valid patterns

|

# compile all valid patterns

|

||||||

compiled_patterns = []

|

compiled_patterns = []

|

||||||

@ -66,3 +77,11 @@ def filter_ignored(files, platform = 'github'):

|

|||||||

print(f"Could not filter file list: {e}")

|

print(f"Could not filter file list: {e}")

|

||||||

|

|

||||||

return files

|

return files

|

||||||

|

|

||||||

|

def translate_globs_to_regexes(globs: list):

|

||||||

|

regexes = []

|

||||||

|

for pattern in globs:

|

||||||

|

regexes.append(fnmatch.translate(pattern))

|

||||||

|

if pattern.startswith("**/"): # cover root-level files

|

||||||

|

regexes.append(fnmatch.translate(pattern[3:]))

|

||||||

|

return regexes

|

||||||

|

|||||||

@ -14,6 +14,7 @@ global_settings = Dynaconf(

|

|||||||

settings_files=[join(current_dir, f) for f in [

|

settings_files=[join(current_dir, f) for f in [

|

||||||

"settings/configuration.toml",

|

"settings/configuration.toml",

|

||||||

"settings/ignore.toml",

|

"settings/ignore.toml",

|

||||||

|

"settings/generated_code_ignore.toml",

|

||||||

"settings/language_extensions.toml",

|

"settings/language_extensions.toml",

|

||||||

"settings/pr_reviewer_prompts.toml",

|

"settings/pr_reviewer_prompts.toml",

|

||||||

"settings/pr_questions_prompts.toml",

|

"settings/pr_questions_prompts.toml",

|

||||||

|

|||||||

@ -22,6 +22,7 @@ try:

|

|||||||

from azure.devops.connection import Connection

|

from azure.devops.connection import Connection

|

||||||

# noinspection PyUnresolvedReferences

|

# noinspection PyUnresolvedReferences

|

||||||

from azure.devops.released.git import (Comment, CommentThread, GitPullRequest, GitVersionDescriptor, GitClient, CommentThreadContext, CommentPosition)

|

from azure.devops.released.git import (Comment, CommentThread, GitPullRequest, GitVersionDescriptor, GitClient, CommentThreadContext, CommentPosition)

|

||||||

|

from azure.devops.released.work_item_tracking import WorkItemTrackingClient

|

||||||

# noinspection PyUnresolvedReferences

|

# noinspection PyUnresolvedReferences

|

||||||

from azure.identity import DefaultAzureCredential

|

from azure.identity import DefaultAzureCredential

|

||||||

from msrest.authentication import BasicAuthentication

|

from msrest.authentication import BasicAuthentication

|

||||||

@ -39,7 +40,7 @@ class AzureDevopsProvider(GitProvider):

|

|||||||

"Azure DevOps provider is not available. Please install the required dependencies."

|

"Azure DevOps provider is not available. Please install the required dependencies."

|

||||||

)

|

)

|

||||||

|

|

||||||

self.azure_devops_client = self._get_azure_devops_client()

|

self.azure_devops_client, self.azure_devops_board_client = self._get_azure_devops_client()

|

||||||

self.diff_files = None

|

self.diff_files = None

|

||||||

self.workspace_slug = None

|

self.workspace_slug = None

|

||||||

self.repo_slug = None

|

self.repo_slug = None

|

||||||

@ -566,7 +567,7 @@ class AzureDevopsProvider(GitProvider):

|

|||||||

return workspace_slug, repo_slug, pr_number

|

return workspace_slug, repo_slug, pr_number

|

||||||

|

|

||||||

@staticmethod

|

@staticmethod

|

||||||

def _get_azure_devops_client() -> GitClient:

|

def _get_azure_devops_client() -> Tuple[GitClient, WorkItemTrackingClient]:

|

||||||

org = get_settings().azure_devops.get("org", None)

|

org = get_settings().azure_devops.get("org", None)

|

||||||

pat = get_settings().azure_devops.get("pat", None)

|

pat = get_settings().azure_devops.get("pat", None)

|

||||||

|

|

||||||

@ -588,13 +589,12 @@ class AzureDevopsProvider(GitProvider):

|

|||||||

get_logger().error(f"No PAT found in settings, and Azure Default Authentication failed, error: {e}")

|

get_logger().error(f"No PAT found in settings, and Azure Default Authentication failed, error: {e}")

|

||||||

raise

|

raise

|

||||||

|

|

||||||

credentials = BasicAuthentication("", auth_token)

|

|

||||||

|

|

||||||

credentials = BasicAuthentication("", auth_token)

|

credentials = BasicAuthentication("", auth_token)

|

||||||

azure_devops_connection = Connection(base_url=org, creds=credentials)

|

azure_devops_connection = Connection(base_url=org, creds=credentials)

|

||||||

azure_devops_client = azure_devops_connection.clients.get_git_client()

|

azure_devops_client = azure_devops_connection.clients.get_git_client()

|

||||||

|

azure_devops_board_client = azure_devops_connection.clients.get_work_item_tracking_client()

|

||||||

|

|

||||||

return azure_devops_client

|

return azure_devops_client, azure_devops_board_client

|

||||||

|

|

||||||

def _get_repo(self):

|

def _get_repo(self):

|

||||||

if self.repo is None:

|

if self.repo is None:

|

||||||

@ -635,4 +635,50 @@ class AzureDevopsProvider(GitProvider):

|

|||||||

last = commits[0]

|

last = commits[0]

|

||||||

url = self.azure_devops_client.normalized_url + "/" + self.workspace_slug + "/_git/" + self.repo_slug + "/commit/" + last.commit_id

|

url = self.azure_devops_client.normalized_url + "/" + self.workspace_slug + "/_git/" + self.repo_slug + "/commit/" + last.commit_id

|

||||||

return url

|

return url

|

||||||

|

|

||||||

|

def get_linked_work_items(self) -> list:

|

||||||

|

"""

|

||||||

|

Get linked work items from the PR.

|

||||||

|

"""

|

||||||

|

try:

|

||||||

|

work_items = self.azure_devops_client.get_pull_request_work_item_refs(

|

||||||

|

project=self.workspace_slug,

|

||||||

|

repository_id=self.repo_slug,

|

||||||

|

pull_request_id=self.pr_num,

|

||||||

|

)

|

||||||

|

ids = [work_item.id for work_item in work_items]

|

||||||

|

if not work_items:

|

||||||

|

return []

|

||||||

|

items = self.get_work_items(ids)

|

||||||

|

return items

|

||||||

|

except Exception as e:

|

||||||

|

get_logger().exception(f"Failed to get linked work items, error: {e}")

|

||||||

|

return []

|

||||||

|

|

||||||

|

def get_work_items(self, work_item_ids: list) -> list:

|

||||||

|

"""

|

||||||

|

Get work items by their IDs.

|

||||||

|

"""

|

||||||

|

try:

|

||||||

|

raw_work_items = self.azure_devops_board_client.get_work_items(

|

||||||

|

project=self.workspace_slug,

|

||||||

|

ids=work_item_ids,

|

||||||

|

)

|

||||||

|

work_items = []

|

||||||

|

for item in raw_work_items:

|

||||||

|

work_items.append(

|

||||||

|

{

|

||||||

|

"id": item.id,

|

||||||

|

"title": item.fields.get("System.Title", ""),

|

||||||

|

"url": item.url,

|

||||||

|

"body": item.fields.get("System.Description", ""),

|

||||||

|

"acceptance_criteria": item.fields.get(

|

||||||

|

"Microsoft.VSTS.Common.AcceptanceCriteria", ""

|

||||||

|

),

|

||||||

|

"tags": item.fields.get("System.Tags", "").split("; ") if item.fields.get("System.Tags") else [],

|

||||||

|

}

|

||||||

|

)

|

||||||

|

return work_items

|

||||||

|

except Exception as e:

|

||||||

|

get_logger().exception(f"Failed to get work items, error: {e}")

|

||||||

|

return []

|

||||||

|

|||||||

38

pr_agent/servers/atlassian-connect-qodo-merge.json

Normal file

@ -0,0 +1,38 @@

|

|||||||

|

{

|

||||||

|

"name": "Qodo Merge",

|

||||||

|

"description": "Qodo Merge",

|

||||||

|

"key": "app_key",

|

||||||

|

"vendor": {

|

||||||

|

"name": "Qodo",

|

||||||

|

"url": "https://qodo.ai"

|

||||||

|

},

|

||||||

|

"authentication": {

|

||||||

|

"type": "jwt"

|

||||||

|

},

|

||||||

|

"baseUrl": "base_url",

|

||||||

|

"lifecycle": {

|

||||||

|

"installed": "/installed",

|

||||||

|

"uninstalled": "/uninstalled"

|

||||||

|

},

|

||||||

|

"scopes": [

|

||||||

|

"account",

|

||||||

|

"repository:write",

|

||||||

|

"pullrequest:write",

|

||||||

|

"wiki"

|

||||||

|

],

|

||||||

|

"contexts": [

|

||||||

|

"account"

|

||||||

|

],

|

||||||

|

"modules": {

|

||||||

|

"webhooks": [

|

||||||

|

{

|

||||||

|

"event": "*",

|

||||||

|

"url": "/webhook"

|

||||||

|

}

|

||||||

|

]

|

||||||

|

},

|

||||||

|

"links": {

|

||||||

|

"privacy": "https://qodo.ai/privacy-policy",

|

||||||

|

"terms": "https://qodo.ai/terms"

|

||||||

|

}

|

||||||

|

}

|

||||||

@ -234,6 +234,9 @@ async def gitlab_webhook(background_tasks: BackgroundTasks, request: Request):

|

|||||||

get_logger().info(f"Skipping draft MR: {url}")

|

get_logger().info(f"Skipping draft MR: {url}")

|

||||||

return JSONResponse(status_code=status.HTTP_200_OK, content=jsonable_encoder({"message": "success"}))

|

return JSONResponse(status_code=status.HTTP_200_OK, content=jsonable_encoder({"message": "success"}))

|

||||||

|

|

||||||

|