Mirror of https://github.com/qodo-ai/pr-agent.git

synced 2025-07-03 04:10:49 +08:00

Compare commits

331 Commits

| SHA1 |
|---|
| d4d9a7f8b4 |
| c14c49727f |
| 292a5015d6 |
| 6776f7c296 |
| 7287a94e88 |
| e2cf1d0068 |
| 8ada3111ec |
| 9c9611e81a |
| 4fb93e3b62 |
| 5a27e1dd7e |
| 6e6151d201 |
| e468efb53e |
| 95e1ebada1 |
| d74c867eca |
| 2448281a45 |
| 9e063bf48a |
| 5432469ef6 |
| 2c496b9d4e |
| 5ac41dddd6 |
| 9df554ed1c |
| cf14e45674 |
| 1c51b5b762 |
| e5715e12cb |
| 578d7c69f8 |
| 29c50758bc |
| 97b48da03b |
| 4203ee4ca8 |
| 84dc976ebb |
| d9571ee7cb |
| 7373ed36e6 |
| cdf13925b0 |
| c2f52539aa |
| 0442cdcd3d |
| 93773f3c08 |
| 53a974c282 |
| c9ed271eaf |
| 6a5ff2fa3b |
| 25d661c152 |
| d20c9c6c94 |
| d1d861e163 |
| 033db1015e |
| abf2f68c61 |
| 441e098e2a |
| 2bbf4b366e |
| b9d096187a |
| ce156751e8 |
| dae87d7da8 |
| a99ebf8953 |
| 2a9e3ee1ef |
| 2beefab89a |
| 415f44d763 |
| 8fb9b8ed3e |
| 4f1dccf67b |
| 3778cc2745 |
| 8793f8d9b0 |
| 61837c69a3 |
| ffaf5d5271 |
| cd526a233c |
| 745e955d1f |
| 771d0b8c60 |
| 91a7c08546 |
| 4d9d6f7477 |
| 2591a5d6c1 |
| 772499fce1 |
| d467f5a7fd |
| 2d5b060168 |
| b7eb6be5a0 |
| df57367426 |
| 660a60924e |
| 8aa76a0ac5 |
| b034d16c23 |
| 9bec97c66c |
| 8fd8d298e7 |
| 2e186ebae8 |
| fc40ca9196 |
| 91a8938a37 |
| d97e1862da |
| f042c061de |
| c47afd9c0d |
| c6d16ced07 |
| e9535ea164 |
| dc8a4be2d4 |
| f9de8f283b |
| bd5c19ee05 |
| 7cbe797108 |
| 435d9d41c8 |
| a510d93e6e |
| 48cc2f6833 |
| 229d7b34c7 |
| 03b194c337 |
| a6f772c6d5 |
| ba1ba98dec |
| 5954c7cec2 |
| dc1a8e8314 |
| aa87bc60f6 |
| c76aabc71e |
| 81081186d9 |
| 4a71ec90c6 |
| 3456c8e039 |
| 402a388be0 |
| 4e26c02b01 |
| ea4f88edd3 |
| 217f615dfb |
| a6fb351789 |
| bfab660414 |
| 2e63653bb0 |
| b9df034c97 |
| bae8d36698 |
| 67a04e1cb2 |
| 4fea780b9b |
| 01c18d7d98 |
| f4b06640d2 |
| f1981092d3 |
| 8414e109c5 |
| 8adfca5b3c |
| 672cdc03ab |
| 86a9cfedc8 |
| 7ac9f27b70 |
| c97c39d57d |
| a3b3d6c77a |
| 2e41701d07 |
| 578f56148a |
| b3da84b4aa |
| f89bdcf3c3 |
| e7e3970874 |
| 1f7a8eada0 |
| 38638bd1c4 |
| 26f3bd8900 |
| a2fb415c53 |
| 8038eaf876 |
| d8572f8d13 |
| 78b11c80c7 |
| cb65b05e85 |
| 1aa6dd9b5d |
| 11d69e05aa |
| 0722af4702 |
| 99e99345b2 |
| 5252e1826d |
| a18a0bf2e3 |
| 396d11aa45 |
| 4a38861d06 |
| 5feb66597e |
| 8589941ffe |
| 7f0e6aeb37 |
| 8a768aa7fd |
| f399f9ebe4 |
| cc73d4599b |
| 4228f92e7e |
| 1f4ab43fa6 |
| b59111e4a6 |
| 70da871876 |
| 9c1ab06491 |
| 5c4bc0a008 |
| ef37271ce9 |
| 8dd4c15d4b |
| f9afada1ed |
| 4c1c313031 |
| 1f126069b1 |
| 12742ef499 |
| 63e921a2c5 |
| 8f04387331 |
| a06670bc27 |
| 2525392814 |
| 23aa2a9388 |
| e85b75fe64 |
| df04a7e046 |
| 9c3f080112 |
| ed65493718 |
| 983233c193 |
| 7438190ed1 |
| 2b2b851cb9 |
| 5701816b2e |
| 40a25a1082 |
| e238a88824 |
| 61bdfd3b99 |
| c00d1e9858 |
| 1a8b143f58 |
| dfbe7432b8 |
| ab69f1769b |
| 089210d9fa |
| 0f9d89c67a |
| 84b80f792d |
| 219d962cbe |
| e531245f4a |
| 89e9413d75 |
| b370cb6ae7 |
| 4201779ce2 |
| 71f7c09ed7 |
| edad244a86 |
| 9752987966 |
| 200da44e5a |
| 4c0fd37ac2 |
| c996c7117f |
| 943ba95924 |
| 8a75d3101d |
| 944f54b431 |
| 9be5cc6dec |
| 884286ebf1 |
| 620dbbeb1a |
| c07059e139 |
| 3b88d6afdb |
| e717e8ae81 |
| 8ec1fb5937 |
| cb10ceadd7 |
| 96d3f3cc0b |
| a98d972041 |
| 09a1d74a00 |
| 31f6f8f8ea |
| e7c99f0e6f |
| ac53e6728d |
| b100e7098a |
| 2b77d07725 |
| ee1676cf7e |
| 3420e6f30d |
| 85cc0ad08c |
| 3756b547da |
| e34bcace29 |
| 2a675b80ca |
| 1cefd23739 |
| aef9a04b32 |
| fe4e642a47 |
| 039d85b836 |
| 0fa342ddd2 |
| c95a8cde72 |
| 23ec25c949 |
| 9560bc1b44 |
| 346ea8fbae |
| d671c78233 |
| 240e0374e7 |
| 288e9bb8ca |
| d8545a2b28 |
| 95f23de7ec |
| 0390a85f5a |
| 172d0c0358 |
| 41588efe9a |
| f50832e19b |
| 927f124dca |
| 232b540f60 |
| 452eda25cd |
| 110e593d03 |
| af84409c1d |
| c2c69f2950 |
| e946a0ea9f |
| 866476080c |
| 27d6560de8 |
| 6ba7b3eea2 |
| 86d9612882 |
| 49f608c968 |
| 11f85cad62 |
| 5f5257d195 |
| 495e2ccb7d |
| a176adb23e |
| 68ef11a2fc |
| 38c38ec280 |
| 3904eebf85 |
| 778d7ce1ed |
| 3067afbcb3 |
| 70f7a90226 |
| 7eadb45c09 |
| ac247dbc2c |
| 3a77652660 |
| 0bd4c9b78a |
| 81d07a55d7 |
| 652ced5406 |
| aaf037570b |
| cfa565b5d7 |
| c8819472cf |
| 53744af32f |
| 41c6502190 |
| 32604d8103 |
| 581c95c4ab |
| 789c48a216 |
| 6b9de6b253 |
| 003846a90d |
| d088f9c19a |
| a272c761a9 |
| 9449f2aebe |
| 28ea4a685a |
| b798291bc8 |
| 62df50cf86 |
| 917e1607de |
| 8f11a19c32 |
| 0f5cccd18f |
| 2be459e576 |
| cbdb451c95 |
| 6871193381 |
| 8a7f3501ea |
| 80bbe23ad5 |
| 05f3fa5ebc |
| 1b2a2075ae |
| 3d3b49e3ee |
| 174b4b76eb |
| 2b28153749 |
| 6151bfac25 |
| 5d6e1de157 |
| ce35d2c313 |
| b51abe9af7 |
| 20206af1bf |
| 34ae1f1ab6 |
| 887283632b |
| 7f84b5738e |
| dc917587ef |
| b2710ec029 |
| 41c48ca5b5 |
| e0012702c6 |
| dfb339ab44 |
| 54947573bf |
| 228ceff3a6 |
| 8766140554 |
| 034ec8f53a |
| eccd00b86f |
| 4b351cfe38 |
| 734a027702 |
| d0948329d3 |
| 6135bf1f53 |
| ea9deccb91 |
| daa68f3b2f |
| e82430891c |
| 19ca7f887a |
| 888306c160 |
| 3ef4daafd5 |
| f76f750757 |
| 055bc4ceec |
| 487efa4bf4 |
| 050ffcdd06 |
| 8f9879cf01 |
| c3fac86067 |
| 9a57d00951 |
| 745d0c537c |
| 5b594dadee |
| 4246792261 |

2

.github/workflows/build-and-test.yaml

vendored

@ -36,6 +36,6 @@ jobs:

- id: test

name: Test dev docker

run: |

docker run --rm codiumai/pr-agent:test pytest -v

docker run --rm codiumai/pr-agent:test pytest -v tests/unittest

54

.github/workflows/code_coverage.yaml

vendored

Normal file

@ -0,0 +1,54 @@

name: Code-coverage

on:

workflow_dispatch:

# push:

# branches:

# - main

pull_request:

branches:

- main

jobs:

build-and-test:

runs-on: ubuntu-latest

steps:

- id: checkout

uses: actions/checkout@v2

- id: dockerx

name: Setup Docker Buildx

uses: docker/setup-buildx-action@v2

- id: build

name: Build dev docker

uses: docker/build-push-action@v2

with:

context: .

file: ./docker/Dockerfile

push: false

load: true

tags: codiumai/pr-agent:test

cache-from: type=gha,scope=dev

cache-to: type=gha,mode=max,scope=dev

target: test

- id: code_cov

name: Test dev docker

run: |

docker run --name test_container codiumai/pr-agent:test pytest tests/unittest --cov=pr_agent --cov-report term --cov-report xml:coverage.xml

docker cp test_container:/app/coverage.xml coverage.xml

docker rm test_container

- name: Validate coverage report

run: |

if [ ! -f coverage.xml ]; then

echo "Coverage report not found"

exit 1

fi

- name: Upload coverage to Codecov

uses: codecov/codecov-action@v4.0.1

with:

token: ${{ secrets.CODECOV_TOKEN }}
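The "Validate coverage report" step only checks that `coverage.xml` exists. A slightly stricter check could also confirm that the file parses and read its overall line rate. The sketch below assumes the report is the Cobertura-style XML that `pytest --cov-report xml` produces, with a `line-rate` attribute on the root `<coverage>` element; the helper name `read_line_rate` is hypothetical and is not part of the workflow.

```python
import xml.etree.ElementTree as ET
from pathlib import Path


def read_line_rate(path: str = "coverage.xml") -> float:
    """Return the overall line rate (0.0 to 1.0) from a Cobertura-style XML report.

    Raises FileNotFoundError when the report is missing (the same condition the
    workflow's `[ ! -f coverage.xml ]` guard catches) and ValueError when the file
    parses but does not look like a coverage report.
    """
    report = Path(path)
    if not report.is_file():
        raise FileNotFoundError(f"Coverage report not found: {path}")
    root = ET.parse(str(report)).getroot()
    if root.tag != "coverage" or "line-rate" not in root.attrib:
        raise ValueError(f"{path} does not look like a Cobertura coverage report")
    return float(root.attrib["line-rate"])
```

Run after the `docker cp` step, something like `print(f"{read_line_rate():.1%}")` would print the percentage; malformed XML surfaces as `xml.etree.ElementTree.ParseError` rather than being silently accepted.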

46

.github/workflows/e2e_tests.yaml

vendored

Normal file

@ -0,0 +1,46 @@

name: PR-Agent E2E tests

on:

workflow_dispatch:

# schedule:

# - cron: '0 0 * * *' # This cron expression runs the workflow every night at midnight UTC

jobs:

pr_agent_job:

runs-on: ubuntu-latest

name: PR-Agent E2E GitHub App Test

steps:

- name: Checkout repository

uses: actions/checkout@v2

- name: Setup Docker Buildx

uses: docker/setup-buildx-action@v2

- id: build

name: Build dev docker

uses: docker/build-push-action@v2

with:

context: .

file: ./docker/Dockerfile

push: false

load: true

tags: codiumai/pr-agent:test

cache-from: type=gha,scope=dev

cache-to: type=gha,mode=max,scope=dev

target: test

- id: test1

name: E2E test github app

run: |

docker run -e GITHUB.USER_TOKEN=${{ secrets.TOKEN_GITHUB }} --rm codiumai/pr-agent:test pytest -v tests/e2e_tests/test_github_app.py

- id: test2

name: E2E gitlab webhook

run: |

docker run -e gitlab.PERSONAL_ACCESS_TOKEN=${{ secrets.TOKEN_GITLAB }} --rm codiumai/pr-agent:test pytest -v tests/e2e_tests/test_gitlab_webhook.py

- id: test3

name: E2E bitbucket app

run: |

docker run -e BITBUCKET.USERNAME=${{ secrets.BITBUCKET_USERNAME }} -e BITBUCKET.PASSWORD=${{ secrets.BITBUCKET_PASSWORD }} --rm codiumai/pr-agent:test pytest -v tests/e2e_tests/test_bitbucket_app.py
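The E2E steps pass credentials as `-e SECTION.KEY=value` (for example `GITHUB.USER_TOKEN` and `gitlab.PERSONAL_ACCESS_TOKEN`). PR-Agent's own settings loader is what actually consumes these variables; the sketch below only illustrates the idea of folding a dotted variable name into a nested settings section. It assumes section and key names are case-insensitive, and the helper `nest_dotted_env` is hypothetical, not PR-Agent's implementation.

```python
from typing import Dict, Mapping


def nest_dotted_env(env: Mapping[str, str]) -> Dict[str, Dict[str, str]]:
    """Group `SECTION.KEY=value` variables into {section: {key: value}}.

    Names are lower-cased (an assumption for illustration), so
    `GITHUB.USER_TOKEN` and `github.user_token` land in the same place.
    Variables that do not contain exactly one dot are ignored.
    """
    settings: Dict[str, Dict[str, str]] = {}
    for name, value in env.items():
        parts = name.split(".")
        if len(parts) != 2 or not all(parts):
            continue
        section, key = (part.lower() for part in parts)
        settings.setdefault(section, {})[key] = value
    return settings
```

For instance, passing `{"GITHUB.USER_TOKEN": "ghp_placeholder", "PATH": "/usr/bin"}` yields `{"github": {"user_token": "ghp_placeholder"}}`; ordinary variables such as `PATH` are left out.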

@ -1,3 +1,6 @@

[pr_reviewer]

enable_review_labels_effort = true

enable_auto_approval = true

[config]

model="claude-3-5-sonnet"

141

README.md

@ -10,7 +10,7 @@

</picture>

<br/>

CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedbacks and suggestions

CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedback and suggestions

</div>

[](https://github.com/Codium-ai/pr-agent/blob/main/LICENSE)

@ -18,6 +18,7 @@ CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by p

[](https://pr-agent-docs.codium.ai/finetuning_benchmark/)

[](https://discord.com/channels/1057273017547378788/1126104260430528613)

[](https://twitter.com/codiumai)

[](https://www.codium.ai/images/pr_agent/cheat_sheet.pdf)

<a href="https://github.com/Codium-ai/pr-agent/commits/main">

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

</a>

@ -36,31 +37,36 @@ CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by p

- [Overview](#overview)

- [Example results](#example-results)

- [Try it now](#try-it-now)

- [PR-Agent Pro 💎](#pr-agent-pro-)

- [PR-Agent Pro 💎](https://pr-agent-docs.codium.ai/overview/pr_agent_pro/)

- [How it works](#how-it-works)

- [Why use PR-Agent?](#why-use-pr-agent)

## News and Updates

### July 4, 2024

### August 26, 2024

Added improved support for claude-sonnet-3.5 model (anthropic, vertex, bedrock), including dedicated prompts.

New version of [PR Agent Chrom Extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl) was released, with full support of context-aware **PR Chat**. This novel feature is free to use for any open-source repository. See more details in [here](https://pr-agent-docs.codium.ai/chrome-extension/#pr-chat).

### June 17, 2024

<kbd><img src="https://www.codium.ai/images/pr_agent/pr_chat_1.png" width="768"></kbd>

New option for a self-review checkbox is now available for the `/improve` tool, along with the ability(💎) to enable auto-approve, or demand self-review in addition to human reviewer. See more [here](https://pr-agent-docs.codium.ai/tools/improve/#self-review).

<kbd><img src="https://www.codium.ai/images/pr_agent/pr_chat_2.png" width="768"></kbd>

<kbd><img src="https://www.codium.ai/images/pr_agent/self_review_1.png" width="512"></kbd>

### June 6, 2024

### August 11, 2024

Increased PR context size for improved results, and enabled [asymmetric context](https://github.com/Codium-ai/pr-agent/pull/1114/files#diff-9290a3ad9a86690b31f0450b77acd37ef1914b41fabc8a08682d4da433a77f90R69-R70)

New option now available (💎) - **apply suggestions**:

### August 10, 2024

Added support for [Azure devops pipeline](https://pr-agent-docs.codium.ai/installation/azure/) - you can now easily run PR-Agent as an Azure devops pipeline, without needing to set up your own server.

<kbd><img src="https://www.codium.ai/images/pr_agent/apply_suggestion_1.png" width="512"></kbd>

→

### August 5, 2024

Added support for [GitLab pipeline](https://pr-agent-docs.codium.ai/installation/gitlab/#run-as-a-gitlab-pipeline) - you can now run easily PR-Agent as a GitLab pipeline, without needing to set up your own server.

<kbd><img src="https://www.codium.ai/images/pr_agent/apply_suggestion_2.png" width="512"></kbd>

### July 28, 2024

(1) improved support for bitbucket server - [auto commands](https://github.com/Codium-ai/pr-agent/pull/1059) and [direct links](https://github.com/Codium-ai/pr-agent/pull/1061)

(2) custom models are now [supported](https://pr-agent-docs.codium.ai/usage-guide/changing_a_model/#custom-models)

@ -69,40 +75,40 @@ New option now available (💎) - **apply suggestions**:

Supported commands per platform:

| | | GitHub | Gitlab | Bitbucket | Azure DevOps |
|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:--------------------:|
| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Incremental | ✅ | | | |
| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance) 💎 | ✅ | ✅ | ✅ | ✅ |
| | Describe | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Inline File Summary](https://pr-agent-docs.codium.ai/tools/describe#inline-file-summary) 💎 | ✅ | | | |
| | Improve | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |
| | Ask | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Ask on code lines](https://pr-agent-docs.codium.ai/tools/ask#ask-lines) | ✅ | ✅ | | |
| | [Custom Prompt](https://pr-agent-docs.codium.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | ✅ |
| | [Test](https://pr-agent-docs.codium.ai/tools/test/) 💎 | ✅ | ✅ | | ✅ |
| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |
| | Update CHANGELOG.md | ✅ | ✅ | ✅ | ✅ |
| | Find Similar Issue | ✅ | | | |
| | [Add PR Documentation](https://pr-agent-docs.codium.ai/tools/documentation/) 💎 | ✅ | ✅ | | ✅ |
| | [Custom Labels](https://pr-agent-docs.codium.ai/tools/custom_labels/) 💎 | ✅ | ✅ | | ✅ |
| | [Analyze](https://pr-agent-docs.codium.ai/tools/analyze/) 💎 | ✅ | ✅ | | ✅ |
| | [CI Feedback](https://pr-agent-docs.codium.ai/tools/ci_feedback/) 💎 | ✅ | | | |
| | [Similar Code](https://pr-agent-docs.codium.ai/tools/similar_code/) 💎 | ✅ | | | |
| | | | | | |
| USAGE | CLI | ✅ | ✅ | ✅ | ✅ |
| | App / webhook | ✅ | ✅ | ✅ | ✅ |
| | Tagging bot | ✅ | | | |
| | Actions | ✅ | | ✅ | |
| | | | | | |
| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |
| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | Multiple models support | ✅ | ✅ | ✅ | ✅ |
| | [Static code analysis](https://pr-agent-docs.codium.ai/core-abilities/#static-code-analysis) 💎 | ✅ | ✅ | ✅ | ✅ |
| | [Global and wiki configurations](https://pr-agent-docs.codium.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | ✅ |
| | [PR interactive actions](https://www.codium.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | | | |

| | | GitHub | Gitlab | Bitbucket | Azure DevOps |
|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:------------:|
| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Incremental | ✅ | | | |
| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance) 💎 | ✅ | ✅ | ✅ | |
| | Describe | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Inline File Summary](https://pr-agent-docs.codium.ai/tools/describe#inline-file-summary) 💎 | ✅ | | | |
| | Improve | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |
| | Ask | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Ask on code lines](https://pr-agent-docs.codium.ai/tools/ask#ask-lines) | ✅ | ✅ | | |
| | [Custom Prompt](https://pr-agent-docs.codium.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | |
| | [Test](https://pr-agent-docs.codium.ai/tools/test/) 💎 | ✅ | ✅ | | |
| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |
| | Update CHANGELOG.md | ✅ | ✅ | ✅ | ✅ |
| | Find Similar Issue | ✅ | | | |
| | [Add PR Documentation](https://pr-agent-docs.codium.ai/tools/documentation/) 💎 | ✅ | ✅ | | |
| | [Custom Labels](https://pr-agent-docs.codium.ai/tools/custom_labels/) 💎 | ✅ | ✅ | | |
| | [Analyze](https://pr-agent-docs.codium.ai/tools/analyze/) 💎 | ✅ | ✅ | | |
| | [CI Feedback](https://pr-agent-docs.codium.ai/tools/ci_feedback/) 💎 | ✅ | | | |
| | [Similar Code](https://pr-agent-docs.codium.ai/tools/similar_code/) 💎 | ✅ | | | |
| | | | | | |
| USAGE | CLI | ✅ | ✅ | ✅ | ✅ |
| | App / webhook | ✅ | ✅ | ✅ | ✅ |
| | Tagging bot | ✅ | | | |
| | Actions | ✅ |✅| ✅ |✅|
| | | | | | |
| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |
| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | Multiple models support | ✅ | ✅ | ✅ | ✅ |
| | [Static code analysis](https://pr-agent-docs.codium.ai/core-abilities/#static-code-analysis) 💎 | ✅ | ✅ | ✅ | |
| | [Global and wiki configurations](https://pr-agent-docs.codium.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | |
| | [PR interactive actions](https://www.codium.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | ✅ | | |

- 💎 means this feature is available only in [PR-Agent Pro](https://www.codium.ai/pricing/)

[//]: # (- Support for additional git providers is described in [here](./docs/Full_environments.md))
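In this flattened view the two versions of the "Supported commands per platform" table appear back to back without +/- markers, so it is hard to see which cells changed. The sketch below compares two versions of the same Markdown row cell by cell. It assumes this table's layout (a section label, a feature name, then GitHub, Gitlab, Bitbucket and Azure DevOps columns) and that the first table is the pre-change version, since diff views normally list removed lines first; `changed_platforms` is a hypothetical helper.

```python
from typing import List

PLATFORMS = ["GitHub", "Gitlab", "Bitbucket", "Azure DevOps"]


def split_row(row: str) -> List[str]:
    """Split a Markdown table row into stripped cell values, dropping the outer pipes."""
    return [cell.strip() for cell in row.strip().strip("|").split("|")]


def changed_platforms(old_row: str, new_row: str) -> List[str]:
    """Return the platform columns whose cell differs between two versions of a row."""
    # The first two cells are the section label and the feature name.
    old_marks = split_row(old_row)[2:]
    new_marks = split_row(new_row)[2:]
    return [name for name, a, b in zip(PLATFORMS, old_marks, new_marks) if a != b]
```

Applied to the two "Actions" rows, it reports `["Gitlab", "Azure DevOps"]`, which lines up with the GitLab and Azure DevOps pipeline announcements in the News hunk.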

@ -221,7 +227,11 @@ For example, add a comment to any pull request with the following text:

```
@CodiumAI-Agent /review
```

and the agent will respond with a review of your PR

and the agent will respond with a review of your PR.

Note that this is a promotional bot, suitable only for initial experimentation.

It does not have 'edit' access to your repo, for example, so it cannot update the PR description or add labels (`@CodiumAI-Agent /describe` will publish PR description as a comment). In addition, the bot cannot be used on private repositories, as it does not have access to the files there.
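The README explains that commenting `@CodiumAI-Agent /review` on a pull request triggers a review. The following is a minimal sketch of how a webhook handler might pull the command and its arguments out of such a comment; the regular expression, the function name `parse_mention_command`, and the return shape are all hypothetical and are not PR-Agent's actual parsing code.

```python
import re
import shlex
from typing import List, Optional, Tuple

# Hypothetical pattern: the bot handle, whitespace, a slash command, then optional arguments.
MENTION_RE = re.compile(r"@CodiumAI-Agent\s+(/[\w-]+)(.*)", re.IGNORECASE)


def parse_mention_command(comment: str) -> Optional[Tuple[str, List[str]]]:
    """Return (command, args) for the first line that addresses the bot, else None."""
    for line in comment.splitlines():
        match = MENTION_RE.search(line)
        if match:
            command = match.group(1).lower()
            # shlex keeps quoted arguments together, e.g. a question passed to /ask.
            args = shlex.split(match.group(2))
            return command, args
    return None
```

With this sketch, `'@CodiumAI-Agent /ask "What does this PR change?"'` parses to `("/ask", ["What does this PR change?"])`; unbalanced quotes would make `shlex.split` raise `ValueError`, which a real handler would need to catch.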

@ -231,43 +241,6 @@ Note that when you set your own PR-Agent or use CodiumAI hosted PR-Agent, there

---

[//]: # (## Installation)

[//]: # (To use your own version of PR-Agent, you first need to acquire two tokens:)

[//]: # ()

[//]: # (1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.)

[//]: # (2. A GitHub personal access token (classic) with the repo scope.)

[//]: # ()

[//]: # (There are several ways to use PR-Agent:)

[//]: # ()

[//]: # (**Locally**)

[//]: # (- [Using pip package](https://pr-agent-docs.codium.ai/installation/locally/#using-pip-package))

[//]: # (- [Using Docker image](https://pr-agent-docs.codium.ai/installation/locally/#using-docker-image))

[//]: # (- [Run from source](https://pr-agent-docs.codium.ai/installation/locally/#run-from-source))

[//]: # ()

[//]: # (**GitHub specific methods**)

[//]: # (- [Run as a GitHub Action](https://pr-agent-docs.codium.ai/installation/github/#run-as-a-github-action))

[//]: # (- [Run as a GitHub App](https://pr-agent-docs.codium.ai/installation/github/#run-as-a-github-app))

[//]: # ()

[//]: # (**GitLab specific methods**)

[//]: # (- [Run a GitLab webhook server](https://pr-agent-docs.codium.ai/installation/gitlab/))

[//]: # ()

[//]: # (**BitBucket specific methods**)

[//]: # (- [Run as a Bitbucket Pipeline](https://pr-agent-docs.codium.ai/installation/bitbucket/))

## PR-Agent Pro 💎

[PR-Agent Pro](https://www.codium.ai/pricing/) is a hosted version of PR-Agent, provided by CodiumAI. It is available for a monthly fee, and provides the following benefits:

5

codecov.yml

Normal file

@ -0,0 +1,5 @@

comment: false

coverage:

status:

patch: false

project: false

@ -1,4 +1,4 @@

FROM python:3.10 as base

FROM python:3.12.3 AS base

WORKDIR /app

ADD pyproject.toml .

@ -6,36 +6,36 @@ ADD requirements.txt .

RUN pip install . && rm pyproject.toml requirements.txt

ENV PYTHONPATH=/app

FROM base as github_app

FROM base AS github_app

ADD pr_agent pr_agent

CMD ["python", "-m", "gunicorn", "-k", "uvicorn.workers.UvicornWorker", "-c", "pr_agent/servers/gunicorn_config.py", "--forwarded-allow-ips", "*", "pr_agent.servers.github_app:app"]

FROM base as bitbucket_app

FROM base AS bitbucket_app

ADD pr_agent pr_agent

CMD ["python", "pr_agent/servers/bitbucket_app.py"]

FROM base as bitbucket_server_webhook

FROM base AS bitbucket_server_webhook

ADD pr_agent pr_agent

CMD ["python", "pr_agent/servers/bitbucket_server_webhook.py"]

FROM base as github_polling

FROM base AS github_polling

ADD pr_agent pr_agent

CMD ["python", "pr_agent/servers/github_polling.py"]

FROM base as gitlab_webhook

FROM base AS gitlab_webhook

ADD pr_agent pr_agent

CMD ["python", "pr_agent/servers/gitlab_webhook.py"]

FROM base as azure_devops_webhook

FROM base AS azure_devops_webhook

ADD pr_agent pr_agent

CMD ["python", "pr_agent/servers/azuredevops_server_webhook.py"]

FROM base as test

FROM base AS test

ADD requirements-dev.txt .

RUN pip install -r requirements-dev.txt && rm requirements-dev.txt

ADD pr_agent pr_agent

ADD tests tests

FROM base as cli

FROM base AS cli

ADD pr_agent pr_agent

ENTRYPOINT ["python", "pr_agent/cli.py"]
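Apart from the base-image bump to `python:3.12.3`, most of this Dockerfile hunk changes `FROM base as X` to `FROM base AS X`, so the `AS` keyword matches the upper-case `FROM`. Docker's build checks include a rule for exactly this (`FromAsCasing`). The sketch below is an independent, simplified re-implementation for illustration only: it assumes one instruction per line, no line continuations, and treats "consistent" as both keywords upper-case or both lower-case. `find_from_as_casing` is a hypothetical name.

```python
import re
from typing import List, Tuple

# `FROM [--flag=value ...] <image> AS <name>` in any casing; groups capture the two keywords.
FROM_AS_RE = re.compile(r"^\s*(from)\s+(?:--\S+\s+)*\S+\s+(as)\s+\S+", re.IGNORECASE)


def find_from_as_casing(dockerfile: str) -> List[Tuple[int, str]]:
    """Return (line_number, line) pairs where the FROM and AS keywords differ in casing."""
    problems = []
    for lineno, line in enumerate(dockerfile.splitlines(), start=1):
        match = FROM_AS_RE.match(line)
        if not match:
            continue
        from_kw, as_kw = match.group(1), match.group(2)
        consistent = (from_kw.isupper() and as_kw.isupper()) or (
            from_kw.islower() and as_kw.islower()
        )
        if not consistent:
            problems.append((lineno, line.strip()))
    return problems
```

Run against the pre-change lines, this flags every `FROM ... as ...` stage; against the post-change lines it returns an empty list.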

5

docs/docs/chrome-extension/data_privacy.md

Normal file

@ -0,0 +1,5 @@

We take your code's security and privacy seriously:

- The Chrome extension will not send your code to any external servers.

- For private repositories, we will first validate the user's identity and permissions. After authentication, we generate responses using the existing PR-Agent Pro integration.

47

docs/docs/chrome-extension/features.md

Normal file

@ -0,0 +1,47 @@

### PR Chat

The PR-Chat feature allows to freely chat with your PR code, within your GitHub environment.

It will seamlessly add the PR code as context to your chat session, and provide AI-powered feedback.

To enable private chat, simply install the PR-Agent Chrome extension. After installation, each PR's file-changed tab will include a chat box, where you may ask questions about your code.

This chat session is **private**, and won't be visible to other users.

All open-source repositories are supported.

For private repositories, you will also need to install PR-Agent Pro, After installation, make sure to open at least one new PR to fully register your organization. Once done, you can chat with both new and existing PRs across all installed repositories.

<img src="https://codium.ai/images/pr_agent/pr_chat1.png" width="768">

<img src="https://codium.ai/images/pr_agent/pr_chat2.png" width="768">

### Toolbar extension

With PR-Agent Chrome extension, it's [easier than ever](https://www.youtube.com/watch?v=gT5tli7X4H4) to interactively configure and experiment with the different tools and configuration options.

For private repositories, after you found the setup that works for you, you can also easily export it as a persistent configuration file, and use it for automatic commands.

<img src="https://codium.ai/images/pr_agent/toolbar1.png" width="512">

<img src="https://codium.ai/images/pr_agent/toolbar2.png" width="512">

### PR-Agent filters

PR-Agent filters is a sidepanel option. that allows you to filter different message in the conversation tab.

For example, you can choose to present only message from PR-Agent, or filter those messages, focusing only on user's comments.

<img src="https://codium.ai/images/pr_agent/pr_agent_filters1.png" width="256">

<img src="https://codium.ai/images/pr_agent/pr_agent_filters2.png" width="256">

### Enhanced code suggestions

PR-Agent Chrome extension adds the following capabilities to code suggestions tool's comments:

- Auto-expand the table when you are viewing a code block, to avoid clipping.

- Adding a "quote-and-reply" button, that enables to address and comment on a specific suggestion (for example, asking the author to fix the issue)

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion1.png" width="512">

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion2.png" width="512">
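The "PR-Agent filters" side panel can show only PR-Agent's messages in the conversation tab, or hide them and keep only other users' comments. The extension's internals are not described in these docs, so the following is a hypothetical sketch of that filtering logic over a list of comments. It assumes each comment carries an author login and that PR-Agent's messages can be recognized by a known set of bot logins; the login below is a placeholder.

```python
from dataclasses import dataclass
from typing import Iterable, List, Literal

# Placeholder bot login; the real account name depends on how PR-Agent is installed.
BOT_LOGINS = {"pr-agent-bot"}


@dataclass
class Comment:
    author: str
    body: str


def is_agent(comment: Comment) -> bool:
    """True if the comment was posted by one of the (assumed) PR-Agent bot accounts."""
    return comment.author.lower() in BOT_LOGINS


def filter_comments(
    comments: Iterable[Comment], mode: Literal["agent_only", "users_only"]
) -> List[Comment]:
    """Keep only PR-Agent messages ("agent_only") or only human comments ("users_only")."""
    if mode == "agent_only":
        return [c for c in comments if is_agent(c)]
    if mode == "users_only":
        return [c for c in comments if not is_agent(c)]
    raise ValueError(f"unknown mode: {mode}")
```

Both modes preserve the original comment order, so switching filters does not reshuffle the conversation.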

@ -1,49 +1,8 @@

|

||||

## PR-Agent chrome extension

|

||||

PR-Agent Chrome extension is a collection of tools that integrates seamlessly with your GitHub environment, aiming to enhance your PR-Agent usage experience, and providing additional features.

|

||||

[PR-Agent Chrome extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl) is a collection of tools that integrates seamlessly with your GitHub environment, aiming to enhance your Git usage experience, and providing AI-powered capabilities to your PRs.

|

||||

|

||||

## Features

|

||||

With a single-click installation you will gain access to a context-aware PR chat with top models, a toolbar extension with multiple AI feedbacks, PR-Agent filters, and additional abilities.

|

||||

|

||||

### Toolbar extension

|

||||

With PR-Agent Chrome extension, it's [easier than ever](https://www.youtube.com/watch?v=gT5tli7X4H4) to interactively configure and experiment with the different tools and configuration options.

|

||||

All the extension's features are free to use on public repositories. For private repositories, you will need to install in addition to the extension [PR-Agent Pro](https://github.com/apps/codiumai-pr-agent-pro) (fast and easy installation with two weeks of trial, no credit card required).

|

||||

|

||||

After you found the setup that works for you, you can also easily export it as a persistent configuration file, and use it for automatic commands.

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/toolbar1.png" width="512">

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/toolbar2.png" width="512">

|

||||

|

||||

### PR-Agent filters

|

||||

|

||||

PR-Agent filters is a sidepanel option. that allows you to filter different message in the conversation tab.

|

||||

|

||||

For example, you can choose to present only message from PR-Agent, or filter those messages, focusing only on user's comments.

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/pr_agent_filters1.png" width="256">

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/pr_agent_filters2.png" width="256">

|

||||

|

||||

|

||||

### Enhanced code suggestions

|

||||

|

||||

PR-Agent Chrome extension adds the following capabilities to code suggestions tool's comments:

|

||||

|

||||

- Auto-expand the table when you are viewing a code block, to avoid clipping.

|

||||

- Adding a "quote-and-reply" button, that enables to address and comment on a specific suggestion (for example, asking the author to fix the issue)

|

||||

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion1.png" width="512">

|

||||

|

||||

<img src="https://codium.ai/images/pr_agent/chrome_extension_code_suggestion2.png" width="512">

|

||||

|

||||

## Installation

|

||||

|

||||

Go to the marketplace and install the extension:

|

||||

[PR-Agent Chrome Extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl)

|

||||

|

||||

## Pre-requisites

The PR-Agent Chrome extension will work on any repo where you have previously [installed PR-Agent](https://pr-agent-docs.codium.ai/installation/).

## Data privacy and security

The PR-Agent Chrome extension only modifies the visual appearance of a GitHub PR screen. It does not transmit any user's repo or pull request code. Code is only sent for processing when a user submits a GitHub comment that activates a PR-Agent tool, in accordance with the standard privacy policy of PR-Agent.
<img src="https://codium.ai/images/pr_agent/pr_chat1.png" width="768">
<img src="https://codium.ai/images/pr_agent/pr_chat2.png" width="768">

@@ -11,6 +11,19 @@
}
}

.md-nav--primary {
position: relative; /* Ensure the element is positioned */
}

.md-nav--primary::before {
content: "";
position: absolute;
top: 0;
right: 10px; /* Move the border 10 pixels to the right */
width: 2px;
height: 100%;
background-color: #f5f5f5; /* Match the border color */
}
/*.md-nav__title, .md-nav__link {*/
/* font-size: 18px;*/
/* margin-top: 14px; !* Adjust the space as needed *!*/

@@ -23,6 +23,7 @@ Here are the results:
| QWEN-1.5-32B | 32 | 29 |
| | | |
| **CodeQwen1.5-7B** | **7** | **35.4** |
| Llama-3.1-8B-Instruct | 8 | 35.2 |
| Granite-8b-code-instruct | 8 | 34.2 |
| CodeLlama-7b-hf | 7 | 31.8 |
| Gemma-7B | 7 | 27.2 |

@@ -14,33 +14,33 @@ PR-Agent offers extensive pull request functionalities across various git provid

| | | GitHub | Gitlab | Bitbucket | Azure DevOps |
|-------|-----------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|
| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Incremental | ✅ | | | |
| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |
| | Ask | ✅ | ✅ | ✅ | ✅ |
| | Describe | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Inline file summary](https://pr-agent-docs.codium.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |
| | Improve | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |
| | [Custom Prompt](./tools/custom_prompt.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |
| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance){:target="_blank"} 💎 | ✅ | ✅ | ✅ | |
| | Ask | ✅ | ✅ | ✅ | ✅ |
| | Describe | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Inline file summary](https://pr-agent-docs.codium.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | |
| | Improve | ✅ | ✅ | ✅ | ✅ |
| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |
| | [Custom Prompt](./tools/custom_prompt.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | |
| | Reflect and Review | ✅ | ✅ | ✅ | |
| | Update CHANGELOG.md | ✅ | ✅ | ✅ | |
| | Find Similar Issue | ✅ | | | |
| | [Add PR Documentation](./tools/documentation.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |
| | [Generate Custom Labels](./tools/describe.md#handle-custom-labels-from-the-repos-labels-page-💎){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |
| | [Analyze PR Components](./tools/analyze.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |
| | [Add PR Documentation](./tools/documentation.md){:target="_blank"} 💎 | ✅ | ✅ | | |
| | [Generate Custom Labels](./tools/describe.md#handle-custom-labels-from-the-repos-labels-page-💎){:target="_blank"} 💎 | ✅ | ✅ | | |
| | [Analyze PR Components](./tools/analyze.md){:target="_blank"} 💎 | ✅ | ✅ | | |
| | | | | | |
| USAGE | CLI | ✅ | ✅ | ✅ | ✅ |
| | App / webhook | ✅ | ✅ | ✅ | ✅ |
| | Actions | ✅ | | | |
| | | | | |
| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |
| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | Multiple models support | ✅ | ✅ | ✅ | ✅ |
| | Incremental PR review | ✅ | | | |
| | [Static code analysis](./tools/analyze.md/){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |
| | [Multiple configuration options](./usage-guide/configuration_options.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |
| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |
| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | Multiple models support | ✅ | ✅ | ✅ | ✅ |
| | Incremental PR review | ✅ | | | |
| | [Static code analysis](./tools/analyze.md/){:target="_blank"} 💎 | ✅ | ✅ | ✅ | |
| | [Multiple configuration options](./usage-guide/configuration_options.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | |

💎 marks a feature available only in [PR-Agent Pro](https://www.codium.ai/pricing/){:target="_blank"}

@@ -1,4 +1,62 @@
## Azure DevOps provider
## Azure DevOps Pipeline
You can use a pre-built Action Docker image to run PR-Agent as an Azure DevOps pipeline.
Add the following file to your repository under `azure-pipelines.yml`:
```yaml
# Opt out of CI triggers
trigger: none

# Configure PR trigger
pr:
  branches:
    include:
    - '*'
  autoCancel: true
  drafts: false

stages:
- stage: pr_agent
  displayName: 'PR Agent Stage'
  jobs:
  - job: pr_agent_job
    displayName: 'PR Agent Job'
    pool:
      vmImage: 'ubuntu-latest'
    container:
      image: codiumai/pr-agent:latest
      options: --entrypoint ""
    variables:
      - group: pr_agent
    steps:
    - script: |
        echo "Running PR Agent action step"

        # Construct PR_URL
        PR_URL="${SYSTEM_COLLECTIONURI}${SYSTEM_TEAMPROJECT}/_git/${BUILD_REPOSITORY_NAME}/pullrequest/${SYSTEM_PULLREQUEST_PULLREQUESTID}"
        echo "PR_URL=$PR_URL"

        # Extract organization URL from System.CollectionUri
        ORG_URL=$(echo "$(System.CollectionUri)" | sed 's/\/$//') # Remove trailing slash if present
        echo "Organization URL: $ORG_URL"

        export azure_devops__org="$ORG_URL"
        export config__git_provider="azure"

        pr-agent --pr_url="$PR_URL" describe
        pr-agent --pr_url="$PR_URL" review
        pr-agent --pr_url="$PR_URL" improve
      env:
        azure_devops__pat: $(azure_devops_pat)
        openai__key: $(OPENAI_KEY)
      displayName: 'Run PR Agent'
```
This script will run PR-Agent on every new pull request, with the `describe`, `review`, and `improve` commands.
Note that you need to define the `azure_devops_pat` and `OPENAI_KEY` variables in the Azure DevOps pipeline settings (Pipelines -> Library -> + Variable group).

Make sure to give pipeline permissions to the `pr_agent` variable group.
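
The URL handling in the pipeline script can be checked locally before committing the pipeline. Below is a minimal Python sketch that mirrors the two shell steps (building `PR_URL` and stripping the trailing slash to get `ORG_URL`). It assumes the Azure predefined variables are exposed as the environment variables the script reads; the function names and sample values are hypothetical and not part of PR-Agent.

```python
def build_pr_url(env: dict) -> str:
    """Concatenate PR_URL the same way the pipeline script does."""
    # SYSTEM_COLLECTIONURI usually ends with "/", e.g. "https://dev.azure.com/my-org/"
    return (
        f"{env['SYSTEM_COLLECTIONURI']}{env['SYSTEM_TEAMPROJECT']}"
        f"/_git/{env['BUILD_REPOSITORY_NAME']}"
        f"/pullrequest/{env['SYSTEM_PULLREQUEST_PULLREQUESTID']}"
    )


def org_url(collection_uri: str) -> str:
    """Drop a single trailing slash, like the sed call in the script."""
    return collection_uri[:-1] if collection_uri.endswith("/") else collection_uri


if __name__ == "__main__":
    # Hypothetical values for illustration only
    sample_env = {
        "SYSTEM_COLLECTIONURI": "https://dev.azure.com/my-org/",
        "SYSTEM_TEAMPROJECT": "MyProject",
        "BUILD_REPOSITORY_NAME": "my-repo",
        "SYSTEM_PULLREQUEST_PULLREQUESTID": "42",
    }
    print(build_pr_url(sample_env))
    print(org_url(sample_env["SYSTEM_COLLECTIONURI"]))
```

With the sample values it prints `https://dev.azure.com/my-org/MyProject/_git/my-repo/pullrequest/42` and `https://dev.azure.com/my-org`. Like the shell script, the sketch does not URL-encode the project or repository name, so names containing spaces may need extra handling.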

## Azure DevOps from CLI

To use the Azure DevOps provider, use the following settings in `configuration.toml`:
```

@@ -27,9 +27,9 @@ You can get a Bitbucket token for your repository by following Repository Settin
Note that comments on a PR are not supported in Bitbucket Pipeline.

## Run using CodiumAI-hosted Bitbucket app
## Run using CodiumAI-hosted Bitbucket app 💎

Please contact [support@codium.ai](mailto:support@codium.ai) or visit the [CodiumAI pricing page](https://www.codium.ai/pricing/) if you're interested in a hosted Bitbucket app solution that provides full functionality, including PR reviews and comment handling. It's based on the [bitbucket_app.py](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/git_providers/bitbucket_provider.py) implementation.
Please visit [PR-Agent Pro](https://www.codium.ai/pricing/) if you're interested in a hosted Bitbucket app solution that provides full functionality, including PR reviews and comment handling. It's based on the [bitbucket_app.py](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/git_providers/bitbucket_provider.py) implementation.

## Bitbucket Server and Data Center

@@ -26,15 +26,28 @@ jobs:
          OPENAI_KEY: ${{ secrets.OPENAI_KEY }}
          GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
```
** if you want to pin your action to a specific release (v2.0 for example) for stability reasons, use:

If you want to pin your action to a specific release (v0.23 for example) for stability reasons, use:
```yaml
...
    steps:
      - name: PR Agent action step
        id: pragent
        uses: Codium-ai/pr-agent@v2.0
        uses: docker://codiumai/pr-agent:0.23-github_action
...
```

For enhanced security, you can also specify the Docker image by its [digest](https://hub.docker.com/repository/docker/codiumai/pr-agent/tags):
```yaml
...
    steps:
      - name: PR Agent action step
        id: pragent
        uses: docker://codiumai/pr-agent@sha256:14165e525678ace7d9b51cda8652c2d74abb4e1d76b57c4a6ccaeba84663cc64
...
```

2) Add the following secret to your repository under `Settings > Secrets and variables > Actions > New repository secret > Add secret`:

```

@@ -1,3 +1,44 @@
## Run as a GitLab Pipeline
You can use a pre-built Action Docker image to run PR-Agent as a GitLab pipeline. This is a simple way to get started with PR-Agent without setting up your own server.

(1) Add the following file to your repository under `.gitlab-ci.yml`:
```yaml
stages:
  - pr_agent

pr_agent_job:
  stage: pr_agent
  image:
    name: codiumai/pr-agent:latest
    entrypoint: [""]
  script:
    - cd /app
    - echo "Running PR Agent action step"
    - export MR_URL="$CI_MERGE_REQUEST_PROJECT_URL/merge_requests/$CI_MERGE_REQUEST_IID"
    - echo "MR_URL=$MR_URL"
    - export gitlab__PERSONAL_ACCESS_TOKEN=$GITLAB_PERSONAL_ACCESS_TOKEN
    - export config__git_provider="gitlab"
    - export openai__key=$OPENAI_KEY
    - python -m pr_agent.cli --pr_url="$MR_URL" describe
    - python -m pr_agent.cli --pr_url="$MR_URL" review
    - python -m pr_agent.cli --pr_url="$MR_URL" improve
  rules:
    - if: '$CI_PIPELINE_SOURCE == "merge_request_event"'
```
This script will run PR-Agent on every new merge request. You can modify the `rules` section to run PR-Agent on different events.
You can also modify the `script` section to run different PR-Agent commands, or to run them with different parameters by exporting different environment variables.

(2) Add the following masked variables to your GitLab repository (CI/CD -> Variables):

- `GITLAB_PERSONAL_ACCESS_TOKEN`: Your GitLab personal access token.

- `OPENAI_KEY`: Your OpenAI key.

Note that if your base branches are not protected, don't set the variables as `protected`, since the pipeline will not have access to them.
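
The GitLab job relies on three pieces of logic: the `rules` filter, the `MR_URL` concatenation, and the `section__key` environment variable names. A minimal Python sketch of all three follows. The mapping of a double underscore to a `[section] key` pair (and the lowercasing of keys) is an assumption inferred from names such as `config__git_provider` and `gitlab__PERSONAL_ACCESS_TOKEN`; PR-Agent's own settings loader may behave differently. Function names and sample values are hypothetical.

```python
def should_run(env: dict) -> bool:
    """Mirror the job's rules entry: run only for merge request pipelines."""
    return env.get("CI_PIPELINE_SOURCE") == "merge_request_event"


def build_mr_url(env: dict) -> str:
    """Concatenate MR_URL exactly as the script's export line does."""
    return f"{env['CI_MERGE_REQUEST_PROJECT_URL']}/merge_requests/{env['CI_MERGE_REQUEST_IID']}"


def env_to_settings(env: dict) -> dict:
    """Group section__key variables into {section: {key: value}} (assumed convention)."""
    settings: dict = {}
    for name, value in env.items():
        section, sep, key = name.partition("__")
        if not sep or not section or not key:
            continue  # not of the form section__key (e.g. PATH), so ignore it
        settings.setdefault(section.lower(), {})[key.lower()] = value
    return settings


if __name__ == "__main__":
    # Hypothetical values for illustration only
    ci_env = {
        "CI_PIPELINE_SOURCE": "merge_request_event",
        "CI_MERGE_REQUEST_PROJECT_URL": "https://gitlab.com/my-group/my-project",
        "CI_MERGE_REQUEST_IID": "7",
    }
    if should_run(ci_env):
        print(build_mr_url(ci_env))
    print(env_to_settings({
        "gitlab__PERSONAL_ACCESS_TOKEN": "glpat-example",
        "config__git_provider": "gitlab",
        "openai__key": "sk-example",
    }))
```

Keeping the three concerns in separate functions makes it easy to test a changed `rules` condition or a new exported variable without running a real pipeline.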

## Run a GitLab webhook server

1. From the GitLab workspace or group, create an access token. Enable the "api" scope only.
@@ -14,7 +55,7 @@ WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")
- In the `[gitlab]` section, fill in `personal_access_token` and `shared_secret`. The access token can be a personal access token, or a group or project access token.
- Set `deployment_type` to `'gitlab'` in [configuration.toml](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/configuration.toml)

5. Create a webhook in GitLab. Set the URL to the URL of your app's server. Set the secret token to the generated secret from step 2.
5. Create a webhook in GitLab. Set the URL to `http[s]://<PR_AGENT_HOSTNAME>/webhook`. Set the secret token to the generated secret from step 2.
In the "Trigger" section, check the ‘comments’ and ‘merge request events’ boxes.

6. Test your installation by opening a merge request or commenting on a merge request using one of CodiumAI's commands.
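
On the server side, a webhook receiver is expected to compare the secret token configured in GitLab with its own `shared_secret`; GitLab sends that token in the `X-Gitlab-Token` request header. The following is an illustrative Python sketch of that check, not PR-Agent's actual server code. The function names are hypothetical, and a plain `dict` stands in for the web framework's (usually case-insensitive) header object.

```python
import hmac
import secrets


def generate_webhook_secret() -> str:
    """Same call as the documented one-liner: 10 random bytes as 20 hex characters."""
    return secrets.token_hex(10)


def is_valid_gitlab_request(headers: dict, shared_secret: str) -> bool:
    """Accept a request only if its X-Gitlab-Token header matches the shared secret."""
    if not shared_secret:
        return False  # refuse everything if no secret is configured
    received = headers.get("X-Gitlab-Token", "")
    # Constant-time comparison avoids leaking the secret through timing differences
    return hmac.compare_digest(received.encode(), shared_secret.encode())


if __name__ == "__main__":
    secret = generate_webhook_secret()
    print(is_valid_gitlab_request({"X-Gitlab-Token": secret}, secret))
    print(is_valid_gitlab_request({"X-Gitlab-Token": "wrong"}, secret))
```

`hmac.compare_digest` is used instead of `==` so that the comparison time does not depend on how many leading characters match.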

@@ -3,7 +3,7 @@
## Self-hosted PR-Agent
If you choose to host your own PR-Agent, you first need to acquire two tokens:

1. An OpenAI key from [here](https://platform.openai.com/api-keys), with access to GPT-4 (or a key for [other models](../usage-guide/additional_configurations.md/#changing-a-model), if you prefer).
1. An OpenAI key from [here](https://platform.openai.com/api-keys), with access to GPT-4 (or a key for other [language models](https://pr-agent-docs.codium.ai/usage-guide/changing_a_model/), if you prefer).
2. A GitHub/GitLab/Bitbucket personal access token (classic), with the repo scope (for GitHub, create one [here](https://github.com/settings/tokens)).

There are several ways to use self-hosted PR-Agent:
@@ -15,8 +15,7 @@ There are several ways to use self-hosted PR-Agent:
- [Azure DevOps](./azure.md)

## PR-Agent Pro 💎
PR-Agent Pro, an app for GitHub/GitLab/Bitbucket hosted by CodiumAI, is also available.
PR-Agent Pro, an app hosted by CodiumAI for GitHub/GitLab/Bitbucket, is also available.
<br>
With PR-Agent Pro, installation is as simple as signing up and adding the PR-Agent app to your relevant repo.
<br>
See [here](./pr_agent_pro.md) for more details.
With PR-Agent Pro, installation is as simple as signing up and adding the PR-Agent app to your relevant repo.
See [here](https://pr-agent-docs.codium.ai/installation/pr_agent_pro/) for more details.

@@ -16,6 +16,10 @@ Once a user acquires a seat, they gain the flexibility to use PR-Agent Pro acros
Users without a purchased seat who interact with a repository featuring PR-Agent Pro are entitled to receive up to five complimentary feedback responses.
Beyond this limit, PR-Agent Pro will stop responding to their requests unless a seat is purchased.
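
The seat rule above can be read as a simple per-user quota. The Python sketch below is a toy model of that rule as stated in this section, assuming the five complimentary responses are counted per user; it is not CodiumAI's billing or entitlement logic, and `SeatPolicy` is a hypothetical name.

```python
from collections import defaultdict

FREE_FEEDBACK_LIMIT = 5  # "up to five complimentary" responses, as stated above


class SeatPolicy:
    """Toy model of the seat rule; not CodiumAI's actual entitlement logic."""

    def __init__(self, seat_holders):
        self.seat_holders = set(seat_holders)
        self.free_used = defaultdict(int)  # user -> complimentary responses used so far

    def may_respond(self, user: str) -> bool:
        if user in self.seat_holders:
            return True  # seat holders are not limited
        if self.free_used[user] < FREE_FEEDBACK_LIMIT:
            self.free_used[user] += 1
            return True
        return False  # limit reached: no response until a seat is purchased


if __name__ == "__main__":
    policy = SeatPolicy(seat_holders={"alice"})
    print([policy.may_respond("bob") for _ in range(6)])
```

In this model the sixth request from a user without a seat is the first one that goes unanswered.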

## Install PR-Agent Pro for GitHub Enterprise Server
You can install the PR-Agent Pro application on your GitHub Enterprise Server and enjoy a two-week free trial.
After the trial period, to continue using PR-Agent Pro, you will need to contact us for an [Enterprise license](https://www.codium.ai/pricing/).

## Install PR-Agent Pro for GitLab (Teams & Enterprise)

@@ -1,7 +1,6 @@
## Self-hosted PR-Agent

- If you host PR-Agent with your OpenAI API key, it is between you and OpenAI. You can read their API data privacy policy here:
https://openai.com/enterprise-privacy
- If you self-host PR-Agent with your OpenAI (or other LLM provider) API key, it is between you and the provider. We don't send your code data to PR-Agent servers.

## PR-Agent Pro 💎

@@ -14,4 +13,4 @@ https://openai.com/enterprise-privacy

## PR-Agent Chrome extension

- The [PR-Agent Chrome extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl) serves solely to modify the visual appearance of a GitHub PR screen. It does not transmit any user's repo or pull request code. Code is only sent for processing when a user submits a GitHub comment that activates a PR-Agent tool, in accordance with the standard privacy policy of PR-Agent.
- The [PR-Agent Chrome extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl) will not send your code to any external servers.

@@ -1,18 +1,42 @@
[PR-Agent Pro](https://www.codium.ai/pricing/) is a hosted version of PR-Agent, provided by CodiumAI. It is available for a monthly fee, and provides the following benefits:
[PR-Agent Pro](https://www.codium.ai/pricing/) is a hosted version of PR-Agent, provided by CodiumAI. A complimentary two-week trial is offered, followed by a monthly subscription fee.
PR-Agent Pro is designed for companies and teams that require additional features and capabilities. It provides the following benefits:

1. **Fully managed** - We take care of everything for you - hosting, models, regular updates, and more. Installation is as simple as signing up and adding the PR-Agent app to your GitHub/GitLab/Bitbucket repo.

2. **Improved privacy** - No data will be stored or used to train models. PR-Agent Pro will employ zero data retention, and will use an OpenAI account with zero data retention.

3. **Improved support** - PR-Agent Pro users will receive priority support, and will be able to request new features and capabilities.
4. **Extra features** - In addition to the benefits listed above, PR-Agent Pro emphasizes more customization and the use of static code analysis, in addition to LLM logic, to improve results. It has the following additional tools and features:
    - (Tool): [**Analyze PR components**](./tools/analyze.md/)
    - (Tool): [**Custom Prompt Suggestions**](./tools/custom_prompt.md/)
    - (Tool): [**Tests**](./tools/test.md/)
    - (Tool): [**PR documentation**](./tools/documentation.md/)
    - (Tool): [**Improve Component**](https://pr-agent-docs.codium.ai/tools/improve_component/)
    - (Tool): [**Similar code search**](https://pr-agent-docs.codium.ai/tools/similar_code/)
    - (Tool): [**CI feedback**](./tools/ci_feedback.md/)
    - (Feature): [**Interactive triggering**](./usage-guide/automations_and_usage.md/#interactive-triggering)
    - (Feature): [**SOC2 compliance check**](./tools/review.md/#soc2-ticket-compliance)
    - (Feature): [**Custom labels**](./tools/describe.md/#handle-custom-labels-from-the-repos-labels-page)
    - (Feature): [**Global and wiki configuration**](./usage-guide/configuration_options.md/#wiki-configuration-file)
    - (Feature): [**Inline file summary**](https://pr-agent-docs.codium.ai/tools/describe/#inline-file-summary)

4. **Supporting self-hosted git servers** - PR-Agent Pro can be installed on GitHub Enterprise Server, GitLab, and Bitbucket. For more information, see the [installation guide](https://pr-agent-docs.codium.ai/installation/pr_agent_pro/).

**Additional features:**

Here are some of the additional features and capabilities that PR-Agent Pro offers:

| Feature | Description |
|----------------------------------------------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------|
| [**Model selection**](https://pr-agent-docs.codium.ai/usage-guide/PR_agent_pro_models/#pr-agent-pro-models) | Choose the model that best fits your needs, among top models like `GPT4` and `Claude-Sonnet-3.5` |
| [**Global and wiki configuration**](https://pr-agent-docs.codium.ai/usage-guide/configuration_options/) | Control configurations for many repositories from a single location; <br>Edit configuration of a single repo without committing code |
| [**Apply suggestions**](https://pr-agent-docs.codium.ai/tools/improve/#overview) | Generate committable code from the relevant suggestions interactively by clicking on a checkbox |
| [**Suggestions impact**](https://pr-agent-docs.codium.ai/tools/improve/#assessing-impact) | Automatically mark suggestions that were implemented by the user (either directly in GitHub, or indirectly in the IDE) to enable tracking of the impact of the suggestions |
| [**CI feedback**](https://pr-agent-docs.codium.ai/tools/ci_feedback/) | Automatically analyze failed CI checks on GitHub and provide actionable feedback in the PR conversation, helping to resolve issues quickly |
| [**Advanced usage statistics**](https://www.codium.ai/contact/#/) | PR-Agent Pro offers detailed statistics at user, repository, and company levels, including metrics about PR-Agent usage, and also general statistics and insights |
| [**Incorporating companies' best practices**](https://pr-agent-docs.codium.ai/tools/improve/#best-practices) | Use the company's best practices as a reference to increase the effectiveness and the relevance of the code suggestions |
| [**Interactive triggering**](https://pr-agent-docs.codium.ai/tools/analyze/#example-usage) | Interactively apply different tools via the `analyze` command |
| [**SOC2 compliance check**](https://pr-agent-docs.codium.ai/tools/review/#configuration-options) | Ensures the PR contains a ticket to a project management system (e.g., Jira, Asana, Trello) |
| [**Custom labels**](https://pr-agent-docs.codium.ai/tools/describe/#handle-custom-labels-from-the-repos-labels-page) | Define custom labels for PR-Agent to assign to the PR |

**Additional tools:**

Here are additional tools that are available only for PR-Agent Pro users:

| Feature | Description |
|---------|-------------|
| [**Custom Prompt Suggestions**](https://pr-agent-docs.codium.ai/tools/custom_prompt/) | Generate code suggestions based on custom prompts from the user |
| [**Analyze PR components**](https://pr-agent-docs.codium.ai/tools/analyze/) | Identify the components that changed in the PR, and interactively apply different tools to them |
| [**Tests**](https://pr-agent-docs.codium.ai/tools/test/) | Generate tests for code components that changed in the PR |
| [**PR documentation**](https://pr-agent-docs.codium.ai/tools/documentation/) | Generate docstrings for code components that changed in the PR |
| [**Improve Component**](https://pr-agent-docs.codium.ai/tools/improve_component/) | Generate code suggestions for code components that changed in the PR |
| [**Similar code search**](https://pr-agent-docs.codium.ai/tools/similar_code/) | Search for similar code in the repository, the organization, or across all of GitHub |

@@ -1,24 +1,29 @@
## Overview
The `improve` tool scans the PR code changes, and automatically generates suggestions for improving the PR code.
The `improve` tool scans the PR code changes, and automatically generates [meaningful](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/pr_code_suggestions_prompts.toml#L41) suggestions for improving the PR code.
The tool can be triggered automatically every time a new PR is [opened](../usage-guide/automations_and_usage.md#github-app-automatic-tools-when-a-new-pr-is-opened), or it can be invoked manually by commenting on any PR:
```
/improve
```

Note that the `Apply this suggestion` checkbox, which interactively converts a suggestion into a committable code comment, is available only for PR-Agent Pro 💎 users.

## Example usage

### Manual triggering

Invoke the tool manually by commenting `/improve` on any PR. By default, the code suggestions are presented as a single comment.

To edit [configurations](#configuration-options) related to the improve tool, use the following template:
```
/improve --pr_code_suggestions.some_config1=... --pr_code_suggestions.some_config2=...
```

For example, you can choose to present the suggestions as committable code comments, by running the following command:
For example, you can choose to present all the suggestions as committable code comments, by running the following command:
```
/improve --pr_code_suggestions.commitable_code_suggestions=true
```
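
Each `--section.key=value` token in the template above names a configuration section and a key inside it. As a rough illustration of how such a comment breaks down, here is a minimal Python sketch; `parse_command` and `_coerce` are hypothetical helpers, and PR-Agent's real argument handling (quoting, type conversion, allowed keys) may differ. Values containing spaces are not supported by this simple whitespace split.

```python
def _coerce(value: str):
    """Convert 'true'/'false' to bool and integer strings to int; keep other text."""
    lowered = value.lower()
    if lowered in ("true", "false"):
        return lowered == "true"
    try:
        return int(value)
    except ValueError:
        return value


def parse_command(comment: str):
    """Split '/improve --section.key=value ...' into (command, {section: {key: value}})."""
    tokens = comment.split()
    if not tokens:
        return "", {}
    command = tokens[0].lstrip("/")
    overrides: dict = {}
    for token in tokens[1:]:
        if not token.startswith("--") or "=" not in token:
            continue  # skip anything that is not --section.key=value
        dotted, value = token[2:].split("=", 1)
        section, _, key = dotted.partition(".")
        if section and key:
            overrides.setdefault(section, {})[key] = _coerce(value)
    return command, overrides


if __name__ == "__main__":
    print(parse_command("/improve --pr_code_suggestions.commitable_code_suggestions=true"))
```

For the documented example this prints `('improve', {'pr_code_suggestions': {'commitable_code_suggestions': True}})`.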

@@ -26,8 +31,8 @@ For example, you can choose to present the suggestions as commitable code commen

Note that a single comment has a significantly smaller PR footprint. We recommend this mode for most cases.
Also note that collapsible sections are not supported in _Bitbucket_. Hence, the suggestions are presented there as code comments.
As can be seen, a single table comment has a significantly smaller PR footprint. We recommend this mode for most cases.
Also note that collapsible sections are not supported in _Bitbucket_. Hence, the suggestions can only be presented in Bitbucket as code comments.

### Automatic triggering

@@ -47,17 +52,23 @@ num_code_suggestions_per_chunk = ...
- The `pr_commands` option lists the commands that will be executed automatically when a PR is opened.
- The `[pr_code_suggestions]` section contains the configurations for the `improve` tool you want to edit (if any).

### Extended mode
### Assessing Impact 💎

An extended mode, which does not involve PR compression and provides more comprehensive suggestions, can be enabled by setting:
```
[pr_code_suggestions]
auto_extended_mode=true
```
(This mode is enabled by default.)
Note that PR-Agent Pro tracks two types of implementations:

Note that the extended mode divides the PR code changes into chunks, up to the token limits, where each chunk is handled separately (this might use multiple calls to GPT-4 for large PRs).
Hence, the total number of suggestions is proportional to the number of chunks, i.e., the size of the PR.
- Direct implementation - when the user directly applies the suggestion by clicking the `Apply` checkbox.
- Indirect implementation - when the user implements the suggestion in their IDE environment. In this case, after each commit, PR-Agent uses dedicated logic to identify whether a suggestion was implemented, and marks it as implemented.

In post-processing, PR-Agent counts the number of suggestions that were implemented, and provides general statistics and insights about the suggestions' impact on the PR process.
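
The post-processing step described above boils down to counting implemented suggestions by type. The Python sketch below assumes a hypothetical `Suggestion` record tagged `"direct"`, `"indirect"`, or left untagged; the actual data model and the statistics PR-Agent Pro reports are not documented here.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Suggestion:
    """Hypothetical record: how (if at all) a suggestion was implemented."""
    suggestion_id: int
    implemented_via: Optional[str] = None  # "direct", "indirect", or None


def impact_summary(suggestions: List[Suggestion]) -> dict:
    """Count implemented suggestions by type and compute the overall implementation rate."""
    by_type = Counter(s.implemented_via for s in suggestions if s.implemented_via)
    total = len(suggestions)
    implemented = sum(by_type.values())
    return {
        "total": total,
        "direct": by_type["direct"],
        "indirect": by_type["indirect"],
        "implementation_rate": implemented / total if total else 0.0,
    }


if __name__ == "__main__":
    sample = [
        Suggestion(1, "direct"),
        Suggestion(2, "indirect"),
        Suggestion(3),
        Suggestion(4, "direct"),
    ]
    print(impact_summary(sample))
```

With the sample data, three of four suggestions were implemented, so the rate is 0.75.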

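
The extended-mode note explains that the PR changes are split into token-limited chunks and that the suggestion count grows with the number of chunks. A greedy packing sketch in Python makes this concrete. Assumptions: whitespace-separated words stand in for model tokens, patches are packed whole in their original order, and each chunk yields at most `num_code_suggestions_per_chunk` suggestions; this is not PR-Agent's actual chunking algorithm.

```python
from typing import Callable, List


def word_count(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def split_into_chunks(patches: List[str], max_tokens: int,
                      count_tokens: Callable[[str], int] = word_count) -> List[List[str]]:
    """Greedily pack whole file patches, in order, into chunks of at most max_tokens."""
    chunks: List[List[str]] = []
    current: List[str] = []
    used = 0
    for patch in patches:
        cost = count_tokens(patch)
        if current and used + cost > max_tokens:
            chunks.append(current)  # close the chunk before it would overflow
            current, used = [], 0
        current.append(patch)  # a single oversized patch still gets its own chunk
        used += cost
    if current:
        chunks.append(current)
    return chunks


def max_suggestions(num_chunks: int, per_chunk: int) -> int:
    """Upper bound if every chunk yields up to per_chunk suggestions."""
    return num_chunks * per_chunk


if __name__ == "__main__":
    patches = ["a b c", "d e", "f g h i", "j"]
    chunks = split_into_chunks(patches, max_tokens=5)
    print(len(chunks), max_suggestions(len(chunks), per_chunk=4))
```

Doubling the size of the PR roughly doubles the number of chunks, and with it the number of model calls and the upper bound on suggestions.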

## Usage Tips

### Self-review
If you set the following in a configuration file:
@@ -71,8 +82,10 @@ You can set the content of the checkbox text via:
[pr_code_suggestions]
code_suggestions_self_review_text = "... (your text here) ..."
```

💎 In addition, by setting:
```
[pr_code_suggestions]
@@ -80,10 +93,50 @@ approve_pr_on_self_review = true
```
the tool can automatically approve the PR when the user checks the self-review checkbox.

!!! tip "Demanding self-review from the PR author"
If you set the number of required reviewers for a PR to 2, this effectively means that the PR author must click the self-review checkbox before the PR can be merged (in addition to a human reviewer).
!!! tip "Tip - demanding self-review from the PR author"
If you set the number of required reviewers for a PR to 2, this effectively means that the PR author must click the self-review checkbox before the PR can be merged (in addition to a human reviewer).

### `Extra instructions` and `best practices`

#### Extra instructions
You can use the `extra_instructions` configuration option to give the AI model additional instructions for the `improve` tool.
Be specific, clear, and concise in the instructions. With extra instructions, you are the prompter. Specify relevant aspects that you want the model to focus on.

Examples of possible instructions:
```
[pr_code_suggestions]
extra_instructions="""\
(1) Answer in Japanese
(2) Don't suggest adding try-except blocks
(3) Ignore changes in toml files
...
"""
```
Use triple quotes to write multi-line instructions. Use bullet points or numbers to make the instructions more readable.

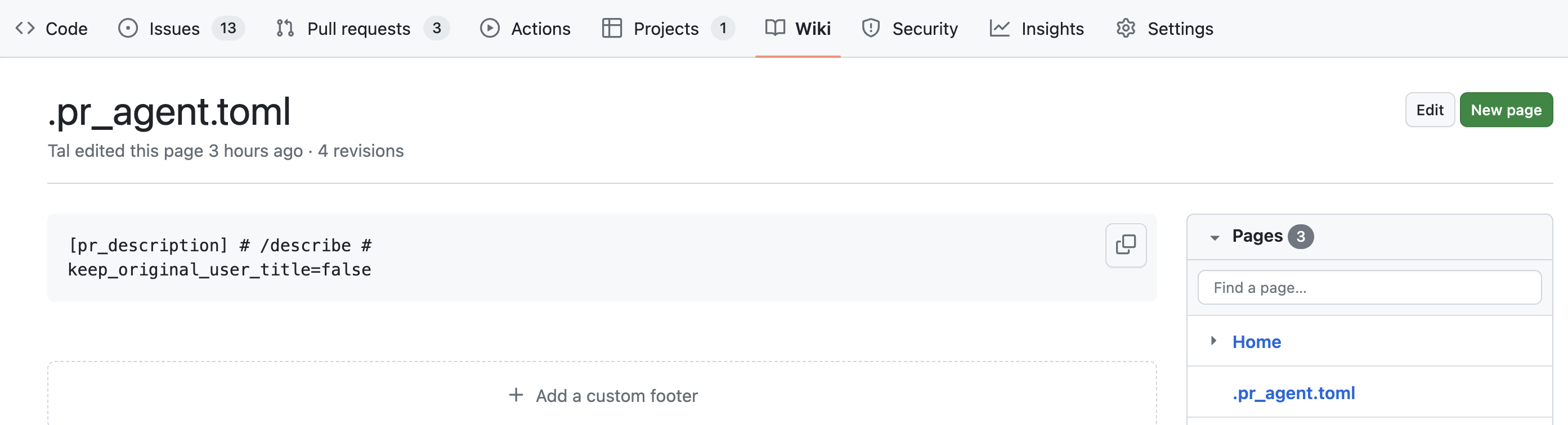

#### Best practices 💎
Another way to give additional guidance to the AI model is to create a dedicated [**wiki page**](https://github.com/Codium-ai/pr-agent/wiki) called `best_practices.md`.
This page can contain a list of best practices, coding standards, and guidelines that are specific to your repo/organization.

The AI model will use this page as a reference, and in case the PR code violates any of the guidelines, it will suggest improvements accordingly, with a dedicated label: `Organization best practice`.