mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-07-21 04:50:39 +08:00

Compare commits

1 Commits

v0.2

...

example-pr

| Author | SHA1 | Date |
|---|---|---|
| | 7005a0466a | |

32

.github/workflows/docs-ci.yaml

vendored


@ -1,32 +0,0 @@

|

|||||||

name: docs-ci

|

|

||||||

on:

|

|

||||||

push:

|

|

||||||

branches:

|

|

||||||

- main

|

|

||||||

- add-docs-portal

|

|

||||||

paths:

|

|

||||||

- docs/**

|

|

||||||

permissions:

|

|

||||||

contents: write

|

|

||||||

jobs:

|

|

||||||

deploy:

|

|

||||||

runs-on: ubuntu-latest

|

|

||||||

steps:

|

|

||||||

- uses: actions/checkout@v4

|

|

||||||

- name: Configure Git Credentials

|

|

||||||

run: |

|

|

||||||

git config user.name github-actions[bot]

|

|

||||||

git config user.email 41898282+github-actions[bot]@users.noreply.github.com

|

|

||||||

- uses: actions/setup-python@v5

|

|

||||||

with:

|

|

||||||

python-version: 3.x

|

|

||||||

- run: echo "cache_id=$(date --utc '+%V')" >> $GITHUB_ENV

|

|

||||||

- uses: actions/cache@v4

|

|

||||||

with:

|

|

||||||

key: mkdocs-material-${{ env.cache_id }}

|

|

||||||

path: .cache

|

|

||||||

restore-keys: |

|

|

||||||

mkdocs-material-

|

|

||||||

- run: pip install mkdocs-material

|

|

||||||

- run: pip install "mkdocs-material[imaging]"

|

|

||||||

- run: mkdocs gh-deploy -f docs/mkdocs.yml --force

|

|

||||||

11

.github/workflows/pr-agent-review.yaml

vendored


@ -5,9 +5,8 @@

|

|||||||

name: PR-Agent

|

name: PR-Agent

|

||||||

|

|

||||||

on:

|

on:

|

||||||

# pull_request:

|

pull_request:

|

||||||

# issue_comment:

|

issue_comment:

|

||||||

workflow_dispatch:

|

|

||||||

|

|

||||||

permissions:

|

permissions:

|

||||||

issues: write

|

issues: write

|

||||||

@ -27,9 +26,7 @@ jobs:

|

|||||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||||

PINECONE.API_KEY: ${{ secrets.PINECONE_API_KEY }}

|

PINECONE.API_KEY: ${{ secrets.PINECONE_API_KEY }}

|

||||||

PINECONE.ENVIRONMENT: ${{ secrets.PINECONE_ENVIRONMENT }}

|

PINECONE.ENVIRONMENT: ${{ secrets.PINECONE_ENVIRONMENT }}

|

||||||

GITHUB_ACTION_CONFIG.AUTO_DESCRIBE: true

|

GITHUB_ACTION.AUTO_REVIEW: true

|

||||||

GITHUB_ACTION_CONFIG.AUTO_REVIEW: true

|

GITHUB_ACTION.AUTO_IMPROVE: true

|

||||||

GITHUB_ACTION_CONFIG.AUTO_IMPROVE: true

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|||||||

3

.gitignore

vendored


@ -5,5 +5,4 @@ __pycache__

|

|||||||

dist/

|

dist/

|

||||||

*.egg-info/

|

*.egg-info/

|

||||||

build/

|

build/

|

||||||

.DS_Store

|

review.md

|

||||||

docs/.cache/

|

|

||||||

|

|||||||

@ -1,6 +1,5 @@

|

|||||||

[pr_reviewer]

|

[pr_reviewer]

|

||||||

enable_review_labels_effort = true

|

enable_review_labels_effort = true

|

||||||

enable_auto_approval = true

|

|

||||||

|

|

||||||

|

|

||||||

[pr_code_suggestions]

|

[pr_code_suggestions]

|

||||||

|

|||||||

462

INSTALL.md

Normal file


@ -0,0 +1,462 @@

|

|||||||

|

|

||||||

|

## Installation

|

||||||

|

|

||||||

|

To get started with PR-Agent quickly, you first need to acquire two tokens:

|

||||||

|

|

||||||

|

1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.

|

||||||

|

2. A GitHub/GitLab/BitBucket personal access token (classic) with the repo scope.

|

||||||

|

|

||||||

|

There are several ways to use PR-Agent:

|

||||||

|

|

||||||

|

**Locally**

|

||||||

|

- [Using Docker image (no installation required)](INSTALL.md#use-docker-image-no-installation-required)

|

||||||

|

- [Run from source](INSTALL.md#run-from-source)

|

||||||

|

|

||||||

|

**GitHub specific methods**

|

||||||

|

- [Run as a GitHub Action](INSTALL.md#run-as-a-github-action)

|

||||||

|

- [Run as a polling server](INSTALL.md#run-as-a-polling-server)

|

||||||

|

- [Run as a GitHub App](INSTALL.md#run-as-a-github-app)

|

||||||

|

- [Deploy as a Lambda Function](INSTALL.md#deploy-as-a-lambda-function)

|

||||||

|

- [AWS CodeCommit](INSTALL.md#aws-codecommit-setup)

|

||||||

|

|

||||||

|

**GitLab specific methods**

|

||||||

|

- [Run a GitLab webhook server](INSTALL.md#run-a-gitlab-webhook-server)

|

||||||

|

|

||||||

|

**BitBucket specific methods**

|

||||||

|

- [Run as a Bitbucket Pipeline](INSTALL.md#run-as-a-bitbucket-pipeline)

|

||||||

|

- [Run on a hosted app](INSTALL.md#run-on-a-hosted-bitbucket-app)

|

||||||

|

- [Bitbucket server and data center](INSTALL.md#bitbucket-server-and-data-center)

|

||||||

|

---

|

||||||

|

|

||||||

|

### Use Docker image (no installation required)

|

||||||

|

|

||||||

|

A list of the relevant tools can be found in the [tools guide](./docs/TOOLS_GUIDE.md).

|

||||||

|

|

||||||

|

To invoke a tool (for example `review`), you can run directly from the Docker image. Here's how:

|

||||||

|

|

||||||

|

- For GitHub:

|

||||||

|

```

|

||||||

|

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||||

|

```

|

||||||

|

|

||||||

|

- For GitLab:

|

||||||

|

```

|

||||||

|

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||||

|

```

|

||||||

|

|

||||||

|

Note: If you have a dedicated GitLab instance, you need to specify the custom URL as a variable:

|

||||||

|

```

|

||||||

|

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> -e GITLAB.URL=<your gitlab instance url> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||||

|

```

|

||||||

|

|

||||||

|

- For BitBucket:

|

||||||

|

```

|

||||||

|

docker run --rm -it -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=<pr_url> review

|

||||||

|

```

|

||||||

|

|

||||||

|

For other git providers, update CONFIG.GIT_PROVIDER accordingly, and check the `pr_agent/settings/.secrets_template.toml` file for the expected environment variable names and values.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

|

||||||

|

If you want to ensure you're running a specific version of the Docker image, consider using the image's digest:

|

||||||

|

```bash

|

||||||

|

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent@sha256:71b5ee15df59c745d352d84752d01561ba64b6d51327f97d46152f0c58a5f678 --pr_url <pr_url> review

|

||||||

|

```

|

||||||

|

|

||||||

|

Or you can run a [specific released version](./RELEASE_NOTES.md) of pr-agent, for example:

|

||||||

|

```

|

||||||

|

codiumai/pr-agent@v0.9

|

||||||

|

```

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### Run from source

|

||||||

|

|

||||||

|

1. Clone this repository:

|

||||||

|

|

||||||

|

```

|

||||||

|

git clone https://github.com/Codium-ai/pr-agent.git

|

||||||

|

```

|

||||||

|

|

||||||

|

2. Install the requirements in your favorite virtual environment:

|

||||||

|

|

||||||

|

```

|

||||||

|

pip install -r requirements.txt

|

||||||

|

```

|

||||||

|

|

||||||

|

3. Copy the secrets template file and fill in your OpenAI key and your GitHub user token:

|

||||||

|

|

||||||

|

```

|

||||||

|

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||||

|

chmod 600 pr_agent/settings/.secrets.toml

|

||||||

|

# Edit .secrets.toml file

|

||||||

|

```

|

||||||

|

|

||||||

|

4. Add the pr_agent folder to your PYTHONPATH, then run the cli.py script:

|

||||||

|

|

||||||

|

```

|

||||||

|

export PYTHONPATH=[$PYTHONPATH:]<PATH to pr_agent folder>

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> review

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> ask <your question>

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> describe

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> improve

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> add_docs

|

||||||

|

python3 -m pr_agent.cli --pr_url <pr_url> generate_labels

|

||||||

|

python3 -m pr_agent.cli --issue_url <issue_url> similar_issue

|

||||||

|

...

|

||||||

|

```

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### Run as a GitHub Action

|

||||||

|

|

||||||

|

You can use our pre-built GitHub Action Docker image to run PR-Agent as a GitHub Action.

|

||||||

|

|

||||||

|

1. Add the following file to your repository under `.github/workflows/pr_agent.yml`:

|

||||||

|

|

||||||

|

```yaml

|

||||||

|

on:

|

||||||

|

pull_request:

|

||||||

|

issue_comment:

|

||||||

|

jobs:

|

||||||

|

pr_agent_job:

|

||||||

|

runs-on: ubuntu-latest

|

||||||

|

permissions:

|

||||||

|

issues: write

|

||||||

|

pull-requests: write

|

||||||

|

contents: write

|

||||||

|

name: Run pr agent on every pull request, respond to user comments

|

||||||

|

steps:

|

||||||

|

- name: PR Agent action step

|

||||||

|

id: pragent

|

||||||

|

uses: Codium-ai/pr-agent@main

|

||||||

|

env:

|

||||||

|

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||||

|

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||||

|

```

|

||||||

|

If you want to pin your action to a specific release (v0.7 for example) for stability reasons, use:

|

||||||

|

```yaml

|

||||||

|

on:

|

||||||

|

pull_request:

|

||||||

|

issue_comment:

|

||||||

|

|

||||||

|

jobs:

|

||||||

|

pr_agent_job:

|

||||||

|

runs-on: ubuntu-latest

|

||||||

|

permissions:

|

||||||

|

issues: write

|

||||||

|

pull-requests: write

|

||||||

|

contents: write

|

||||||

|

name: Run pr agent on every pull request, respond to user comments

|

||||||

|

steps:

|

||||||

|

- name: PR Agent action step

|

||||||

|

id: pragent

|

||||||

|

uses: Codium-ai/pr-agent@v0.7

|

||||||

|

env:

|

||||||

|

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||||

|

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||||

|

```

|

||||||

|

2. Add the following secret to your repository under `Settings > Secrets and variables > Actions > New repository secret > Add secret`:

|

||||||

|

|

||||||

|

```

|

||||||

|

Name = OPENAI_KEY

|

||||||

|

Secret = <your key>

|

||||||

|

```

|

||||||

|

|

||||||

|

The GITHUB_TOKEN secret is automatically created by GitHub.

|

||||||

|

|

||||||

|

3. Merge this change to your main branch.

|

||||||

|

When you open your next PR, you should see a comment from `github-actions` bot with a review of your PR, and instructions on how to use the rest of the tools.

|

||||||

|

|

||||||

|

4. You may configure PR-Agent by adding environment variables under the env section corresponding to any configurable property in the [configuration](pr_agent/settings/configuration.toml) file. Some examples:

|

||||||

|

```yaml

|

||||||

|

env:

|

||||||

|

# ... previous environment values

|

||||||

|

OPENAI.ORG: "<Your organization name under your OpenAI account>"

|

||||||

|

PR_REVIEWER.REQUIRE_TESTS_REVIEW: "false" # Disable tests review

|

||||||

|

PR_CODE_SUGGESTIONS.NUM_CODE_SUGGESTIONS: 6 # Increase number of code suggestions

|

||||||

|

```
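
As an illustration of the `SECTION.KEY` naming used in the `env` block above, the following minimal Python sketch groups such variables into per-section settings. The helper name `collect_overrides` and the lowercase normalization are assumptions made for this example; it is not PR-Agent's actual configuration loader.

```python
from typing import Dict, Mapping


def collect_overrides(env: Mapping[str, str]) -> Dict[str, Dict[str, str]]:
    """Group SECTION.KEY style variables into {section: {key: value}}.

    Hypothetical helper for illustration only. Names without a dot
    (for example PATH) are ignored.
    """
    overrides: Dict[str, Dict[str, str]] = {}
    for name, value in env.items():
        section, dot, key = name.partition(".")
        if not dot or not section or not key:
            continue  # not a SECTION.KEY variable
        overrides.setdefault(section.lower(), {})[key.lower()] = value
    return overrides


example_env = {
    "OPENAI.ORG": "my-org",
    "PR_REVIEWER.REQUIRE_TESTS_REVIEW": "false",
    "PR_CODE_SUGGESTIONS.NUM_CODE_SUGGESTIONS": "6",
    "PATH": "/usr/bin",
}
overrides = collect_overrides(example_env)
```

Values stay as strings here; converting `"false"` or `"6"` to typed values is left out on purpose, since how the real loader casts them is not described in this document.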

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### Run as a polling server

|

||||||

|

Request reviews by tagging your GitHub user on a PR

|

||||||

|

|

||||||

|

Follow [steps 1-3](#run-as-a-github-action) of the GitHub Action setup.

|

||||||

|

|

||||||

|

Run the following command to start the server:

|

||||||

|

|

||||||

|

```

|

||||||

|

python pr_agent/servers/github_polling.py

|

||||||

|

```

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### Run as a GitHub App

|

||||||

|

This allows you to automate the review process on your private or public repositories.

|

||||||

|

|

||||||

|

1. Create a GitHub App from the [GitHub Developer Portal](https://docs.github.com/en/developers/apps/creating-a-github-app).

|

||||||

|

|

||||||

|

- Set the following permissions:

|

||||||

|

- Pull requests: Read & write

|

||||||

|

- Issue comment: Read & write

|

||||||

|

- Metadata: Read-only

|

||||||

|

- Contents: Read-only

|

||||||

|

- Set the following events:

|

||||||

|

- Issue comment

|

||||||

|

- Pull request

|

||||||

|

- Push (if you need to enable triggering on PR update)

|

||||||

|

|

||||||

|

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||||

|

|

||||||

|

```

|

||||||

|

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||||

|

```

|

||||||

|

|

||||||

|

3. Acquire the following pieces of information from your app's settings page:

|

||||||

|

|

||||||

|

- App private key (click "Generate a private key" and save the file)

|

||||||

|

- App ID

|

||||||

|

|

||||||

|

4. Clone this repository:

|

||||||

|

|

||||||

|

```

|

||||||

|

git clone https://github.com/Codium-ai/pr-agent.git

|

||||||

|

```

|

||||||

|

|

||||||

|

5. Copy the secrets template file and fill in the following:

|

||||||

|

```

|

||||||

|

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||||

|

# Edit .secrets.toml file

|

||||||

|

```

|

||||||

|

- Your OpenAI key.

|

||||||

|

- Copy your app's private key to the private_key field.

|

||||||

|

- Copy your app's ID to the app_id field.

|

||||||

|

- Copy your app's webhook secret to the webhook_secret field.

|

||||||

|

- Set deployment_type to 'app' in [configuration.toml](./pr_agent/settings/configuration.toml)

|

||||||

|

|

||||||

|

> The .secrets.toml file is not copied to the Docker image by default, and is only used for local development.

|

||||||

|

> If you want to use the .secrets.toml file in your Docker image, you can remove it from the .dockerignore file.

|

||||||

|

> In most production environments, you would inject the secrets file as environment variables or as mounted volumes.

|

||||||

|

> For example, in order to inject a secrets file as a volume in a Kubernetes environment you can update your pod spec to include the following,

|

||||||

|

> assuming you have a secret named `pr-agent-settings` with a key named `.secrets.toml`:

|

||||||

|

```

|

||||||

|

volumes:

|

||||||

|

- name: settings-volume

|

||||||

|

secret:

|

||||||

|

secretName: pr-agent-settings

|

||||||

|

# ...

|

||||||

|

containers:

|

||||||

|

# ...

|

||||||

|

volumeMounts:

|

||||||

|

- mountPath: /app/pr_agent/settings_prod

|

||||||

|

name: settings-volume

|

||||||

|

```

|

||||||

|

|

||||||

|

> Another option is to set the secrets as environment variables in your deployment environment, for example `OPENAI.KEY` and `GITHUB.USER_TOKEN`.

|

||||||

|

|

||||||

|

6. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||||

|

|

||||||

|

```

|

||||||

|

docker build . -t codiumai/pr-agent:github_app --target github_app -f docker/Dockerfile

|

||||||

|

docker push codiumai/pr-agent:github_app # Push to your Docker repository

|

||||||

|

```

|

||||||

|

|

||||||

|

7. Host the app using a server, serverless function, or container environment. Alternatively, for development and

|

||||||

|

debugging, you may use tools like smee.io to forward webhooks to your local machine.

|

||||||

|

You can check [Deploy as a Lambda Function](#deploy-as-a-lambda-function)

|

||||||

|

|

||||||

|

8. Go back to your app's settings, and set the following:

|

||||||

|

|

||||||

|

- Webhook URL: The URL of your app's server or the URL of the smee.io channel.

|

||||||

|

- Webhook secret: The secret you generated earlier.

|

||||||

|

|

||||||

|

9. Install the app by navigating to the "Install App" tab and selecting your desired repositories.

|

||||||

|

|

||||||

|

> **Note:** When running PR-Agent from GitHub App, the default configuration file (configuration.toml) will be loaded.<br>

|

||||||

|

> However, you can override the default tool parameters by uploading a local configuration file `.pr_agent.toml`<br>

|

||||||

|

> For more information please check out the [USAGE GUIDE](./Usage.md#working-with-github-app)
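
The webhook secret generated in step 2 is what lets a server confirm that a delivery really came from GitHub: GitHub signs each payload with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header. The sketch below shows that check in isolation. The function names are assumptions for this example, and it does not claim to reproduce how PR-Agent's own server validates requests.

```python
import hashlib
import hmac
import secrets


def sign_payload(secret: str, payload: bytes) -> str:
    """Return the header value GitHub would send for this payload."""
    digest = hmac.new(secret.encode("utf-8"), payload, hashlib.sha256).hexdigest()
    return "sha256=" + digest


def is_valid_signature(secret: str, payload: bytes, header_value: str) -> bool:
    """Compare in constant time to avoid leaking information through timing."""
    expected = sign_payload(secret, payload)
    return hmac.compare_digest(expected, header_value)


# Same generator as in step 2 of the list above.
webhook_secret = secrets.token_hex(10)
body = b'{"action": "opened"}'
signature = sign_payload(webhook_secret, body)
```

A request whose body or secret differs from what was signed fails the comparison, which is why the same secret must be entered both in the app settings and in `.secrets.toml`.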

|

||||||

|

---

|

||||||

|

|

||||||

|

### Deploy as a Lambda Function

|

||||||

|

|

||||||

|

1. Follow steps 1-5 of [Method 5](#run-as-a-github-app).

|

||||||

|

2. Build a docker image that can be used as a lambda function

|

||||||

|

```shell

|

||||||

|

docker buildx build --platform=linux/amd64 . -t codiumai/pr-agent:serverless -f docker/Dockerfile.lambda

|

||||||

|

```

|

||||||

|

3. Push image to ECR

|

||||||

|

```shell

|

||||||

|

docker tag codiumai/pr-agent:serverless <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||||

|

docker push <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||||

|

```

|

||||||

|

4. Create a lambda function that uses the uploaded image. Set the lambda timeout to be at least 3m.

|

||||||

|

5. Configure the lambda function to have a Function URL.

|

||||||

|

6. In the environment variables of the Lambda function, set `AZURE_DEVOPS_CACHE_DIR` to a writable location such as `/tmp` (see [link](https://github.com/Codium-ai/pr-agent/pull/450#issuecomment-1840242269)).

|

||||||

|

7. Go back to steps 8-9 of [Method 5](#run-as-a-github-app) with the function url as your Webhook URL.

|

||||||

|

The Webhook URL would look like `https://<LAMBDA_FUNCTION_URL>/api/v1/github_webhooks`

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### AWS CodeCommit Setup

|

||||||

|

|

||||||

|

Not all features have been added to CodeCommit yet. As of right now, CodeCommit has been implemented to run the pr-agent CLI on the command line, using AWS credentials stored in environment variables. (More features will be added in the future.) The following is a set of instructions to have pr-agent do a review of your CodeCommit pull request from the command line:

|

||||||

|

|

||||||

|

1. Create an IAM user that you will use to read CodeCommit pull requests and post comments

|

||||||

|

* Note: That user should have CLI access only, not Console access

|

||||||

|

2. Add IAM permissions to that user, to allow access to CodeCommit (see IAM Role example below)

|

||||||

|

3. Generate an Access Key for your IAM user

|

||||||

|

4. Set the Access Key and Secret using environment variables (see Access Key example below)

|

||||||

|

5. Set the `git_provider` value to `codecommit` in the `pr_agent/settings/configuration.toml` settings file

|

||||||

|

6. Set the `PYTHONPATH` to include your `pr-agent` project directory

|

||||||

|

* Option A: Add `PYTHONPATH="/PATH/TO/PROJECTS/pr-agent"` to your `.env` file

|

||||||

|

* Option B: Set `PYTHONPATH` and run the CLI in one command, for example:

|

||||||

|

* `PYTHONPATH="/PATH/TO/PROJECTS/pr-agent" python pr_agent/cli.py [--ARGS]`

|

||||||

|

|

||||||

|

##### AWS CodeCommit IAM Role Example

|

||||||

|

|

||||||

|

Example IAM permissions for that user, allowing access to CodeCommit:

|

||||||

|

|

||||||

|

* Note: The following is a working example of IAM permissions that has read access to the repositories and write access to allow posting comments

|

||||||

|

* Note: If you only want pr-agent to review your pull requests, you can tighten the IAM permissions further. This IAM example will work as is, and allows pr-agent to post comments to the PR

|

||||||

|

* Note: You may want to replace the `"Resource": "*"` with your list of repos, to limit access to only those repos

|

||||||

|

|

||||||

|

```

|

||||||

|

{

|

||||||

|

"Version": "2012-10-17",

|

||||||

|

"Statement": [

|

||||||

|

{

|

||||||

|

"Effect": "Allow",

|

||||||

|

"Action": [

|

||||||

|

"codecommit:BatchDescribe*",

|

||||||

|

"codecommit:BatchGet*",

|

||||||

|

"codecommit:Describe*",

|

||||||

|

"codecommit:EvaluatePullRequestApprovalRules",

|

||||||

|

"codecommit:Get*",

|

||||||

|

"codecommit:List*",

|

||||||

|

"codecommit:PostComment*",

|

||||||

|

"codecommit:PutCommentReaction",

|

||||||

|

"codecommit:UpdatePullRequestDescription",

|

||||||

|

"codecommit:UpdatePullRequestTitle"

|

||||||

|

],

|

||||||

|

"Resource": "*"

|

||||||

|

}

|

||||||

|

]

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

##### AWS CodeCommit Access Key and Secret

|

||||||

|

|

||||||

|

Example setting the Access Key and Secret using environment variables

|

||||||

|

|

||||||

|

```sh

|

||||||

|

export AWS_ACCESS_KEY_ID="XXXXXXXXXXXXXXXX"

|

||||||

|

export AWS_SECRET_ACCESS_KEY="XXXXXXXXXXXXXXXX"

|

||||||

|

export AWS_DEFAULT_REGION="us-east-1"

|

||||||

|

```

|

||||||

|

|

||||||

|

##### AWS CodeCommit CLI Example

|

||||||

|

|

||||||

|

After you set up AWS CodeCommit using the instructions above, here is an example CLI run that tells pr-agent to **review** a given pull request.

|

||||||

|

(Replace your specific PYTHONPATH and PR URL in the example)

|

||||||

|

|

||||||

|

```sh

|

||||||

|

PYTHONPATH="/PATH/TO/PROJECTS/pr-agent" python pr_agent/cli.py \

|

||||||

|

--pr_url https://us-east-1.console.aws.amazon.com/codesuite/codecommit/repositories/MY_REPO_NAME/pull-requests/321 \

|

||||||

|

review

|

||||||

|

```
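
The `--pr_url` value above encodes the AWS region, the repository name, and the pull request number. As a rough sketch of how those parts can be recovered, the snippet below parses a console URL of that shape. The names `CodeCommitPr` and `parse_codecommit_pr_url` are hypothetical and exist only for this example; pr-agent's own parsing may differ.

```python
import re
from typing import NamedTuple
from urllib.parse import urlparse


class CodeCommitPr(NamedTuple):
    region: str
    repo_name: str
    pr_number: int


# Path layout taken from the example URL in this section.
_PATH_RE = re.compile(
    r"^/codesuite/codecommit/repositories/(?P<repo>[^/]+)/pull-requests/(?P<pr>\d+)"
)
_HOST_SUFFIX = ".console.aws.amazon.com"


def parse_codecommit_pr_url(url: str) -> CodeCommitPr:
    parsed = urlparse(url)
    host = parsed.hostname or ""
    if not host.endswith(_HOST_SUFFIX):
        raise ValueError(f"not an AWS console URL: {url}")
    region = host[: -len(_HOST_SUFFIX)]  # e.g. "us-east-1"
    match = _PATH_RE.match(parsed.path)
    if not region or match is None:
        raise ValueError(f"not a CodeCommit pull request URL: {url}")
    return CodeCommitPr(region, match.group("repo"), int(match.group("pr")))


pr = parse_codecommit_pr_url(
    "https://us-east-1.console.aws.amazon.com/codesuite/codecommit/"
    "repositories/MY_REPO_NAME/pull-requests/321"
)
```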

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

### Run a GitLab webhook server

|

||||||

|

|

||||||

|

1. From the GitLab workspace or group, create an access token. Enable the "api" scope only.

|

||||||

|

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||||

|

|

||||||

|

```

|

||||||

|

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||||

|

```

|

||||||

|

3. Follow the instructions to build the Docker image, setup a secrets file and deploy on your own server from [Method 5](#run-as-a-github-app) steps 4-7.

|

||||||

|

4. In the secrets file, fill in the following:

|

||||||

|

- Your OpenAI key.

|

||||||

|

- In the [gitlab] section, fill in personal_access_token and shared_secret. The access token can be a personal access token, or a group or project access token.

|

||||||

|

- Set deployment_type to 'gitlab' in [configuration.toml](./pr_agent/settings/configuration.toml)

|

||||||

|

5. Create a webhook in GitLab. Set the URL to the URL of your app's server. Set the secret token to the generated secret from step 2.

|

||||||

|

In the "Trigger" section, check the ‘comments’ and ‘merge request events’ boxes.

|

||||||

|

6. Test your installation by opening a merge request or commenting on a merge request using one of CodiumAI's commands.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

### Run as a Bitbucket Pipeline

|

||||||

|

|

||||||

|

|

||||||

|

You can use the Bitbucket Pipeline system to run PR-Agent on every pull request open or update.

|

||||||

|

|

||||||

|

1. Add the following file to your repository as `bitbucket-pipelines.yml`:

|

||||||

|

|

||||||

|

```yaml

|

||||||

|

pipelines:

|

||||||

|

pull-requests:

|

||||||

|

'**':

|

||||||

|

- step:

|

||||||

|

name: PR Agent Review

|

||||||

|

image: python:3.10

|

||||||

|

services:

|

||||||

|

- docker

|

||||||

|

script:

|

||||||

|

- docker run -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=https://bitbucket.org/$BITBUCKET_WORKSPACE/$BITBUCKET_REPO_SLUG/pull-requests/$BITBUCKET_PR_ID review

|

||||||

|

```

|

||||||

|

|

||||||

|

2. Add the following secure variables to your repository under Repository settings > Pipelines > Repository variables.

|

||||||

|

OPENAI_API_KEY: <your key>

|

||||||

|

BITBUCKET_BEARER_TOKEN: <your token>

|

||||||

|

|

||||||

|

You can get a Bitbucket token for your repository by following Repository Settings -> Security -> Access Tokens.

|

||||||

|

|

||||||

|

Note that comments on a PR are not supported in Bitbucket Pipeline.

|

||||||

|

|

||||||

|

|

||||||

|

### Run using CodiumAI-hosted Bitbucket app

|

||||||

|

|

||||||

|

Please contact <support@codium.ai> or visit [CodiumAI pricing page](https://www.codium.ai/pricing/) if you're interested in a hosted BitBucket app solution that provides full functionality including PR reviews and comment handling. It's based on the [bitbucket_app.py](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/git_providers/bitbucket_provider.py) implementation.

|

||||||

|

|

||||||

|

|

||||||

|

### Bitbucket Server and Data Center

|

||||||

|

|

||||||

|

Log in to your on-prem instance of Bitbucket with your service account username and password.

|

||||||

|

Navigate to `Manage account`, `HTTP Access tokens`, `Create Token`.

|

||||||

|

Generate the token and add it to `.secrets.toml` under the `bitbucket_server` section:

|

||||||

|

|

||||||

|

```toml

|

||||||

|

[bitbucket_server]

|

||||||

|

bearer_token = "<your key>"

|

||||||

|

```

|

||||||

|

|

||||||

|

#### Run it as CLI

|

||||||

|

|

||||||

|

Modify `configuration.toml`:

|

||||||

|

|

||||||

|

```toml

|

||||||

|

git_provider="bitbucket_server"

|

||||||

|

```

|

||||||

|

|

||||||

|

and pass the Pull request URL:

|

||||||

|

```shell

|

||||||

|

python cli.py --pr_url https://git.onpreminstanceofbitbucket.com/projects/PROJECT/repos/REPO/pull-requests/1 review

|

||||||

|

```

|

||||||

|

|

||||||

|

#### Run it as service

|

||||||

|

|

||||||

|

To run pr-agent as a webhook, build the Docker image:

|

||||||

|

```

|

||||||

|

docker build . -t codiumai/pr-agent:bitbucket_server_webhook --target bitbucket_server_webhook -f docker/Dockerfile

|

||||||

|

docker push codiumai/pr-agent:bitbucket_server_webhook # Push to your Docker repository

|

||||||

|

```

|

||||||

|

|

||||||

|

Navigate to `Projects` or `Repositories`, `Settings`, `Webhooks`, `Create Webhook`.

|

||||||

|

Fill in the name and URL, set Authentication to None, and select the Pull Request Opened checkbox to receive that event as a webhook.

|

||||||

|

|

||||||

|

The URL should end with `/webhook`, for example: https://domain.com/webhook

|

||||||

|

|

||||||

|

=======

|

||||||

@ -1,52 +1,42 @@

|

|||||||

## PR Compression Strategy

|

# PR Compression Strategy

|

||||||

There are two scenarios:

|

There are two scenarios:

|

||||||

|

|

||||||

1. The PR is small enough to fit in a single prompt (including system and user prompt)

|

1. The PR is small enough to fit in a single prompt (including system and user prompt)

|

||||||

2. The PR is too large to fit in a single prompt (including system and user prompt)

|

2. The PR is too large to fit in a single prompt (including system and user prompt)

|

||||||

|

|

||||||

For both scenarios, we first use the following strategy

|

For both scenarios, we first use the following strategy

|

||||||

|

|

||||||

#### Repo language prioritization strategy

|

#### Repo language prioritization strategy

|

||||||

We prioritize the languages of the repo based on the following criteria:

|

|

||||||

|

|

||||||

|

We prioritize the languages of the repo based on the following criteria:

|

||||||

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

||||||

2. Given the main languages used in the repo

|

2. Given the main languages used in the repo

|

||||||

3. We sort the PR files by the most common languages in the repo (in descending order):

|

2. We sort the PR files by the most common languages in the repo (in descending order):

|

||||||

* ```[[file.py, file2.py],[file3.js, file4.jsx],[readme.md]]```

|

* ```[[file.py, file2.py],[file3.js, file4.jsx],[readme.md]]```
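
The grouping described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `EXTENSION_LANGUAGE` table, the function name, and the shape of `repo_language_share` (language name mapped to its share of the repo) are all hypothetical, and files with unknown extensions are simply treated as non-code.

```python
from collections import defaultdict
from pathlib import PurePosixPath
from typing import Dict, List

# Hypothetical extension table; a real one would cover far more languages.
EXTENSION_LANGUAGE = {
    ".py": "Python",
    ".js": "JavaScript",
    ".jsx": "JavaScript",
    ".md": "Markdown",
}


def group_by_language_priority(
    files: List[str], repo_language_share: Dict[str, float]
) -> List[List[str]]:
    """Group PR files by language, most common repo language first."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name in files:
        language = EXTENSION_LANGUAGE.get(PurePosixPath(name).suffix.lower())
        if language is None:
            continue  # binary or non-code file, excluded
        groups[language].append(name)
    ordered = sorted(
        groups, key=lambda lang: repo_language_share.get(lang, 0.0), reverse=True
    )
    return [groups[lang] for lang in ordered]


result = group_by_language_priority(
    ["readme.md", "file3.js", "file.py", "logo.png", "file4.jsx", "file2.py"],
    {"Python": 60.0, "JavaScript": 30.0, "Markdown": 10.0},
)
```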

|

||||||

|

|

||||||

|

|

||||||

### Small PR

|

## Small PR

|

||||||

In this case, we can fit the entire PR in a single prompt:

|

In this case, we can fit the entire PR in a single prompt:

|

||||||

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

||||||

2. We Expand the surrounding context of each patch to 3 lines above and below the patch

|

2. We Expand the surrounding context of each patch to 3 lines above and below the patch

|

||||||

|

## Large PR

|

||||||

|

|

||||||

### Large PR

|

### Motivation

|

||||||

|

|

||||||

#### Motivation

|

|

||||||

Pull Requests can be very long and contain a lot of information with varying degree of relevance to the pr-agent.

|

Pull Requests can be very long and contain a lot of information with varying degree of relevance to the pr-agent.

|

||||||

We want to be able to pack as much information as possible in a single LLM prompt, while keeping the information relevant to the pr-agent.

|

We want to be able to pack as much information as possible in a single LLM prompt, while keeping the information relevant to the pr-agent.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

#### Compression strategy

|

#### Compression strategy

|

||||||

We prioritize additions over deletions:

|

We prioritize additions over deletions:

|

||||||

- Combine all deleted files into a single list (`deleted files`)

|

- Combine all deleted files into a single list (`deleted files`)

|

||||||

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch

|

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch
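
To make the second bullet concrete, here is a small sketch that removes deletion-only hunks from a unified-diff patch. It assumes the patch text contains only hunks (each starting with an `@@` header, no `---`/`+++` file header lines), and the function names are invented for this example rather than taken from pr-agent.

```python
from typing import List


def split_hunks(patch: str) -> List[List[str]]:
    """Split a patch into hunks; each hunk starts at an '@@' header line."""
    hunks: List[List[str]] = []
    for line in patch.splitlines():
        if line.startswith("@@"):
            hunks.append([line])
        elif hunks:
            hunks[-1].append(line)
    return hunks


def is_deletion_only(hunk: List[str]) -> bool:
    """True when the hunk removes lines and adds none."""
    body = hunk[1:]  # skip the '@@' header
    has_removed = any(line.startswith("-") for line in body)
    has_added = any(line.startswith("+") for line in body)
    return has_removed and not has_added


def drop_deletion_only_hunks(patch: str) -> str:
    kept = [hunk for hunk in split_hunks(patch) if not is_deletion_only(hunk)]
    return "\n".join(line for hunk in kept for line in hunk)


sample_patch = "\n".join(
    [
        "@@ -1,3 +1,2 @@",
        " import os",
        "-import sys",
        " import re",
        "@@ -10,2 +9,3 @@",
        " def main():",
        "+    print('hi')",
        "     return 0",
    ]
)
filtered = drop_deletion_only_hunks(sample_patch)
```

Hunks that mix additions and deletions are kept whole, since the removed lines there give context for what was added.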

|

||||||

|

|

||||||

#### Adaptive and token-aware file patch fitting

|

#### Adaptive and token-aware file patch fitting

|

||||||

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

||||||

|

|

||||||

1. Within each language we sort the files by the number of tokens in the file (in descending order):

|

1. Within each language we sort the files by the number of tokens in the file (in descending order):

|

||||||

- ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

||||||

2. Iterate through the patches in the order described above

|

2. Iterate through the patches in the order described above

|

||||||

3. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

||||||

4. If there are still patches left, add the remaining patches as a list called `other modified files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

3. If there are still patches left, add the remaining patches as a list called `other modified files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

||||||

5. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

||||||
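
The fitting steps above can be sketched roughly as below. This is an illustration under stated assumptions, not the project's real code: `FilePatch`, `fit_patches`, and the `buffer` parameter are hypothetical names, and `count_tokens` is a whitespace-splitting stand-in so the sketch runs without dependencies (a tiktoken-based counter could be passed in through the `count` argument instead). Whether a patch that does not fit ends the loop or is merely skipped is not specified in the text; the sketch skips it and keeps going.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FilePatch:
    filename: str
    patch: str


def count_tokens(text: str) -> int:
    # Stand-in tokenizer (assumption): one token per whitespace-separated word.
    return len(text.split())


def fit_patches(
    files_by_language: list[list[FilePatch]],
    deleted_files: list[str],
    max_tokens: int,
    buffer: int,
    count: Callable[[str], int] = count_tokens,
) -> dict[str, list[str]]:
    used = 0
    full, other_modified, deleted = [], [], []

    # Step 1: within each language group, sort by token count, largest first.
    ordered = [
        f
        for group in files_by_language
        for f in sorted(group, key=lambda f: count(f.patch), reverse=True)
    ]

    # Steps 2-3: add full patches while staying a buffer below the max length.
    soft_limit = max_tokens - buffer
    remaining = []
    for f in ordered:
        cost = count(f.patch)
        if used + cost <= soft_limit:
            full.append(f.filename)
            used += cost
        else:
            remaining.append(f)

    # Step 4: list leftover files by name only, up to the hard limit.
    for f in remaining:
        cost = count(f.filename)
        if used + cost > max_tokens:
            break
        other_modified.append(f.filename)
        used += cost

    # Step 5: list deleted files by name, up to the hard limit.
    for name in deleted_files:
        cost = count(name)
        if used + cost > max_tokens:
            break
        deleted.append(name)
        used += cost

    return {"patches": full, "other modified files": other_modified, "deleted files": deleted}
```

Leaving a buffer below the maximum reserves room for the two name-only lists, which cost far fewer tokens than full patches.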

|

|

||||||

#### Example

|

|

||||||

|

|

||||||

|

### Example

|

||||||

<kbd><img src=https://codium.ai/images/git_patch_logic.png width="768"></kbd>

|

<kbd><img src=https://codium.ai/images/git_patch_logic.png width="768"></kbd>

|

||||||

|

|

||||||

## YAML Prompting

|

|

||||||

TBD

|

|

||||||

|

|

||||||

## Static Code Analysis 💎

|

|

||||||

TBD

|

|

||||||

273

README.md

@ -19,150 +19,45 @@ Making pull requests less painful with an AI agent

|

|||||||

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

|

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

|

||||||

</a>

|

</a>

|

||||||

</div>

|

</div>

|

||||||

|

|

||||||

## Table of Contents

|

|

||||||

- [News and Updates](#news-and-updates)

|

|

||||||

- [Overview](#overview)

|

|

||||||

- [Example results](#example-results)

|

|

||||||

- [Try it now](#try-it-now)

|

|

||||||

- [Installation](#installation)

|

|

||||||

- [PR-Agent Pro 💎](#pr-agent-pro-)

|

|

||||||

- [How it works](#how-it-works)

|

|

||||||

- [Why use PR-Agent?](#why-use-pr-agent)

|

|

||||||

|

|

||||||

## News and Updates

|

|

||||||

|

|

||||||

### Jan 10, 2024

|

|

||||||

- A new [knowledge-base website](https://pr-agent-docs.codium.ai/) for PR-Agent is now available. It includes detailed information about the different tools, usage guides and more, in an accessible and organized format.

|

|

||||||

|

|

||||||

### Jan 8, 2024

|

|

||||||

|

|

||||||

- A new tool, [Find Similar Code](https://pr-agent-docs.codium.ai/tools/similar_code/) 💎 is now available.

|

|

||||||

<br>This tool retrieves the most similar code components from inside the organization's codebase, or from open-source code:

|

|

||||||

|

|

||||||

<kbd><a href="https://codium.ai/images/pr_agent/similar_code.mp4"><img src="https://codium.ai/images/pr_agent/similar_code_global2.png" width="512"></a></kbd>

|

|

||||||

|

|

||||||

(click on the image to see an instructional video)

|

|

||||||

|

|

||||||

### Feb 29, 2024

|

|

||||||

- You can now use the repo's [wiki page](https://pr-agent-docs.codium.ai/usage-guide/configuration_options/) to set configurations for PR-Agent 💎

|

|

||||||

|

|

||||||

<kbd><img src="https://codium.ai/images/pr_agent/wiki_configuration.png" width="512"></kbd>

|

|

||||||

|

|

||||||

|

|

||||||

## Overview

|

|

||||||

<div style="text-align:left;">

|

<div style="text-align:left;">

|

||||||

|

|

||||||

CodiumAI PR-Agent is an open-source tool to help efficiently review and handle pull requests.

|

CodiumAI `PR-Agent` is an open-source tool for efficient pull request reviewing and handling. It automatically analyzes the pull request and can provide several types of commands:

|

||||||

|

|

||||||

- See the [Installation Guide](https://pr-agent-docs.codium.ai/installation/) for instructions on installing and running the tool on different git platforms.

|

‣ **Auto Description ([`/describe`](./docs/DESCRIBE.md))**: Automatically generating PR description - title, type, summary, code walkthrough and labels.

|

||||||

|

\

|

||||||

|

‣ **Auto Review ([`/review`](./docs/REVIEW.md))**: Adjustable feedback about the PR main theme, type, relevant tests, security issues, score, and various suggestions for the PR content.

|

||||||

|

\

|

||||||

|

‣ **Question Answering ([`/ask ...`](./docs/ASK.md))**: Answering free-text questions about the PR.

|

||||||

|

\

|

||||||

|

‣ **Code Suggestions ([`/improve`](./docs/IMPROVE.md))**: Committable code suggestions for improving the PR.

|

||||||

|

\

|

||||||

|

‣ **Update Changelog ([`/update_changelog`](./docs/UPDATE_CHANGELOG.md))**: Automatically updating the CHANGELOG.md file with the PR changes.

|

||||||

|

\

|

||||||

|

‣ **Find Similar Issue ([`/similar_issue`](./docs/SIMILAR_ISSUE.md))**: Automatically retrieves and presents similar issues.

|

||||||

|

\

|

||||||

|

‣ **Add Documentation ([`/add_docs`](./docs/ADD_DOCUMENTATION.md))**: Automatically adds documentation to un-documented functions/classes in the PR.

|

||||||

|

\

|

||||||

|

‣ **Generate Custom Labels ([`/generate_labels`](./docs/GENERATE_CUSTOM_LABELS.md))**: Automatically suggests custom labels based on the PR code changes.

|

||||||

|

|

||||||

- See the [Usage Guide](https://pr-agent-docs.codium.ai/usage-guide/) for instructions on running the PR-Agent commands via different interfaces, including _CLI_, _online usage_, or by _automatically triggering_ them when a new PR is opened.

|

See the [Installation Guide](./INSTALL.md) for instructions on installing and running the tool on different git platforms.

|

||||||

|

|

||||||

- See the [Tools Guide](https://pr-agent-docs.codium.ai/tools/) for a detailed description of the different tools.

|

See the [Usage Guide](./Usage.md) for running the PR-Agent commands via different interfaces, including _CLI_, _online usage_, or by _automatically triggering_ them when a new PR is opened.

|

||||||

|

|

||||||

Supported commands per platform:

|

See the [Tools Guide](./docs/TOOLS_GUIDE.md) for detailed description of the different tools (tools are run via the commands).

|

||||||

|

|

||||||

| | | GitHub | Gitlab | Bitbucket | Azure DevOps |

|

<h3>Example results:</h3>

|

||||||

|-------|-------------------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:--------------------:|

|

|

||||||

| TOOLS | Review | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | ⮑ Incremental | :white_check_mark: | | | |

|

|

||||||

| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance) 💎 | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Describe | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | ⮑ [Inline File Summary](https://pr-agent-docs.codium.ai/tools/describe#inline-file-summary) 💎 | :white_check_mark: | | | |

|

|

||||||

| | Improve | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | ⮑ Extended | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Ask | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | ⮑ [Ask on code lines](https://pr-agent-docs.codium.ai/tools/ask#ask-lines) | :white_check_mark: | :white_check_mark: | | |

|

|

||||||

| | [Custom Suggestions](https://pr-agent-docs.codium.ai/tools/custom_suggestions/) 💎 | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | [Test](https://pr-agent-docs.codium.ai/tools/test/) 💎 | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

|

||||||

| | Reflect and Review | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Update CHANGELOG.md | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Find Similar Issue | :white_check_mark: | | | |

|

|

||||||

| | [Add PR Documentation](https://pr-agent-docs.codium.ai/tools/documentation/) 💎 | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

|

||||||

| | [Custom Labels](https://pr-agent-docs.codium.ai/tools/custom_labels/) 💎 | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

|

||||||

| | [Analyze](https://pr-agent-docs.codium.ai/tools/analyze/) 💎 | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

|

||||||

| | [CI Feedback](https://pr-agent-docs.codium.ai/tools/ci_feedback/) 💎 | :white_check_mark: | | | |

|

|

||||||

| | [Similar Code](https://pr-agent-docs.codium.ai/tools/similar_code/) 💎 | :white_check_mark: | | | |

|

|

||||||

| | | | | | |

|

|

||||||

| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | App / webhook | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Tagging bot | :white_check_mark: | | | |

|

|

||||||

| | Actions | :white_check_mark: | | :white_check_mark: | |

|

|

||||||

| | | | | | |

|

|

||||||

| CORE | PR compression | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Repo language prioritization | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Adaptive and token-aware file patch fitting | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | Multiple models support | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | [Static code analysis](https://pr-agent-docs.codium.ai/core-abilities/#static-code-analysis) 💎 | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | [Global and wiki configurations](https://pr-agent-docs.codium.ai/usage-guide/configuration_options/) 💎 | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

|

||||||

| | [PR interactive actions](https://www.codium.ai/images/pr_agent/pr-actions.mp4) 💎 | :white_check_mark: | | | |

|

|

||||||

- 💎 means this feature is available only in [PR-Agent Pro](https://www.codium.ai/pricing/)

|

|

||||||

|

|

||||||

[//]: # (- Support for additional git providers is described in [here](./docs/Full_environments.md))

|

|

||||||

___

|

|

||||||

|

|

||||||

‣ **Auto Description ([`/describe`](https://pr-agent-docs.codium.ai/tools/describe/))**: Automatically generating PR description - title, type, summary, code walkthrough and labels.

|

|

||||||

\

|

|

||||||

‣ **Auto Review ([`/review`](https://pr-agent-docs.codium.ai/tools/review/))**: Adjustable feedback about the PR, possible issues, security concerns, review effort and more.

|

|

||||||

\

|

|

||||||

‣ **Code Suggestions ([`/improve`](https://pr-agent-docs.codium.ai/tools/improve/))**: Code suggestions for improving the PR.

|

|

||||||

\

|

|

||||||

‣ **Question Answering ([`/ask ...`](https://pr-agent-docs.codium.ai/tools/ask/))**: Answering free-text questions about the PR.

|

|

||||||

\

|

|

||||||

‣ **Update Changelog ([`/update_changelog`](https://pr-agent-docs.codium.ai/tools/update_changelog/))**: Automatically updating the CHANGELOG.md file with the PR changes.

|

|

||||||

\

|

|

||||||

‣ **Find Similar Issue ([`/similar_issue`](https://pr-agent-docs.codium.ai/tools/similar_issues/))**: Automatically retrieves and presents similar issues.

|

|

||||||

\

|

|

||||||

‣ **Add Documentation 💎 ([`/add_docs`](https://pr-agent-docs.codium.ai/tools/documentation/))**: Generates documentation to methods/functions/classes that changed in the PR.

|

|

||||||

\

|

|

||||||

‣ **Generate Custom Labels 💎 ([`/generate_labels`](https://pr-agent-docs.codium.ai/tools/custom_labels/))**: Generates custom labels for the PR, based on specific guidelines defined by the user.

|

|

||||||

\

|

|

||||||

‣ **Analyze 💎 ([`/analyze`](https://pr-agent-docs.codium.ai/tools/analyze/))**: Identify code components that changed in the PR, and enables to interactively generate tests, docs, and code suggestions for each component.

|

|

||||||

\

|

|

||||||

‣ **Custom Suggestions 💎 ([`/custom_suggestions`](https://pr-agent-docs.codium.ai/tools/custom_suggestions/))**: Automatically generates custom suggestions for improving the PR code, based on specific guidelines defined by the user.

|

|

||||||

\

|

|

||||||

‣ **Generate Tests 💎 ([`/test component_name`](https://pr-agent-docs.codium.ai/tools/test/))**: Generates unit tests for a selected component, based on the PR code changes.

|

|

||||||

\

|

|

||||||

‣ **CI Feedback 💎 ([`/checks ci_job`](https://pr-agent-docs.codium.ai/tools/ci_feedback/))**: Automatically generates feedback and analysis for a failed CI job.

|

|

||||||

\

|

|

||||||

‣ **Similar Code 💎 ([`/find_similar_component`](https://pr-agent-docs.codium.ai/tools/similar_code//))**: Retrieves the most similar code components from inside the organization's codebase, or from open-source code.

|

|

||||||

___

|

|

||||||

|

|

||||||

## Example results

|

|

||||||

</div>

|

</div>

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/530">/describe</a></h4>

|

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/229#issuecomment-1687561986">/describe:</a></h4>

|

||||||

<div align="center">

|

<div align="center">

|

||||||

<p float="center">

|

<p float="center">

|

||||||

<img src="https://www.codium.ai/images/pr_agent/describe_new_short_main.png" width="512">

|

<img src="https://www.codium.ai/images/describe-2.gif" width="800">

|

||||||

</p>

|

</p>

|

||||||

</div>

|

</div>

|

||||||

<hr>

|

|

||||||

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/732#issuecomment-1975099151">/review</a></h4>

|

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/229#issuecomment-1695021901">/review:</a></h4>

|

||||||

<div align="center">

|

<div align="center">

|

||||||

<p float="center">

|

<p float="center">

|

||||||

<kbd>

|

<img src="https://www.codium.ai/images/review-2.gif" width="800">

|

||||||

<img src="https://www.codium.ai/images/pr_agent/review_new_short_main.png" width="512">

|

|

||||||

</kbd>

|

|

||||||

</p>

|

|

||||||

</div>

|

|

||||||

<hr>

|

|

||||||

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/732#issuecomment-1975099159">/improve</a></h4>

|

|

||||||

<div align="center">

|

|

||||||

<p float="center">

|

|

||||||

<kbd>

|

|

||||||

<img src="https://www.codium.ai/images/pr_agent/improve_new_short_main.png" width="512">

|

|

||||||

</kbd>

|

|

||||||

</p>

|

|

||||||

</div>

|

|

||||||

<hr>

|

|

||||||

|

|

||||||

<h4><a href="https://github.com/Codium-ai/pr-agent/pull/530">/generate_labels</a></h4>

|

|

||||||

<div align="center">

|

|

||||||

<p float="center">

|

|

||||||

<kbd><img src="https://www.codium.ai/images/pr_agent/geneare_custom_labels_main_short.png" width="300"></kbd>

|

|

||||||

</p>

|

</p>

|

||||||

</div>

|

</div>

|

||||||

|

|

||||||

@ -203,11 +98,46 @@ ___

|

|||||||

[//]: # (</div>)

|

[//]: # (</div>)

|

||||||

<div align="left">

|

<div align="left">

|

||||||

|

|

||||||

|

## Table of Contents

|

||||||

|

- [Overview](#overview)

|

||||||

|

- [Try it now](#try-it-now)

|

||||||

|

- [Installation](#installation)

|

||||||

|

- [How it works](#how-it-works)

|

||||||

|

- [Why use PR-Agent?](#why-use-pr-agent)

|

||||||

|

- [Roadmap](#roadmap)

|

||||||

</div>

|

</div>

|

||||||

<hr>

|

|

||||||

|

|

||||||

|

|

||||||

|

## Overview

|

||||||

|

`PR-Agent` offers extensive pull request functionalities across various git providers:

|

||||||

|

| | | GitHub | Gitlab | Bitbucket | CodeCommit | Azure DevOps | Gerrit |

|

||||||

|

|-------|---------------------------------------------|:------:|:------:|:---------:|:----------:|:----------:|:----------:|

|

||||||

|

| TOOLS | Review | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | ⮑ Incremental | :white_check_mark: | | | | | |

|

||||||

|

| | Ask | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Auto-Description | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Improve Code | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

||||||

|

| | ⮑ Extended | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

||||||

|

| | Reflect and Review | :white_check_mark: | :white_check_mark: | :white_check_mark: | | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Update CHANGELOG.md | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | | |

|

||||||

|

| | Find similar issue | :white_check_mark: | | | | | |

|

||||||

|

| | Add Documentation | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | | :white_check_mark: |

|

||||||

|

| | Generate Labels | :white_check_mark: | :white_check_mark: | | | | |

|

||||||

|

| | | | | | | |

|

||||||

|

| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | App / webhook | :white_check_mark: | :white_check_mark: | | | |

|

||||||

|

| | Tagging bot | :white_check_mark: | | | | |

|

||||||

|

| | Actions | :white_check_mark: | | | | |

|

||||||

|

| | Web server | | | | | | :white_check_mark: |

|

||||||

|

| | | | | | | |

|

||||||

|

| CORE | PR compression | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Repo language prioritization | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Adaptive and token-aware<br />file patch fitting | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Multiple models support | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Incremental PR Review | :white_check_mark: | | | | | |

|

||||||

|

|

||||||

|

Review the [usage guide](./Usage.md) section for detailed instructions how to use the different tools, select the relevant git provider (GitHub, Gitlab, Bitbucket,...), and adjust the configuration file to your needs.

|

||||||

|

|

||||||

## Try it now

|

## Try it now

|

||||||

|

|

||||||

Try the GPT-4 powered PR-Agent instantly on _your public GitHub repository_. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment. The agent will generate a response based on your command.

|

Try the GPT-4 powered PR-Agent instantly on _your public GitHub repository_. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment. The agent will generate a response based on your command.

|

||||||

@ -220,42 +150,31 @@ and the agent will respond with a review of your PR

|

|||||||

|

|

||||||

|

|

||||||

|

|

||||||

To set up your own PR-Agent, see the [Installation](https://pr-agent-docs.codium.ai/installation/) section below.

|

To set up your own PR-Agent, see the [Installation](#installation) section below.

|

||||||

Note that when you set your own PR-Agent or use CodiumAI hosted PR-Agent, there is no need to mention `@CodiumAI-Agent ...`. Instead, directly start with the command, e.g., `/ask ...`.

|

Note that when you set your own PR-Agent or use CodiumAI hosted PR-Agent, there is no need to mention `@CodiumAI-Agent ...`. Instead, directly start with the command, e.g., `/ask ...`.

|

||||||

|

|

||||||

---

|

---

|

||||||

|

|

||||||

## Installation

|

## Installation

|

||||||

To use your own version of PR-Agent, you first need to acquire two tokens:

|

|

||||||

|

To get started with PR-Agent quickly, you first need to acquire two tokens:

|

||||||

|

|

||||||

1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.

|

1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.

|

||||||

2. A GitHub personal access token (classic) with the repo scope.

|

2. A GitHub personal access token (classic) with the repo scope.

|

||||||

|

|

||||||

There are several ways to use PR-Agent:

|

There are several ways to use PR-Agent:

|

||||||

|

|

||||||

**Locally**

|

- [Method 1: Use Docker image (no installation required)](INSTALL.md#method-1-use-docker-image-no-installation-required)

|

||||||

- [Use Docker image (no installation required)](https://pr-agent-docs.codium.ai/installation/locally/#use-docker-image-no-installation-required)

|

- [Method 2: Run from source](INSTALL.md#method-2-run-from-source)

|

||||||

- [Run from source](https://pr-agent-docs.codium.ai/installation/locally/#run-from-source)

|

- [Method 3: Run as a GitHub Action](INSTALL.md#method-3-run-as-a-github-action)

|

||||||

|

- [Method 4: Run as a polling server](INSTALL.md#method-4-run-as-a-polling-server)

|

||||||

**GitHub specific methods**

|

- Request reviews by tagging your GitHub user on a PR

|

||||||

- [Run as a GitHub Action](https://pr-agent-docs.codium.ai/installation/github/#run-as-a-github-action)

|

- [Method 5: Run as a GitHub App](INSTALL.md#method-5-run-as-a-github-app)

|

||||||

- [Run as a GitHub App](https://pr-agent-docs.codium.ai/installation/github/#run-as-a-github-app)

|

- Allowing you to automate the review process on your private or public repositories

|

||||||

|

- [Method 6: Deploy as a Lambda Function](INSTALL.md#method-6---deploy-as-a-lambda-function)

|

||||||

**GitLab specific methods**

|

- [Method 7: AWS CodeCommit](INSTALL.md#method-7---aws-codecommit-setup)

|

||||||

- [Run a GitLab webhook server](https://pr-agent-docs.codium.ai/installation/gitlab/)

|

- [Method 8: Run a GitLab webhook server](INSTALL.md#method-8---run-a-gitlab-webhook-server)

|

||||||

|

- [Method 9: Run as a Bitbucket Pipeline](INSTALL.md#method-9-run-as-a-bitbucket-pipeline)

|

||||||

**BitBucket specific methods**

|

|

||||||

- [Run as a Bitbucket Pipeline](https://pr-agent-docs.codium.ai/installation/bitbucket/)

|

|

||||||

|

|

||||||

## PR-Agent Pro 💎

|

|

||||||

[PR-Agent Pro](https://www.codium.ai/pricing/) is a hosted version of PR-Agent, provided by CodiumAI. It is available for a monthly fee, and provides the following benefits:

|

|

||||||

1. **Fully managed** - We take care of everything for you - hosting, models, regular updates, and more. Installation is as simple as signing up and adding the PR-Agent app to your GitHub\BitBucket repo.

|

|

||||||

2. **Improved privacy** - No data will be stored or used to train models. PR-Agent Pro will employ zero data retention, and will use an OpenAI account with zero data retention.

|

|

||||||

3. **Improved support** - PR-Agent Pro users will receive priority support, and will be able to request new features and capabilities.

|

|

||||||

4. **Extra features** - In addition to the benefits listed above, PR-Agent Pro will emphasize more customization, and the usage of static code analysis, in addition to LLM logic, to improve results.

|

|

||||||

See [here](https://pr-agent-docs.codium.ai/#pr-agent-pro) for a list of features available in PR-Agent Pro.

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

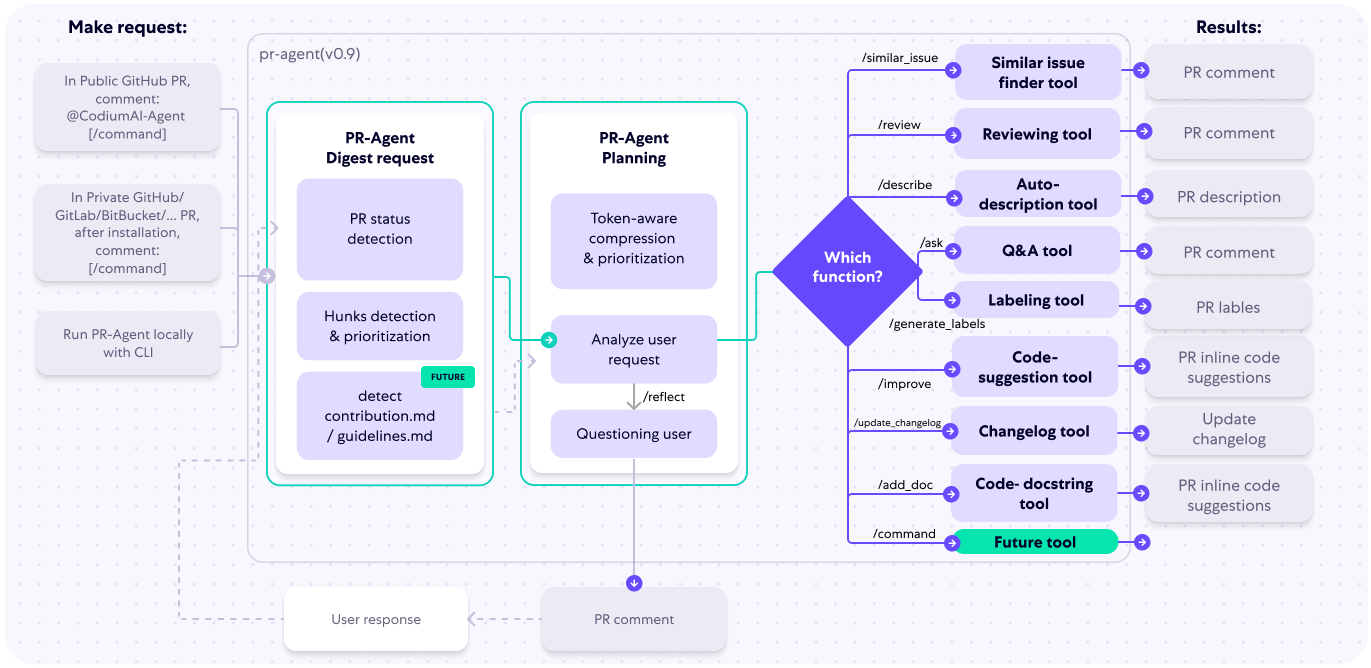

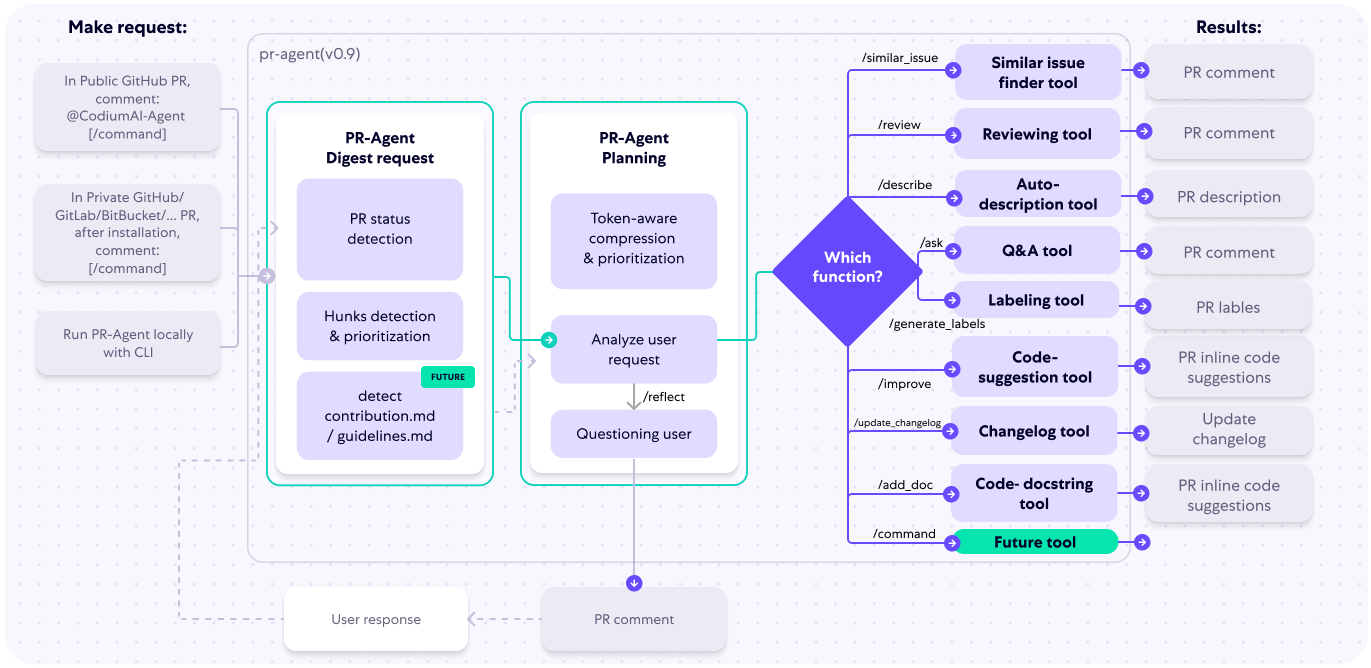

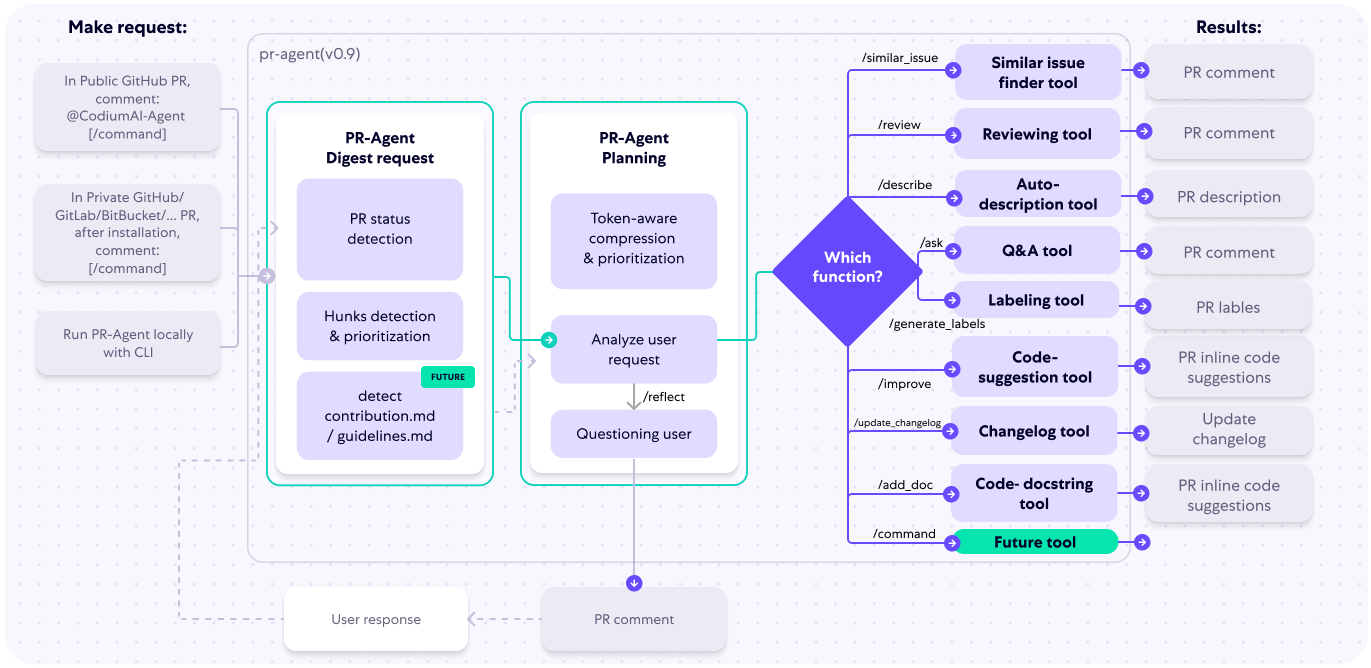

## How it works

|

## How it works

|

||||||

|

|

||||||

@ -263,26 +182,54 @@ The following diagram illustrates PR-Agent tools and their flow:

|

|||||||

|

|

||||||

|

|

||||||

|

|

||||||

Check out the [PR Compression strategy](https://pr-agent-docs.codium.ai/core-abilities/#pr-compression-strategy) page for more details on how we convert a code diff to a manageable LLM prompt

|

Check out the [PR Compression strategy](./PR_COMPRESSION.md) page for more details on how we convert a code diff to a manageable LLM prompt

|

||||||

|

|

||||||

## Why use PR-Agent?

|

## Why use PR-Agent?

|

||||||

|

|

||||||

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

|

A reasonable question that can be asked is: `"Why use PR-Agent? What make it stand out from existing tools?"`

|

||||||

|

|

||||||

Here are some advantages of PR-Agent:

|

Here are some advantages of PR-Agent:

|

||||||

|

|

||||||

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single GPT-4 call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single GPT-4 call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

||||||

- Our [PR Compression strategy](https://pr-agent-docs.codium.ai/core-abilities/#pr-compression-strategy) is a core ability that enables to effectively tackle both short and long PRs.

|

- Our [PR Compression strategy](./PR_COMPRESSION.md) is a core ability that enables to effectively tackle both short and long PRs.

|

||||||

- Our JSON prompting strategy enables to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.

|

- Our JSON prompting strategy enables to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.

|

||||||

- We support **multiple git providers** (GitHub, Gitlab, Bitbucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT-4, GPT-3.5, Anthropic, Cohere, Llama2).

|

- We support **multiple git providers** (GitHub, Gitlab, Bitbucket, CodeCommit), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT-4, GPT-3.5, Anthropic, Cohere, Llama2).

|

||||||

|

- We are open-source, and welcome contributions from the community.

|

||||||

|

|

||||||

|

|

||||||

## Data privacy

|

## Roadmap

|

||||||

|

|

||||||

If you host PR-Agent with your OpenAI API key, it is between you and OpenAI. You can read their API data privacy policy here:

|

- [x] Support additional models, as a replacement for OpenAI (see [here](https://github.com/Codium-ai/pr-agent/pull/172))

|

||||||

|

- [x] Develop additional logic for handling large PRs (see [here](https://github.com/Codium-ai/pr-agent/pull/229))

|

||||||

|

- [ ] Add additional context to the prompt. For example, repo (or relevant files) summarization, with tools such as [ctags](https://github.com/universal-ctags/ctags)

|

||||||

|

- [x] PR-Agent for issues

|

||||||

|

- [ ] Adding more tools. Possible directions:

|

||||||

|

- [x] PR description

|

||||||

|

- [x] Inline code suggestions

|

||||||

|

- [x] Reflect and review

|

||||||

|

- [x] Rank the PR (see [here](https://github.com/Codium-ai/pr-agent/pull/89))

|

||||||

|

- [ ] Enforcing CONTRIBUTING.md guidelines

|

||||||

|

- [ ] Performance (are there any performance issues)

|

||||||

|

- [x] Documentation (is the PR properly documented)

|

||||||

|

- [ ] ...

|

||||||

|

|

||||||

|