mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-07-12 16:50:37 +08:00

Compare commits

185 Commits

tr/static_

...

v0.25

| Author | SHA1 | Date | |

|---|---|---|---|

| 91bf3c0749 | |||

| 159155785e | |||

| eabc296246 | |||

| b44030114e | |||

| 1d6f87be3b | |||

| a7c6fa7bd2 | |||

| a825aec5f3 | |||

| 4df097c228 | |||

| 6871e1b27a | |||

| 4afe05761d | |||

| 7d1b6c2f0a | |||

| 3547cf2057 | |||

| f2043d639c | |||

| 6240de3898 | |||

| f08b20c667 | |||

| e64b468556 | |||

| d48d14dac7 | |||

| eb0c959ca9 | |||

| 741a70ad9d | |||

| 22ee03981e | |||

| b1336e7d08 | |||

| 751caca141 | |||

| 612004727c | |||

| 577ee0241d | |||

| a141ca133c | |||

| a14b6a580d | |||

| cc5005c490 | |||

| 3a5d0f54ce | |||

| cd8ba4f59f | |||

| fe27f96bf1 | |||

| 2c3aa7b2dc | |||

| c934523f2d | |||

| 2f4545dc15 | |||

| cbd490b3d7 | |||

| b07f96d26a | |||

| 065777040f | |||

| 9c82047dc3 | |||

| e0c15409bb | |||

| d956c72cb6 | |||

| dfb3d801cf | |||

| 5c5a3e267c | |||

| f9380c2440 | |||

| e6a1f14c0e | |||

| 6339845eb4 | |||

| 732cc18fd6 | |||

| 84d0f80c81 | |||

| ee26bf35c1 | |||

| 7a5e9102fd | |||

| a8c97bfa73 | |||

| af653a048f | |||

| d2663f959a | |||

| e650fe9ce9 | |||

| daeca42ae8 | |||

| 04496f9b0e | |||

| 0eacb3e35e | |||

| c5ed2f040a | |||

| c394fc2767 | |||

| 157251493a | |||

| 4a982a849d | |||

| 6e3544f523 | |||

| bf3ebbb95f | |||

| eb44ecb1be | |||

| 45bae48701 | |||

| b2181e4c79 | |||

| 5939d3b17b | |||

| c1f4964a55 | |||

| 022e407d84 | |||

| 93ba2d239a | |||

| fa49dd5167 | |||

| 16029e66ad | |||

| 7bd6713335 | |||

| ef3241285d | |||

| d9ef26dc1c | |||

| 02949b2b96 | |||

| d301c76b65 | |||

| dacb45dd8a | |||

| 443d06df06 | |||

| 15e8c988a4 | |||

| 60fab1b301 | |||

| 84c1c1b1ca | |||

| 7419a6d51a | |||

| ee58a92fb3 | |||

| 6b64924355 | |||

| 2f5e8472b9 | |||

| 852bb371af | |||

| 7c90e44656 | |||

| 81dea65856 | |||

| a3d572fb69 | |||

| 7186bf4bb3 | |||

| 115fca58a3 | |||

| cbf60ca636 | |||

| 64ac45d03b | |||

| db062e3e35 | |||

| e85472f367 | |||

| 597f1c6f83 | |||

| 66d4f56777 | |||

| fbfb9e0881 | |||

| 223b5408d7 | |||

| 509135a8d4 | |||

| 8db7151bf0 | |||

| b8cfcdbc12 | |||

| a3cd433184 | |||

| 0f284711e6 | |||

| 67b46e7f30 | |||

| 68f2cec077 | |||

| 8e94c8b2f5 | |||

| a221f8edd0 | |||

| 3b47c75c32 | |||

| 2e34d7a05a | |||

| 204a0a7912 | |||

| 9786499fa6 | |||

| 4f14742233 | |||

| c077c71fdb | |||

| 7b5a3d45bd | |||

| c6c6a9b4f0 | |||

| a5e7c37fcc | |||

| 12a9e13509 | |||

| 0b4b6b1589 | |||

| bf049381bd | |||

| 65c917b84b | |||

| b4700bd7c0 | |||

| a957554262 | |||

| d491a942cc | |||

| 6c55a2720a | |||

| f1d0401f82 | |||

| c5bd09e2c9 | |||

| c70acdc7cd | |||

| 1c6f7f9c06 | |||

| 0b32b253ca | |||

| 3efd2213f2 | |||

| 0705bd03c4 | |||

| 927d005e99 | |||

| 0dccfdbbf0 | |||

| dcb7b66fd7 | |||

| b7437147af | |||

| e82afdd2cb | |||

| 0946da3810 | |||

| d1f4069d3f | |||

| d45a892fd2 | |||

| 4a91b8ed8d | |||

| fb85cb721a | |||

| 3a52122677 | |||

| 13c6ed9098 | |||

| 9dd1995464 | |||

| eb804d0b34 | |||

| e0ee878e84 | |||

| 27abe48a34 | |||

| 8fe504a7ec | |||

| f6ba49819a | |||

| 22bf7af9ba | |||

| 840e8c4d6b | |||

| 49f8d86c77 | |||

| 05827d125b | |||

| 74ee9a333e | |||

| e9769fa602 | |||

| 3adff8cf4c | |||

| d249b47ce9 | |||

| 892d1ad15c | |||

| 76d95bb6d7 | |||

| 7db9a03805 | |||

| 4eef3e9190 | |||

| 014ea884d2 | |||

| c1c5ee7cfb | |||

| 3ac1ddc5d7 | |||

| e6c56c7355 | |||

| 727b08fde3 | |||

| 5d9d48dc82 | |||

| 8e8062fefc | |||

| 23a3e208a5 | |||

| bb84063ef2 | |||

| a476e85fa7 | |||

| 4b05a3e858 | |||

| cd158f24f6 | |||

| ada0a3d10f | |||

| ddf1afb23f | |||

| e2b5489495 | |||

| 6459535e39 | |||

| 5a719c1904 | |||

| 1a2ea2c87d | |||

| ca79bafab3 | |||

| 618224beef | |||

| 481c2a5985 | |||

| e21d9dc9e3 | |||

| 6872a7076b | |||

| c2ae429805 |

2

.github/workflows/build-and-test.yaml

vendored

2

.github/workflows/build-and-test.yaml

vendored

@ -37,5 +37,3 @@ jobs:

|

||||

name: Test dev docker

|

||||

run: |

|

||||

docker run --rm codiumai/pr-agent:test pytest -v tests/unittest

|

||||

|

||||

|

||||

|

||||

3

.github/workflows/pr-agent-review.yaml

vendored

3

.github/workflows/pr-agent-review.yaml

vendored

@ -30,6 +30,3 @@ jobs:

|

||||

GITHUB_ACTION_CONFIG.AUTO_DESCRIBE: true

|

||||

GITHUB_ACTION_CONFIG.AUTO_REVIEW: true

|

||||

GITHUB_ACTION_CONFIG.AUTO_IMPROVE: true

|

||||

|

||||

|

||||

|

||||

|

||||

17

.github/workflows/pre-commit.yml

vendored

Normal file

17

.github/workflows/pre-commit.yml

vendored

Normal file

@ -0,0 +1,17 @@

|

||||

# disabled. We might run it manually if needed.

|

||||

name: pre-commit

|

||||

|

||||

on:

|

||||

workflow_dispatch:

|

||||

# pull_request:

|

||||

# push:

|

||||

# branches: [main]

|

||||

|

||||

jobs:

|

||||

pre-commit:

|

||||

runs-on: ubuntu-latest

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

- uses: actions/setup-python@v5

|

||||

# SEE https://github.com/pre-commit/action

|

||||

- uses: pre-commit/action@v3.0.1

|

||||

@ -1,6 +1,3 @@

|

||||

[pr_reviewer]

|

||||

enable_review_labels_effort = true

|

||||

enable_auto_approval = true

|

||||

|

||||

[config]

|

||||

model="claude-3-5-sonnet"

|

||||

|

||||

46

.pre-commit-config.yaml

Normal file

46

.pre-commit-config.yaml

Normal file

@ -0,0 +1,46 @@

|

||||

# See https://pre-commit.com for more information

|

||||

# See https://pre-commit.com/hooks.html for more hooks

|

||||

|

||||

default_language_version:

|

||||

python: python3

|

||||

|

||||

repos:

|

||||

- repo: https://github.com/pre-commit/pre-commit-hooks

|

||||

rev: v5.0.0

|

||||

hooks:

|

||||

- id: check-added-large-files

|

||||

- id: check-toml

|

||||

- id: check-yaml

|

||||

- id: end-of-file-fixer

|

||||

- id: trailing-whitespace

|

||||

# - repo: https://github.com/rhysd/actionlint

|

||||

# rev: v1.7.3

|

||||

# hooks:

|

||||

# - id: actionlint

|

||||

- repo: https://github.com/pycqa/isort

|

||||

# rev must match what's in dev-requirements.txt

|

||||

rev: 5.13.2

|

||||

hooks:

|

||||

- id: isort

|

||||

# - repo: https://github.com/PyCQA/bandit

|

||||

# rev: 1.7.10

|

||||

# hooks:

|

||||

# - id: bandit

|

||||

# args: [

|

||||

# "-c", "pyproject.toml",

|

||||

# ]

|

||||

# - repo: https://github.com/astral-sh/ruff-pre-commit

|

||||

# rev: v0.7.1

|

||||

# hooks:

|

||||

# - id: ruff

|

||||

# args:

|

||||

# - --fix

|

||||

# - id: ruff-format

|

||||

# - repo: https://github.com/PyCQA/autoflake

|

||||

# rev: v2.3.1

|

||||

# hooks:

|

||||

# - id: autoflake

|

||||

# args:

|

||||

# - --in-place

|

||||

# - --remove-all-unused-imports

|

||||

# - --remove-unused-variables

|

||||

@ -1,10 +1,11 @@

|

||||

FROM python:3.10 as base

|

||||

FROM python:3.12 as base

|

||||

|

||||

WORKDIR /app

|

||||

ADD pyproject.toml .

|

||||

ADD requirements.txt .

|

||||

RUN pip install . && rm pyproject.toml requirements.txt

|

||||

ENV PYTHONPATH=/app

|

||||

ADD docs docs

|

||||

ADD pr_agent pr_agent

|

||||

ADD github_action/entrypoint.sh /

|

||||

RUN chmod +x /entrypoint.sh

|

||||

|

||||

54

README.md

54

README.md

@ -10,7 +10,7 @@

|

||||

|

||||

</picture>

|

||||

<br/>

|

||||

CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedback and suggestions

|

||||

Qode Merge PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedback and suggestions

|

||||

</div>

|

||||

|

||||

[](https://github.com/Codium-ai/pr-agent/blob/main/LICENSE)

|

||||

@ -25,9 +25,9 @@ CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by p

|

||||

</div>

|

||||

|

||||

### [Documentation](https://pr-agent-docs.codium.ai/)

|

||||

- See the [Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) for instructions on installing PR-Agent on different platforms.

|

||||

- See the [Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) for instructions on installing Qode Merge PR-Agent on different platforms.

|

||||

|

||||

- See the [Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) for instructions on running PR-Agent tools via different interfaces, such as CLI, PR Comments, or by automatically triggering them when a new PR is opened.

|

||||

- See the [Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) for instructions on running Qode Merge PR-Agent tools via different interfaces, such as CLI, PR Comments, or by automatically triggering them when a new PR is opened.

|

||||

|

||||

- See the [Tools Guide](https://qodo-merge-docs.qodo.ai/tools/) for a detailed description of the different tools, and the available configurations for each tool.

|

||||

|

||||

@ -43,45 +43,38 @@ CodiumAI PR-Agent aims to help efficiently review and handle pull requests, by p

|

||||

|

||||

## News and Updates

|

||||

|

||||

### September 21, 2024

|

||||

Need help with PR-Agent? New feature - simply comment `/help "your question"` in a pull request, and PR-Agent will provide you with the [relevant documentation](https://github.com/Codium-ai/pr-agent/pull/1241#issuecomment-2365259334).

|

||||

### December 2, 2024

|

||||

|

||||

<kbd><img src="https://www.codium.ai/images/pr_agent/pr_help_chat.png" width="768"></kbd>

|

||||

Open-source repositories can now freely use Qodo Merge Pro, and enjoy easy one-click installation using our dedicated [app](https://github.com/apps/qodo-merge-pro-for-open-source).

|

||||

|

||||

<kbd><img src="https://github.com/user-attachments/assets/b0838724-87b9-43b0-ab62-73739a3a855c" width="512"></kbd>

|

||||

|

||||

|

||||

### September 12, 2024

|

||||

[Dynamic context](https://pr-agent-docs.codium.ai/core-abilities/dynamic_context/) is now the default option for context extension.

|

||||

This feature enables PR-Agent to dynamically adjusting the relevant context for each code hunk, while avoiding overflowing the model with too much information.

|

||||

### November 18, 2024

|

||||

|

||||

### September 3, 2024

|

||||

A new mode was enabled by default for code suggestions - `--pr_code_suggestions.focus_only_on_problems=true`:

|

||||

|

||||

New version of PR-Agent, v0.24 was released. See the [release notes](https://github.com/Codium-ai/pr-agent/releases/tag/v0.24) for more information.

|

||||

- This option reduces the number of code suggestions received

|

||||

- The suggestions will focus more on identifying and fixing code problems, rather than style considerations like best practices, maintainability, or readability.

|

||||

- The suggestions will be categorized into just two groups: "Possible Issues" and "General".

|

||||

|

||||

### August 26, 2024

|

||||

Still, if you prefer the previous mode, you can set `--pr_code_suggestions.focus_only_on_problems=false` in the [configuration file](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/).

|

||||

|

||||

New version of [PR Agent Chrome Extension](https://chromewebstore.google.com/detail/pr-agent-chrome-extension/ephlnjeghhogofkifjloamocljapahnl) was released, with full support of context-aware **PR Chat**. This novel feature is free to use for any open-source repository. See more details in [here](https://pr-agent-docs.codium.ai/chrome-extension/#pr-chat).

|

||||

**Example results:**

|

||||

|

||||

<kbd><img src="https://www.codium.ai/images/pr_agent/pr_chat_1.png" width="768"></kbd>

|

||||

Original mode

|

||||

|

||||

<kbd><img src="https://www.codium.ai/images/pr_agent/pr_chat_2.png" width="768"></kbd>

|

||||

<kbd><img src="https://qodo.ai/images/pr_agent/code_suggestions_original_mode.png" width="512"></kbd>

|

||||

|

||||

Focused mode

|

||||

|

||||

<kbd><img src="https://qodo.ai/images/pr_agent/code_suggestions_focused_mode.png" width="512"></kbd>

|

||||

|

||||

|

||||

### August 11, 2024

|

||||

Increased PR context size for improved results, and enabled [asymmetric context](https://github.com/Codium-ai/pr-agent/pull/1114/files#diff-9290a3ad9a86690b31f0450b77acd37ef1914b41fabc8a08682d4da433a77f90R69-R70)

|

||||

|

||||

### August 10, 2024

|

||||

Added support for [Azure devops pipeline](https://pr-agent-docs.codium.ai/installation/azure/) - you can now easily run PR-Agent as an Azure devops pipeline, without needing to set up your own server.

|

||||

|

||||

|

||||

### August 5, 2024

|

||||

Added support for [GitLab pipeline](https://pr-agent-docs.codium.ai/installation/gitlab/#run-as-a-gitlab-pipeline) - you can now run easily PR-Agent as a GitLab pipeline, without needing to set up your own server.

|

||||

|

||||

### July 28, 2024

|

||||

|

||||

(1) improved support for bitbucket server - [auto commands](https://github.com/Codium-ai/pr-agent/pull/1059) and [direct links](https://github.com/Codium-ai/pr-agent/pull/1061)

|

||||

|

||||

(2) custom models are now [supported](https://pr-agent-docs.codium.ai/usage-guide/changing_a_model/#custom-models)

|

||||

### November 4, 2024

|

||||

|

||||

Qodo Merge PR Agent will now leverage context from Jira or GitHub tickets to enhance the PR Feedback. Read more about this feature

|

||||

[here](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/)

|

||||

|

||||

|

||||

## Overview

|

||||

@ -93,7 +86,6 @@ Supported commands per platform:

|

||||

|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:------------:|

|

||||

| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Incremental | ✅ | | | |

|

||||

| | ⮑ [SOC2 Compliance](https://pr-agent-docs.codium.ai/tools/review/#soc2-ticket-compliance) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | Describe | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ [Inline File Summary](https://pr-agent-docs.codium.ai/tools/describe#inline-file-summary) 💎 | ✅ | | | |

|

||||

| | Improve | ✅ | ✅ | ✅ | ✅ |

|

||||

|

||||

@ -1,9 +1,9 @@

|

||||

FROM python:3.12.3 AS base

|

||||

|

||||

WORKDIR /app

|

||||

ADD docs/chroma_db.zip /app/docs/chroma_db.zip

|

||||

ADD pyproject.toml .

|

||||

ADD requirements.txt .

|

||||

ADD docs docs

|

||||

RUN pip install . && rm pyproject.toml requirements.txt

|

||||

ENV PYTHONPATH=/app

|

||||

|

||||

|

||||

Binary file not shown.

Binary file not shown.

|

Before Width: | Height: | Size: 15 KiB After Width: | Height: | Size: 4.2 KiB |

@ -1,140 +1 @@

|

||||

<?xml version="1.0" encoding="utf-8"?>

|

||||

<!-- Generator: Adobe Illustrator 28.1.0, SVG Export Plug-In . SVG Version: 6.00 Build 0) -->

|

||||

<svg version="1.1" id="Layer_1" xmlns="http://www.w3.org/2000/svg" xmlns:xlink="http://www.w3.org/1999/xlink" x="0px" y="0px"

|

||||

width="64px" height="64px" viewBox="0 0 64 64" enable-background="new 0 0 64 64" xml:space="preserve">

|

||||

<g>

|

||||

<defs>

|

||||

<rect id="SVGID_1_" x="0.4" y="0.1" width="63.4" height="63.4"/>

|

||||

</defs>

|

||||

<clipPath id="SVGID_00000008836131916906499950000015813697852011234749_">

|

||||

<use xlink:href="#SVGID_1_" overflow="visible"/>

|

||||

</clipPath>

|

||||

<g clip-path="url(#SVGID_00000008836131916906499950000015813697852011234749_)">

|

||||

<path fill="#05E5AD" d="M21.4,9.8c3,0,5.9,0.7,8.5,1.9c-5.7,3.4-9.8,11.1-9.8,20.1c0,9,4,16.7,9.8,20.1c-2.6,1.2-5.5,1.9-8.5,1.9

|

||||

c-11.6,0-21-9.8-21-22S9.8,9.8,21.4,9.8z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000150822754378345238340000008985053211526864828_" cx="-140.0905" cy="350.1757" r="4.8781" gradientTransform="matrix(-4.7708 -6.961580e-02 -0.1061 7.2704 -601.3099 -2523.8489)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#6447FF"/>

|

||||

<stop offset="6.666670e-02" style="stop-color:#6348FE"/>

|

||||

<stop offset="0.1333" style="stop-color:#614DFC"/>

|

||||

<stop offset="0.2" style="stop-color:#5C54F8"/>

|

||||

<stop offset="0.2667" style="stop-color:#565EF3"/>

|

||||

<stop offset="0.3333" style="stop-color:#4E6CEC"/>

|

||||

<stop offset="0.4" style="stop-color:#447BE4"/>

|

||||

<stop offset="0.4667" style="stop-color:#3A8DDB"/>

|

||||

<stop offset="0.5333" style="stop-color:#2F9FD1"/>

|

||||

<stop offset="0.6" style="stop-color:#25B1C8"/>

|

||||

<stop offset="0.6667" style="stop-color:#1BC0C0"/>

|

||||

<stop offset="0.7333" style="stop-color:#13CEB9"/>

|

||||

<stop offset="0.8" style="stop-color:#0DD8B4"/>

|

||||

<stop offset="0.8667" style="stop-color:#08DFB0"/>

|

||||

<stop offset="0.9333" style="stop-color:#06E4AE"/>

|

||||

<stop offset="1" style="stop-color:#05E5AD"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000150822754378345238340000008985053211526864828_)" d="M21.4,9.8c3,0,5.9,0.7,8.5,1.9

|

||||

c-5.7,3.4-9.8,11.1-9.8,20.1c0,9,4,16.7,9.8,20.1c-2.6,1.2-5.5,1.9-8.5,1.9c-11.6,0-21-9.8-21-22S9.8,9.8,21.4,9.8z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000022560571240417802950000012439139323268113305_" cx="-191.7649" cy="385.7387" r="4.8781" gradientTransform="matrix(-2.5514 -0.7616 -0.8125 2.7217 -130.733 -1180.2209)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#6447FF"/>

|

||||

<stop offset="6.666670e-02" style="stop-color:#6348FE"/>

|

||||

<stop offset="0.1333" style="stop-color:#614DFC"/>

|

||||

<stop offset="0.2" style="stop-color:#5C54F8"/>

|

||||

<stop offset="0.2667" style="stop-color:#565EF3"/>

|

||||

<stop offset="0.3333" style="stop-color:#4E6CEC"/>

|

||||

<stop offset="0.4" style="stop-color:#447BE4"/>

|

||||

<stop offset="0.4667" style="stop-color:#3A8DDB"/>

|

||||

<stop offset="0.5333" style="stop-color:#2F9FD1"/>

|

||||

<stop offset="0.6" style="stop-color:#25B1C8"/>

|

||||

<stop offset="0.6667" style="stop-color:#1BC0C0"/>

|

||||

<stop offset="0.7333" style="stop-color:#13CEB9"/>

|

||||

<stop offset="0.8" style="stop-color:#0DD8B4"/>

|

||||

<stop offset="0.8667" style="stop-color:#08DFB0"/>

|

||||

<stop offset="0.9333" style="stop-color:#06E4AE"/>

|

||||

<stop offset="1" style="stop-color:#05E5AD"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000022560571240417802950000012439139323268113305_)" d="M38,18.3c-2.1-2.8-4.9-5.1-8.1-6.6

|

||||

c2-1.2,4.2-1.9,6.6-1.9c2.2,0,4.3,0.6,6.2,1.7C40.8,12.9,39.2,15.3,38,18.3L38,18.3z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000143611122169386473660000017673587931016751800_" cx="-194.7918" cy="395.2442" r="4.8781" gradientTransform="matrix(-2.5514 -0.7616 -0.8125 2.7217 -130.733 -1172.9556)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#6447FF"/>

|

||||

<stop offset="6.666670e-02" style="stop-color:#6348FE"/>

|

||||

<stop offset="0.1333" style="stop-color:#614DFC"/>

|

||||

<stop offset="0.2" style="stop-color:#5C54F8"/>

|

||||

<stop offset="0.2667" style="stop-color:#565EF3"/>

|

||||

<stop offset="0.3333" style="stop-color:#4E6CEC"/>

|

||||

<stop offset="0.4" style="stop-color:#447BE4"/>

|

||||

<stop offset="0.4667" style="stop-color:#3A8DDB"/>

|

||||

<stop offset="0.5333" style="stop-color:#2F9FD1"/>

|

||||

<stop offset="0.6" style="stop-color:#25B1C8"/>

|

||||

<stop offset="0.6667" style="stop-color:#1BC0C0"/>

|

||||

<stop offset="0.7333" style="stop-color:#13CEB9"/>

|

||||

<stop offset="0.8" style="stop-color:#0DD8B4"/>

|

||||

<stop offset="0.8667" style="stop-color:#08DFB0"/>

|

||||

<stop offset="0.9333" style="stop-color:#06E4AE"/>

|

||||

<stop offset="1" style="stop-color:#05E5AD"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000143611122169386473660000017673587931016751800_)" d="M38,45.2c1.2,3,2.9,5.3,4.7,6.8

|

||||

c-1.9,1.1-4,1.7-6.2,1.7c-2.3,0-4.6-0.7-6.6-1.9C33.1,50.4,35.8,48.1,38,45.2L38,45.2z"/>

|

||||

<path fill="#684BFE" d="M20.1,31.8c0-9,4-16.7,9.8-20.1c3.2,1.5,6,3.8,8.1,6.6c-1.5,3.7-2.5,8.4-2.5,13.5s0.9,9.8,2.5,13.5

|

||||

c-2.1,2.8-4.9,5.1-8.1,6.6C24.1,48.4,20.1,40.7,20.1,31.8z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000147942998054305738810000004710078864578628519_" cx="-212.7358" cy="363.2475" r="4.8781" gradientTransform="matrix(-2.3342 -1.063 -1.623 3.5638 149.3813 -1470.1027)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#6447FF"/>

|

||||

<stop offset="6.666670e-02" style="stop-color:#6348FE"/>

|

||||

<stop offset="0.1333" style="stop-color:#614DFC"/>

|

||||

<stop offset="0.2" style="stop-color:#5C54F8"/>

|

||||

<stop offset="0.2667" style="stop-color:#565EF3"/>

|

||||

<stop offset="0.3333" style="stop-color:#4E6CEC"/>

|

||||

<stop offset="0.4" style="stop-color:#447BE4"/>

|

||||

<stop offset="0.4667" style="stop-color:#3A8DDB"/>

|

||||

<stop offset="0.5333" style="stop-color:#2F9FD1"/>

|

||||

<stop offset="0.6" style="stop-color:#25B1C8"/>

|

||||

<stop offset="0.6667" style="stop-color:#1BC0C0"/>

|

||||

<stop offset="0.7333" style="stop-color:#13CEB9"/>

|

||||

<stop offset="0.8" style="stop-color:#0DD8B4"/>

|

||||

<stop offset="0.8667" style="stop-color:#08DFB0"/>

|

||||

<stop offset="0.9333" style="stop-color:#06E4AE"/>

|

||||

<stop offset="1" style="stop-color:#05E5AD"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000147942998054305738810000004710078864578628519_)" d="M50.7,42.5c0.6,3.3,1.5,6.1,2.5,8

|

||||

c-1.8,2-3.8,3.1-6,3.1c-1.6,0-3.1-0.6-4.5-1.7C46.1,50.2,48.9,46.8,50.7,42.5L50.7,42.5z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000083770737908230256670000016126156495859285174_" cx="-208.5327" cy="357.2025" r="4.8781" gradientTransform="matrix(-2.3342 -1.063 -1.623 3.5638 149.3813 -1476.8097)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#6447FF"/>

|

||||

<stop offset="6.666670e-02" style="stop-color:#6348FE"/>

|

||||

<stop offset="0.1333" style="stop-color:#614DFC"/>

|

||||

<stop offset="0.2" style="stop-color:#5C54F8"/>

|

||||

<stop offset="0.2667" style="stop-color:#565EF3"/>

|

||||

<stop offset="0.3333" style="stop-color:#4E6CEC"/>

|

||||

<stop offset="0.4" style="stop-color:#447BE4"/>

|

||||

<stop offset="0.4667" style="stop-color:#3A8DDB"/>

|

||||

<stop offset="0.5333" style="stop-color:#2F9FD1"/>

|

||||

<stop offset="0.6" style="stop-color:#25B1C8"/>

|

||||

<stop offset="0.6667" style="stop-color:#1BC0C0"/>

|

||||

<stop offset="0.7333" style="stop-color:#13CEB9"/>

|

||||

<stop offset="0.8" style="stop-color:#0DD8B4"/>

|

||||

<stop offset="0.8667" style="stop-color:#08DFB0"/>

|

||||

<stop offset="0.9333" style="stop-color:#06E4AE"/>

|

||||

<stop offset="1" style="stop-color:#05E5AD"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000083770737908230256670000016126156495859285174_)" d="M42.7,11.5c1.4-1.1,2.9-1.7,4.5-1.7

|

||||

c2.2,0,4.3,1.1,6,3.1c-1,2-1.9,4.7-2.5,8C48.9,16.7,46.1,13.4,42.7,11.5L42.7,11.5z"/>

|

||||

<path fill="#684BFE" d="M38,45.2c2.8-3.7,4.4-8.4,4.4-13.5c0-5.1-1.7-9.8-4.4-13.5c1.2-3,2.9-5.3,4.7-6.8c3.4,1.9,6.2,5.3,8,9.5

|

||||

c-0.6,3.2-0.9,6.9-0.9,10.8s0.3,7.6,0.9,10.8c-1.8,4.3-4.6,7.6-8,9.5C40.8,50.6,39.2,48.2,38,45.2L38,45.2z"/>

|

||||

<path fill="#321BB2" d="M38,45.2c-1.5-3.7-2.5-8.4-2.5-13.5S36.4,22,38,18.3c2.8,3.7,4.4,8.4,4.4,13.5S40.8,41.5,38,45.2z"/>

|

||||

<path fill="#05E6AD" d="M53.2,12.9c1.1-2,2.3-3.1,3.6-3.1c3.9,0,7,9.8,7,22s-3.1,22-7,22c-1.3,0-2.6-1.1-3.6-3.1

|

||||

c3.4-3.8,5.7-10.8,5.7-18.8C58.8,23.8,56.6,16.8,53.2,12.9z"/>

|

||||

|

||||

<radialGradient id="SVGID_00000009565123575973598080000009335550354766300606_" cx="-7.8671" cy="278.2442" r="4.8781" gradientTransform="matrix(1.5187 0 0 -7.8271 69.237 2209.3281)" gradientUnits="userSpaceOnUse">

|

||||

<stop offset="0" style="stop-color:#05E5AD"/>

|

||||

<stop offset="0.32" style="stop-color:#05E5AD;stop-opacity:0"/>

|

||||

<stop offset="0.9028" style="stop-color:#6447FF"/>

|

||||

</radialGradient>

|

||||

<path fill="url(#SVGID_00000009565123575973598080000009335550354766300606_)" d="M53.2,12.9c1.1-2,2.3-3.1,3.6-3.1

|

||||

c3.9,0,7,9.8,7,22s-3.1,22-7,22c-1.3,0-2.6-1.1-3.6-3.1c3.4-3.8,5.7-10.8,5.7-18.8C58.8,23.8,56.6,16.8,53.2,12.9z"/>

|

||||

<path fill="#684BFE" d="M52.8,31.8c0-3.9-0.8-7.6-2.1-10.8c0.6-3.3,1.5-6.1,2.5-8c3.4,3.8,5.7,10.8,5.7,18.8c0,8-2.3,15-5.7,18.8

|

||||

c-1-2-1.9-4.7-2.5-8C52,39.3,52.8,35.7,52.8,31.8z"/>

|

||||

<path fill="#321BB2" d="M50.7,42.5c-0.6-3.2-0.9-6.9-0.9-10.8s0.3-7.6,0.9-10.8c1.3,3.2,2.1,6.9,2.1,10.8S52,39.3,50.7,42.5z"/>

|

||||

</g>

|

||||

</g>

|

||||

</svg>

|

||||

<?xml version="1.0" encoding="UTF-8"?><svg id="Layer_1" xmlns="http://www.w3.org/2000/svg" viewBox="0 0 109.77 81.94"><defs><style>.cls-1{fill:#7968fa;}.cls-1,.cls-2{stroke-width:0px;}.cls-2{fill:#5ae3ae;}</style></defs><path class="cls-2" d="m109.77,40.98c0,22.62-7.11,40.96-15.89,40.96-3.6,0-6.89-3.09-9.58-8.31,6.82-7.46,11.22-19.3,11.22-32.64s-4.4-25.21-11.22-32.67C86.99,3.09,90.29,0,93.89,0c8.78,0,15.89,18.33,15.89,40.97"/><path class="cls-1" d="m95.53,40.99c0,13.35-4.4,25.19-11.23,32.64-3.81-7.46-6.28-19.3-6.28-32.64s2.47-25.21,6.28-32.67c6.83,7.46,11.23,19.32,11.23,32.67"/><path class="cls-2" d="m55.38,78.15c-4.99,2.42-10.52,3.79-16.38,3.79C17.46,81.93,0,63.6,0,40.98S17.46,0,39,0C44.86,0,50.39,1.37,55.38,3.79c-9.69,6.47-16.43,20.69-16.43,37.19s6.73,30.7,16.43,37.17"/><path class="cls-1" d="m78.02,40.99c0,16.48-9.27,30.7-22.65,37.17-9.69-6.47-16.43-20.69-16.43-37.17S45.68,10.28,55.38,3.81c13.37,6.49,22.65,20.69,22.65,37.19"/><path class="cls-2" d="m84.31,73.63c-4.73,5.22-10.64,8.31-17.06,8.31-4.24,0-8.27-1.35-11.87-3.79,13.37-6.48,22.65-20.7,22.65-37.17,0,13.35,2.47,25.19,6.28,32.64"/><path class="cls-2" d="m84.31,8.31c-3.81,7.46-6.28,19.32-6.28,32.67,0-16.5-9.27-30.7-22.65-37.19,3.6-2.45,7.63-3.8,11.87-3.8,6.43,0,12.33,3.09,17.06,8.31"/></svg>

|

||||

|

||||

|

Before Width: | Height: | Size: 9.0 KiB After Width: | Height: | Size: 1.2 KiB |

BIN

docs/docs/assets/logo_.png

Normal file

BIN

docs/docs/assets/logo_.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 8.7 KiB |

@ -2,4 +2,3 @@ We take your code's security and privacy seriously:

|

||||

|

||||

- The Chrome extension will not send your code to any external servers.

|

||||

- For private repositories, we will first validate the user's identity and permissions. After authentication, we generate responses using the existing Qodo Merge Pro integration.

|

||||

|

||||

|

||||

115

docs/docs/core-abilities/fetching_ticket_context.md

Normal file

115

docs/docs/core-abilities/fetching_ticket_context.md

Normal file

@ -0,0 +1,115 @@

|

||||

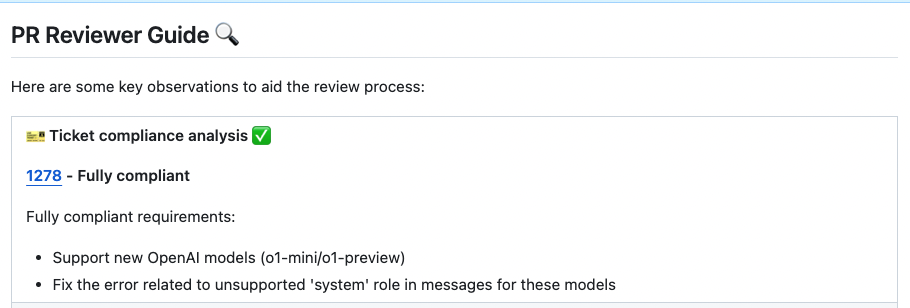

# Fetching Ticket Context for PRs

|

||||

## Overview

|

||||

Qodo Merge PR Agent streamlines code review workflows by seamlessly connecting with multiple ticket management systems.

|

||||

This integration enriches the review process by automatically surfacing relevant ticket information and context alongside code changes.

|

||||

|

||||

|

||||

## Affected Tools

|

||||

|

||||

Ticket Recognition Requirements:

|

||||

|

||||

1. The PR description should contain a link to the ticket.

|

||||

2. For Jira tickets, you should follow the instructions in [Jira Integration](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#jira-integration) in order to authenticate with Jira.

|

||||

|

||||

|

||||

### Describe tool

|

||||

Qodo Merge PR Agent will recognize the ticket and use the ticket content (title, description, labels) to provide additional context for the code changes.

|

||||

By understanding the reasoning and intent behind modifications, the LLM can offer more insightful and relevant code analysis.

|

||||

|

||||

### Review tool

|

||||

Similarly to the `describe` tool, the `review` tool will use the ticket content to provide additional context for the code changes.

|

||||

|

||||

In addition, this feature will evaluate how well a Pull Request (PR) adheres to its original purpose/intent as defined by the associated ticket or issue mentioned in the PR description.

|

||||

Each ticket will be assigned a label (Compliance/Alignment level), Indicates the degree to which the PR fulfills its original purpose, Options: Fully compliant, Partially compliant or Not compliant.

|

||||

|

||||

|

||||

{width=768}

|

||||

|

||||

By default, the tool will automatically validate if the PR complies with the referenced ticket.

|

||||

If you want to disable this feedback, add the following line to your configuration file:

|

||||

|

||||

```toml

|

||||

[pr_reviewer]

|

||||

require_ticket_analysis_review=false

|

||||

```

|

||||

|

||||

## Providers

|

||||

|

||||

### Github Issues Integration

|

||||

|

||||

Qodo Merge PR Agent will automatically recognize Github issues mentioned in the PR description and fetch the issue content.

|

||||

Examples of valid GitHub issue references:

|

||||

|

||||

- `https://github.com/<ORG_NAME>/<REPO_NAME>/issues/<ISSUE_NUMBER>`

|

||||

- `#<ISSUE_NUMBER>`

|

||||

- `<ORG_NAME>/<REPO_NAME>#<ISSUE_NUMBER>`

|

||||

|

||||

Since Qodo Merge PR Agent is integrated with GitHub, it doesn't require any additional configuration to fetch GitHub issues.

|

||||

|

||||

### Jira Integration 💎

|

||||

|

||||

We support both Jira Cloud and Jira Server/Data Center.

|

||||

To integrate with Jira, The PR Description should contain a link to the Jira ticket.

|

||||

|

||||

For Jira integration, include a ticket reference in your PR description using either the complete URL format `https://<JIRA_ORG>.atlassian.net/browse/ISSUE-123` or the shortened ticket ID `ISSUE-123`.

|

||||

|

||||

!!! note "Jira Base URL"

|

||||

If using the shortened format, ensure your configuration file contains the Jira base URL under the [jira] section like this:

|

||||

|

||||

```toml

|

||||

[jira]

|

||||

jira_base_url = "https://<JIRA_ORG>.atlassian.net"

|

||||

```

|

||||

|

||||

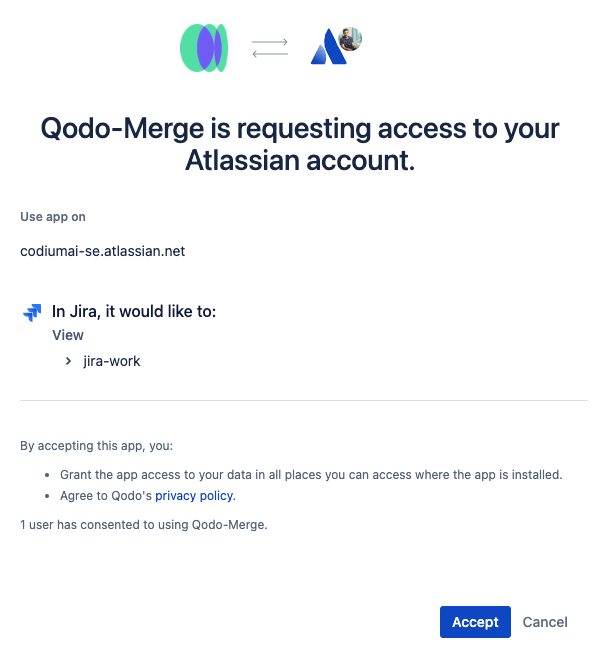

#### Jira Cloud 💎

|

||||

There are two ways to authenticate with Jira Cloud:

|

||||

|

||||

**1) Jira App Authentication**

|

||||

|

||||

The recommended way to authenticate with Jira Cloud is to install the Qodo Merge app in your Jira Cloud instance. This will allow Qodo Merge to access Jira data on your behalf.

|

||||

|

||||

Installation steps:

|

||||

|

||||

1. Click [here](https://auth.atlassian.com/authorize?audience=api.atlassian.com&client_id=8krKmA4gMD8mM8z24aRCgPCSepZNP1xf&scope=read%3Ajira-work%20offline_access&redirect_uri=https%3A%2F%2Fregister.jira.pr-agent.codium.ai&state=qodomerge&response_type=code&prompt=consent) to install the Qodo Merge app in your Jira Cloud instance, click the `accept` button.<br>

|

||||

{width=384}

|

||||

|

||||

2. After installing the app, you will be redirected to the Qodo Merge registration page. and you will see a success message.<br>

|

||||

{width=384}

|

||||

|

||||

3. Now you can use the Jira integration in Qodo Merge PR Agent.

|

||||

|

||||

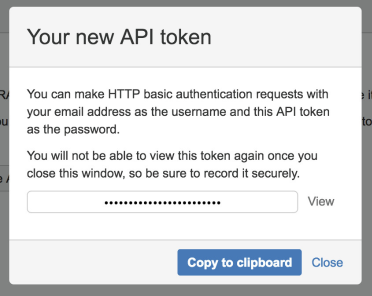

**2) Email/Token Authentication**

|

||||

|

||||

You can create an API token from your Atlassian account:

|

||||

|

||||

1. Log in to https://id.atlassian.com/manage-profile/security/api-tokens.

|

||||

|

||||

2. Click Create API token.

|

||||

|

||||

3. From the dialog that appears, enter a name for your new token and click Create.

|

||||

|

||||

4. Click Copy to clipboard.

|

||||

|

||||

{width=384}

|

||||

|

||||

5. In your [configuration file](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) add the following lines:

|

||||

|

||||

```toml

|

||||

[jira]

|

||||

jira_api_token = "YOUR_API_TOKEN"

|

||||

jira_api_email = "YOUR_EMAIL"

|

||||

```

|

||||

|

||||

|

||||

#### Jira Server/Data Center 💎

|

||||

|

||||

Currently, we only support the Personal Access Token (PAT) Authentication method.

|

||||

|

||||

1. Create a [Personal Access Token (PAT)](https://confluence.atlassian.com/enterprise/using-personal-access-tokens-1026032365.html) in your Jira account

|

||||

2. In your Configuration file/Environment variables/Secrets file, add the following lines:

|

||||

|

||||

```toml

|

||||

[jira]

|

||||

jira_base_url = "YOUR_JIRA_BASE_URL" # e.g. https://jira.example.com

|

||||

jira_api_token = "YOUR_API_TOKEN"

|

||||

```

|

||||

@ -1,6 +1,7 @@

|

||||

# Core Abilities

|

||||

Qodo Merge utilizes a variety of core abilities to provide a comprehensive and efficient code review experience. These abilities include:

|

||||

|

||||

- [Fetching ticket context](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/)

|

||||

- [Local and global metadata](https://qodo-merge-docs.qodo.ai/core-abilities/metadata/)

|

||||

- [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)

|

||||

- [Self-reflection](https://qodo-merge-docs.qodo.ai/core-abilities/self_reflection/)

|

||||

@ -10,3 +11,19 @@ Qodo Merge utilizes a variety of core abilities to provide a comprehensive and e

|

||||

- [Code-oriented YAML](https://qodo-merge-docs.qodo.ai/core-abilities/code_oriented_yaml/)

|

||||

- [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/)

|

||||

- [Code fine-tuning benchmark](https://qodo-merge-docs.qodo.ai/finetuning_benchmark/)

|

||||

|

||||

## Blogs

|

||||

|

||||

Here are some additional technical blogs from Qodo, that delve deeper into the core capabilities and features of Large Language Models (LLMs) when applied to coding tasks.

|

||||

These resources provide more comprehensive insights into leveraging LLMs for software development.

|

||||

|

||||

### Code Generation and LLMs

|

||||

- [State-of-the-art Code Generation with AlphaCodium – From Prompt Engineering to Flow Engineering](https://www.qodo.ai/blog/qodoflow-state-of-the-art-code-generation-for-code-contests/)

|

||||

- [RAG for a Codebase with 10k Repos](https://www.qodo.ai/blog/rag-for-large-scale-code-repos/)

|

||||

|

||||

### Development Processes

|

||||

- [Understanding the Challenges and Pain Points of the Pull Request Cycle](https://www.qodo.ai/blog/understanding-the-challenges-and-pain-points-of-the-pull-request-cycle/)

|

||||

- [Introduction to Code Coverage Testing](https://www.qodo.ai/blog/introduction-to-code-coverage-testing/)

|

||||

|

||||

### Cost Optimization

|

||||

- [Reduce Your Costs by 30% When Using GPT for Python Code](https://www.qodo.ai/blog/reduce-your-costs-by-30-when-using-gpt-3-for-python-code/)

|

||||

|

||||

@ -49,7 +49,7 @@ __old hunk__

|

||||

...

|

||||

```

|

||||

|

||||

(3) The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic-context/)).

|

||||

(3) The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)).

|

||||

|

||||

|

||||

(4) All the metadata described above represents several level of cumulative analysis - ranging from hunk level, to file level, to PR level, to organization level.

|

||||

|

||||

@ -46,6 +46,5 @@ This results in a more refined and valuable set of suggestions for the user, sav

|

||||

## Appendix - Relevant Configuration Options

|

||||

```

|

||||

[pr_code_suggestions]

|

||||

self_reflect_on_suggestions = true # Enable self-reflection on code suggestions

|

||||

suggestions_score_threshold = 0 # Filter out suggestions with a score below this threshold (0-10)

|

||||

```

|

||||

@ -29,7 +29,6 @@ Qodo Merge offers extensive pull request functionalities across various git prov

|

||||

|-------|-----------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|

|

||||

| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Incremental | ✅ | | | |

|

||||

| | ⮑ [SOC2 Compliance](https://qodo-merge-docs.qodo.ai/tools/review/#soc2-ticket-compliance){:target="_blank"} 💎 | ✅ | ✅ | ✅ | |

|

||||

| | Ask | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Describe | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ [Inline file summary](https://qodo-merge-docs.qodo.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | |

|

||||

|

||||

@ -51,10 +51,12 @@ stages:

|

||||

```

|

||||

This script will run Qodo Merge on every new merge request, with the `improve`, `review`, and `describe` commands.

|

||||

Note that you need to export the `azure_devops__pat` and `OPENAI_KEY` variables in the Azure DevOps pipeline settings (Pipelines -> Library -> + Variable group):

|

||||

|

||||

{width=468}

|

||||

|

||||

Make sure to give pipeline permissions to the `pr_agent` variable group.

|

||||

|

||||

> Note that Azure Pipelines lacks support for triggering workflows from PR comments. If you find a viable solution, please contribute it to our [issue tracker](https://github.com/Codium-ai/pr-agent/issues)

|

||||

|

||||

## Azure DevOps from CLI

|

||||

|

||||

|

||||

@ -3,7 +3,7 @@

|

||||

|

||||

You can use the Bitbucket Pipeline system to run Qodo Merge on every pull request open or update.

|

||||

|

||||

1. Add the following file in your repository bitbucket_pipelines.yml

|

||||

1. Add the following file in your repository bitbucket-pipelines.yml

|

||||

|

||||

```yaml

|

||||

pipelines:

|

||||

|

||||

@ -27,27 +27,6 @@ jobs:

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

```

|

||||

|

||||

|

||||

if you want to pin your action to a specific release (v0.23 for example) for stability reasons, use:

|

||||

```yaml

|

||||

...

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: docker://codiumai/pr-agent:0.23-github_action

|

||||

...

|

||||

```

|

||||

|

||||

For enhanced security, you can also specify the Docker image by its [digest](https://hub.docker.com/repository/docker/codiumai/pr-agent/tags):

|

||||

```yaml

|

||||

...

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: docker://codiumai/pr-agent@sha256:14165e525678ace7d9b51cda8652c2d74abb4e1d76b57c4a6ccaeba84663cc64

|

||||

...

|

||||

```

|

||||

|

||||

2) Add the following secret to your repository under `Settings > Secrets and variables > Actions > New repository secret > Add secret`:

|

||||

|

||||

```

|

||||

@ -70,6 +49,40 @@ When you open your next PR, you should see a comment from `github-actions` bot w

|

||||

```

|

||||

See detailed usage instructions in the [USAGE GUIDE](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#github-action)

|

||||

|

||||

### Using a specific release

|

||||

!!! tip ""

|

||||

if you want to pin your action to a specific release (v0.23 for example) for stability reasons, use:

|

||||

```yaml

|

||||

...

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: docker://codiumai/pr-agent:0.23-github_action

|

||||

...

|

||||

```

|

||||

|

||||

For enhanced security, you can also specify the Docker image by its [digest](https://hub.docker.com/repository/docker/codiumai/pr-agent/tags):

|

||||

```yaml

|

||||

...

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: docker://codiumai/pr-agent@sha256:14165e525678ace7d9b51cda8652c2d74abb4e1d76b57c4a6ccaeba84663cc64

|

||||

...

|

||||

```

|

||||

|

||||

### Action for GitHub enterprise server

|

||||

!!! tip ""

|

||||

To use the action with a GitHub enterprise server, add an environment variable `GITHUB.BASE_URL` with the API URL of your GitHub server.

|

||||

|

||||

For example, if your GitHub server is at `https://github.mycompany.com`, add the following to your workflow file:

|

||||

```yaml

|

||||

env:

|

||||

# ... previous environment values

|

||||

GITHUB.BASE_URL: "https://github.mycompany.com/api/v3"

|

||||

```

|

||||

|

||||

|

||||

---

|

||||

|

||||

## Run as a GitHub App

|

||||

|

||||

@ -38,25 +38,41 @@ You can also modify the `script` section to run different Qodo Merge commands, o

|

||||

|

||||

Note that if your base branches are not protected, don't set the variables as `protected`, since the pipeline will not have access to them.

|

||||

|

||||

> **Note**: The `$CI_SERVER_FQDN` variable is available starting from GitLab version 16.10. If you're using an earlier version, this variable will not be available. However, you can combine `$CI_SERVER_HOST` and `$CI_SERVER_PORT` to achieve the same result. Please ensure you're using a compatible version or adjust your configuration.

|

||||

|

||||

|

||||

## Run a GitLab webhook server

|

||||

|

||||

1. From the GitLab workspace or group, create an access token. Enable the "api" scope only.

|

||||

1. From the GitLab workspace or group, create an access token with "Reporter" role ("Developer" if using Pro version of the agent) and "api" scope.

|

||||

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

|

||||

```

|

||||

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

```

|

||||

3. Follow the instructions to build the Docker image, setup a secrets file and deploy on your own server from [here](https://qodo-merge-docs.qodo.ai/installation/github/#run-as-a-github-app) steps 4-7.

|

||||

|

||||

4. In the secrets file, fill in the following:

|

||||

- Your OpenAI key.

|

||||

- In the [gitlab] section, fill in personal_access_token and shared_secret. The access token can be a personal access token, or a group or project access token.

|

||||

- Set deployment_type to 'gitlab' in [configuration.toml](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/configuration.toml)

|

||||

3. Clone this repository:

|

||||

|

||||

5. Create a webhook in GitLab. Set the URL to ```http[s]://<PR_AGENT_HOSTNAME>/webhook```. Set the secret token to the generated secret from step 2.

|

||||

In the "Trigger" section, check the ‘comments’ and ‘merge request events’ boxes.

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

6. Test your installation by opening a merge request or commenting or a merge request using one of CodiumAI's commands.

|

||||

4. Prepare variables and secrets. Skip this step if you plan on settings these as environment variables when running the agent:

|

||||

1. In the configuration file/variables:

|

||||

- Set `deployment_type` to "gitlab"

|

||||

|

||||

2. In the secrets file/variables:

|

||||

- Set your AI model key in the respective section

|

||||

- In the [gitlab] section, set `personal_access_token` (with token from step 1) and `shared_secret` (with secret from step 2)

|

||||

|

||||

|

||||

5. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||

```

|

||||

docker build . -t gitlab_pr_agent --target gitlab_webhook -f docker/Dockerfile

|

||||

docker push codiumai/pr-agent:gitlab_webhook # Push to your Docker repository

|

||||

```

|

||||

|

||||

6. Create a webhook in GitLab. Set the URL to ```http[s]://<PR_AGENT_HOSTNAME>/webhook```, the secret token to the generated secret from step 2, and enable the triggers `push`, `comments` and `merge request events`.

|

||||

|

||||

7. Test your installation by opening a merge request or commenting on a merge request using one of CodiumAI's commands.

|

||||

boxes

|

||||

|

||||

@ -1,7 +1,7 @@

|

||||

# Installation

|

||||

|

||||

## Self-hosted Qodo Merge

|

||||

If you choose to host you own Qodo Merge, you first need to acquire two tokens:

|

||||

If you choose to host your own Qodo Merge, you first need to acquire two tokens:

|

||||

|

||||

1. An OpenAI key from [here](https://platform.openai.com/api-keys), with access to GPT-4 (or a key for other [language models](https://qodo-merge-docs.qodo.ai/usage-guide/changing_a_model/), if you prefer).

|

||||

2. A GitHub\GitLab\BitBucket personal access token (classic), with the repo scope. [GitHub from [here](https://github.com/settings/tokens)]

|

||||

|

||||

@ -16,8 +16,8 @@ from pr_agent.config_loader import get_settings

|

||||

|

||||

def main():

|

||||

# Fill in the following values

|

||||

provider = "github" # GitHub provider

|

||||

user_token = "..." # GitHub user token

|

||||

provider = "github" # github/gitlab/bitbucket/azure_devops

|

||||

user_token = "..." # user token

|

||||

openai_key = "..." # OpenAI key

|

||||

pr_url = "..." # PR URL, for example 'https://github.com/Codium-ai/pr-agent/pull/809'

|

||||

command = "/review" # Command to run (e.g. '/review', '/describe', '/ask="What is the purpose of this PR?"', ...)

|

||||

@ -42,42 +42,34 @@ A list of the relevant tools can be found in the [tools guide](../tools/ask.md).

|

||||

To invoke a tool (for example `review`), you can run directly from the Docker image. Here's how:

|

||||

|

||||

- For GitHub:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

If you are using GitHub enterprise server, you need to specify the custom url as variable.

|

||||

For example, if your GitHub server is at `https://github.mycompany.com`, add the following to the command:

|

||||

```

|

||||

-e GITHUB.BASE_URL=https://github.mycompany.com/api/v3

|

||||

```

|

||||

|

||||

- For GitLab:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

Note: If you have a dedicated GitLab instance, you need to specify the custom url as variable:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e CONFIG.GIT_PROVIDER=gitlab -e GITLAB.PERSONAL_ACCESS_TOKEN=<your token> -e GITLAB.URL=<your gitlab instance url> codiumai/pr-agent:latest --pr_url <pr_url> review

|

||||

```

|

||||

If you have a dedicated GitLab instance, you need to specify the custom url as variable:

|

||||

```

|

||||

-e GITLAB.URL=<your gitlab instance url>

|

||||

```

|

||||

|

||||

- For BitBucket:

|

||||

```

|

||||

docker run --rm -it -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=<pr_url> review

|

||||

```

|

||||

```

|

||||

docker run --rm -it -e CONFIG.GIT_PROVIDER=bitbucket -e OPENAI.KEY=$OPENAI_API_KEY -e BITBUCKET.BEARER_TOKEN=$BITBUCKET_BEARER_TOKEN codiumai/pr-agent:latest --pr_url=<pr_url> review

|

||||

```

|

||||

|

||||

For other git providers, update CONFIG.GIT_PROVIDER accordingly, and check the `pr_agent/settings/.secrets_template.toml` file for the environment variables expected names and values.

|

||||

|

||||

---

|

||||

|

||||

|

||||

If you want to ensure you're running a specific version of the Docker image, consider using the image's digest:

|

||||

```bash

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent@sha256:71b5ee15df59c745d352d84752d01561ba64b6d51327f97d46152f0c58a5f678 --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

Or you can run a [specific released versions](https://github.com/Codium-ai/pr-agent/blob/main/RELEASE_NOTES.md) of pr-agent, for example:

|

||||

```

|

||||

codiumai/pr-agent@v0.9

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

## Run from source

|

||||

|

||||

1. Clone this repository:

|

||||

@ -115,7 +107,7 @@ python3 -m pr_agent.cli --issue_url <issue_url> similar_issue

|

||||

...

|

||||

```

|

||||

|

||||

[Optional] Add the pr_agent folder to your PYTHONPATH

|

||||

[Optional] Add the pr_agent folder to your PYTHONPATH

|

||||

```

|

||||

export PYTHONPATH=$PYTHONPATH:<PATH to pr_agent folder>

|

||||

```

|

||||

@ -17,8 +17,8 @@ Users without a purchased seat who interact with a repository featuring Qodo Mer

|

||||

Beyond this limit, Qodo Merge Pro will cease to respond to their inquiries unless a seat is purchased.

|

||||

|

||||

## Install Qodo Merge Pro for GitHub Enterprise Server

|

||||

You can install Qodo Merge Pro application on your GitHub Enterprise Server, and enjoy two weeks of free trial.

|

||||

After the trial period, to continue using Qodo Merge Pro, you will need to contact us for an [Enterprise license](https://www.codium.ai/pricing/).

|

||||

|

||||

To use Qodo Merge Pro application on your private GitHub Enterprise Server, you will need to contact us for starting an [Enterprise](https://www.codium.ai/pricing/) trial.

|

||||

|

||||

|

||||

## Install Qodo Merge Pro for GitLab (Teams & Enterprise)

|

||||

|

||||

@ -29,7 +29,6 @@ Qodo Merge offers extensive pull request functionalities across various git prov

|

||||

|-------|-----------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|

|

||||

| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Incremental | ✅ | | | |

|

||||

| | ⮑ [SOC2 Compliance](https://qodo-merge-docs.qodo.ai/tools/review/#soc2-ticket-compliance){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Ask | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Describe | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ [Inline file summary](https://qodo-merge-docs.qodo.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

|

||||

@ -20,14 +20,13 @@ Here are some of the additional features and capabilities that Qodo Merge Pro of

|

||||

| Feature | Description |

|

||||

|----------------------------------------------------------------------------------------------------------------------|------------------------------------------------------------------------------------------------------------------------------------------------------------------|

|

||||

| [**Model selection**](https://qodo-merge-docs.qodo.ai/usage-guide/PR_agent_pro_models/) | Choose the model that best fits your needs, among top models like `GPT4` and `Claude-Sonnet-3.5`

|

||||

| [**Global and wiki configuration**](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) | Control configurations for many repositories from a single location; <br>Edit configuration of a single repo without commiting code |

|

||||

| [**Apply suggestions**](https://qodo-merge-docs.qodo.ai/tools/improve/#overview) | Generate commitable code from the relevant suggestions interactively by clicking on a checkbox |

|

||||

| [**Global and wiki configuration**](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) | Control configurations for many repositories from a single location; <br>Edit configuration of a single repo without committing code |

|

||||

| [**Apply suggestions**](https://qodo-merge-docs.qodo.ai/tools/improve/#overview) | Generate committable code from the relevant suggestions interactively by clicking on a checkbox |

|

||||

| [**Suggestions impact**](https://qodo-merge-docs.qodo.ai/tools/improve/#assessing-impact) | Automatically mark suggestions that were implemented by the user (either directly in GitHub, or indirectly in the IDE) to enable tracking of the impact of the suggestions |

|

||||

| [**CI feedback**](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) | Automatically analyze failed CI checks on GitHub and provide actionable feedback in the PR conversation, helping to resolve issues quickly |

|

||||

| [**Advanced usage statistics**](https://www.codium.ai/contact/#/) | Qodo Merge Pro offers detailed statistics at user, repository, and company levels, including metrics about Qodo Merge usage, and also general statistics and insights |

|

||||

| [**Incorporating companies' best practices**](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) | Use the companies' best practices as reference to increase the effectiveness and the relevance of the code suggestions |

|

||||

| [**Interactive triggering**](https://qodo-merge-docs.qodo.ai/tools/analyze/#example-usage) | Interactively apply different tools via the `analyze` command |

|

||||

| [**SOC2 compliance check**](https://qodo-merge-docs.qodo.ai/tools/review/#configuration-options) | Ensures the PR contains a ticket to a project management system (e.g., Jira, Asana, Trello, etc.)

|

||||

| [**Custom labels**](https://qodo-merge-docs.qodo.ai/tools/describe/#handle-custom-labels-from-the-repos-labels-page) | Define custom labels for Qodo Merge to assign to the PR |

|

||||

|

||||

### Additional tools

|

||||

|

||||

@ -25,7 +25,7 @@ There are 3 ways to enable custom labels:

|

||||

When working from CLI, you need to apply the [configuration changes](#configuration-options) to the [custom_labels file](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/custom_labels.toml):

|

||||

|

||||

#### 2. Repo configuration file

|

||||

To enable custom labels, you need to apply the [configuration changes](#configuration-options) to the local `.pr_agent.toml` file in you repository.

|

||||

To enable custom labels, you need to apply the [configuration changes](#configuration-options) to the local `.pr_agent.toml` file in your repository.

|

||||

|

||||

#### 3. Handle custom labels from the Repo's labels page 💎

|

||||

> This feature is available only in Qodo Merge Pro

|

||||

|

||||

@ -34,7 +34,7 @@ pr_commands = [

|

||||

]

|

||||

|

||||

[pr_description]

|

||||

publish_labels = ...

|

||||

publish_labels = true

|

||||

...

|

||||

```

|

||||

|

||||

@ -49,7 +49,7 @@ publish_labels = ...

|

||||

<table>

|

||||

<tr>

|

||||

<td><b>publish_labels</b></td>

|

||||

<td>If set to true, the tool will publish the labels to the PR. Default is true.</td>

|

||||

<td>If set to true, the tool will publish labels to the PR. Default is false.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>publish_description_as_comment</b></td>

|

||||

|

||||

@ -67,6 +67,33 @@ In post-process, Qodo Merge counts the number of suggestions that were implement

|

||||

|

||||

{width=512}

|

||||

|

||||

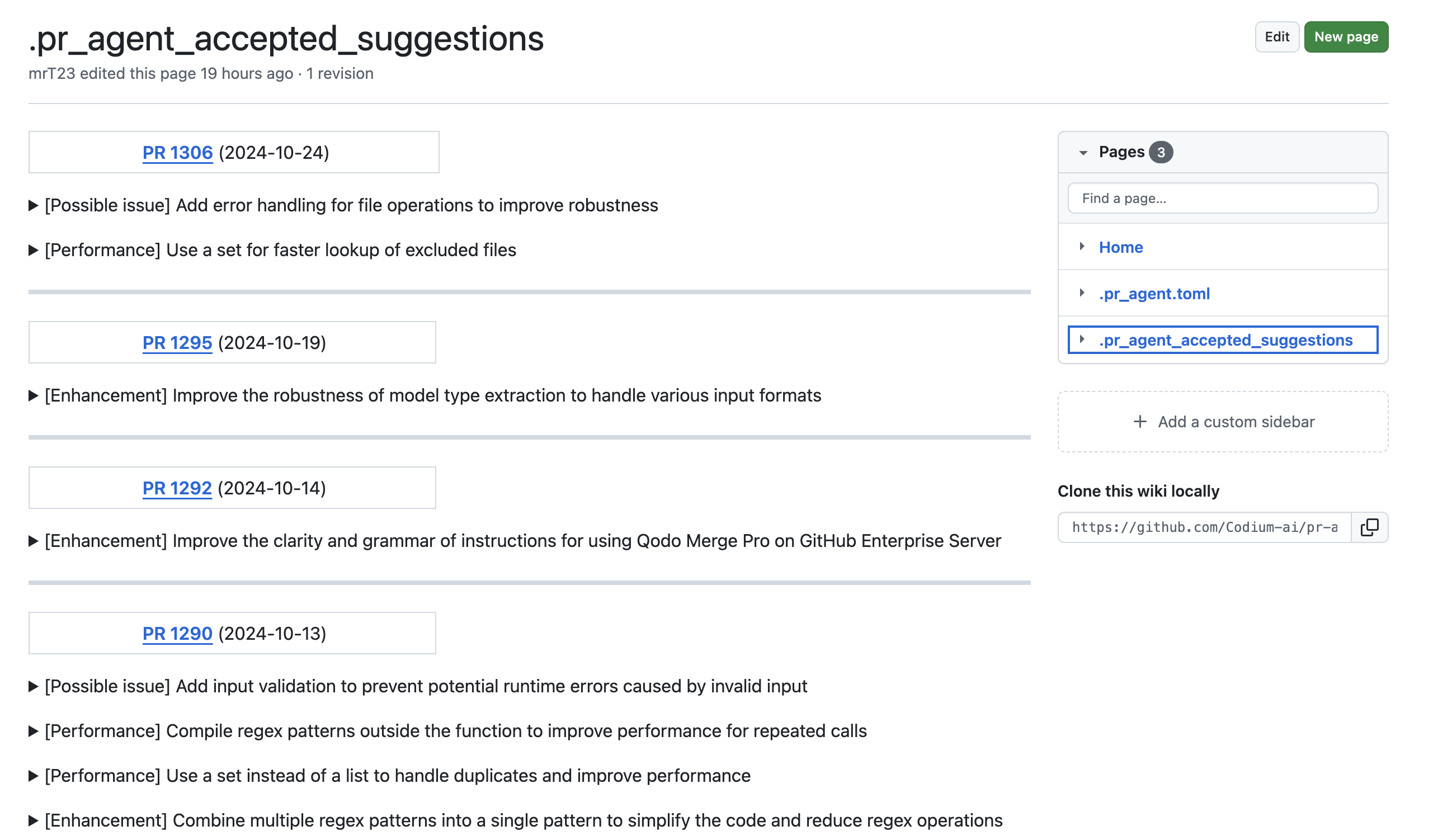

## Suggestion tracking 💎

|

||||

`Platforms supported: GitHub, GitLab`

|

||||

|

||||

Qodo Merge employs an novel detection system to automatically [identify](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) AI code suggestions that PR authors have accepted and implemented.

|

||||

|

||||

Accepted suggestions are also automatically documented in a dedicated wiki page called `.pr_agent_accepted_suggestions`, allowing users to track historical changes, assess the tool's effectiveness, and learn from previously implemented recommendations in the repository.

|

||||

An example [result](https://github.com/Codium-ai/pr-agent/wiki/.pr_agent_accepted_suggestions):

|

||||

|

||||

[{width=768}](https://github.com/Codium-ai/pr-agent/wiki/.pr_agent_accepted_suggestions)

|

||||

|

||||

This dedicated wiki page will also serve as a foundation for future AI model improvements, allowing it to learn from historically implemented suggestions and generate more targeted, contextually relevant recommendations.

|

||||

|

||||

This feature is controlled by a boolean configuration parameter: `pr_code_suggestions.wiki_page_accepted_suggestions` (default is true).

|

||||

|

||||

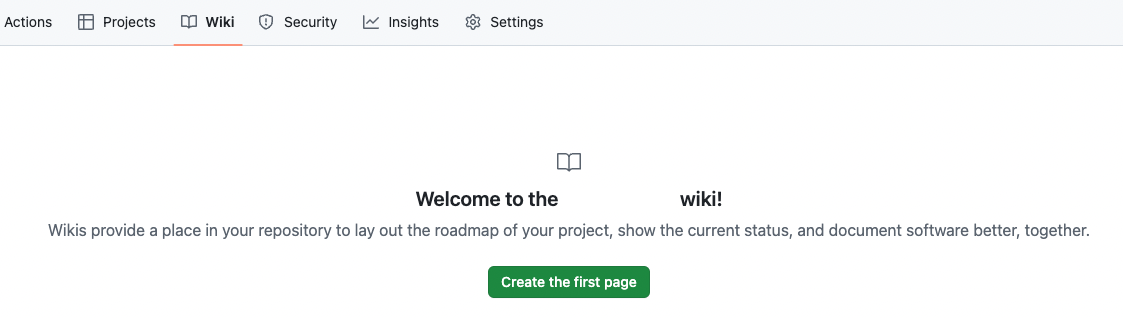

!!! note "Wiki must be enabled"

|

||||

While the aggregation process is automatic, GitHub repositories require a one-time manual wiki setup.

|

||||

|

||||

To initialize the wiki: navigate to `Wiki`, select `Create the first page`, then click `Save page`.

|

||||

|

||||

{width=768}

|

||||

|

||||

Once a wiki repo is created, the tool will automatically use this wiki for tracking suggestions.

|

||||

|

||||

!!! note "Why a wiki page?"

|

||||

Your code belongs to you, and we respect your privacy. Hence, we won't store any code suggestions in an external database.

|

||||

|

||||

Instead, we leverage a dedicated private page, within your repository wiki, to track suggestions. This approach offers convenient secure suggestion tracking while avoiding pull requests or any noise to the main repository.

|

||||

|

||||

## Usage Tips

|

||||

|

||||

@ -114,9 +141,16 @@ code_suggestions_self_review_text = "... (your text here) ..."

|

||||

{width=512}

|

||||

|

||||

|

||||

!!! tip "Tip - Reducing visual footprint after self-review 💎"

|

||||

|

||||

The configuration parameter `pr_code_suggestions.fold_suggestions_on_self_review` (default is True)

|

||||

can be used to automatically fold the suggestions after the user clicks the self-review checkbox.

|

||||

|

||||

This reduces the visual footprint of the suggestions, and also indicates to the PR reviewer that the suggestions have been reviewed by the PR author, and don't require further attention.

|

||||

|

||||

|

||||

!!! tip "Tip - demanding self-review from the PR author 💎"

|

||||

|

||||

!!! tip "Tip - Demanding self-review from the PR author 💎"

|

||||

|

||||

By setting:

|

||||

```toml

|

||||

@ -211,6 +245,32 @@ enable_global_best_practices = true

|

||||

|

||||

Then, create a `best_practices.md` wiki file in the root of [global](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/#global-configuration-file) configuration repository, `pr-agent-settings`.

|

||||

|

||||

##### Best practices for multiple languages

|

||||

For a git organization working with multiple programming languages, you can maintain a centralized global `best_practices.md` file containing language-specific guidelines.

|

||||

When reviewing pull requests, Qodo Merge automatically identifies the programming language and applies the relevant best practices from this file.

|

||||

Structure your `best_practices.md` file using the following format:

|

||||

|

||||

```

|

||||

# [Python]

|

||||

...

|

||||

# [Java]

|

||||

...

|

||||

# [JavaScript]

|

||||

...

|

||||

```

|

||||

|

||||

##### Dedicated label for best practices suggestions

|

||||

Best practice suggestions are labeled as `Organization best practice` by default.

|

||||

To customize this label, modify it in your configuration file:

|

||||

|

||||

```toml

|

||||

[best_practices]

|

||||

organization_name = ""

|

||||

```

|

||||

|

||||

And the label will be: `{organization_name} best practice`.

|

||||

|

||||

|

||||

##### Example results

|

||||

|

||||

{width=512}

|

||||

@ -242,12 +302,12 @@ Using a combination of both can help the AI model to provide relevant and tailor

|

||||

<td>Minimum score threshold for suggestions to be presented as commitable PR comments in addition to the table. Default is -1 (disabled).</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>persistent_comment</b></td>

|

||||

<td>If set to true, the improve comment will be persistent, meaning that every new improve request will edit the previous one. Default is false.</td>

|

||||

<td><b>focus_only_on_problems</b></td>

|

||||

<td>If set to true, suggestions will focus primarily on identifying and fixing code problems, and less on style considerations like best practices, maintainability, or readability. Default is true.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>self_reflect_on_suggestions</b></td>

|

||||

<td>If set to true, the improve tool will calculate an importance score for each suggestion [1-10], and sort the suggestion labels group based on this score. Default is true.</td>

|

||||

<td><b>persistent_comment</b></td>

|

||||

<td>If set to true, the improve comment will be persistent, meaning that every new improve request will edit the previous one. Default is false.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>suggestions_score_threshold</b></td>

|

||||

@ -265,6 +325,10 @@ Using a combination of both can help the AI model to provide relevant and tailor

|

||||

<td><b>enable_chat_text</b></td>

|

||||

<td>If set to true, the tool will display a reference to the PR chat in the comment. Default is true.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>wiki_page_accepted_suggestions</b></td>

|

||||

<td>If set to true, the tool will automatically track accepted suggestions in a dedicated wiki page called `.pr_agent_accepted_suggestions`. Default is true.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

??? example "Params for number of suggestions and AI calls"

|

||||

|

||||

@ -138,20 +138,9 @@ num_code_suggestions = ...

|

||||

<td><b>require_security_review</b></td>

|

||||

<td>If set to true, the tool will add a section that checks if the PR contains a possible security or vulnerability issue. Default is true.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

!!! example "SOC2 ticket compliance 💎"

|

||||

|

||||

This sub-tool checks if the PR description properly contains a ticket to a project management system (e.g., Jira, Asana, Trello, etc.), as required by SOC2 compliance. If not, it will add a label to the PR: "Missing SOC2 ticket".

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td><b>require_soc2_ticket</b></td>

|

||||

<td>If set to true, the SOC2 ticket checker sub-tool will be enabled. Default is false.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>soc2_ticket_prompt</b></td>

|

||||

<td>The prompt for the SOC2 ticket review. Default is: `Does the PR description include a link to ticket in a project management system (e.g., Jira, Asana, Trello, etc.) ?`. Edit this field if your compliance requirements are different.</td>

|

||||

<td><b>require_ticket_analysis_review</b></td>

|

||||

<td>If set to true, and the PR contains a GitHub or Jira ticket link, the tool will add a section that checks if the PR in fact fulfilled the ticket requirements. Default is true.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

@ -193,7 +182,7 @@ If enabled, the `review` tool can approve a PR when a specific comment, `/review

|

||||

It is recommended to review the [Configuration options](#configuration-options) section, and choose the relevant options for your use case.

|

||||

|