Compare commits

1 Commits

test-almog

...

ofir-frd-p

| Author | SHA1 | Date | |

|---|---|---|---|

| 661a4571f9 |

19

README.md

@ -4,8 +4,8 @@

|

||||

|

||||

|

||||

<picture>

|

||||

<source media="(prefers-color-scheme: dark)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">

|

||||

<source media="(prefers-color-scheme: light)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">

|

||||

<source media="(prefers-color-scheme: dark)" srcset="https://codium.ai/images/pr_agent/logo-dark.png" width="330">

|

||||

<source media="(prefers-color-scheme: light)" srcset="https://codium.ai/images/pr_agent/logo-light.png" width="330">

|

||||

<img src="https://codium.ai/images/pr_agent/logo-light.png" alt="logo" width="330">

|

||||

|

||||

</picture>

|

||||

@ -74,7 +74,7 @@ to

|

||||

|

||||

New tool [/Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) (💎), which converts human code review discussions and feedback into ready-to-commit code changes.

|

||||

|

||||

<kbd><img src="https://www.qodo.ai/images/pr_agent/implement1.png?v=2" width="512"></kbd>

|

||||

<kbd><img src="https://www.qodo.ai/images/pr_agent/implement1.png" width="512"></kbd>

|

||||

|

||||

|

||||

### Jan 1, 2025

|

||||

@ -92,7 +92,7 @@ Following feedback from the community, we have addressed two vulnerabilities ide

|

||||

Supported commands per platform:

|

||||

|

||||

| | | GitHub | GitLab | Bitbucket | Azure DevOps |

|

||||

|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:---------:|:------------:|

|

||||

|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:------------:|

|

||||

| TOOLS | [Review](https://qodo-merge-docs.qodo.ai/tools/review/) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Describe](https://qodo-merge-docs.qodo.ai/tools/describe/) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Improve](https://qodo-merge-docs.qodo.ai/tools/improve/) | ✅ | ✅ | ✅ | ✅ |

|

||||

@ -111,7 +111,6 @@ Supported commands per platform:

|

||||

| | [Custom Prompt](https://qodo-merge-docs.qodo.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [Test](https://qodo-merge-docs.qodo.ai/tools/test/) 💎 | ✅ | ✅ | | |

|

||||

| | [Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [Auto-Approve](https://qodo-merge-docs.qodo.ai/tools/improve/?h=auto#auto-approval) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | | | | | |

|

||||

| USAGE | [CLI](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [App / webhook](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#github-app) | ✅ | ✅ | ✅ | ✅ |

|

||||

@ -124,7 +123,7 @@ Supported commands per platform:

|

||||

| | [Local and global metadata](https://qodo-merge-docs.qodo.ai/core-abilities/metadata/) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Self reflection](https://qodo-merge-docs.qodo.ai/core-abilities/self_reflection/) | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/) 💎 | ✅ | ✅ | | |

|

||||

| | [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [Global and wiki configurations](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [PR interactive actions](https://www.qodo.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | ✅ | | |

|

||||

| | [Impact Evaluation](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) 💎 | ✅ | ✅ | | |

|

||||

@ -214,6 +213,12 @@ Note that this is a promotional bot, suitable only for initial experimentation.

|

||||

It does not have 'edit' access to your repo, for example, so it cannot update the PR description or add labels (`@CodiumAI-Agent /describe` will publish PR description as a comment). In addition, the bot cannot be used on private repositories, as it does not have access to the files there.

|

||||

|

||||

|

||||

|

||||

|

||||

|

||||

To set up your own PR-Agent, see the [Installation](https://qodo-merge-docs.qodo.ai/installation/) section below.

|

||||

Note that when you set your own PR-Agent or use Qodo hosted PR-Agent, there is no need to mention `@CodiumAI-Agent ...`. Instead, directly start with the command, e.g., `/ask ...`.

|

||||

|

||||

---

|

||||

|

||||

|

||||

@ -268,6 +273,8 @@ https://openai.com/enterprise-privacy

|

||||

|

||||

## Links

|

||||

|

||||

[](https://discord.gg/kG35uSHDBc)

|

||||

|

||||

- Discord community: https://discord.gg/kG35uSHDBc

|

||||

- Qodo site: https://www.qodo.ai/

|

||||

- Blog: https://www.qodo.ai/blog/

|

||||

|

||||

@ -1,315 +0,0 @@

|

||||

<div class="search-section">

|

||||

<h1>AI Docs Search</h1>

|

||||

<p class="search-description">

|

||||

Search through our documentation using AI-powered natural language queries.

|

||||

</p>

|

||||

<div class="search-container">

|

||||

<input

|

||||

type="text"

|

||||

id="searchInput"

|

||||

class="search-input"

|

||||

placeholder="Enter your search term..."

|

||||

>

|

||||

<button id="searchButton" class="search-button">Search</button>

|

||||

</div>

|

||||

<div id="spinner" class="spinner-container" style="display: none;">

|

||||

<div class="spinner"></div>

|

||||

</div>

|

||||

<div id="results" class="results-container"></div>

|

||||

</div>

|

||||

|

||||

<style>

|

||||

Untitled

|

||||

.search-section {

|

||||

max-width: 800px;

|

||||

margin: 0 auto;

|

||||

padding: 0 1rem 2rem;

|

||||

}

|

||||

|

||||

h1 {

|

||||

color: #666;

|

||||

font-size: 2.125rem;

|

||||

font-weight: normal;

|

||||

margin-bottom: 1rem;

|

||||

}

|

||||

|

||||

.search-description {

|

||||

color: #666;

|

||||

font-size: 1rem;

|

||||

line-height: 1.5;

|

||||

margin-bottom: 2rem;

|

||||

max-width: 800px;

|

||||

}

|

||||

|

||||

.search-container {

|

||||

display: flex;

|

||||

gap: 1rem;

|

||||

max-width: 800px;

|

||||

margin: 0; /* Changed from auto to 0 to align left */

|

||||

}

|

||||

|

||||

.search-input {

|

||||

flex: 1;

|

||||

padding: 0 0.875rem;

|

||||

border: 1px solid #ddd;

|

||||

border-radius: 4px;

|

||||

font-size: 0.9375rem;

|

||||

outline: none;

|

||||

height: 40px; /* Explicit height */

|

||||

}

|

||||

|

||||

.search-input:focus {

|

||||

border-color: #6c63ff;

|

||||

}

|

||||

|

||||

.search-button {

|

||||

padding: 0 1.25rem;

|

||||

background-color: #2196F3;

|

||||

color: white;

|

||||

border: none;

|

||||

border-radius: 4px;

|

||||

cursor: pointer;

|

||||

font-size: 0.875rem;

|

||||

transition: background-color 0.2s;

|

||||

height: 40px; /* Match the height of search input */

|

||||

display: flex;

|

||||

align-items: center;

|

||||

justify-content: center;

|

||||

}

|

||||

|

||||

.search-button:hover {

|

||||

background-color: #1976D2;

|

||||

}

|

||||

|

||||

.spinner-container {

|

||||

display: flex;

|

||||

justify-content: center;

|

||||

margin-top: 2rem;

|

||||

}

|

||||

|

||||

.spinner {

|

||||

width: 40px;

|

||||

height: 40px;

|

||||

border: 4px solid #f3f3f3;

|

||||

border-top: 4px solid #2196F3;

|

||||

border-radius: 50%;

|

||||

animation: spin 1s linear infinite;

|

||||

}

|

||||

|

||||

@keyframes spin {

|

||||

0% { transform: rotate(0deg); }

|

||||

100% { transform: rotate(360deg); }

|

||||

}

|

||||

|

||||

.results-container {

|

||||

margin-top: 2rem;

|

||||

max-width: 800px;

|

||||

}

|

||||

|

||||

.result-item {

|

||||

padding: 1rem;

|

||||

border: 1px solid #ddd;

|

||||

border-radius: 4px;

|

||||

margin-bottom: 1rem;

|

||||

}

|

||||

|

||||

.result-title {

|

||||

font-size: 1.2rem;

|

||||

color: #2196F3;

|

||||

margin-bottom: 0.5rem;

|

||||

}

|

||||

|

||||

.result-description {

|

||||

color: #666;

|

||||

}

|

||||

|

||||

.error-message {

|

||||

color: #dc3545;

|

||||

padding: 1rem;

|

||||

border: 1px solid #dc3545;

|

||||

border-radius: 4px;

|

||||

margin-top: 1rem;

|

||||

}

|

||||

|

||||

.markdown-content {

|

||||

line-height: 1.6;

|

||||

color: var(--md-typeset-color);

|

||||

background: var(--md-default-bg-color);

|

||||

border: 1px solid var(--md-default-fg-color--lightest);

|

||||

border-radius: 12px;

|

||||

padding: 1.5rem;

|

||||

box-shadow: 0 2px 4px rgba(0,0,0,0.05);

|

||||

position: relative;

|

||||

margin-top: 2rem;

|

||||

}

|

||||

|

||||

.markdown-content::before {

|

||||

content: '';

|

||||

position: absolute;

|

||||

top: -8px;

|

||||

left: 24px;

|

||||

width: 16px;

|

||||

height: 16px;

|

||||

background: var(--md-default-bg-color);

|

||||

border-left: 1px solid var(--md-default-fg-color--lightest);

|

||||

border-top: 1px solid var(--md-default-fg-color--lightest);

|

||||

transform: rotate(45deg);

|

||||

}

|

||||

|

||||

.markdown-content > *:first-child {

|

||||

margin-top: 0;

|

||||

padding-top: 0;

|

||||

}

|

||||

|

||||

.markdown-content p {

|

||||

margin-bottom: 1rem;

|

||||

}

|

||||

|

||||

.markdown-content p:last-child {

|

||||

margin-bottom: 0;

|

||||

}

|

||||

|

||||

.markdown-content code {

|

||||

background: var(--md-code-bg-color);

|

||||

color: var(--md-code-fg-color);

|

||||

padding: 0.2em 0.4em;

|

||||

border-radius: 3px;

|

||||

font-size: 0.9em;

|

||||

font-family: ui-monospace, SFMono-Regular, SF Mono, Menlo, Consolas, Liberation Mono, monospace;

|

||||

}

|

||||

|

||||

.markdown-content pre {

|

||||

background: var(--md-code-bg-color);

|

||||

padding: 1rem;

|

||||

border-radius: 6px;

|

||||

overflow-x: auto;

|

||||

margin: 1rem 0;

|

||||

}

|

||||

|

||||

.markdown-content pre code {

|

||||

background: none;

|

||||

padding: 0;

|

||||

font-size: 0.9em;

|

||||

}

|

||||

|

||||

[data-md-color-scheme="slate"] .markdown-content {

|

||||

box-shadow: 0 2px 4px rgba(0,0,0,0.1);

|

||||

}

|

||||

|

||||

</style>

|

||||

|

||||

<script src="https://cdnjs.cloudflare.com/ajax/libs/marked/9.1.6/marked.min.js"></script>

|

||||

|

||||

<script>

|

||||

window.addEventListener('load', function() {

|

||||

function displayResults(responseText) {

|

||||

const resultsContainer = document.getElementById('results');

|

||||

const spinner = document.getElementById('spinner');

|

||||

const searchContainer = document.querySelector('.search-container');

|

||||

|

||||

// Hide spinner

|

||||

spinner.style.display = 'none';

|

||||

|

||||

// Scroll to search bar

|

||||

searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

|

||||

|

||||

try {

|

||||

const results = JSON.parse(responseText);

|

||||

|

||||

marked.setOptions({

|

||||

breaks: true,

|

||||

gfm: true,

|

||||

headerIds: false,

|

||||

sanitize: false

|

||||

});

|

||||

|

||||

const htmlContent = marked.parse(results.message);

|

||||

|

||||

resultsContainer.className = 'markdown-content';

|

||||

resultsContainer.innerHTML = htmlContent;

|

||||

|

||||

// Scroll after content is rendered

|

||||

setTimeout(() => {

|

||||

const searchContainer = document.querySelector('.search-container');

|

||||

const offset = 55; // Offset from top in pixels

|

||||

const elementPosition = searchContainer.getBoundingClientRect().top;

|

||||

const offsetPosition = elementPosition + window.pageYOffset - offset;

|

||||

|

||||

window.scrollTo({

|

||||

top: offsetPosition,

|

||||

behavior: 'smooth'

|

||||

});

|

||||

}, 100);

|

||||

} catch (error) {

|

||||

console.error('Error parsing results:', error);

|

||||

resultsContainer.innerHTML = '<div class="error-message">Error processing results</div>';

|

||||

}

|

||||

}

|

||||

|

||||

async function performSearch() {

|

||||

const searchInput = document.getElementById('searchInput');

|

||||

const resultsContainer = document.getElementById('results');

|

||||

const spinner = document.getElementById('spinner');

|

||||

const searchTerm = searchInput.value.trim();

|

||||

|

||||

if (!searchTerm) {

|

||||

resultsContainer.innerHTML = '<div class="error-message">Please enter a search term</div>';

|

||||

return;

|

||||

}

|

||||

|

||||

// Show spinner, clear results

|

||||

spinner.style.display = 'flex';

|

||||

resultsContainer.innerHTML = '';

|

||||

|

||||

try {

|

||||

const data = {

|

||||

"query": searchTerm

|

||||

};

|

||||

|

||||

const options = {

|

||||

method: 'POST',

|

||||

headers: {

|

||||

'accept': 'text/plain',

|

||||

'content-type': 'application/json',

|

||||

},

|

||||

body: JSON.stringify(data)

|

||||

};

|

||||

|

||||

// const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

||||

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

||||

|

||||

const response = await fetch(API_ENDPOINT, options);

|

||||

|

||||

if (!response.ok) {

|

||||

throw new Error(`HTTP error! status: ${response.status}`);

|

||||

}

|

||||

|

||||

const responseText = await response.text();

|

||||

displayResults(responseText);

|

||||

} catch (error) {

|

||||

spinner.style.display = 'none';

|

||||

resultsContainer.innerHTML = `

|

||||

<div class="error-message">

|

||||

An error occurred while searching. Please try again later.

|

||||

</div>

|

||||

`;

|

||||

}

|

||||

}

|

||||

|

||||

// Add event listeners

|

||||

const searchButton = document.getElementById('searchButton');

|

||||

const searchInput = document.getElementById('searchInput');

|

||||

|

||||

if (searchButton) {

|

||||

searchButton.addEventListener('click', performSearch);

|

||||

}

|

||||

|

||||

if (searchInput) {

|

||||

searchInput.addEventListener('keypress', function(e) {

|

||||

if (e.key === 'Enter') {

|

||||

performSearch();

|

||||

}

|

||||

});

|

||||

}

|

||||

});

|

||||

</script>

|

||||

|

Before Width: | Height: | Size: 15 KiB After Width: | Height: | Size: 4.2 KiB |

|

Before Width: | Height: | Size: 57 KiB After Width: | Height: | Size: 263 KiB |

|

Before Width: | Height: | Size: 24 KiB After Width: | Height: | Size: 1.2 KiB |

|

Before Width: | Height: | Size: 17 KiB After Width: | Height: | Size: 8.7 KiB |

@ -1,5 +1,5 @@

|

||||

## Local and global metadata injection with multi-stage analysis

|

||||

1\.

|

||||

(1)

|

||||

Qodo Merge initially retrieves for each PR the following data:

|

||||

|

||||

- PR title and branch name

|

||||

@ -11,7 +11,7 @@ Qodo Merge initially retrieves for each PR the following data:

|

||||

!!! tip "Tip: Organization-level metadata"

|

||||

In addition to the inputs above, Qodo Merge can incorporate supplementary preferences provided by the user, like [`extra_instructions` and `organization best practices`](https://qodo-merge-docs.qodo.ai/tools/improve/#extra-instructions-and-best-practices). This information can be used to enhance the PR analysis.

|

||||

|

||||

2\.

|

||||

(2)

|

||||

By default, the first command that Qodo Merge executes is [`describe`](https://qodo-merge-docs.qodo.ai/tools/describe/), which generates three types of outputs:

|

||||

|

||||

- PR Type (e.g. bug fix, feature, refactor, etc)

|

||||

@ -49,8 +49,8 @@ __old hunk__

|

||||

...

|

||||

```

|

||||

|

||||

3\. The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)).

|

||||

(3) The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)).

|

||||

|

||||

|

||||

4\. All the metadata described above represents several level of cumulative analysis - ranging from hunk level, to file level, to PR level, to organization level.

|

||||

(4) All the metadata described above represents several level of cumulative analysis - ranging from hunk level, to file level, to PR level, to organization level.

|

||||

This comprehensive approach enables Qodo Merge AI models to generate more precise and contextually relevant suggestions and feedback.

|

||||

|

||||

@ -35,7 +35,6 @@ Qodo Merge offers extensive pull request functionalities across various git prov

|

||||

| | ⮑ [Inline file summary](https://qodo-merge-docs.qodo.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | Improve | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Auto-Approve](https://qodo-merge-docs.qodo.ai/tools/improve/#auto-approval) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [Custom Prompt](./tools/custom_prompt.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Update CHANGELOG.md | ✅ | ✅ | ✅ | ️ |

|

||||

@ -54,7 +53,8 @@ Qodo Merge offers extensive pull request functionalities across various git prov

|

||||

| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Multiple models support | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Static code analysis](./core-abilities/static_code_analysis/){:target="_blank"} 💎 | ✅ | ✅ | | |

|

||||

| | Incremental PR review | ✅ | | | |

|

||||

| | [Static code analysis](./tools/analyze.md/){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Multiple configuration options](./usage-guide/configuration_options.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

|

||||

💎 marks a feature available only in [Qodo Merge](https://www.codium.ai/pricing/){:target="_blank"}, and not in the open-source version.

|

||||

|

||||

@ -14,5 +14,6 @@ An example result:

|

||||

|

||||

{width=750}

|

||||

|

||||

!!! note "Language that are currently supported:"

|

||||

Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

**Notes**

|

||||

|

||||

- Language that are currently supported: Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

|

||||

@ -38,20 +38,20 @@ where `https://real_link_to_image` is the direct link to the image.

|

||||

Note that GitHub has a built-in mechanism of pasting images in comments. However, pasted image does not provide a direct link.

|

||||

To get a direct link to an image, we recommend using the following scheme:

|

||||

|

||||

1\. First, post a comment that contains **only** the image:

|

||||

1) First, post a comment that contains **only** the image:

|

||||

|

||||

{width=512}

|

||||

|

||||

2\. Quote reply to that comment:

|

||||

2) Quote reply to that comment:

|

||||

|

||||

{width=512}

|

||||

|

||||

3\. In the screen opened, type the question below the image:

|

||||

3) In the screen opened, type the question below the image:

|

||||

|

||||

{width=512}

|

||||

{width=512}

|

||||

|

||||

4\. Post the comment, and receive the answer:

|

||||

4) Post the comment, and receive the answer:

|

||||

|

||||

{width=512}

|

||||

|

||||

|

||||

@ -51,8 +51,8 @@ Results obtained with the prompt above:

|

||||

|

||||

## Configuration options

|

||||

|

||||

- `prompt`: the prompt for the tool. It should be a multi-line string.

|

||||

`prompt`: the prompt for the tool. It should be a multi-line string.

|

||||

|

||||

- `num_code_suggestions_per_chunk`: number of code suggestions provided by the 'custom_prompt' tool, per chunk. Default is 4.

|

||||

`num_code_suggestions`: number of code suggestions provided by the 'custom_prompt' tool. Default is 4.

|

||||

|

||||

- `enable_help_text`: if set to true, the tool will display a help text in the comment. Default is true.

|

||||

`enable_help_text`: if set to true, the tool will display a help text in the comment. Default is true.

|

||||

|

||||

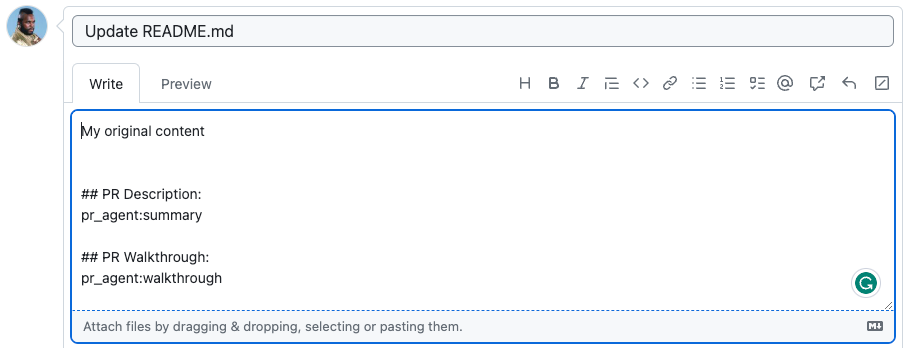

@ -143,7 +143,7 @@ The marker `pr_agent:type` will be replaced with the PR type, `pr_agent:summary`

|

||||

|

||||

{width=512}

|

||||

|

||||

becomes

|

||||

→

|

||||

|

||||

{width=512}

|

||||

|

||||

|

||||

@ -27,6 +27,7 @@ You can state a name of a specific component in the PR to get documentation only

|

||||

- `docs_style`: The exact style of the documentation (for python docstring). you can choose between: `google`, `numpy`, `sphinx`, `restructuredtext`, `plain`. Default is `sphinx`.

|

||||

- `extra_instructions`: Optional extra instructions to the tool. For example: "focus on the changes in the file X. Ignore change in ...".

|

||||

|

||||

!!! note "Notes"

|

||||

- The following languages are currently supported: Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

**Notes**

|

||||

|

||||

- Language that are currently fully supported: Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

- This tool can also be triggered interactively by using the [`analyze`](./analyze.md) tool.

|

||||

|

||||

@ -10,9 +10,8 @@ It leverages LLM technology to transform PR comments and review suggestions into

|

||||

|

||||

### For Reviewers

|

||||

|

||||

Reviewers can request code changes by:

|

||||

|

||||

1. Selecting the code block to be modified.

|

||||

Reviewers can request code changes by: <br>

|

||||

1. Selecting the code block to be modified. <br>

|

||||

2. Adding a comment with the syntax:

|

||||

```

|

||||

/implement <code-change-description>

|

||||

@ -47,8 +46,7 @@ You can reference and implement changes from any comment by:

|

||||

Note that the implementation will occur within the review discussion thread.

|

||||

|

||||

|

||||

**Configuration options**

|

||||

|

||||

- Use `/implement` to implement code change within and based on the review discussion.

|

||||

- Use `/implement <code-change-description>` inside a review discussion to implement specific instructions.

|

||||

- Use `/implement <link-to-review-comment>` to indirectly call the tool from any comment.

|

||||

**Configuration options** <br>

|

||||

- Use `/implement` to implement code change within and based on the review discussion. <br>

|

||||

- Use `/implement <code-change-description>` inside a review discussion to implement specific instructions. <br>

|

||||

- Use `/implement <link-to-review-comment>` to indirectly call the tool from any comment. <br>

|

||||

|

||||

@ -9,9 +9,8 @@ The tool can be triggered automatically every time a new PR is [opened](../usage

|

||||

|

||||

{width=512}

|

||||

|

||||

!!! note "The following features are available only for Qodo Merge 💎 users:"

|

||||

- The `Apply this suggestion` checkbox, which interactively converts a suggestion into a committable code comment

|

||||

- The `More` checkbox to generate additional suggestions

|

||||

Note that the `Apply this suggestion` checkbox, which interactively converts a suggestion into a commitable code comment, is available only for Qodo Merge💎 users.

|

||||

|

||||

|

||||

## Example usage

|

||||

|

||||

@ -53,10 +52,9 @@ num_code_suggestions_per_chunk = ...

|

||||

- The `pr_commands` lists commands that will be executed automatically when a PR is opened.

|

||||

- The `[pr_code_suggestions]` section contains the configurations for the `improve` tool you want to edit (if any)

|

||||

|

||||

### Assessing Impact

|

||||

>`💎 feature`

|

||||

### Assessing Impact 💎

|

||||

|

||||

Qodo Merge tracks two types of implementations for tracking implemented suggestions:

|

||||

Note that Qodo Merge tracks two types of implementations:

|

||||

|

||||

- Direct implementation - when the user directly applies the suggestion by clicking the `Apply` checkbox.

|

||||

- Indirect implementation - when the user implements the suggestion in their IDE environment. In this case, Qodo Merge will utilize, after each commit, a dedicated logic to identify if a suggestion was implemented, and will mark it as implemented.

|

||||

@ -69,8 +67,8 @@ In post-process, Qodo Merge counts the number of suggestions that were implement

|

||||

|

||||

{width=512}

|

||||

|

||||

## Suggestion tracking

|

||||

>`💎 feature. Platforms supported: GitHub, GitLab`

|

||||

## Suggestion tracking 💎

|

||||

`Platforms supported: GitHub, GitLab`

|

||||

|

||||

Qodo Merge employs a novel detection system to automatically [identify](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) AI code suggestions that PR authors have accepted and implemented.

|

||||

|

||||

@ -103,6 +101,8 @@ The `improve` tool can be further customized by providing additional instruction

|

||||

|

||||

### Extra instructions

|

||||

|

||||

>`Platforms supported: GitHub, GitLab, Bitbucket, Azure DevOps`

|

||||

|

||||

You can use the `extra_instructions` configuration option to give the AI model additional instructions for the `improve` tool.

|

||||

Be specific, clear, and concise in the instructions. With extra instructions, you are the prompter.

|

||||

|

||||

@ -118,9 +118,9 @@ extra_instructions="""\

|

||||

```

|

||||

Use triple quotes to write multi-line instructions. Use bullet points or numbers to make the instructions more readable.

|

||||

|

||||

### Best practices

|

||||

### Best practices 💎

|

||||

|

||||

> `💎 feature. Platforms supported: GitHub, GitLab, Bitbucket`

|

||||

>`Platforms supported: GitHub, GitLab, Bitbucket`

|

||||

|

||||

Another option to give additional guidance to the AI model is by creating a `best_practices.md` file, either in your repository's root directory or as a [**wiki page**](https://github.com/Codium-ai/pr-agent/wiki) (we recommend the wiki page, as editing and maintaining it over time is easier).

|

||||

This page can contain a list of best practices, coding standards, and guidelines that are specific to your repo/organization.

|

||||

@ -191,11 +191,11 @@ And the label will be: `{organization_name} best practice`.

|

||||

|

||||

{width=512}

|

||||

|

||||

### Auto best practices

|

||||

### Auto best practices 💎

|

||||

|

||||

>`💎 feature. Platforms supported: GitHub.`

|

||||

>`Platforms supported: GitHub`

|

||||

|

||||

`Auto best practices` is a novel Qodo Merge capability that:

|

||||

'Auto best practices' is a novel Qodo Merge capability that:

|

||||

|

||||

1. Identifies recurring patterns from accepted suggestions

|

||||

2. **Automatically** generates [best practices page](https://github.com/qodo-ai/pr-agent/wiki/.pr_agent_auto_best_practices) based on what your team consistently values

|

||||

@ -228,8 +228,7 @@ max_patterns = 5

|

||||

```

|

||||

|

||||

|

||||

### Combining 'extra instructions' and 'best practices'

|

||||

> `💎 feature`

|

||||

### Combining `extra instructions` and `best practices` 💎

|

||||

|

||||

The `extra instructions` configuration is more related to the `improve` tool prompt. It can be used, for example, to avoid specific suggestions ("Don't suggest to add try-except block", "Ignore changes in toml files", ...) or to emphasize specific aspects or formats ("Answer in Japanese", "Give only short suggestions", ...)

|

||||

|

||||

@ -268,8 +267,6 @@ dual_publishing_score_threshold = x

|

||||

Where x represents the minimum score threshold (>=) for suggestions to be presented as commitable PR comments in addition to the table. Default is -1 (disabled).

|

||||

|

||||

### Self-review

|

||||

> `💎 feature`

|

||||

|

||||

If you set in a configuration file:

|

||||

```toml

|

||||

[pr_code_suggestions]

|

||||

@ -313,56 +310,21 @@ code_suggestions_self_review_text = "... (your text here) ..."

|

||||

|

||||

To prevent unauthorized approvals, this configuration defaults to false, and cannot be altered through online comments; enabling requires a direct update to the configuration file and a commit to the repository. This ensures that utilizing the feature demands a deliberate documented decision by the repository owner.

|

||||

|

||||

### Auto-approval

|

||||

> `💎 feature. Platforms supported: GitHub, GitLab, Bitbucket`

|

||||

|

||||

Under specific conditions, Qodo Merge can auto-approve a PR when a specific comment is invoked, or when the PR meets certain criteria.

|

||||

|

||||

To ensure safety, the auto-approval feature is disabled by default. To enable auto-approval, you need to actively set, in a pre-defined _configuration file_, the following:

|

||||

```toml

|

||||

[config]

|

||||

enable_auto_approval = true

|

||||

```

|

||||

Note that this specific flag cannot be set with a command line argument, only in the configuration file, committed to the repository.

|

||||

This ensures that enabling auto-approval is a deliberate decision by the repository owner.

|

||||

|

||||

**(1) Auto-approval by commenting**

|

||||

|

||||

After enabling, by commenting on a PR:

|

||||

```

|

||||

/review auto_approve

|

||||

```

|

||||

Qodo Merge will automatically approve the PR, and add a comment with the approval.

|

||||

|

||||

**(2) Auto-approval when the PR meets certain criteria**

|

||||

|

||||

There are two criteria that can be set for auto-approval:

|

||||

|

||||

- **Review effort score**

|

||||

```toml

|

||||

[config]

|

||||

auto_approve_for_low_review_effort = X # X is a number between 1 to 5

|

||||

```

|

||||

When the [review effort score](https://www.qodo.ai/images/pr_agent/review3.png) is lower or equal to X, the PR will be auto-approved.

|

||||

|

||||

___

|

||||

- **No code suggestions**

|

||||

```toml

|

||||

[config]

|

||||

auto_approve_for_no_suggestions = true

|

||||

```

|

||||

When no [code suggestion](https://www.qodo.ai/images/pr_agent/code_suggestions_as_comment_closed.png) were found for the PR, the PR will be auto-approved.

|

||||

|

||||

### How many code suggestions are generated?

|

||||

Qodo Merge uses a dynamic strategy to generate code suggestions based on the size of the pull request (PR). Here's how it works:

|

||||

|

||||

#### 1. Chunking large PRs

|

||||

1) Chunking large PRs:

|

||||

|

||||

- Qodo Merge divides large PRs into 'chunks'.

|

||||

- Each chunk contains up to `pr_code_suggestions.max_context_tokens` tokens (default: 14,000).

|

||||

|

||||

#### 2. Generating suggestions

|

||||

|

||||

2) Generating suggestions:

|

||||

|

||||

- For each chunk, Qodo Merge generates up to `pr_code_suggestions.num_code_suggestions_per_chunk` suggestions (default: 4).

|

||||

|

||||

|

||||

This approach has two main benefits:

|

||||

|

||||

- Scalability: The number of suggestions scales with the PR size, rather than being fixed.

|

||||

@ -404,10 +366,6 @@ Note: Chunking is primarily relevant for large PRs. For most PRs (up to 500 line

|

||||

<td><b>apply_suggestions_checkbox</b></td>

|

||||

<td> Enable the checkbox to create a committable suggestion. Default is true.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>enable_more_suggestions_checkbox</b></td>

|

||||

<td> Enable the checkbox to generate more suggestions. Default is true.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>enable_help_text</b></td>

|

||||

<td>If set to true, the tool will display a help text in the comment. Default is true.</td>

|

||||

|

||||

@ -18,7 +18,7 @@ The tool will generate code suggestions for the selected component (if no compon

|

||||

|

||||

{width=768}

|

||||

|

||||

!!! note "Notes"

|

||||

**Notes**

|

||||

- Language that are currently supported by the tool: Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

- This tool can also be triggered interactively by using the [`analyze`](./analyze.md) tool.

|

||||

|

||||

|

||||

@ -114,6 +114,16 @@ You can enable\disable the `review` tool to add specific labels to the PR:

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

!!! example "Auto-approval"

|

||||

|

||||

If enabled, the `review` tool can approve a PR when a specific comment, `/review auto_approve`, is invoked.

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td><b>enable_auto_approval</b></td>

|

||||

<td>If set to true, the tool will approve the PR when invoked with the 'auto_approve' command. Default is false. This flag can be changed only from a configuration file.</td>

|

||||

</tr>

|

||||

</table>

|

||||

|

||||

## Usage Tips

|

||||

|

||||

@ -165,6 +175,23 @@ You can enable\disable the `review` tool to add specific labels to the PR:

|

||||

Use triple quotes to write multi-line instructions. Use bullet points to make the instructions more readable.

|

||||

|

||||

|

||||

!!! tip "Auto-approval"

|

||||

|

||||

Qodo Merge can approve a PR when a specific comment is invoked.

|

||||

|

||||

To ensure safety, the auto-approval feature is disabled by default. To enable auto-approval, you need to actively set in a pre-defined configuration file the following:

|

||||

```

|

||||

[pr_reviewer]

|

||||

enable_auto_approval = true

|

||||

```

|

||||

(this specific flag cannot be set with a command line argument, only in the configuration file, committed to the repository)

|

||||

|

||||

|

||||

After enabling, by commenting on a PR:

|

||||

```

|

||||

/review auto_approve

|

||||

```

|

||||

Qodo Merge will automatically approve the PR, and add a comment with the approval.

|

||||

|

||||

|

||||

!!! tip "Code suggestions"

|

||||

|

||||

@ -16,17 +16,14 @@ It can be invoked manually by commenting on any PR:

|

||||

|

||||

Note that to perform retrieval, the `similar_issue` tool indexes all the repo previous issues (once).

|

||||

|

||||

### Selecting a Vector Database

|

||||

Configure your preferred database by changing the `pr_similar_issue` parameter in `configuration.toml` file.

|

||||

|

||||

#### Available Options

|

||||

Choose from the following Vector Databases:

|

||||

**Select VectorDBs** by changing `pr_similar_issue` parameter in `configuration.toml` file

|

||||

|

||||

2 VectorDBs are available to switch in

|

||||

1. LanceDB

|

||||

2. Pinecone

|

||||

|

||||

#### Pinecone Configuration

|

||||

To use Pinecone with the `similar issue` tool, add these credentials to `.secrets.toml` (or set as environment variables):

|

||||

To enable usage of the '**similar issue**' tool for Pinecone, you need to set the following keys in `.secrets.toml` (or in the relevant environment variables):

|

||||

|

||||

```

|

||||

[pinecone]

|

||||

|

||||

@ -17,8 +17,8 @@ The tool will generate tests for the selected component (if no component is stat

|

||||

|

||||

(Example taken from [here](https://github.com/Codium-ai/pr-agent/pull/598#issuecomment-1913679429)):

|

||||

|

||||

!!! note "Notes"

|

||||

- The following languages are currently supported: Python, Java, C++, JavaScript, TypeScript, C#.

|

||||

**Notes** <br>

|

||||

- The following languages are currently supported: Python, Java, C++, JavaScript, TypeScript, C#. <br>

|

||||

- This tool can also be triggered interactively by using the [`analyze`](./analyze.md) tool.

|

||||

|

||||

|

||||

|

||||

@ -142,11 +142,13 @@ Qodo Merge allows you to automatically ignore certain PRs based on various crite

|

||||

|

||||

- PRs with specific titles (using regex matching)

|

||||

- PRs between specific branches (using regex matching)

|

||||

- PRs not from specific folders

|

||||

- PRs that don't include changes from specific folders (using regex matching)

|

||||

- PRs containing specific labels

|

||||

- PRs opened by specific users

|

||||

|

||||

### Ignoring PRs with specific titles

|

||||

### Example usage

|

||||

|

||||

#### Ignoring PRs with specific titles

|

||||

|

||||

To ignore PRs with a specific title such as "[Bump]: ...", you can add the following to your `configuration.toml` file:

|

||||

|

||||

@ -157,7 +159,7 @@ ignore_pr_title = ["\\[Bump\\]"]

|

||||

|

||||

Where the `ignore_pr_title` is a list of regex patterns to match the PR title you want to ignore. Default is `ignore_pr_title = ["^\\[Auto\\]", "^Auto"]`.

|

||||

|

||||

### Ignoring PRs between specific branches

|

||||

#### Ignoring PRs between specific branches

|

||||

|

||||

To ignore PRs from specific source or target branches, you can add the following to your `configuration.toml` file:

|

||||

|

||||

@ -170,7 +172,7 @@ ignore_pr_target_branches = ["qa"]

|

||||

Where the `ignore_pr_source_branches` and `ignore_pr_target_branches` are lists of regex patterns to match the source and target branches you want to ignore.

|

||||

They are not mutually exclusive, you can use them together or separately.

|

||||

|

||||

### Ignoring PRs not from specific folders

|

||||

#### Ignoring PRs that don't include changes from specific folders

|

||||

|

||||

To allow only specific folders (often needed in large monorepos), set:

|

||||

|

||||

@ -179,9 +181,9 @@ To allow only specific folders (often needed in large monorepos), set:

|

||||

allow_only_specific_folders=['folder1','folder2']

|

||||

```

|

||||

|

||||

For the configuration above, automatic feedback will only be triggered when the PR changes include files where 'folder1' or 'folder2' is in the file path

|

||||

For the configuration above, automatic feedback will only be triggered when the PR changes include files from 'folder1' or 'folder2'

|

||||

|

||||

### Ignoring PRs containing specific labels

|

||||

#### Ignoring PRs containg specific labels

|

||||

|

||||

To ignore PRs containg specific labels, you can add the following to your `configuration.toml` file:

|

||||

|

||||

@ -192,7 +194,7 @@ ignore_pr_labels = ["do-not-merge"]

|

||||

|

||||

Where the `ignore_pr_labels` is a list of labels that when present in the PR, the PR will be ignored.

|

||||

|

||||

### Ignoring PRs from specific users

|

||||

#### Ignoring PRs from specific users

|

||||

|

||||

Qodo Merge automatically identifies and ignores pull requests created by bots using:

|

||||

|

||||

|

||||

@ -14,12 +14,12 @@ Examples of invoking the different tools via the CLI:

|

||||

|

||||

**Notes:**

|

||||

|

||||

1. in addition to editing your local configuration file, you can also change any configuration value by adding it to the command line:

|

||||

(1) in addition to editing your local configuration file, you can also change any configuration value by adding it to the command line:

|

||||

```

|

||||

python -m pr_agent.cli --pr_url=<pr_url> /review --pr_reviewer.extra_instructions="focus on the file: ..."

|

||||

```

|

||||

|

||||

2. You can print results locally, without publishing them, by setting in `configuration.toml`:

|

||||

(2) You can print results locally, without publishing them, by setting in `configuration.toml`:

|

||||

```

|

||||

[config]

|

||||

publish_output=false

|

||||

@ -27,9 +27,14 @@ verbosity_level=2

|

||||

```

|

||||

This is useful for debugging or experimenting with different tools.

|

||||

|

||||

3. **git provider**: The [git_provider](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/configuration.toml#L5) field in a configuration file determines the GIT provider that will be used by Qodo Merge. Currently, the following providers are supported:

|

||||

`github` **(default)**, `gitlab`, `bitbucket`, `azure`, `codecommit`, `local`, and `gerrit`.

|

||||

(3)

|

||||

|

||||

**git provider**: The [git_provider](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/settings/configuration.toml#L5) field in a configuration file determines the GIT provider that will be used by Qodo Merge. Currently, the following providers are supported:

|

||||

`

|

||||

"github", "gitlab", "bitbucket", "azure", "codecommit", "local", "gerrit"

|

||||

`

|

||||

|

||||

Default is "github".

|

||||

|

||||

### CLI Health Check

|

||||

To verify that Qodo Merge has been configured correctly, you can run this health check command from the repository root:

|

||||

|

||||

@ -30,14 +30,6 @@ model="" # the OpenAI model you've deployed on Azure (e.g. gpt-4o)

|

||||

fallback_models=["..."]

|

||||

```

|

||||

|

||||

Passing custom headers to the underlying LLM Model API can be done by setting extra_headers parameter to litellm.

|

||||

```

|

||||

[litellm]

|

||||

extra_headers='{"projectId": "<authorized projectId >", ...}') #The value of this setting should be a JSON string representing the desired headers, a ValueError is thrown otherwise.

|

||||

```

|

||||

This enables users to pass authorization tokens or API keys, when routing requests through an API management gateway.

|

||||

|

||||

|

||||

### Ollama

|

||||

|

||||

You can run models locally through either [VLLM](https://docs.litellm.ai/docs/providers/vllm) or [Ollama](https://docs.litellm.ai/docs/providers/ollama)

|

||||

@ -197,27 +189,15 @@ key = ...

|

||||

|

||||

If the relevant model doesn't appear [here](https://github.com/Codium-ai/pr-agent/blob/main/pr_agent/algo/__init__.py), you can still use it as a custom model:

|

||||

|

||||

1. Set the model name in the configuration file:

|

||||

(1) Set the model name in the configuration file:

|

||||

```

|

||||

[config]

|

||||

model="custom_model_name"

|

||||

fallback_models=["custom_model_name"]

|

||||

```

|

||||

2. Set the maximal tokens for the model:

|

||||

(2) Set the maximal tokens for the model:

|

||||

```

|

||||

[config]

|

||||

custom_model_max_tokens= ...

|

||||

```

|

||||

3. Go to [litellm documentation](https://litellm.vercel.app/docs/proxy/quick_start#supported-llms), find the model you want to use, and set the relevant environment variables.

|

||||

|

||||

4. Most reasoning models do not support chat-style inputs (`system` and `user` messages) or temperature settings.

|

||||

To bypass chat templates and temperature controls, set `config.custom_reasoning_model = true` in your configuration file.

|

||||

|

||||

## Dedicated parameters

|

||||

|

||||

### OpenAI models

|

||||

|

||||

[config]

|

||||

reasoning_efffort= = "medium" # "low", "medium", "high"

|

||||

|

||||

With the OpenAI models that support reasoning effort (eg: o3-mini), you can specify its reasoning effort via `config` section. The default value is `medium`. You can change it to `high` or `low` based on your usage.

|

||||

(3) Go to [litellm documentation](https://litellm.vercel.app/docs/proxy/quick_start#supported-llms), find the model you want to use, and set the relevant environment variables.

|

||||

|

||||

@ -8,6 +8,7 @@ The models supported by Qodo Merge are:

|

||||

|

||||

- `claude-3-5-sonnet`

|

||||

- `gpt-4o`

|

||||

- `deepseek-r1`

|

||||

- `o3-mini`

|

||||

|

||||

To restrict Qodo Merge to using only `Claude-3.5-sonnet`, add this setting:

|

||||

@ -23,11 +24,11 @@ To restrict Qodo Merge to using only `GPT-4o`, add this setting:

|

||||

model="gpt-4o"

|

||||

```

|

||||

|

||||

[//]: # (To restrict Qodo Merge to using only `deepseek-r1` us-hosted, add this setting:)

|

||||

[//]: # (```)

|

||||

[//]: # ([config])

|

||||

[//]: # (model="deepseek/r1")

|

||||

[//]: # (```)

|

||||

To restrict Qodo Merge to using only `deepseek-r1`, add this setting:

|

||||

```

|

||||

[config]

|

||||

model="deepseek/r1"

|

||||

```

|

||||

|

||||

To restrict Qodo Merge to using only `o3-mini`, add this setting:

|

||||

```

|

||||

|

||||

@ -1,6 +1,6 @@

|

||||

site_name: Qodo Merge (and open-source PR-Agent)

|

||||

repo_url: https://github.com/qodo-ai/pr-agent

|

||||

repo_name: Qodo-ai/pr-agent

|

||||

repo_url: https://github.com/Codium-ai/pr-agent

|

||||

repo_name: Codium-ai/pr-agent

|

||||

|

||||

nav:

|

||||

- Overview:

|

||||

@ -58,7 +58,6 @@ nav:

|

||||

- Data Privacy: 'chrome-extension/data_privacy.md'

|

||||

- FAQ:

|

||||

- FAQ: 'faq/index.md'

|

||||

- AI Docs Search: 'ai_search/index.md'

|

||||

# - Code Fine-tuning Benchmark: 'finetuning_benchmark/index.md'

|

||||

|

||||

theme:

|

||||

@ -154,4 +153,4 @@ markdown_extensions:

|

||||

|

||||

|

||||

copyright: |

|

||||

© 2025 <a href="https://www.codium.ai/" target="_blank" rel="noopener">QodoAI</a>

|

||||

© 2024 <a href="https://www.codium.ai/" target="_blank" rel="noopener">CodiumAI</a>

|

||||

|

||||

@ -82,7 +82,7 @@

|

||||

|

||||

<footer class="wrapper">

|

||||

<div class="container">

|

||||

<p class="footer-text">© 2025 <a href="https://www.qodo.ai/" target="_blank" rel="noopener">Qodo</a></p>

|

||||

<p class="footer-text">© 2024 <a href="https://www.qodo.ai/" target="_blank" rel="noopener">Qodo</a></p>

|

||||

<div class="footer-links">

|

||||

<a href="https://qodo-gen-docs.qodo.ai/">Qodo Gen</a>

|

||||

<p>|</p>

|

||||

|

||||

@ -3,7 +3,6 @@ from functools import partial

|

||||

|

||||

from pr_agent.algo.ai_handlers.base_ai_handler import BaseAiHandler

|

||||

from pr_agent.algo.ai_handlers.litellm_ai_handler import LiteLLMAIHandler

|

||||

from pr_agent.algo.cli_args import CliArgs

|

||||

from pr_agent.algo.utils import update_settings_from_args

|

||||

from pr_agent.config_loader import get_settings

|

||||

from pr_agent.git_providers.utils import apply_repo_settings

|

||||

@ -61,15 +60,25 @@ class PRAgent:

|

||||

else:

|

||||

action, *args = request

|

||||

|

||||

# validate args

|

||||

is_valid, arg = CliArgs.validate_user_args(args)

|

||||

if not is_valid:

|

||||

forbidden_cli_args = ['enable_auto_approval', 'approve_pr_on_self_review', 'base_url', 'url', 'app_name', 'secret_provider',

|

||||

'git_provider', 'skip_keys', 'openai.key', 'ANALYTICS_FOLDER', 'uri', 'app_id', 'webhook_secret',

|

||||

'bearer_token', 'PERSONAL_ACCESS_TOKEN', 'override_deployment_type', 'private_key',

|

||||

'local_cache_path', 'enable_local_cache', 'jira_base_url', 'api_base', 'api_type', 'api_version',

|

||||

'skip_keys']

|

||||

if args:

|

||||

for arg in args:

|

||||

if arg.startswith('--'):

|

||||

arg_word = arg.lower()

|

||||

arg_word = arg_word.replace('__', '.') # replace double underscore with dot, e.g. --openai__key -> --openai.key

|

||||

for forbidden_arg in forbidden_cli_args:

|

||||

forbidden_arg_word = forbidden_arg.lower()

|

||||

if '.' not in forbidden_arg_word:

|

||||

forbidden_arg_word = '.' + forbidden_arg_word

|

||||

if forbidden_arg_word in arg_word:

|

||||

get_logger().error(

|

||||

f"CLI argument for param '{arg}' is forbidden. Use instead a configuration file."

|

||||

f"CLI argument for param '{forbidden_arg}' is forbidden. Use instead a configuration file."

|

||||

)

|

||||

return False

|

||||

|

||||

# Update settings from args

|

||||

args = update_settings_from_args(args)

|

||||

|

||||

action = action.lstrip("/").lower()

|

||||

|

||||

@ -43,14 +43,13 @@ MAX_TOKENS = {

|

||||

'vertex_ai/claude-3-opus@20240229': 100000,

|

||||

'vertex_ai/claude-3-5-sonnet@20240620': 100000,

|

||||

'vertex_ai/claude-3-5-sonnet-v2@20241022': 100000,

|

||||

'vertex_ai/claude-3-7-sonnet@20250219': 200000,

|

||||

'vertex_ai/gemini-1.5-pro': 1048576,

|

||||

'vertex_ai/gemini-1.5-flash': 1048576,

|

||||

'vertex_ai/gemini-2.0-flash': 1048576,

|

||||

'vertex_ai/gemini-2.0-flash-exp': 1048576,

|

||||

'vertex_ai/gemma2': 8200,

|

||||

'gemini/gemini-1.5-pro': 1048576,

|

||||

'gemini/gemini-1.5-flash': 1048576,

|

||||

'gemini/gemini-2.0-flash': 1048576,

|

||||

'gemini/gemini-2.0-flash-exp': 1048576,

|

||||

'codechat-bison': 6144,

|

||||

'codechat-bison-32k': 32000,

|

||||

'anthropic.claude-instant-v1': 100000,

|

||||

@ -59,7 +58,6 @@ MAX_TOKENS = {

|

||||

'anthropic/claude-3-opus-20240229': 100000,

|

||||

'anthropic/claude-3-5-sonnet-20240620': 100000,

|

||||

'anthropic/claude-3-5-sonnet-20241022': 100000,

|

||||

'anthropic/claude-3-7-sonnet-20250219': 200000,

|

||||

'anthropic/claude-3-5-haiku-20241022': 100000,

|

||||

'bedrock/anthropic.claude-instant-v1': 100000,

|

||||

'bedrock/anthropic.claude-v2': 100000,

|

||||

@ -69,7 +67,6 @@ MAX_TOKENS = {

|

||||

'bedrock/anthropic.claude-3-5-haiku-20241022-v1:0': 100000,

|

||||

'bedrock/anthropic.claude-3-5-sonnet-20240620-v1:0': 100000,

|

||||

'bedrock/anthropic.claude-3-5-sonnet-20241022-v2:0': 100000,

|

||||

'bedrock/anthropic.claude-3-7-sonnet-20250219-v1:0': 200000,

|

||||

"bedrock/us.anthropic.claude-3-5-sonnet-20241022-v2:0": 100000,

|

||||

'claude-3-5-sonnet': 100000,

|

||||

'groq/llama3-8b-8192': 8192,

|

||||

@ -104,8 +101,3 @@ NO_SUPPORT_TEMPERATURE_MODELS = [

|

||||

"o3-mini-2025-01-31",

|

||||

"o1-preview"

|

||||

]

|

||||

|

||||

SUPPORT_REASONING_EFFORT_MODELS = [

|

||||

"o3-mini",

|

||||

"o3-mini-2025-01-31"

|

||||

]

|

||||

|

||||

@ -6,12 +6,11 @@ import requests

|

||||

from litellm import acompletion

|

||||

from tenacity import retry, retry_if_exception_type, stop_after_attempt

|

||||

|

||||

from pr_agent.algo import NO_SUPPORT_TEMPERATURE_MODELS, SUPPORT_REASONING_EFFORT_MODELS, USER_MESSAGE_ONLY_MODELS

|

||||

from pr_agent.algo import NO_SUPPORT_TEMPERATURE_MODELS, USER_MESSAGE_ONLY_MODELS

|

||||

from pr_agent.algo.ai_handlers.base_ai_handler import BaseAiHandler

|

||||

from pr_agent.algo.utils import ReasoningEffort, get_version

|

||||

from pr_agent.algo.utils import get_version

|

||||

from pr_agent.config_loader import get_settings

|

||||

from pr_agent.log import get_logger

|

||||

import json

|

||||

|

||||

OPENAI_RETRIES = 5

|

||||

|

||||

@ -102,9 +101,6 @@ class LiteLLMAIHandler(BaseAiHandler):

|

||||

# Model that doesn't support temperature argument

|

||||

self.no_support_temperature_models = NO_SUPPORT_TEMPERATURE_MODELS

|

||||

|

||||

# Models that support reasoning effort

|

||||

self.support_reasoning_models = SUPPORT_REASONING_EFFORT_MODELS

|

||||

|

||||

def prepare_logs(self, response, system, user, resp, finish_reason):

|

||||

response_log = response.dict().copy()

|

||||

response_log['system'] = system

|

||||

@ -209,7 +205,7 @@ class LiteLLMAIHandler(BaseAiHandler):

|

||||

{"type": "image_url", "image_url": {"url": img_path}}]

|

||||

|

||||

# Currently, some models do not support a separate system and user prompts

|

||||

if model in self.user_message_only_models or get_settings().config.custom_reasoning_model:

|

||||

if model in self.user_message_only_models:

|

||||

user = f"{system}\n\n\n{user}"

|

||||

system = ""

|

||||

get_logger().info(f"Using model {model}, combining system and user prompts")

|

||||

@ -231,17 +227,9 @@ class LiteLLMAIHandler(BaseAiHandler):

|

||||

}

|

||||

|

||||

# Add temperature only if model supports it

|

||||

if model not in self.no_support_temperature_models and not get_settings().config.custom_reasoning_model:

|

||||

get_logger().info(f"Adding temperature with value {temperature} to model {model}.")

|

||||

if model not in self.no_support_temperature_models:

|

||||

kwargs["temperature"] = temperature

|

||||

|

||||

# Add reasoning_effort if model supports it

|

||||

if (model in self.support_reasoning_models):

|

||||

supported_reasoning_efforts = [ReasoningEffort.HIGH.value, ReasoningEffort.MEDIUM.value, ReasoningEffort.LOW.value]

|

||||

reasoning_effort = get_settings().config.reasoning_effort if (get_settings().config.reasoning_effort in supported_reasoning_efforts) else ReasoningEffort.MEDIUM.value

|

||||

get_logger().info(f"Adding reasoning_effort with value {reasoning_effort} to model {model}.")

|

||||

kwargs["reasoning_effort"] = reasoning_effort

|

||||

|

||||

if get_settings().litellm.get("enable_callbacks", False):

|

||||

kwargs = self.add_litellm_callbacks(kwargs)

|

||||

|

||||

@ -255,16 +243,6 @@ class LiteLLMAIHandler(BaseAiHandler):

|

||||

if self.repetition_penalty:

|

||||

kwargs["repetition_penalty"] = self.repetition_penalty

|

||||

|

||||

#Added support for extra_headers while using litellm to call underlying model, via a api management gateway, would allow for passing custom headers for security and authorization

|

||||

if get_settings().get("LITELLM.EXTRA_HEADERS", None):

|

||||

try:

|

||||

litellm_extra_headers = json.loads(get_settings().litellm.extra_headers)

|

||||

if not isinstance(litellm_extra_headers, dict):

|

||||

raise ValueError("LITELLM.EXTRA_HEADERS must be a JSON object")

|

||||

except json.JSONDecodeError as e:

|

||||

raise ValueError(f"LITELLM.EXTRA_HEADERS contains invalid JSON: {str(e)}")

|

||||

kwargs["extra_headers"] = litellm_extra_headers

|

||||

|

||||

get_logger().debug("Prompts", artifact={"system": system, "user": user})

|

||||

|

||||

if get_settings().config.verbosity_level >= 2:

|

||||

|

||||

@ -1,34 +0,0 @@

|

||||

from base64 import b64decode

|

||||

import hashlib

|

||||

|

||||

class CliArgs:

|

||||

@staticmethod

|

||||

def validate_user_args(args: list) -> (bool, str):

|

||||

try:

|

||||

if not args:

|

||||

return True, ""

|

||||

|

||||

# decode forbidden args

|

||||

_encoded_args = 'ZW5hYmxlX2F1dG9fYXBwcm92YWw=:YXBwcm92ZV9wcl9vbl9zZWxmX3Jldmlldw==:YmFzZV91cmw=:dXJs:YXBwX25hbWU=:c2VjcmV0X3Byb3ZpZGVy:Z2l0X3Byb3ZpZGVy:c2tpcF9rZXlz:b3BlbmFpLmtleQ==:QU5BTFlUSUNTX0ZPTERFUg==:dXJp:YXBwX2lk:d2ViaG9va19zZWNyZXQ=:YmVhcmVyX3Rva2Vu:UEVSU09OQUxfQUNDRVNTX1RPS0VO:b3ZlcnJpZGVfZGVwbG95bWVudF90eXBl:cHJpdmF0ZV9rZXk=:bG9jYWxfY2FjaGVfcGF0aA==:ZW5hYmxlX2xvY2FsX2NhY2hl:amlyYV9iYXNlX3VybA==:YXBpX2Jhc2U=:YXBpX3R5cGU=:YXBpX3ZlcnNpb24=:c2tpcF9rZXlz'

|

||||

forbidden_cli_args = []

|

||||

for e in _encoded_args.split(':'):

|

||||

forbidden_cli_args.append(b64decode(e).decode())

|

||||

|

||||

# lowercase all forbidden args

|

||||

for i, _ in enumerate(forbidden_cli_args):

|

||||

forbidden_cli_args[i] = forbidden_cli_args[i].lower()

|

||||

if '.' not in forbidden_cli_args[i]:

|

||||

forbidden_cli_args[i] = '.' + forbidden_cli_args[i]

|

||||

|

||||

for arg in args:

|

||||

if arg.startswith('--'):

|

||||

arg_word = arg.lower()

|

||||

arg_word = arg_word.replace('__', '.') # replace double underscore with dot, e.g. --openai__key -> --openai.key

|

||||

for forbidden_arg_word in forbidden_cli_args:

|

||||

if forbidden_arg_word in arg_word:

|

||||

return False, forbidden_arg_word

|

||||

return True, ""

|

||||

except Exception as e:

|

||||

return False, str(e)

|

||||

|

||||

|

||||

@ -9,12 +9,11 @@ from pr_agent.log import get_logger

|

||||

|

||||

|

||||

def extend_patch(original_file_str, patch_str, patch_extra_lines_before=0,

|

||||

patch_extra_lines_after=0, filename: str = "", new_file_str="") -> str:

|

||||

patch_extra_lines_after=0, filename: str = "") -> str:

|

||||

if not patch_str or (patch_extra_lines_before == 0 and patch_extra_lines_after == 0) or not original_file_str:

|

||||

return patch_str

|

||||

|

||||

original_file_str = decode_if_bytes(original_file_str)

|

||||

new_file_str = decode_if_bytes(new_file_str)

|

||||

if not original_file_str:

|

||||

return patch_str

|

||||

|

||||

@ -23,7 +22,7 @@ def extend_patch(original_file_str, patch_str, patch_extra_lines_before=0,

|

||||

|

||||

try:

|

||||

extended_patch_str = process_patch_lines(patch_str, original_file_str,

|

||||

patch_extra_lines_before, patch_extra_lines_after, new_file_str)

|

||||

patch_extra_lines_before, patch_extra_lines_after)

|

||||

except Exception as e:

|

||||

get_logger().warning(f"Failed to extend patch: {e}", artifact={"traceback": traceback.format_exc()})

|

||||

return patch_str

|

||||

@ -53,13 +52,12 @@ def should_skip_patch(filename):

|

||||

return False

|

||||

|

||||

|

||||

def process_patch_lines(patch_str, original_file_str, patch_extra_lines_before, patch_extra_lines_after, new_file_str=""):

|

||||

def process_patch_lines(patch_str, original_file_str, patch_extra_lines_before, patch_extra_lines_after):

|

||||

allow_dynamic_context = get_settings().config.allow_dynamic_context

|

||||

patch_extra_lines_before_dynamic = get_settings().config.max_extra_lines_before_dynamic_context

|

||||

|

||||

file_original_lines = original_file_str.splitlines()

|

||||

file_new_lines = new_file_str.splitlines() if new_file_str else []

|

||||

len_original_lines = len(file_original_lines)

|

||||

original_lines = original_file_str.splitlines()

|

||||

len_original_lines = len(original_lines)

|

||||

patch_lines = patch_str.splitlines()

|

||||

extended_patch_lines = []

|

||||

|

||||

@ -75,12 +73,12 @@ def process_patch_lines(patch_str, original_file_str, patch_extra_lines_before,

|

||||

if match:

|

||||

# finish processing previous hunk

|

||||

if is_valid_hunk and (start1 != -1 and patch_extra_lines_after > 0):

|

||||

delta_lines_original = [f' {line}' for line in file_original_lines[start1 + size1 - 1:start1 + size1 - 1 + patch_extra_lines_after]]

|

||||

extended_patch_lines.extend(delta_lines_original)

|

||||

delta_lines = [f' {line}' for line in original_lines[start1 + size1 - 1:start1 + size1 - 1 + patch_extra_lines_after]]

|

||||

extended_patch_lines.extend(delta_lines)

|

||||

|

||||

section_header, size1, size2, start1, start2 = extract_hunk_headers(match)

|

||||

|

||||

is_valid_hunk = check_if_hunk_lines_matches_to_file(i, file_original_lines, patch_lines, start1)

|

||||

is_valid_hunk = check_if_hunk_lines_matches_to_file(i, original_lines, patch_lines, start1)

|

||||

|

||||

if is_valid_hunk and (patch_extra_lines_before > 0 or patch_extra_lines_after > 0):

|

||||

def _calc_context_limits(patch_lines_before):

|

||||

@ -95,15 +93,12 @@ def process_patch_lines(patch_str, original_file_str, patch_extra_lines_before,

|

||||

extended_size2 = max(extended_size2 - delta_cap, size2)

|

||||

return extended_start1, extended_size1, extended_start2, extended_size2

|

||||

|

||||

if allow_dynamic_context and file_new_lines:

|

||||

if allow_dynamic_context:

|

||||

extended_start1, extended_size1, extended_start2, extended_size2 = \

|

||||

_calc_context_limits(patch_extra_lines_before_dynamic)

|

||||

|

||||

lines_before_original = file_original_lines[extended_start1 - 1:start1 - 1]

|

||||

lines_before_new = file_new_lines[extended_start2 - 1:start2 - 1]

|

||||

lines_before = original_lines[extended_start1 - 1:start1 - 1]

|

||||

found_header = False

|

||||

if lines_before_original == lines_before_new: # Making sure no changes from a previous hunk

|

||||

for i, line, in enumerate(lines_before_original):

|

||||

for i, line, in enumerate(lines_before):

|

||||

if section_header in line:

|

||||

found_header = True

|

||||

# Update start and size in one line each

|

||||

@ -112,11 +107,6 @@ def process_patch_lines(patch_str, original_file_str, patch_extra_lines_before,

|

||||

# get_logger().debug(f"Found section header in line {i} before the hunk")

|

||||

section_header = ''

|

||||

break

|

||||

else:

|

||||

get_logger().debug(f"Extra lines before hunk are different in original and new file - dynamic context",

|

||||

artifact={"lines_before_original": lines_before_original,

|

||||

"lines_before_new": lines_before_new})

|

||||

|

||||