Mirror of https://github.com/qodo-ai/pr-agent.git (synced 2025-07-21 04:50:39 +08:00)

Compare commits: ok/handle_ ... ok/gitlab_ (3 commits)

| SHA1 |
|---|
| e41247c473 |
| 5704070834 |
| 98fe376add |

.gitignore (vendored), 6 changed lines

@@ -1,8 +1,4 @@
.idea/
venv/
pr_agent/settings/.secrets.toml
__pycache__
dist/
*.egg-info/
build/
review.md
__pycache__

.gitlab-ci.yml (normal file), 11 changed lines

@@ -0,0 +1,11 @@
bot-review:
  stage: test
  variables:
    MR_URL: ${CI_MERGE_REQUEST_PROJECT_URL}/-/merge_requests/${CI_MERGE_REQUEST_IID}
  image: docker:latest
  services:
    - docker:19-dind
  script:
    - docker run --rm -e OPENAI.KEY=${OPEN_API_KEY} -e OPENAI.ORG=${OPEN_API_ORG} -e GITLAB.PERSONAL_ACCESS_TOKEN=${GITLAB_PAT} -e CONFIG.GIT_PROVIDER=gitlab codiumai/pr-agent --pr_url ${MR_URL} describe
  rules:
    - if: $CI_COMMIT_BRANCH != $CI_DEFAULT_BRANCH

@@ -1,6 +0,0 @@
## 2023-07-26

### Added
- New feature for updating the CHANGELOG.md based on the contents of a PR.
- Added support for this feature for the Github provider.
- New configuration settings and prompts for the changelog update feature.

@@ -1,8 +1,8 @@
FROM python:3.10 as base

WORKDIR /app
ADD pyproject.toml .
RUN pip install . && rm pyproject.toml
ADD requirements.txt .
RUN pip install -r requirements.txt && rm requirements.txt
ENV PYTHONPATH=/app
ADD pr_agent pr_agent
ADD github_action/entrypoint.sh /

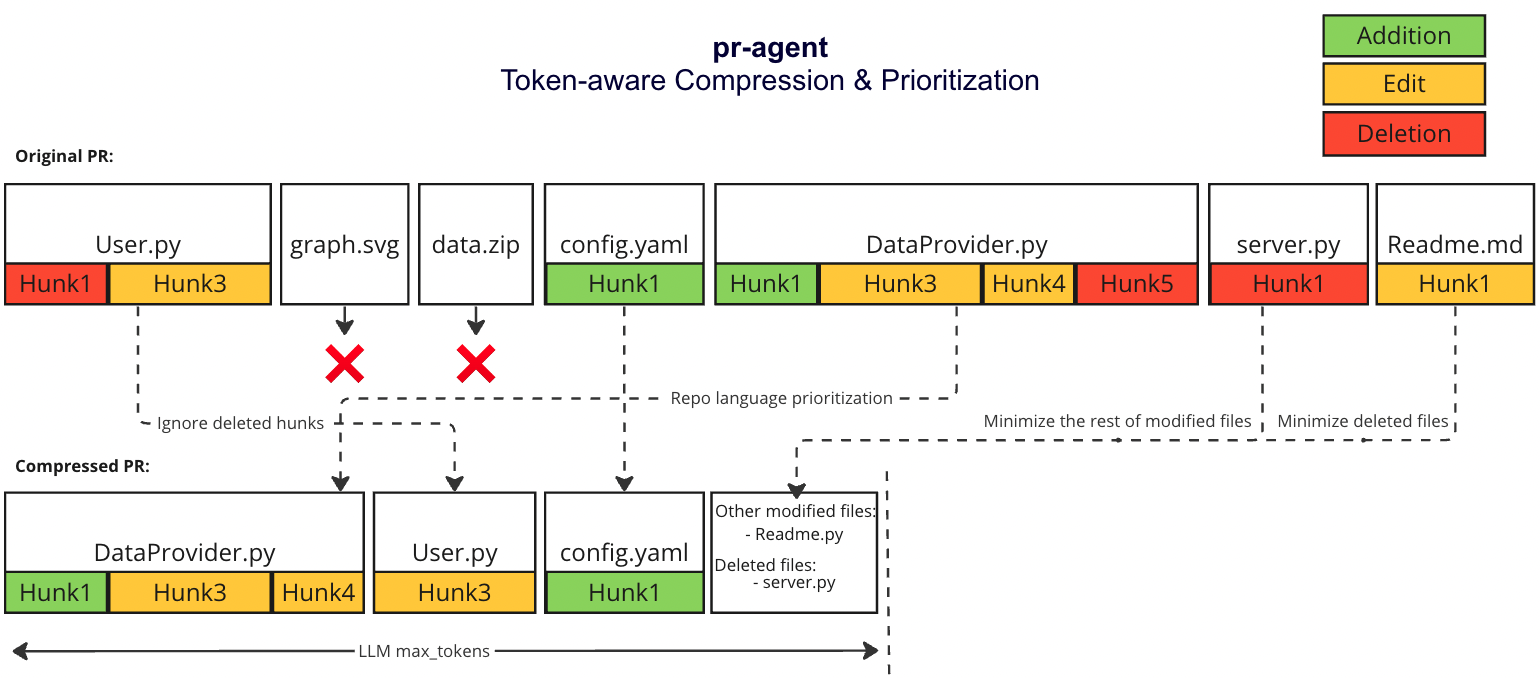

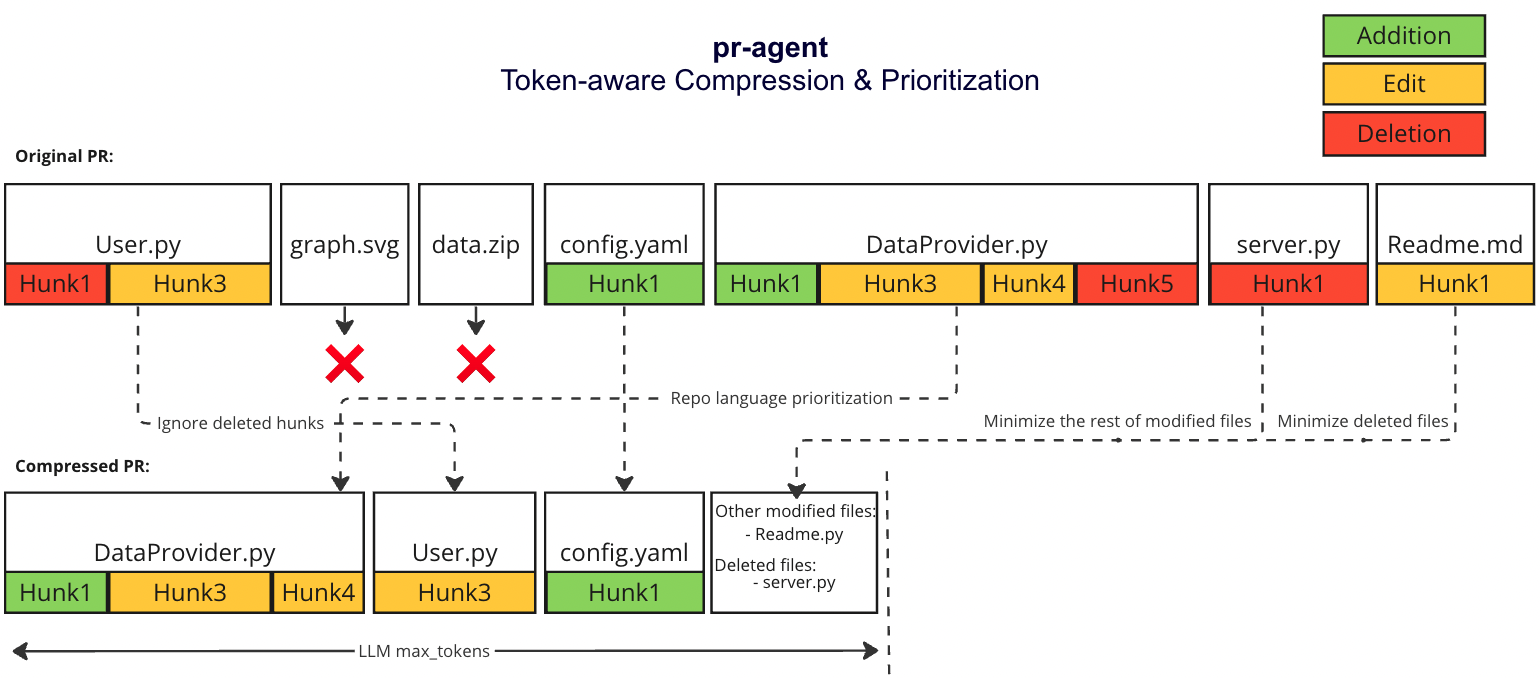

@@ -31,7 +31,7 @@ We prioritize additions over deletions:
- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch
#### Adaptive and token-aware file patch fitting
We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:
1. Within each language we sort the files by the number of tokens in the file (in descending order):
1. Withing each language we sort the files by the number of tokens in the file (in descending order):
* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```
2. Iterate through the patches in the order described above
2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

@@ -39,4 +39,4 @@ We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches af
4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

### Example
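The patch-fitting strategy described in the PR_COMPRESSION.md hunks above can be illustrated with a short Python sketch. It is not PR-Agent's implementation: `count_tokens` is a hypothetical whitespace counter standing in for tiktoken, and `fit_patches`, `max_tokens`, and `buffer` are illustrative names. Step 3 of the list is not visible in this diff, so collecting the names of patches that did not fit is an assumption.

```python
from typing import Dict, List, Tuple


def count_tokens(text: str) -> int:
    # Hypothetical stand-in for tiktoken: one token per whitespace-separated word.
    return len(text.split())


def fit_patches(
    patches_by_language: List[Dict[str, str]],
    deleted_files: List[str],
    max_tokens: int,
    buffer: int,
) -> Tuple[List[str], List[str]]:
    """Greedily pack patches into a token budget.

    patches_by_language holds one {filename: patch} dict per language,
    already ordered by language priority. Returns the prompt entries that
    fit and the names of modified files whose patches did not fit.
    """
    # Within each language, sort files by token count (descending).
    ordered: List[Tuple[str, str]] = []
    for lang_patches in patches_by_language:
        ordered.extend(
            sorted(lang_patches.items(), key=lambda kv: count_tokens(kv[1]), reverse=True)
        )

    entries: List[str] = []
    skipped: List[str] = []
    total = 0
    soft_limit = max_tokens - buffer
    # Add patches until the prompt is within `buffer` tokens of the maximum;
    # a patch that would cross that line is skipped and remembered by name.
    for filename, patch in ordered:
        cost = count_tokens(patch)
        if total + cost > soft_limit:
            skipped.append(filename)
            continue
        entries.append(patch)
        total += cost

    # With budget left, list deleted files until the hard limit is reached.
    for filename in deleted_files:
        entry = f"deleted: {filename}"
        cost = count_tokens(entry)
        if total + cost > max_tokens:
            break
        entries.append(entry)
        total += cost
    return entries, skipped
```

Skipping an oversized patch rather than stopping lets smaller patches later in the order still use the remaining budget; the diff does not say whether PR-Agent makes the same choice.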

README.md, 17 changed lines

@@ -66,7 +66,6 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull
- [Usage and tools](#usage-and-tools)
- [Configuration](./CONFIGURATION.md)
- [How it works](#how-it-works)
- [Why use PR-Agent](#why-use-pr-agent)
- [Roadmap](#roadmap)
- [Similar projects](#similar-projects)
</div>

@@ -82,10 +81,8 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull
| | Auto-Description | :white_check_mark: | :white_check_mark: | |
| | Improve Code | :white_check_mark: | :white_check_mark: | |
| | Reflect and Review | :white_check_mark: | | |
| | Update CHANGELOG.md | :white_check_mark: | | |
| | | | | |
| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: |
| | App / webhook | :white_check_mark: | :white_check_mark: | |
| | Tagging bot | :white_check_mark: | | |
| | Actions | :white_check_mark: | | |
| | | | | |

@@ -100,7 +97,6 @@ Examples for invoking the different tools via the CLI:
- **Improve**: python cli.py --pr-url=<pr_url> improve
- **Ask**: python cli.py --pr-url=<pr_url> ask "Write me a poem about this PR"
- **Reflect**: python cli.py --pr-url=<pr_url> reflect
- **Update changelog**: python cli.py --pr-url=<pr_url> update_changelog

"<pr_url>" is the url of the relevant PR (for example: https://github.com/Codium-ai/pr-agent/pull/50).

@@ -149,19 +145,6 @@ There are several ways to use PR-Agent:

Check out the [PR Compression strategy](./PR_COMPRESSION.md) page for more details on how we convert a code diff to a manageable LLM prompt.

## Why use PR-Agent?

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

Here are some of the reasons why:

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single GPT-4 call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.
- Our [PR Compression strategy](./PR_COMPRESSION.md) is a core ability that makes it possible to effectively tackle both short and long PRs.
- Our JSON prompting strategy enables us to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the configuration file. Adding additional categories is easy and accessible.
- We support **multiple git providers** (GitHub, GitLab, Bitbucket), and multiple ways to use the tool (CLI, GitHub Action, Docker, ...).
- We are open-source, and welcome contributions from the community.


## Roadmap

- [ ] Support open-source models, as a replacement for OpenAI models. (Note - a minimal requirement for each open-source model is to have 8k+ context, and good support for generating JSON as an output)

@@ -1,8 +1,8 @@
FROM python:3.10 as base

WORKDIR /app
ADD pyproject.toml .
RUN pip install . && rm pyproject.toml
ADD requirements.txt .
RUN pip install -r requirements.txt && rm requirements.txt
ENV PYTHONPATH=/app
ADD pr_agent pr_agent

@@ -4,9 +4,9 @@ RUN yum update -y && \
yum install -y gcc python3-devel && \
yum clean all

ADD pyproject.toml .
RUN pip install . && rm pyproject.toml
RUN pip install mangum==0.17.0
ADD requirements.txt .
RUN pip install -r requirements.txt && rm requirements.txt
RUN pip install mangum==16.0.0
COPY pr_agent/ ${LAMBDA_TASK_ROOT}/pr_agent/

CMD ["pr_agent.servers.serverless.serverless"]

@@ -6,7 +6,6 @@ from pr_agent.tools.pr_description import PRDescription
from pr_agent.tools.pr_information_from_user import PRInformationFromUser
from pr_agent.tools.pr_questions import PRQuestions
from pr_agent.tools.pr_reviewer import PRReviewer
from pr_agent.tools.pr_update_changelog import PRUpdateChangelog


class PRAgent:

@@ -27,9 +26,7 @@ class PRAgent:
elif any(cmd == action for cmd in ["/improve", "/improve_code"]):
await PRCodeSuggestions(pr_url).suggest()
elif any(cmd == action for cmd in ["/ask", "/ask_question"]):
await PRQuestions(pr_url, args=args).answer()
elif any(cmd == action for cmd in ["/update_changelog"]):
await PRUpdateChangelog(pr_url, args=args).update_changelog()
await PRQuestions(pr_url, args).answer()
else:
return False

@@ -13,7 +13,7 @@ if settings.config.use_extra_bad_extensions:


def filter_bad_extensions(files):
return [f for f in files if f.filename is not None and is_valid_file(f.filename)]
return [f for f in files if is_valid_file(f.filename)]


def is_valid_file(filename):
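The `language_handler.py` hunk above differs only in whether `filter_bad_extensions` checks `f.filename is not None` before calling `is_valid_file`. A minimal sketch of why that guard matters follows. `BAD_EXTENSIONS`, `FileStub`, and this `is_valid_file` body are hypothetical stand-ins: PR-Agent reads its extension list from settings, and its real `is_valid_file` is not shown in this diff.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical extension blocklist; PR-Agent builds its list from settings.
BAD_EXTENSIONS = {"png", "jpg", "lock", "pdf"}


@dataclass
class FileStub:
    """Hypothetical stand-in for a git provider's file object."""
    filename: Optional[str]


def is_valid_file(filename: str) -> bool:
    # Compare the text after the last dot (lower-cased) against the blocklist.
    return filename.rsplit(".", 1)[-1].lower() not in BAD_EXTENSIONS


def filter_bad_extensions(files: List[FileStub]) -> List[FileStub]:
    # Check for None first so is_valid_file never receives a missing name;
    # calling .rsplit on None would raise AttributeError.
    return [f for f in files if f.filename is not None and is_valid_file(f.filename)]
```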

@@ -3,8 +3,6 @@ from __future__ import annotations
import logging
from typing import Tuple, Union, Callable, List

from github import RateLimitExceededException

from pr_agent.algo import MAX_TOKENS
from pr_agent.algo.git_patch_processing import convert_to_hunks_with_lines_numbers, extend_patch, handle_patch_deletions
from pr_agent.algo.language_handler import sort_files_by_main_languages

@@ -21,6 +19,7 @@ OUTPUT_BUFFER_TOKENS_SOFT_THRESHOLD = 1000
OUTPUT_BUFFER_TOKENS_HARD_THRESHOLD = 600
PATCH_EXTRA_LINES = 3


def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: str,
add_line_numbers_to_hunks: bool = False, disable_extra_lines: bool = False) -> str:
"""

@@ -41,11 +40,7 @@ def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: s
global PATCH_EXTRA_LINES
PATCH_EXTRA_LINES = 0

try:
diff_files = list(git_provider.get_diff_files())
except RateLimitExceededException as e:
logging.error(f"Rate limit exceeded for git provider API. original message {e}")
raise
diff_files = list(git_provider.get_diff_files())

# get pr languages
pr_languages = sort_files_by_main_languages(git_provider.get_languages(), diff_files)

@@ -60,7 +55,7 @@ def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: s

# if we are over the limit, start pruning
patches_compressed, modified_file_names, deleted_file_names = \
pr_generate_compressed_diff(pr_languages, token_handler, model, add_line_numbers_to_hunks)
pr_generate_compressed_diff(pr_languages, token_handler, add_line_numbers_to_hunks)

final_diff = "\n".join(patches_compressed)
if modified_file_names:

@@ -8,7 +8,6 @@ from pr_agent.tools.pr_description import PRDescription
from pr_agent.tools.pr_information_from_user import PRInformationFromUser
from pr_agent.tools.pr_questions import PRQuestions
from pr_agent.tools.pr_reviewer import PRReviewer
from pr_agent.tools.pr_update_changelog import PRUpdateChangelog


def run(args=None):

@@ -28,15 +27,13 @@ ask / ask_question [question] - Ask a question about the PR.
describe / describe_pr - Modify the PR title and description based on the PR's contents.
improve / improve_code - Suggest improvements to the code in the PR as pull request comments ready to commit.
reflect - Ask the PR author questions about the PR.
update_changelog - Update the changelog based on the PR's contents.
""")
parser.add_argument('--pr_url', type=str, help='The URL of the PR to review', required=True)
parser.add_argument('command', type=str, help='The', choices=['review', 'review_pr',
'ask', 'ask_question',
'describe', 'describe_pr',
'improve', 'improve_code',
'reflect', 'review_after_reflect',
'update_changelog'],
'reflect', 'review_after_reflect'],
default='review')
parser.add_argument('rest', nargs=argparse.REMAINDER, default=[])
args = parser.parse_args(args)

@@ -52,8 +49,7 @@ update_changelog - Update the changelog based on the PR's contents.
'review': _handle_review_command,
'review_pr': _handle_review_command,
'reflect': _handle_reflect_command,
'review_after_reflect': _handle_review_after_reflect_command,
'update_changelog': _handle_update_changelog,
'review_after_reflect': _handle_review_after_reflect_command
}
if command in commands:
commands[command](args.pr_url, args.rest)

@@ -100,10 +96,6 @@ def _handle_review_after_reflect_command(pr_url: str, rest: list):
reviewer = PRReviewer(pr_url, cli_mode=True, is_answer=True)
asyncio.run(reviewer.review())

def _handle_update_changelog(pr_url: str, rest: list):
print(f"Updating changelog for: {pr_url}")
reviewer = PRUpdateChangelog(pr_url, cli_mode=True, args=rest)
asyncio.run(reviewer.update_changelog())

if __name__ == '__main__':
run()
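The `commands` dictionary in `cli.py` maps each sub-command name (including aliases such as `review` / `review_pr`) to a handler that receives the PR URL and the remaining arguments. A self-contained sketch of that dispatch pattern follows; the handler bodies are hypothetical stubs that return strings instead of running PR-Agent's tool classes, and `COMMANDS` and `run` here are illustrative names.

```python
import argparse
from typing import Callable, Dict, List


# Hypothetical stub handlers; the real CLI runs PR-Agent tools here.
def _handle_review(pr_url: str, rest: List[str]) -> str:
    return f"review {pr_url}"


def _handle_update_changelog(pr_url: str, rest: List[str]) -> str:
    return f"update_changelog {pr_url} {' '.join(rest)}".strip()


COMMANDS: Dict[str, Callable[[str, List[str]], str]] = {
    "review": _handle_review,
    "review_pr": _handle_review,  # alias sharing the same handler
    "update_changelog": _handle_update_changelog,
}


def run(argv: List[str]) -> str:
    parser = argparse.ArgumentParser(description="Sketch of a command dispatcher")
    parser.add_argument("--pr_url", type=str, required=True)
    # Restricting choices to the table's keys keeps parser and dispatch in sync.
    parser.add_argument("command", choices=sorted(COMMANDS))
    parser.add_argument("rest", nargs=argparse.REMAINDER, default=[])
    args = parser.parse_args(argv)
    return COMMANDS[args.command](args.pr_url, args.rest)
```

Deriving `choices` from the dictionary means a command can only be added in one place, which avoids the kind of drift visible in the two versions of the `choices` list above.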

@@ -1,11 +1,7 @@
from os.path import abspath, dirname, join
from pathlib import Path
from typing import Optional

from dynaconf import Dynaconf

PR_AGENT_TOML_KEY = 'pr-agent'

current_dir = dirname(abspath(__file__))
settings = Dynaconf(
envvar_prefix=False,

@@ -19,36 +15,6 @@ settings = Dynaconf(
"settings/pr_description_prompts.toml",
"settings/pr_code_suggestions_prompts.toml",
"settings/pr_information_from_user_prompts.toml",
"settings/pr_update_changelog.toml",
"settings_prod/.secrets.toml"
]]
)


# Add local configuration from pyproject.toml of the project being reviewed
def _find_repository_root() -> Path:
"""
Identify project root directory by recursively searching for the .git directory in the parent directories.
"""
cwd = Path.cwd().resolve()
no_way_up = False
while not no_way_up:
no_way_up = cwd == cwd.parent
if (cwd / ".git").is_dir():
return cwd
cwd = cwd.parent
return None

def _find_pyproject() -> Optional[Path]:
"""
Search for file pyproject.toml in the repository root.
"""
repo_root = _find_repository_root()
if repo_root:
pyproject = _find_repository_root() / "pyproject.toml"
return pyproject if pyproject.is_file() else None
return None

pyproject_path = _find_pyproject()
if pyproject_path is not None:
settings.load_file(pyproject_path, env=f'tool.{PR_AGENT_TOML_KEY}')
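The `_find_repository_root` function shown above walks upward until it finds a `.git` directory, and can return `None` despite its `-> Path` annotation; `_find_pyproject` also calls `_find_repository_root()` a second time instead of reusing `repo_root`. A sketch that addresses both, using `Path.parents` for the upward walk. The names `find_repository_root` and `find_pyproject` and the optional `start` parameter are hypothetical, added so the functions can be tested against a temporary directory.

```python
from pathlib import Path
from typing import Optional


def find_repository_root(start: Optional[Path] = None) -> Optional[Path]:
    """Walk upward from `start` (default: cwd) to the first directory containing `.git`."""
    current = (start or Path.cwd()).resolve()
    # Check the starting directory itself, then every ancestor up to the filesystem root.
    for candidate in (current, *current.parents):
        if (candidate / ".git").is_dir():
            return candidate
    return None  # reached the filesystem root without finding a repository


def find_pyproject(start: Optional[Path] = None) -> Optional[Path]:
    """Return <repository root>/pyproject.toml if it exists, else None."""
    repo_root = find_repository_root(start)  # searched once and reused
    if repo_root is None:
        return None
    pyproject = repo_root / "pyproject.toml"
    return pyproject if pyproject.is_file() else None
```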

@@ -2,13 +2,11 @@ from pr_agent.config_loader import settings
from pr_agent.git_providers.bitbucket_provider import BitbucketProvider
from pr_agent.git_providers.github_provider import GithubProvider
from pr_agent.git_providers.gitlab_provider import GitLabProvider
from pr_agent.git_providers.local_git_provider import LocalGitProvider

_GIT_PROVIDERS = {
'github': GithubProvider,
'gitlab': GitLabProvider,
'bitbucket': BitbucketProvider,
'local' : LocalGitProvider
}

def get_git_provider():

@@ -27,7 +27,7 @@ class BitbucketProvider:
self.set_pr(pr_url)

def is_supported(self, capability: str) -> bool:
if capability in ['get_issue_comments', 'create_inline_comment', 'publish_inline_comments', 'get_labels']:
if capability in ['get_issue_comments', 'create_inline_comment', 'publish_inline_comments']:
return False
return True

@@ -60,10 +60,6 @@ class GitProvider(ABC):
def publish_labels(self, labels):
pass

@abstractmethod
def get_labels(self):
pass

@abstractmethod
def remove_initial_comment(self):
pass

@@ -136,4 +132,3 @@ class IncrementalPR:
self.commits_range = None
self.first_new_commit_sha = None
self.last_seen_commit_sha = None

@@ -3,25 +3,19 @@ from datetime import datetime
from typing import Optional, Tuple
from urllib.parse import urlparse

from github import AppAuthentication, Auth, Github, GithubException
from retry import retry
from starlette_context import context
from github import AppAuthentication, Github, Auth

from pr_agent.config_loader import settings

from .git_provider import FilePatchInfo, GitProvider, IncrementalPR
from ..algo.language_handler import is_valid_file
from ..algo.utils import load_large_diff
from .git_provider import FilePatchInfo, GitProvider, IncrementalPR
from ..servers.utils import RateLimitExceeded


class GithubProvider(GitProvider):
def __init__(self, pr_url: Optional[str] = None, incremental=IncrementalPR(False)):
self.repo_obj = None
try:
self.installation_id = context.get("installation_id", None)
except Exception:
self.installation_id = None
self.installation_id = settings.get("GITHUB.INSTALLATION_ID")
self.github_client = self._get_github_client()
self.repo = None
self.pr_num = None

@@ -84,34 +78,27 @@ class GithubProvider(GitProvider):
return self.file_set.values()
return self.pr.get_files()

@retry(exceptions=RateLimitExceeded,
tries=settings.github.ratelimit_retries, delay=2, backoff=2, jitter=(1, 3))
def get_diff_files(self) -> list[FilePatchInfo]:
try:
files = self.get_files()
diff_files = []
for file in files:
if is_valid_file(file.filename):
new_file_content_str = self._get_pr_file_content(file, self.pr.head.sha)
patch = file.patch
if self.incremental.is_incremental and self.file_set:
original_file_content_str = self._get_pr_file_content(file,
self.incremental.last_seen_commit_sha)
patch = load_large_diff(file,
new_file_content_str,
original_file_content_str,
None)
self.file_set[file.filename] = patch
else:
original_file_content_str = self._get_pr_file_content(file, self.pr.base.sha)
files = self.get_files()
diff_files = []
for file in files:
if is_valid_file(file.filename):
new_file_content_str = self._get_pr_file_content(file, self.pr.head.sha)
patch = file.patch
if self.incremental.is_incremental and self.file_set:
original_file_content_str = self._get_pr_file_content(file, self.incremental.last_seen_commit_sha)
patch = load_large_diff(file,
new_file_content_str,
original_file_content_str,
None)
self.file_set[file.filename] = patch
else:
original_file_content_str = self._get_pr_file_content(file, self.pr.base.sha)

diff_files.append(
FilePatchInfo(original_file_content_str, new_file_content_str, patch, file.filename))
self.diff_files = diff_files
return diff_files
except GithubException.RateLimitExceededException as e:
logging.error(f"Rate limit exceeded for GitHub API. Original message: {e}")
raise RateLimitExceeded("Rate limit exceeded for GitHub API.") from e
diff_files.append(
FilePatchInfo(original_file_content_str, new_file_content_str, patch, file.filename))
self.diff_files = diff_files
return diff_files

def publish_description(self, pr_title: str, pr_body: str):
self.pr.edit(title=pr_title, body=pr_body)
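The `@retry(exceptions=RateLimitExceeded, tries=..., delay=2, backoff=2, jitter=(1, 3))` decorator on `get_diff_files` retries the call after a rate-limit error, waiting longer each time. A hand-rolled sketch of that exponential-backoff-with-jitter behavior follows. `retry_with_backoff` is a hypothetical helper, not the `retry` package's implementation, and the local `RateLimitExceeded` class only stands in for `pr_agent.servers.utils.RateLimitExceeded`. An injectable `sleep` lets the delay schedule be checked without actually waiting.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


class RateLimitExceeded(Exception):
    """Stand-in for pr_agent.servers.utils.RateLimitExceeded."""


def retry_with_backoff(
    func: Callable[[], T],
    exceptions: Tuple[Type[BaseException], ...] = (RateLimitExceeded,),
    tries: int = 3,
    delay: float = 2.0,
    backoff: float = 2.0,
    jitter: Tuple[float, float] = (1.0, 3.0),
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func, retrying on `exceptions` for at most `tries` attempts in total."""
    if tries < 1:
        raise ValueError("tries must be at least 1")
    current_delay = delay
    for attempt in range(1, tries + 1):
        try:
            return func()
        except exceptions:
            if attempt == tries:
                raise  # out of attempts: propagate the last error
            sleep(current_delay)
            # Grow the delay geometrically, then add random jitter to spread retries.
            current_delay = current_delay * backoff + random.uniform(*jitter)
    raise AssertionError("unreachable: the loop always returns or raises")
```

With `delay=2`, `backoff=2`, and `jitter=(1, 3)`, the first wait is 2 seconds and the second falls between 5 and 7 seconds.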

@@ -335,12 +322,5 @@ class GithubProvider(GitProvider):
headers, data = self.pr._requester.requestJsonAndCheck(
"PUT", f"{self.pr.issue_url}/labels", input=post_parameters
)
except Exception as e:
logging.exception(f"Failed to publish labels, error: {e}")

def get_labels(self):
try:
return [label.name for label in self.pr.labels]
except Exception as e:
logging.exception(f"Failed to get labels, error: {e}")
return []
except:
logging.exception("Failed to publish labels")

@@ -8,14 +8,11 @@ from gitlab import GitlabGetError

from pr_agent.config_loader import settings

from ..algo.language_handler import is_valid_file
from .git_provider import EDIT_TYPE, FilePatchInfo, GitProvider

logger = logging.getLogger()
from ..algo.language_handler import is_valid_file


class GitLabProvider(GitProvider):

def __init__(self, merge_request_url: Optional[str] = None, incremental: Optional[bool] = False):
gitlab_url = settings.get("GITLAB.URL", None)
if not gitlab_url:

@@ -24,8 +21,8 @@ class GitLabProvider(GitProvider):
if not gitlab_access_token:
raise ValueError("GitLab personal access token is not set in the config file")
self.gl = gitlab.Gitlab(
url=gitlab_url,
oauth_token=gitlab_access_token
gitlab_url,
gitlab_access_token
)
self.id_project = None
self.id_mr = None

@@ -50,12 +47,7 @@ class GitLabProvider(GitProvider):
def _set_merge_request(self, merge_request_url: str):
self.id_project, self.id_mr = self._parse_merge_request_url(merge_request_url)
self.mr = self._get_merge_request()
try:
self.last_diff = self.mr.diffs.list(get_all=True)[-1]
except IndexError as e:
logger.error(f"Could not get diff for merge request {self.id_mr}")
raise ValueError(f"Could not get diff for merge request {self.id_mr}") from e

self.last_diff = self.mr.diffs.list()[-1]

def _get_pr_file_content(self, file_path: str, branch: str) -> str:
try:

@@ -120,7 +112,7 @@ class GitLabProvider(GitProvider):
def create_inline_comment(self, body: str, relevant_file: str, relevant_line_in_file: str):
raise NotImplementedError("Gitlab provider does not support creating inline comments yet")

def create_inline_comments(self, comments: list[dict]):
def create_inline_comment(self, comments: list[dict]):
raise NotImplementedError("Gitlab provider does not support publishing inline comments yet")

def send_inline_comment(self, body, edit_type, found, relevant_file, relevant_line_in_file, source_line_no,

@@ -244,30 +236,20 @@ class GitLabProvider(GitProvider):
def get_issue_comments(self):
raise NotImplementedError("GitLab provider does not support issue comments yet")

def _parse_merge_request_url(self, merge_request_url: str) -> Tuple[str, int]:
def _parse_merge_request_url(self, merge_request_url: str) -> Tuple[int, int]:
parsed_url = urlparse(merge_request_url)

path_parts = parsed_url.path.strip('/').split('/')
if 'merge_requests' not in path_parts:
if path_parts[-2] != 'merge_requests':
raise ValueError("The provided URL does not appear to be a GitLab merge request URL")

mr_index = path_parts.index('merge_requests')
# Ensure there is an ID after 'merge_requests'
if len(path_parts) <= mr_index + 1:
raise ValueError("The provided URL does not contain a merge request ID")

try:
mr_id = int(path_parts[mr_index + 1])
mr_id = int(path_parts[-1])
except ValueError as e:
raise ValueError("Unable to convert merge request ID to integer") from e

# Handle special delimiter (-)
project_path = "/".join(path_parts[:mr_index])
if project_path.endswith('/-'):
project_path = project_path[:-2]

# Return the path before 'merge_requests' and the ID
return project_path, mr_id
# Gitlab supports access by both project numeric ID as well as 'namespace/project_name'
return "/".join(path_parts[:2]), mr_id

def _get_merge_request(self):
mr = self.gl.projects.get(self.id_project).mergerequests.get(self.id_mr)

@@ -276,15 +258,8 @@ class GitLabProvider(GitProvider):
def get_user_id(self):
return None

def publish_labels(self, pr_types):
try:
self.mr.labels = list(set(pr_types))
self.mr.save()
except Exception as e:
logging.exception(f"Failed to publish labels, error: {e}")

def publish_inline_comments(self, comments: list[dict]):
def publish_labels(self, labels):
pass

def get_labels(self):
return self.mr.labels
def publish_inline_comments(self, comments: list[dict]):
pass
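One of the two versions of `_parse_merge_request_url` in the GitLab hunks above locates `merge_requests` by index, so it keeps nested group paths and strips GitLab's `/-/` route separator, whereas `"/".join(path_parts[:2])` keeps only the first two path segments. A standalone sketch of the index-based logic follows, written as a hypothetical free function `parse_merge_request_url` so it can be exercised without a GitLab client. Dropping a trailing `-` path segment is equivalent to the `endswith('/-')` check in the diff.

```python
from typing import Tuple
from urllib.parse import urlparse


def parse_merge_request_url(merge_request_url: str) -> Tuple[str, int]:
    """Split a GitLab merge request URL into (project path, merge request IID)."""
    path_parts = urlparse(merge_request_url).path.strip("/").split("/")
    if "merge_requests" not in path_parts:
        raise ValueError("The provided URL does not appear to be a GitLab merge request URL")
    mr_index = path_parts.index("merge_requests")
    # Ensure there is an ID after 'merge_requests'.
    if len(path_parts) <= mr_index + 1:
        raise ValueError("The provided URL does not contain a merge request ID")
    try:
        mr_id = int(path_parts[mr_index + 1])
    except ValueError as e:
        raise ValueError("Unable to convert merge request ID to integer") from e
    # Drop the "-" segment GitLab inserts before routes, e.g. group/project/-/merge_requests/7.
    project_parts = path_parts[:mr_index]
    if project_parts and project_parts[-1] == "-":
        project_parts = project_parts[:-1]
    return "/".join(project_parts), mr_id
```

Because the ID is read right after `merge_requests` rather than from the last segment, URLs with a trailing view such as `/diffs` still parse.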

@ -1,178 +0,0 @@

|

||||

import logging

|

||||

from collections import Counter

|

||||

from pathlib import Path

|

||||

from typing import List

|

||||

|

||||

from git import Repo

|

||||

|

||||

from pr_agent.config_loader import _find_repository_root, settings

|

||||

from pr_agent.git_providers.git_provider import EDIT_TYPE, FilePatchInfo, GitProvider

|

||||

|

||||

|

||||

class PullRequestMimic:

|

||||

"""

|

||||

This class mimics the PullRequest class from the PyGithub library for the LocalGitProvider.

|

||||

"""

|

||||

|

||||

def __init__(self, title: str, diff_files: List[FilePatchInfo]):

|

||||

self.title = title

|

||||

self.diff_files = diff_files

|

||||

|

||||

|

||||

class LocalGitProvider(GitProvider):

|

||||

"""

|

||||

This class implements the GitProvider interface for local git repositories.

|

||||

It mimics the PR functionality of the GitProvider interface,

|

||||

but does not require a hosted git repository.

|

||||

Instead of providing a PR url, the user provides a local branch path to generate a diff-patch.

|

||||

For the MVP it only supports the /review and /describe capabilities.

|

||||

"""

|

||||

|

||||

def __init__(self, target_branch_name, incremental=False):

|

||||

self.repo_path = _find_repository_root()

|

||||

if self.repo_path is None:

|

||||

raise ValueError('Could not find repository root')

|

||||

self.repo = Repo(self.repo_path)

|

||||

self.head_branch_name = self.repo.head.ref.name

|

||||

self.target_branch_name = target_branch_name

|

||||

self._prepare_repo()

|

||||

self.diff_files = None

|

||||

self.pr = PullRequestMimic(self.get_pr_title(), self.get_diff_files())

|

||||

self.description_path = settings.get('local.description_path') \

|

||||

if settings.get('local.description_path') is not None else self.repo_path / 'description.md'

|

||||

self.review_path = settings.get('local.review_path') \

|

||||

if settings.get('local.review_path') is not None else self.repo_path / 'review.md'

|

||||

# inline code comments are not supported for local git repositories

|

||||

settings.pr_reviewer.inline_code_comments = False

|

||||

|

||||

def _prepare_repo(self):

|

||||

"""

|

||||

Prepare the repository for PR-mimic generation.

|

||||

"""

|

||||

logging.debug('Preparing repository for PR-mimic generation...')

|

||||

if self.repo.is_dirty():

|

||||

raise ValueError('The repository is not in a clean state. Please commit or stash pending changes.')

|

||||

if self.target_branch_name not in self.repo.heads:

|

||||

raise KeyError(f'Branch: {self.target_branch_name} does not exist')

|

||||

|

||||

def is_supported(self, capability: str) -> bool:

|

||||

if capability in ['get_issue_comments', 'create_inline_comment', 'publish_inline_comments', 'get_labels']:

|

||||

return False

|

||||

return True

|

||||

|

||||

def get_diff_files(self) -> list[FilePatchInfo]:

|

||||

diffs = self.repo.head.commit.diff(

|

||||

self.repo.merge_base(self.repo.head, self.repo.branches[self.target_branch_name]),

|

||||

create_patch=True,

|

||||

R=True

|

||||

)

|

||||

diff_files = []

|

||||

for diff_item in diffs:

|

||||

if diff_item.a_blob is not None:

|

||||

original_file_content_str = diff_item.a_blob.data_stream.read().decode('utf-8')

|

||||

else:

|

||||

original_file_content_str = "" # empty file

|

||||

if diff_item.b_blob is not None:

|

||||

new_file_content_str = diff_item.b_blob.data_stream.read().decode('utf-8')

|

||||

else:

|

||||

new_file_content_str = "" # empty file

|

||||

edit_type = EDIT_TYPE.MODIFIED

|

||||

if diff_item.new_file:

|

||||

edit_type = EDIT_TYPE.ADDED

|

||||

elif diff_item.deleted_file:

|

||||

edit_type = EDIT_TYPE.DELETED

|

||||

elif diff_item.renamed_file:

|

||||

edit_type = EDIT_TYPE.RENAMED

|

||||

diff_files.append(

|

||||

FilePatchInfo(original_file_content_str,

|

||||

new_file_content_str,

|

||||

diff_item.diff.decode('utf-8'),

|

||||

diff_item.b_path,

|

||||

edit_type=edit_type,

|

||||

old_filename=None if diff_item.a_path == diff_item.b_path else diff_item.a_path

|

||||

)

|

||||

)

|

||||

self.diff_files = diff_files

|

||||

return diff_files

|

||||

|

||||

def get_files(self) -> List[str]:

|

||||

"""

|

||||

        Returns a list of files with changes in the diff.
        """
        diff_index = self.repo.head.commit.diff(
            self.repo.merge_base(self.repo.head, self.repo.branches[self.target_branch_name]),
            R=True
        )
        # Get the list of changed files
        diff_files = [item.a_path for item in diff_index]
        return diff_files

    def publish_description(self, pr_title: str, pr_body: str):
        with open(self.description_path, "w") as file:
            # Write the string to the file
            file.write(pr_title + '\n' + pr_body)

    def publish_comment(self, pr_comment: str, is_temporary: bool = False):
        with open(self.review_path, "w") as file:
            # Write the string to the file
            file.write(pr_comment)

    def publish_inline_comment(self, body: str, relevant_file: str, relevant_line_in_file: str):
        raise NotImplementedError('Publishing inline comments is not implemented for the local git provider')

    def create_inline_comment(self, body: str, relevant_file: str, relevant_line_in_file: str):
        raise NotImplementedError('Creating inline comments is not implemented for the local git provider')

    def publish_inline_comments(self, comments: list[dict]):
        raise NotImplementedError('Publishing inline comments is not implemented for the local git provider')

    def publish_code_suggestion(self, body: str, relevant_file: str,
                                relevant_lines_start: int, relevant_lines_end: int):
        raise NotImplementedError('Publishing code suggestions is not implemented for the local git provider')

    def publish_code_suggestions(self, code_suggestions: list):
        raise NotImplementedError('Publishing code suggestions is not implemented for the local git provider')

    def publish_labels(self, labels):
        pass # Not applicable to the local git provider, but required by the interface

    def remove_initial_comment(self):
        pass # Not applicable to the local git provider, but required by the interface

    def get_languages(self):
        """
        Calculate percentage of languages in repository. Used for hunk prioritisation.
        """
        # Get all files in repository
        filepaths = [Path(item.path) for item in self.repo.tree().traverse() if item.type == 'blob']
        # Identify language by file extension and count
        lang_count = Counter(ext.lstrip('.') for filepath in filepaths for ext in [filepath.suffix.lower()])
        # Convert counts to percentages
        total_files = len(filepaths)
        lang_percentage = {lang: count / total_files * 100 for lang, count in lang_count.items()}
        return lang_percentage

    def get_pr_branch(self):
        return self.repo.head

    def get_user_id(self):
        return -1 # Not used anywhere for the local provider, but required by the interface

    def get_pr_description(self):
        commits_diff = list(self.repo.iter_commits(self.target_branch_name + '..HEAD'))
        # Get the commit messages and concatenate
        commit_messages = " ".join([commit.message for commit in commits_diff])
        # TODO Handle the description better - maybe use gpt-3.5 summarisation here?
        return commit_messages[:200] # Use max 200 characters

    def get_pr_title(self):
        """
        Substitutes the branch-name as the PR-mimic title.
        """
        return self.head_branch_name

    def get_issue_comments(self):
        raise NotImplementedError('Getting issue comments is not implemented for the local git provider')

    def get_labels(self):
        raise NotImplementedError('Getting labels is not implemented for the local git provider')
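`get_languages` counts repository files per lower-cased extension and converts the counts to percentages. The snippet below is a minimal standalone sketch of that calculation on a plain list of paths, so it runs without a GitPython repository; `language_percentages` is a hypothetical helper name, not part of pr-agent.

```python
from collections import Counter
from pathlib import Path


def language_percentages(paths: list[str]) -> dict[str, float]:
    """Hypothetical helper mirroring get_languages on a list of file paths."""
    filepaths = [Path(p) for p in paths]
    # Count files per lower-cased extension, without the leading dot;
    # files with no extension end up under the empty-string key
    lang_count = Counter(fp.suffix.lower().lstrip('.') for fp in filepaths)
    total_files = len(filepaths)
    # An empty list yields an empty Counter, so no division is ever attempted
    return {lang: count / total_files * 100 for lang, count in lang_count.items()}


print(language_percentages(["a.py", "b.PY", "c.md", "Makefile"]))
# {'py': 50.0, 'md': 25.0, '': 25.0}
```

Extensionless files such as `Makefile` are grouped under `''`, exactly as in the method above.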

@ -8,61 +8,50 @@ from pr_agent.tools.pr_reviewer import PRReviewer

async def run_action():
    # Get environment variables
    GITHUB_EVENT_NAME = os.environ.get('GITHUB_EVENT_NAME')
    GITHUB_EVENT_PATH = os.environ.get('GITHUB_EVENT_PATH')
    OPENAI_KEY = os.environ.get('OPENAI_KEY')
    OPENAI_ORG = os.environ.get('OPENAI_ORG')
    GITHUB_TOKEN = os.environ.get('GITHUB_TOKEN')

    # Check if required environment variables are set
    GITHUB_EVENT_NAME = os.environ.get('GITHUB_EVENT_NAME', None)
    if not GITHUB_EVENT_NAME:
        print("GITHUB_EVENT_NAME not set")
        return
    GITHUB_EVENT_PATH = os.environ.get('GITHUB_EVENT_PATH', None)
    if not GITHUB_EVENT_PATH:
        print("GITHUB_EVENT_PATH not set")
        return
    try:
        event_payload = json.load(open(GITHUB_EVENT_PATH, 'r'))
    except json.decoder.JSONDecodeError as e:
        print(f"Failed to parse JSON: {e}")
        return
    OPENAI_KEY = os.environ.get('OPENAI_KEY', None)
    if not OPENAI_KEY:
        print("OPENAI_KEY not set")
        return
    OPENAI_ORG = os.environ.get('OPENAI_ORG', None)
    GITHUB_TOKEN = os.environ.get('GITHUB_TOKEN', None)
    if not GITHUB_TOKEN:
        print("GITHUB_TOKEN not set")
        return

    # Set the environment variables in the settings
    settings.set("OPENAI.KEY", OPENAI_KEY)
    if OPENAI_ORG:
        settings.set("OPENAI.ORG", OPENAI_ORG)
    settings.set("GITHUB.USER_TOKEN", GITHUB_TOKEN)
    settings.set("GITHUB.DEPLOYMENT_TYPE", "user")

    # Load the event payload
    try:
        with open(GITHUB_EVENT_PATH, 'r') as f:
            event_payload = json.load(f)
    except json.decoder.JSONDecodeError as e:
        print(f"Failed to parse JSON: {e}")
        return

    # Handle pull request event
    if GITHUB_EVENT_NAME == "pull_request":
        action = event_payload.get("action")
        action = event_payload.get("action", None)
        if action in ["opened", "reopened"]:
            pr_url = event_payload.get("pull_request", {}).get("url")
            pr_url = event_payload.get("pull_request", {}).get("url", None)
            if pr_url:
                await PRReviewer(pr_url).review()

    # Handle issue comment event
    elif GITHUB_EVENT_NAME == "issue_comment":
        action = event_payload.get("action")
        action = event_payload.get("action", None)
        if action in ["created", "edited"]:
            comment_body = event_payload.get("comment", {}).get("body")
            comment_body = event_payload.get("comment", {}).get("body", None)
            if comment_body:
                pr_url = event_payload.get("issue", {}).get("pull_request", {}).get("url")
                pr_url = event_payload.get("issue", {}).get("pull_request", {}).get("url", None)
                if pr_url:
                    body = comment_body.strip().lower()
                    await PRAgent().handle_request(pr_url, body)


if __name__ == '__main__':
    asyncio.run(run_action())
    asyncio.run(run_action())
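`run_action` reacts to two event types: a `pull_request` event with action `opened` or `reopened` triggers a full review, and an `issue_comment` event with action `created` or `edited` on a pull request forwards the stripped, lower-cased comment to `PRAgent`. Below is a minimal sketch that isolates that routing as a pure function over an already-parsed event payload, so it can be tested without GitHub; `route_github_event` and its return shape are hypothetical, not part of pr-agent.

```python
from typing import Any, Dict, Optional, Tuple


def route_github_event(event_name: str, payload: Dict[str, Any]) -> Optional[Tuple[str, str, Optional[str]]]:
    """Hypothetical helper: return ("review", pr_url, None), ("comment", pr_url, body) or None."""
    action = payload.get("action")
    if event_name == "pull_request" and action in ("opened", "reopened"):
        pr_url = payload.get("pull_request", {}).get("url")
        if pr_url:
            # run_action calls PRReviewer(pr_url).review() in this case
            return "review", pr_url, None
    elif event_name == "issue_comment" and action in ("created", "edited"):
        comment_body = payload.get("comment", {}).get("body")
        # Only comments on pull requests carry issue.pull_request.url
        pr_url = payload.get("issue", {}).get("pull_request", {}).get("url")
        if comment_body and pr_url:
            # run_action passes the stripped, lower-cased comment to PRAgent().handle_request
            return "comment", pr_url, comment_body.strip().lower()
    return None


event = {
    "action": "created",
    "comment": {"body": "  /Describe "},
    "issue": {"pull_request": {"url": "https://api.github.com/repos/octo/demo/pulls/1"}},
}
print(route_github_event("issue_comment", event))
# ('comment', 'https://api.github.com/repos/octo/demo/pulls/1', '/describe')
```

The payload in the example is made up; inside a workflow run, `run_action` reads the real one from the file named by `GITHUB_EVENT_PATH`.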

@ -1,12 +1,8 @@

from typing import Dict, Any
import logging
import sys

import uvicorn
from fastapi import APIRouter, FastAPI, HTTPException, Request, Response
from starlette.middleware import Middleware
from starlette_context import context
from starlette_context.middleware import RawContextMiddleware

from pr_agent.agent.pr_agent import PRAgent
from pr_agent.config_loader import settings

@ -18,27 +14,7 @@ router = APIRouter()

@router.post("/api/v1/github_webhooks")
async def handle_github_webhooks(request: Request, response: Response):
    """
    Receives and processes incoming GitHub webhook requests.
    Verifies the request signature, parses the request body, and passes it to the handle_request function for further processing.
    """
    logging.debug("Received a GitHub webhook")

    body = await get_body(request)

    logging.debug(f'Request body:\n{body}')
    installation_id = body.get("installation", {}).get("id")
    context["installation_id"] = installation_id

    return await handle_request(body)


@router.post("/api/v1/marketplace_webhooks")
async def handle_marketplace_webhooks(request: Request, response: Response):
    body = await get_body(request)
    logging.info(f'Request body:\n{body}')

async def get_body(request):
    logging.debug("Received a github webhook")
    try:
        body = await request.json()
    except Exception as e:

@ -46,47 +22,43 @@ async def get_body(request):

        raise HTTPException(status_code=400, detail="Error parsing request body") from e
    body_bytes = await request.body()
    signature_header = request.headers.get('x-hub-signature-256', None)
    webhook_secret = getattr(settings.github, 'webhook_secret', None)
    try:
        webhook_secret = settings.github.webhook_secret
    except AttributeError:
        webhook_secret = None
    if webhook_secret:
        verify_signature(body_bytes, webhook_secret, signature_header)
    return body
    logging.debug(f'Request body:\n{body}')
    return await handle_request(body)


async def handle_request(body: Dict[str, Any]):
    """
    Handle incoming GitHub webhook requests.

    Args:
        body: The request body.
    """
    action = body.get("action")
async def handle_request(body):
    action = body.get("action", None)
    installation_id = body.get("installation", {}).get("id", None)
    settings.set("GITHUB.INSTALLATION_ID", installation_id)
    agent = PRAgent()

    if action == 'created':
        if "comment" not in body:
            return {}
        comment_body = body.get("comment", {}).get("body")
        sender = body.get("sender", {}).get("login")
        if sender and 'bot' in sender:
        comment_body = body.get("comment", {}).get("body", None)
        if 'sender' in body and 'login' in body['sender'] and 'bot' in body['sender']['login']:
            return {}
        if "issue" not in body or "pull_request" not in body["issue"]:
        if "issue" not in body and "pull_request" not in body["issue"]:
            return {}
        pull_request = body["issue"]["pull_request"]
        api_url = pull_request.get("url")
        api_url = pull_request.get("url", None)
        await agent.handle_request(api_url, comment_body)

    elif action in ["opened"] or 'reopened' in action:
        pull_request = body.get("pull_request")
        pull_request = body.get("pull_request", None)
        if not pull_request:
            return {}
        api_url = pull_request.get("url")
        if not api_url:
        api_url = pull_request.get("url", None)
        if api_url is None:
            return {}
        await agent.handle_request(api_url, "/review")

    return {}
    else:
        return {}


@router.get("/")

@ -97,12 +69,11 @@ async def root():

def start():
    # Override the deployment type to app
    settings.set("GITHUB.DEPLOYMENT_TYPE", "app")
    middleware = [Middleware(RawContextMiddleware)]
    app = FastAPI(middleware=middleware)
    app = FastAPI()
    app.include_router(router)

    uvicorn.run(app, host="0.0.0.0", port=3000)


if __name__ == '__main__':
    start()
    start()
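In the `created` branch of `handle_request`, one side of the diff guards with `"issue" not in body or ...` and the other with `"issue" not in body and "pull_request" not in body["issue"]`. With `and`, a payload that has no `issue` key makes Python go on to evaluate `body["issue"]` and raise `KeyError`; and when `issue` is present but belongs to a plain issue without `pull_request`, the `and` check is `False`, so the following `body["issue"]["pull_request"]` raises instead. With `or`, both cases are ignored cleanly. The sketch below isolates the comment filtering as a pure function using the `or` form; `extract_comment_request` is a hypothetical helper, and rejecting an empty comment body is an extra check added in this sketch.

```python
from typing import Any, Dict, Optional, Tuple


def extract_comment_request(body: Dict[str, Any]) -> Optional[Tuple[str, str]]:
    """Hypothetical helper: return (api_url, comment_body) for a comment on a PR, else None."""
    if body.get("action") != "created" or "comment" not in body:
        return None
    sender = body.get("sender", {}).get("login")
    if sender and "bot" in sender:
        # Ignore comments from accounts whose login contains "bot", so the agent does not answer itself
        return None
    # `or` short-circuits: body["issue"] is only indexed when the key exists
    if "issue" not in body or "pull_request" not in body["issue"]:
        return None
    api_url = body["issue"]["pull_request"].get("url")
    comment_body = body["comment"].get("body")
    if not api_url or not comment_body:
        return None
    return api_url, comment_body
```

Like the original, the bot check is a plain substring test on the sender login.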

@ -15,40 +15,28 @@ NOTIFICATION_URL = "https://api.github.com/notifications"

def now() -> str:
    """
    Get the current UTC time in ISO 8601 format.

    Returns:
        str: The current UTC time in ISO 8601 format.
    """
    now_utc = datetime.now(timezone.utc).isoformat()
    now_utc = now_utc.replace("+00:00", "Z")
    return now_utc


async def polling_loop():
    """
    Polls for notifications and handles them accordingly.
    """
    handled_ids = set()
    since = [now()]
    last_modified = [None]
    git_provider = get_git_provider()()
    user_id = git_provider.get_user_id()
    agent = PRAgent()

    try:
        deployment_type = settings.github.deployment_type
        token = settings.github.user_token
    except AttributeError:
        deployment_type = 'none'
        token = None

    if deployment_type != 'user':
        raise ValueError("Deployment mode must be set to 'user' to get notifications")
    if not token:
        raise ValueError("User token must be set to get notifications")

    async with aiohttp.ClientSession() as session:
        while True:
            try:

@ -64,7 +52,6 @@ async def polling_loop():

                params["since"] = since[0]
                if last_modified[0]:
                    headers["If-Modified-Since"] = last_modified[0]

                async with session.get(NOTIFICATION_URL, headers=headers, params=params) as response:
                    if response.status == 200:
                        if 'Last-Modified' in response.headers:

@ -113,4 +100,4 @@ async def polling_loop():

if __name__ == '__main__':
    asyncio.run(polling_loop())
    asyncio.run(polling_loop())
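The polling loop keeps `since` as an ISO 8601 UTC timestamp produced by `now()`, and sends `If-Modified-Since` with the last `Last-Modified` value it saw, so the server can answer `304 Not Modified` when nothing changed. A minimal sketch of those pieces as pure functions follows; `to_iso_z`, `build_poll_request` and `next_last_modified` are hypothetical names, and the authentication headers a real request to the notifications endpoint needs are deliberately left out.

```python
from datetime import datetime, timezone
from typing import Dict, Optional, Tuple


def to_iso_z(moment: datetime) -> str:
    """Format an aware datetime as UTC ISO 8601 with a trailing 'Z', like now() in the diff."""
    return moment.astimezone(timezone.utc).isoformat().replace("+00:00", "Z")


def now() -> str:
    return to_iso_z(datetime.now(timezone.utc))


def build_poll_request(since: str, last_modified: Optional[str]) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Hypothetical helper: query params and conditional headers for one poll."""
    params = {"since": since}
    headers: Dict[str, str] = {}
    if last_modified:
        # Echo the previous Last-Modified value; the server may reply 304 if nothing changed
        headers["If-Modified-Since"] = last_modified
    return params, headers


def next_last_modified(status: int, response_headers: Dict[str, str], previous: Optional[str]) -> Optional[str]:
    """Remember Last-Modified only from 200 responses, mirroring the loop's status check."""
    if status == 200 and "Last-Modified" in response_headers:
        return response_headers["Last-Modified"]
    return previous


print(to_iso_z(datetime(2023, 7, 26, 12, 0, tzinfo=timezone.utc)))
# 2023-07-26T12:00:00Z
```

A plain `dict` is case-sensitive, whereas aiohttp exposes response headers as a case-insensitive `CIMultiDictProxy`, so the real loop can look up `Last-Modified` regardless of how the server cases it.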

@ -1,47 +0,0 @@

import logging

import uvicorn
from fastapi import APIRouter, FastAPI, Request, status
from fastapi.encoders import jsonable_encoder
from fastapi.responses import JSONResponse
from starlette.background import BackgroundTasks

from pr_agent.agent.pr_agent import PRAgent
from pr_agent.config_loader import settings

app = FastAPI()
router = APIRouter()


@router.post("/webhook")
async def gitlab_webhook(background_tasks: BackgroundTasks, request: Request):
    data = await request.json()
    if data.get('object_kind') == 'merge_request' and data['object_attributes'].get('action') in ['open', 'reopen']:
        logging.info(f"A merge request has been opened: {data['object_attributes'].get('title')}")
        url = data['object_attributes'].get('url')
        background_tasks.add_task(PRAgent().handle_request, url, "/review")
    elif data.get('object_kind') == 'note' and data['event_type'] == 'note':
        if 'merge_request' in data:
            mr = data['merge_request']
            url = mr.get('url')
            body = data.get('object_attributes', {}).get('note')
            background_tasks.add_task(PRAgent().handle_request, url, body)
    return JSONResponse(status_code=status.HTTP_200_OK, content=jsonable_encoder({"message": "success"}))

def start():
    gitlab_url = settings.get("GITLAB.URL", None)
    if not gitlab_url:
        raise ValueError("GITLAB.URL is not set")
    gitlab_token = settings.get("GITLAB.PERSONAL_ACCESS_TOKEN", None)
    if not gitlab_token:
        raise ValueError("GITLAB.PERSONAL_ACCESS_TOKEN is not set")
    settings.config.git_provider = "gitlab"

    app = FastAPI()
    app.include_router(router)

    uvicorn.run(app, host="0.0.0.0", port=3000)


if __name__ == '__main__':
    start()
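The removed GitLab server maps two webhook kinds to agent requests: a `merge_request` event whose `object_attributes.action` is `open` or `reopen` becomes a `/review` request for the MR URL, and a `note` event on a merge request forwards the note text as the command. A minimal pure-function sketch of that mapping follows; `route_gitlab_event` is a hypothetical name, and unlike the handler above it reads `event_type` with `.get()`, so a payload without that key is ignored rather than raising `KeyError` as `data['event_type']` would.

```python
from typing import Any, Dict, Optional, Tuple


def route_gitlab_event(data: Dict[str, Any]) -> Optional[Tuple[Optional[str], Optional[str]]]:
    """Hypothetical helper mirroring gitlab_webhook: return (mr_url, command) or None."""
    attributes = data.get("object_attributes", {})
    if data.get("object_kind") == "merge_request" and attributes.get("action") in ("open", "reopen"):
        # Newly opened or reopened MRs get an automatic review
        return attributes.get("url"), "/review"
    if data.get("object_kind") == "note" and data.get("event_type") == "note" and "merge_request" in data:
        # Comments on an MR are forwarded verbatim as the command text
        return data["merge_request"].get("url"), attributes.get("note")
    return None
```

In the server, each non-`None` result is handed to `background_tasks.add_task(PRAgent().handle_request, url, command)` so the webhook can return `200` immediately.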

@ -21,7 +21,3 @@ def verify_signature(payload_body, secret_token, signature_header):

    if not hmac.compare_digest(expected_signature, signature_header):
        raise HTTPException(status_code=403, detail="Request signatures didn't match!")


class RateLimitExceeded(Exception):
    """Raised when the git provider API rate limit has been exceeded."""
    pass
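Only the tail of `verify_signature` appears in this hunk. GitHub signs webhook deliveries with an HMAC-SHA256 of the raw request body, keyed by the webhook secret and sent as `X-Hub-Signature-256: sha256=<hex digest>`. The sketch below shows that check in full, assuming the omitted lines compute `expected_signature` this way; it returns a bool instead of raising `HTTPException` so it runs without FastAPI, and `signature_matches` is a hypothetical name.

```python
import hashlib
import hmac
from typing import Optional


def signature_matches(payload_body: bytes, secret_token: str, signature_header: Optional[str]) -> bool:
    """Check a GitHub-style X-Hub-Signature-256 header of the form 'sha256=<hex HMAC>'."""
    if not signature_header:
        # A missing header can never match
        return False
    digest = hmac.new(secret_token.encode("utf-8"), msg=payload_body, digestmod=hashlib.sha256).hexdigest()
    expected_signature = "sha256=" + digest
    # compare_digest runs in time independent of where the strings differ
    return hmac.compare_digest(expected_signature, signature_header)
```

The HMAC must be computed over the raw bytes (`await request.body()` in `get_body`), not over re-serialized JSON, because any change in whitespace or key order changes the digest.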

@ -1,6 +1,6 @@

[config]
model="gpt-4"
fallback_models=["gpt-3.5-turbo-16k"]
fallback-models=["gpt-3.5-turbo-16k", "gpt-3.5-turbo"]
git_provider="github"
publish_output=true
publish_output_progress=true

@ -24,13 +24,9 @@ publish_description_as_comment=false

[pr_code_suggestions]
num_code_suggestions=4

[pr_update_changelog]
push_changelog_changes=false

[github]
# The type of deployment to create. Valid values are 'app' or 'user'.
deployment_type = "user"
ratelimit_retries = 5

[gitlab]
# URL to the gitlab service

@ -44,8 +40,3 @@ magic_word = "AutoReview"

# Polling interval
polling_interval_seconds = 30

[local]
# LocalGitProvider settings - uncomment to use paths other than default
# description_path= "path/to/description.md"
# review_path= "path/to/review.md"
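The `[config]` section pairs a primary `model` with a list of fallback models (`fallback_models` or `fallback-models`, depending on the side of the diff), and `PRDescription.describe` runs its prediction through `retry_with_fallback_models(self._prepare_prediction)`. That helper's body is not part of this diff, so the following is only a hypothetical sketch of the idea: try each model in order and stop at the first success. `try_models_in_order` and `fake_prediction` are illustrative names.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional, TypeVar

T = TypeVar("T")


async def try_models_in_order(models: List[str], attempt: Callable[[str], Awaitable[T]]) -> T:
    """Hypothetical helper: await attempt(model) for each model until one succeeds."""
    last_error: Optional[Exception] = None
    for model in models:
        try:
            return await attempt(model)
        except Exception as e:  # any failure moves on to the next model
            last_error = e
    raise RuntimeError(f"all models failed: {models}") from last_error


async def fake_prediction(model: str) -> str:
    # Stand-in for a model call: the primary model always fails in this demo
    if model == "gpt-4":
        raise TimeoutError("simulated failure")
    return f"prediction from {model}"


models = ["gpt-4", "gpt-3.5-turbo-16k", "gpt-3.5-turbo"]
print(asyncio.run(try_models_in_order(models, fake_prediction)))
# prediction from gpt-3.5-turbo-16k
```

Chaining the final `RuntimeError` to the last underlying exception keeps the original cause visible in the traceback when every model fails.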

@ -1,34 +0,0 @@

[pr_update_changelog_prompt]
system="""You are a language model called CodiumAI-PR-Changlog-summarizer.
Your task is to update the CHANGELOG.md file of the project, to shortly summarize important changes introduced in this PR (the '+' lines).
- The output should match the existing CHANGELOG.md format, style and conventions, so it will look like a natural part of the file. For example, if previous changes were summarized in a single line, you should do the same.
- Don't repeat previous changes. Generate only new content, that is not already in the CHANGELOG.md file.
- Be general, and avoid specific details, files, etc. The output should be minimal, no more than 3-4 short lines. Ignore non-relevant subsections.
"""

user="""PR Info:
Title: '{{title}}'
Branch: '{{branch}}'
Description: '{{description}}'
{%- if language %}
Main language: {{language}}
{%- endif %}


The PR Diff:
```
{{diff}}
```

Current date:
```
{{today}}
```

The current CHANGELOG.md:
```
{{ changelog_file_str }}
```

Response:
"""

@ -1,7 +1,6 @@

import copy
import json
import logging
from typing import Tuple, List

from jinja2 import Environment, StrictUndefined

@ -15,22 +14,11 @@ from pr_agent.git_providers.git_provider import get_main_pr_language

class PRDescription:
    def __init__(self, pr_url: str):
        """
        Initialize the PRDescription object with the necessary attributes and objects for generating a PR description using an AI model.
        Args:
            pr_url (str): The URL of the pull request.
        """

        # Initialize the git provider and main PR language
        self.git_provider = get_git_provider()(pr_url)
        self.main_pr_language = get_main_pr_language(
            self.git_provider.get_languages(), self.git_provider.get_files()
        )

        # Initialize the AI handler
        self.ai_handler = AiHandler()

        # Initialize the variables dictionary
        self.vars = {
            "title": self.git_provider.pr.title,
            "branch": self.git_provider.get_pr_branch(),

@ -38,135 +26,67 @@ class PRDescription:

            "language": self.main_pr_language,
            "diff": "", # empty diff for initial calculation
        }

        # Initialize the token handler
        self.token_handler = TokenHandler(
            self.git_provider.pr,
            self.vars,
            settings.pr_description_prompt.system,
            settings.pr_description_prompt.user,
        )

        # Initialize patches_diff and prediction attributes
        self.token_handler = TokenHandler(self.git_provider.pr,
                                          self.vars,
                                          settings.pr_description_prompt.system,
                                          settings.pr_description_prompt.user)
        self.patches_diff = None
        self.prediction = None

    async def describe(self):
        """
        Generates a PR description using an AI model and publishes it to the PR.
        """
        logging.info('Generating a PR description...')
        if settings.config.publish_output:
            self.git_provider.publish_comment("Preparing pr description...", is_temporary=True)

        await retry_with_fallback_models(self._prepare_prediction)

        logging.info('Preparing answer...')
        pr_title, pr_body, pr_types, markdown_text = self._prepare_pr_answer()

        if settings.config.publish_output:
            logging.info('Pushing answer...')
            if settings.pr_description.publish_description_as_comment:
                self.git_provider.publish_comment(markdown_text)
            else:
                self.git_provider.publish_description(pr_title, pr_body)
                if self.git_provider.is_supported("get_labels"):
                    current_labels = self.git_provider.get_labels()
                    if current_labels is None:
                        current_labels = []
                    self.git_provider.publish_labels(pr_types + current_labels)
                self.git_provider.publish_labels(pr_types)
            self.git_provider.remove_initial_comment()

        return ""

    async def _prepare_prediction(self, model: str) -> None:
        """
        Prepare the AI prediction for the PR description based on the provided model.

        Args:
            model (str): The name of the model to be used for generating the prediction.

        Returns:
            None

        Raises:
            Any exceptions raised by the 'get_pr_diff' and '_get_prediction' functions.

        """
    async def _prepare_prediction(self, model: str):
        logging.info('Getting PR diff...')
        self.patches_diff = get_pr_diff(self.git_provider, self.token_handler, model)
        logging.info('Getting AI prediction...')
        self.prediction = await self._get_prediction(model)

    async def _get_prediction(self, model: str) -> str:
        """
        Generate an AI prediction for the PR description based on the provided model.

        Args:
            model (str): The name of the model to be used for generating the prediction.

        Returns:
            str: The generated AI prediction.
        """
    async def _get_prediction(self, model: str):
        variables = copy.deepcopy(self.vars)
        variables["diff"] = self.patches_diff # update diff

        environment = Environment(undefined=StrictUndefined)
        system_prompt = environment.from_string(settings.pr_description_prompt.system).render(variables)
        user_prompt = environment.from_string(settings.pr_description_prompt.user).render(variables)

        if settings.config.verbosity_level >= 2:
            logging.info(f"\nSystem prompt:\n{system_prompt}")
            logging.info(f"\nUser prompt:\n{user_prompt}")

        response, finish_reason = await self.ai_handler.chat_completion(
            model=model,
            temperature=0.2,
            system=system_prompt,
            user=user_prompt
        )

        response, finish_reason = await self.ai_handler.chat_completion(model=model, temperature=0.2,
                                                                        system=system_prompt, user=user_prompt)
        return response

    def _prepare_pr_answer(self) -> Tuple[str, str, List[str], str]:
        """
        Prepare the PR description based on the AI prediction data.

        Returns:
        - title: a string containing the PR title.
        - pr_body: a string containing the PR body in a markdown format.
        - pr_types: a list of strings containing the PR types.
        - markdown_text: a string containing the AI prediction data in a markdown format.
        """
        # Load the AI prediction data into a dictionary
    def _prepare_pr_answer(self):
        data = json.loads(self.prediction)

        # Initialization
        markdown_text = pr_body = ""
        pr_types = []

        # Iterate over the dictionary items and append the key and value to 'markdown_text' in a markdown format
        markdown_text = ""
        for key, value in data.items():
            markdown_text += f"## {key}\n\n"
            markdown_text += f"{value}\n\n"

        # If the 'PR Type' key is present in the dictionary, split its value by comma and assign it to 'pr_types'
        pr_body = ""
        pr_types = []
        if 'PR Type' in data:
            pr_types = data['PR Type'].split(',')

        # Assign the value of the 'PR Title' key to 'title' variable and remove it from the dictionary
        title = data.pop('PR Title')

        # Iterate over the remaining dictionary items and append the key and value to 'pr_body' in a markdown format,
        # except for the items containing the word 'walkthrough'
        title = data['PR Title']
        del data['PR Title']
        for key, value in data.items():
            pr_body += f"{key}:\n"
            if 'walkthrough' in key.lower():
                pr_body += f"{value}\n"
            else:
                pr_body += f"**{value}**\n\n___\n"

        if settings.config.verbosity_level >= 2:
            logging.info(f"title:\n{title}\n{pr_body}")

        return title, pr_body, pr_types, markdown_text
        return title, pr_body, pr_types, markdown_text
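`_prepare_pr_answer` turns the model's JSON reply into four outputs: every key becomes a `##` section of `markdown_text`; `PR Type` is split on commas into labels; `PR Title` is removed and returned as the title; and the remaining keys form `pr_body`, with walkthrough sections left as-is and other values bolded and followed by a `___` rule. The standalone sketch below reproduces that logic outside the class so it can run on a sample string; `prepare_pr_answer` is a hypothetical free-function version, and the JSON keys in the example are made up apart from `PR Title` and `PR Type`.

```python
import json
from typing import List, Tuple


def prepare_pr_answer(prediction: str) -> Tuple[str, str, List[str], str]:
    """Hypothetical free-function version of _prepare_pr_answer."""
    data = json.loads(prediction)

    # Every key/value pair, including the title, becomes a markdown section
    markdown_text = ""
    for key, value in data.items():
        markdown_text += f"## {key}\n\n{value}\n\n"

    # 'PR Type' is a comma-separated string; spaces after commas are kept, as in the diff
    pr_types = data['PR Type'].split(',') if 'PR Type' in data else []

    # The title is removed before the body is built
    title = data.pop('PR Title')
    pr_body = ""
    for key, value in data.items():
        pr_body += f"{key}:\n"
        if 'walkthrough' in key.lower():
            pr_body += f"{value}\n"
        else:
            pr_body += f"**{value}**\n\n___\n"
    return title, pr_body, pr_types, markdown_text


sample = json.dumps({"PR Title": "Fix parser", "PR Type": "Bug fix, Tests", "PR Description": "Handles empty input"})
title, body, types, md = prepare_pr_answer(sample)
print(types)
# ['Bug fix', ' Tests']
```

Because the labels keep any space that followed a comma, a caller publishing them as labels may want to `strip()` each one first.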

@ -2,7 +2,6 @@ import copy

import json
import logging
from collections import OrderedDict
from typing import Tuple, List

from jinja2 import Environment, StrictUndefined

@ -17,19 +16,7 @@ from pr_agent.servers.help import actions_help_text, bot_help_text

class PRReviewer:
    """
    The PRReviewer class is responsible for reviewing a pull request and generating feedback using an AI model.
    """
    def __init__(self, pr_url: str, cli_mode: bool = False, is_answer: bool = False, args: list = None):
        """
        Initialize the PRReviewer object with the necessary attributes and objects to review a pull request.

        Args:
            pr_url (str): The URL of the pull request to be reviewed.
            cli_mode (bool, optional): Indicates whether the review is being done in command-line interface mode. Defaults to False.
            is_answer (bool, optional): Indicates whether the review is being done in answer mode. Defaults to False.
            args (list, optional): List of arguments passed to the PRReviewer class. Defaults to None.
        """
    def __init__(self, pr_url: str, cli_mode=False, is_answer: bool = False, args=None):
        self.parse_args(args)

        self.git_provider = get_git_provider()(pr_url, incremental=self.incremental)

@ -38,15 +25,13 @@ class PRReviewer:

        )
        self.pr_url = pr_url
        self.is_answer = is_answer

        if self.is_answer and not self.git_provider.is_supported("get_issue_comments"):
            raise Exception(f"Answer mode is not supported for {settings.config.git_provider} for now")
        answer_str, question_str = self._get_user_answers()
        self.ai_handler = AiHandler()
        self.patches_diff = None
        self.prediction = None
        self.cli_mode = cli_mode