mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-07-21 04:50:39 +08:00

Compare commits

172 Commits

ok/gitlat_

...

ok/multi_c

| Author | SHA1 | Date | |

|---|---|---|---|

| 36f7a7b17a | |||

| fcc208d09f | |||

| 20bbdac135 | |||

| ceedf2bf83 | |||

| 2d6b947292 | |||

| 2e13b12fe6 | |||

| 2d56c88291 | |||

| cf9c6a872d | |||

| 0bb8ab70a4 | |||

| 4a47b78a90 | |||

| 3e542cd88b | |||

| 17ed050ca7 | |||

| e24c5e3501 | |||

| b206b1c5ff | |||

| 0270306d3c | |||

| 3e09b9ac37 | |||

| 725ac9e85d | |||

| e00500b90c | |||

| f1f271fa00 | |||

| d38c5236dd | |||

| 49a3a1e511 | |||

| 64481e2d84 | |||

| e0f295659d | |||

| fe75e3f2ec | |||

| e3274af831 | |||

| 7760f37dee | |||

| ebbe655c40 | |||

| 164ed77d72 | |||

| b1148e5f7a | |||

| 2012e25596 | |||

| a75253097b | |||

| 079d62af56 | |||

| 886139c6b5 | |||

| 8f751f7371 | |||

| 43297b851f | |||

| 4f39239e73 | |||

| 00e1925927 | |||

| 7189b3ab41 | |||

| a00038fbd8 | |||

| a45343793a | |||

| 703215fe83 | |||

| 0f975ccf4a | |||

| 7367c62cf9 | |||

| fed0ea349a | |||

| bd86266a4b | |||

| bd07a0cd7f | |||

| ed8554699b | |||

| 749ae1be79 | |||

| 0e3dbbd0f2 | |||

| 7a57db5d88 | |||

| 102edcdcf1 | |||

| c92648cbd5 | |||

| 26b008565b | |||

| 0dec24aa37 | |||

| 68a2f2a27d | |||

| cfa14178f8 | |||

| b97c4b6114 | |||

| 3d43cecbea | |||

| eb143ec851 | |||

| 3e94a71dcd | |||

| dd14423b07 | |||

| 8e47fdc284 | |||

| ab607d74be | |||

| bfe7304449 | |||

| e12874b696 | |||

| 696e2bd6ff | |||

| 450f410e3c | |||

| 08a3f033cb | |||

| c5a79ceedd | |||

| 13547afc58 | |||

| 8ae936e504 | |||

| e577d27f9b | |||

| dfb73c963a | |||

| 8c0370a166 | |||

| d7b77764c3 | |||

| 6605f9c444 | |||

| 2a8adcbbd6 | |||

| 0b22c8d427 | |||

| dfa0d9fd43 | |||

| c8470645e2 | |||

| 5a181e52d5 | |||

| 0ad8dcd2aa | |||

| e2d015a20c | |||

| a0cfe4b48a | |||

| a6ba8b614a | |||

| 4f0fabd2ca | |||

| 42b047a14e | |||

| 3daf94954a | |||

| b564d8ac32 | |||

| d8e6da74db | |||

| 278f1883fd | |||

| ef71a7049e | |||

| 6fde87b3bd | |||

| 07fe91e57b | |||

| 01e2f3f0cd | |||

| 63a703c000 | |||

| 4664d91844 | |||

| 8f16c46012 | |||

| a8780f722d | |||

| 1a8fce1505 | |||

| 8519b106f9 | |||

| d375dd62fe | |||

| 3770bf8031 | |||

| 5c527eca66 | |||

| b4ca52c7d8 | |||

| a78d741292 | |||

| 42388b1f8d | |||

| 0167003bbc | |||

| 2ce91fbdf5 | |||

| aa7659d6bf | |||

| 4aa54b9bd4 | |||

| c6d0bacc08 | |||

| 99ed9b22a1 | |||

| eee6d51b40 | |||

| a50e137bba | |||

| 92c0522f4d | |||

| 6a72df2981 | |||

| 808ca48605 | |||

| c827cbc0ae | |||

| 48fcb46d4f | |||

| 66b94599ec | |||

| 231efb33c1 | |||

| eb798dae6f | |||

| 52576c79b3 | |||

| cce2a79a1f | |||

| 413e5f6d77 | |||

| 09ca848d4c | |||

| 801923789b | |||

| cfb696dfd5 | |||

| 2e7a0a88fa | |||

| 1dbbafc30a | |||

| d8eae7faab | |||

| 14eceb6e61 | |||

| 884317c4f7 | |||

| c5f4b229b8 | |||

| 5a2a17ec25 | |||

| 1bd47b0d53 | |||

| 7531ccd31f | |||

| 3b19827ae2 | |||

| ea6e1811c1 | |||

| bc2cf75b76 | |||

| 9e1e0766b7 | |||

| ccde68293f | |||

| 99d53af28d | |||

| 5ea607be58 | |||

| e3846a480e | |||

| a60a58794c | |||

| 8ae5faca53 | |||

| 28d6adf62a | |||

| 1229fba346 | |||

| 59a59ebf66 | |||

| 36ab12c486 | |||

| 0254e3d04a | |||

| f6036e936e | |||

| 10a07e497d | |||

| 3b334805ee | |||

| b6f6c903a0 | |||

| 55637a5620 | |||

| 404cc0a00e | |||

| 0815e2024c | |||

| 41dcb75e8e | |||

| d1a8a610e9 | |||

| 918549a4fc | |||

| 8f482cd41a | |||

| 34096059ff | |||

| 2dfbfec8c2 | |||

| 6170995665 | |||

| ca42a54bc3 | |||

| c0610afe2a | |||

| d4cbcc465c | |||

| 8e6518f071 | |||

| 02ecaa340f |

@ -1,3 +1,5 @@

|

|||||||

venv/

|

venv/

|

||||||

pr_agent/settings/.secrets.toml

|

pr_agent/settings/.secrets.toml

|

||||||

pics/

|

pics/

|

||||||

|

pr_agent.egg-info/

|

||||||

|

build/

|

||||||

|

|||||||

36

.github/workflows/build-and-test.yaml

vendored

Normal file


@ -0,0 +1,36 @@

|

|||||||

|

name: Build-and-test

|

||||||

|

|

||||||

|

on:

|

||||||

|

push:

|

||||||

|

|

||||||

|

jobs:

|

||||||

|

build-and-test:

|

||||||

|

runs-on: ubuntu-latest

|

||||||

|

|

||||||

|

steps:

|

||||||

|

- id: checkout

|

||||||

|

uses: actions/checkout@v2

|

||||||

|

|

||||||

|

- id: dockerx

|

||||||

|

name: Setup Docker Buildx

|

||||||

|

uses: docker/setup-buildx-action@v2

|

||||||

|

|

||||||

|

- id: build

|

||||||

|

name: Build dev docker

|

||||||

|

uses: docker/build-push-action@v2

|

||||||

|

with:

|

||||||

|

context: .

|

||||||

|

file: ./docker/Dockerfile

|

||||||

|

push: false

|

||||||

|

load: true

|

||||||

|

tags: codiumai/pr-agent:test

|

||||||

|

cache-from: type=gha,scope=dev

|

||||||

|

cache-to: type=gha,mode=max,scope=dev

|

||||||

|

target: test

|

||||||

|

|

||||||

|

- id: test

|

||||||

|

name: Test dev docker

|

||||||

|

run: |

|

||||||

|

docker run --rm codiumai/pr-agent:test pytest -v

|

||||||

|

|

||||||

|

|

||||||

@ -1,6 +1,17 @@

|

|||||||

|

# This workflow enables developers to call PR-Agent's `/[actions]` in PR comments and upon PR creation.

|

||||||

|

# Learn more at https://www.codium.ai/pr-agent/

|

||||||

|

# This is v0.2 of this workflow file

|

||||||

|

|

||||||

|

name: PR-Agent

|

||||||

|

|

||||||

on:

|

on:

|

||||||

pull_request:

|

pull_request:

|

||||||

issue_comment:

|

issue_comment:

|

||||||

|

|

||||||

|

permissions:

|

||||||

|

issues: write

|

||||||

|

pull-requests: write

|

||||||

|

|

||||||

jobs:

|

jobs:

|

||||||

pr_agent_job:

|

pr_agent_job:

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-latest

|

||||||

6

.gitignore

vendored


@ -1,4 +1,8 @@

|

|||||||

.idea/

|

.idea/

|

||||||

venv/

|

venv/

|

||||||

pr_agent/settings/.secrets.toml

|

pr_agent/settings/.secrets.toml

|

||||||

__pycache__

|

__pycache__

|

||||||

|

dist/

|

||||||

|

*.egg-info/

|

||||||

|

build/

|

||||||

|

review.md

|

||||||

|

|||||||

45

CHANGELOG.md

Normal file


@ -0,0 +1,45 @@

|

|||||||

|

## 2023-08-03

|

||||||

|

|

||||||

|

### Optimized

|

||||||

|

- Optimized PR diff processing by introducing caching for diff files, reducing the number of API calls.

|

||||||

|

- Refactored `load_large_diff` function to generate a patch only when necessary.

|

||||||

|

- Fixed a bug in the GitLab provider where the new file was not retrieved correctly.

|

||||||

|

|

||||||

|

## 2023-08-02

|

||||||

|

|

||||||

|

### Enhanced

|

||||||

|

- Updated several tools in the `pr_agent` package to use commit messages in their functionality.

|

||||||

|

- Commit messages are now retrieved and stored in the `vars` dictionary for each tool.

|

||||||

|

- Added a section to display the commit messages in the prompts of various tools.

|

||||||

|

|

||||||

|

## 2023-08-01

|

||||||

|

|

||||||

|

### Enhanced

|

||||||

|

- Introduced the ability to retrieve commit messages from pull requests across different git providers.

|

||||||

|

- Implemented commit messages retrieval for GitHub and GitLab providers.

|

||||||

|

- Updated the PR description template to include a section for commit messages if they exist.

|

||||||

|

- Added support for repository-specific configuration files (.pr_agent.yaml) for the PR Agent.

|

||||||

|

- Implemented this feature for both GitHub and GitLab providers.

|

||||||

|

- Added a new configuration option 'use_repo_settings_file' to enable or disable the use of a repo-specific settings file.

|

||||||

|

|

||||||

|

|

||||||

|

## 2023-07-30

|

||||||

|

|

||||||

|

### Enhanced

|

||||||

|

- Added the ability to modify any configuration parameter from 'configuration.toml' on-the-fly.

|

||||||

|

- Updated the command line interface and bot commands to accept configuration changes as arguments.

|

||||||

|

- Improved the PR agent to handle additional arguments for each action.

|

||||||

|

|

||||||

|

## 2023-07-28

|

||||||

|

|

||||||

|

### Improved

|

||||||

|

- Enhanced error handling and logging in the GitLab provider.

|

||||||

|

- Improved handling of inline comments and code suggestions in GitLab.

|

||||||

|

- Fixed a bug where an additional unneeded line was added to code suggestions in GitLab.

|

||||||

|

|

||||||

|

## 2023-07-26

|

||||||

|

|

||||||

|

### Added

|

||||||

|

- New feature for updating the CHANGELOG.md based on the contents of a PR.

|

||||||

|

- Added support for this feature for the GitHub provider.

|

||||||

|

- New configuration settings and prompts for the changelog update feature.

|

||||||

@ -1,19 +1,57 @@

|

|||||||

## Configuration

|

## Configuration

|

||||||

|

|

||||||

The different tools and sub-tools used by CodiumAI pr-agent are easily configurable via the configuration file: `/pr-agent/settings/configuration.toml`.

|

The different tools and sub-tools used by CodiumAI PR-Agent are adjustable via the **[configuration file](pr_agent/settings/configuration.toml)**

|

||||||

##### Git Provider:

|

|

||||||

You can select your git_provider with the flag `git_provider` in the `config` section

|

|

||||||

|

|

||||||

##### PR Reviewer:

|

### Working from CLI

|

||||||

|

When running from source (CLI), your local configuration file will be initially used.

|

||||||

|

|

||||||

|

Example for invoking the 'review' tools via the CLI:

|

||||||

|

|

||||||

You can enable/disable the different PR Reviewer abilities with the following flags (`pr_reviewer` section):

|

|

||||||

```

|

```

|

||||||

require_focused_review=true

|

python cli.py --pr_url=<pr_url> review

|

||||||

require_score_review=true

|

|

||||||

require_tests_review=true

|

|

||||||

require_security_review=true

|

|

||||||

```

|

```

|

||||||

You can contol the number of suggestions returned by the PR Reviewer with the following flag:

|

In addition to general configurations, the 'review' tool will use parameters from the `[pr_reviewer]` section (every tool has a dedicated section in the configuration file).

|

||||||

```inline_code_comments=3```

|

|

||||||

And enable/disable the inline code suggestions with the following flag:

|

Note that you can print results locally, without publishing them, by setting in `configuration.toml`:

|

||||||

```inline_code_comments=true```

|

|

||||||

|

```

|

||||||

|

[config]

|

||||||

|

publish_output=false

|

||||||

|

verbosity_level=2

|

||||||

|

```

|

||||||

|

This is useful for debugging or experimenting with the different tools.

|

||||||

|

|

||||||

|

### Working from pre-built repo (GitHub Action/GitHub App/Docker)

|

||||||

|

When running PR-Agent from a pre-built repo, the default configuration file will be loaded.

|

||||||

|

|

||||||

|

To edit the configuration, you have two options:

|

||||||

|

1. Place a local configuration file in the root of your local repo. The local file will be used instead of the default one.

|

||||||

|

2. For online usage, just add `--config_path=<value>` to your command, to edit a specific configuration value.

|

||||||

|

For example, if you want to edit `pr_reviewer` configurations, you can run:

|

||||||

|

```

|

||||||

|

/review --pr_reviewer.extra_instructions="..." --pr_reviewer.require_score_review=false ...

|

||||||

|

```

|

||||||

|

|

||||||

|

Any configuration value in `configuration.toml` file can be similarly edited.

|

||||||

|

|

||||||

|

### General configuration parameters

|

||||||

|

|

||||||

|

#### Changing a model

|

||||||

|

See [here](pr_agent/algo/__init__.py) for the list of available models.

|

||||||

|

|

||||||

|

To use the Llama2 model, for example, set:

|

||||||

|

```

|

||||||

|

[config]

|

||||||

|

model = "replicate/llama-2-70b-chat:2c1608e18606fad2812020dc541930f2d0495ce32eee50074220b87300bc16e1"

|

||||||

|

[replicate]

|

||||||

|

key = ...

|

||||||

|

```

|

||||||

|

(you can obtain a Llama2 key from [here](https://replicate.com/replicate/llama-2-70b-chat/api))

|

||||||

|

|

||||||

|

Also review the [AiHandler](pr_agent/algo/ai_handler.py) file for instructions on how to set keys for other models.

|

||||||

|

|

||||||

|

#### Extra instructions

|

||||||

|

All PR-Agent tools have a parameter called `extra_instructions` that lets you add free-text extra instructions. Example usage:

|

||||||

|

```

|

||||||

|

/update_changelog --pr_update_changelog.extra_instructions="Make sure to update also the version ..."

|

||||||

|

```

|

||||||

@ -1,8 +1,8 @@

|

|||||||

FROM python:3.10 as base

|

FROM python:3.10 as base

|

||||||

|

|

||||||

WORKDIR /app

|

WORKDIR /app

|

||||||

ADD requirements.txt .

|

ADD pyproject.toml .

|

||||||

RUN pip install -r requirements.txt && rm requirements.txt

|

RUN pip install . && rm pyproject.toml

|

||||||

ENV PYTHONPATH=/app

|

ENV PYTHONPATH=/app

|

||||||

ADD pr_agent pr_agent

|

ADD pr_agent pr_agent

|

||||||

ADD github_action/entrypoint.sh /

|

ADD github_action/entrypoint.sh /

|

||||||

|

|||||||

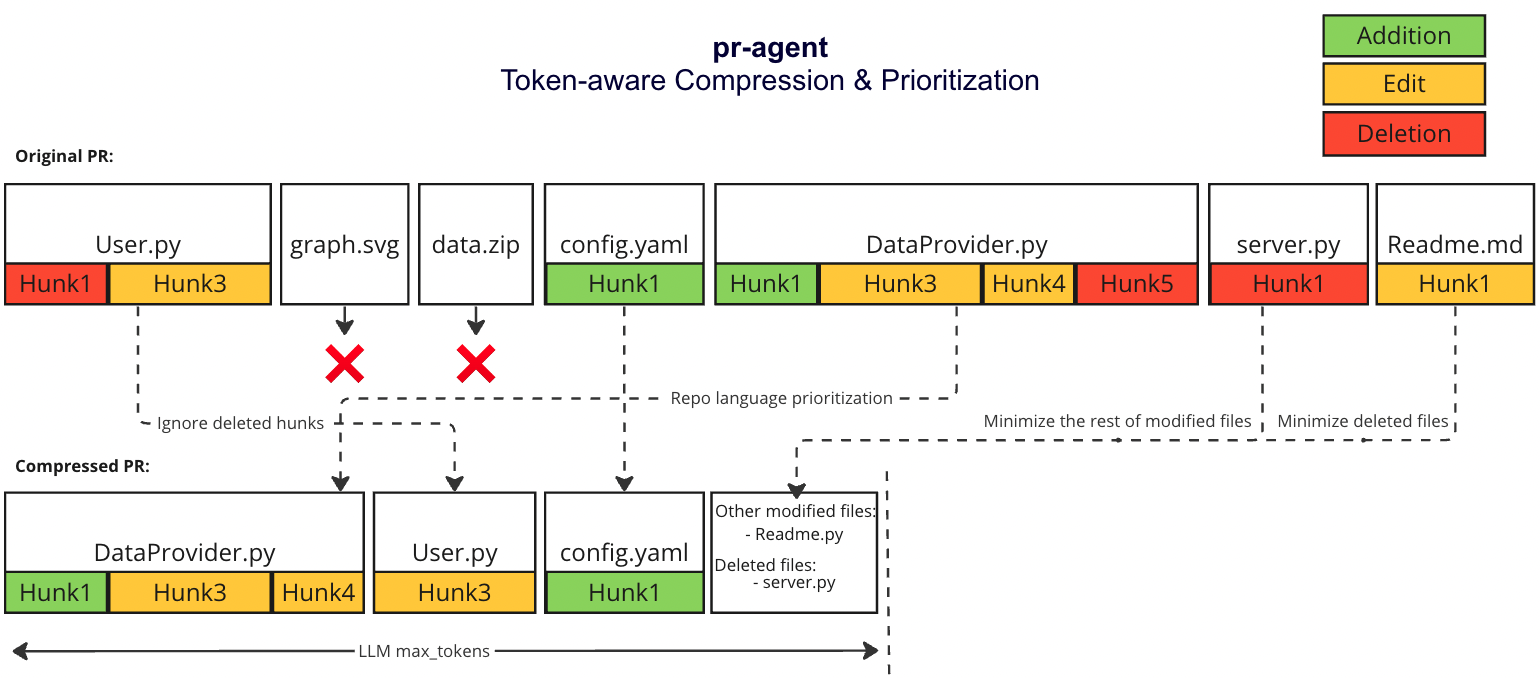

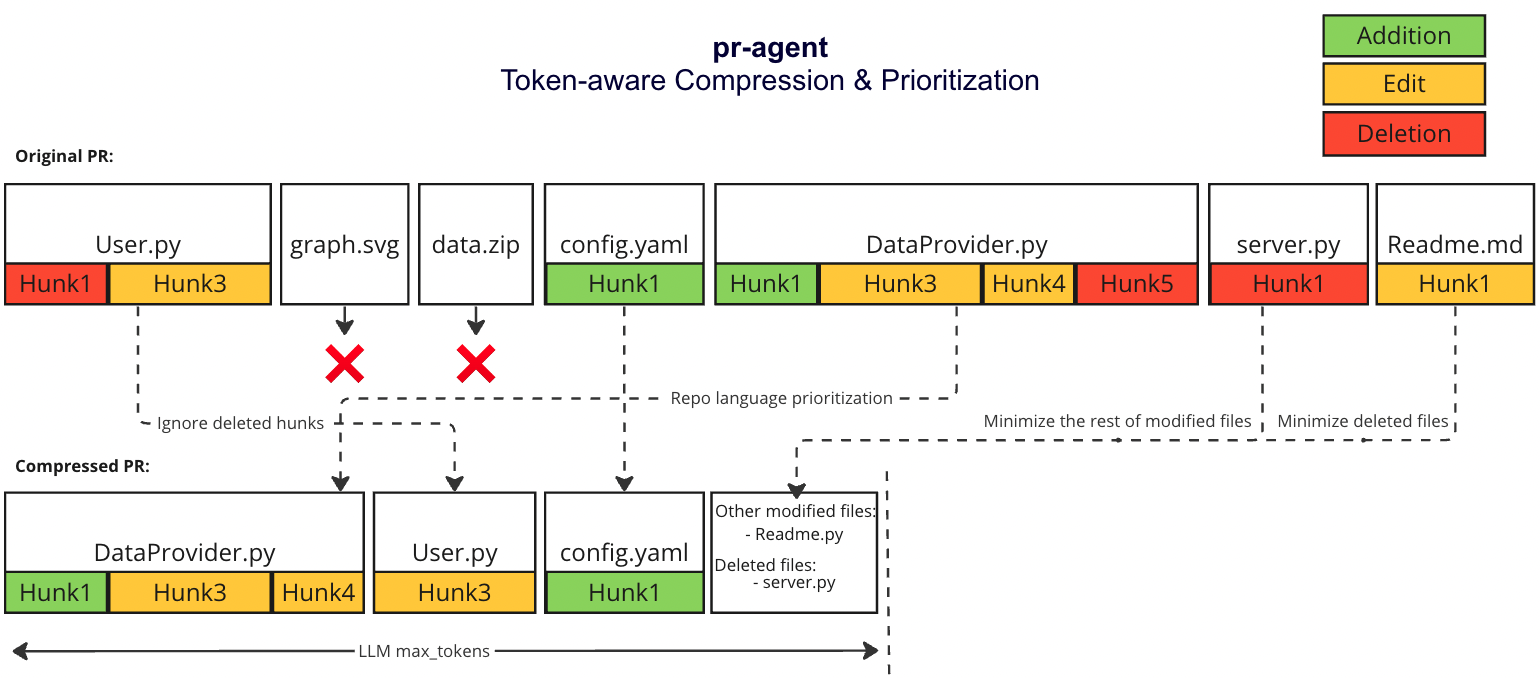

@ -31,7 +31,7 @@ We prioritize additions over deletions:

|

|||||||

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch

|

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch

|

||||||

#### Adaptive and token-aware file patch fitting

|

#### Adaptive and token-aware file patch fitting

|

||||||

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

||||||

1. Withing each language we sort the files by the number of tokens in the file (in descending order):

|

1. Within each language we sort the files by the number of tokens in the file (in descending order):

|

||||||

* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

||||||

2. Iterate through the patches in the order described above

|

2. Iterate through the patches in the order described above

|

||||||

2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

||||||

@ -39,4 +39,4 @@ We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches af

|

|||||||

4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.
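The fitting steps visible in this hunk can be sketched roughly as follows. This is a minimal illustration, not PR-Agent's implementation: `count_tokens` is a whitespace-based stand-in for tiktoken, and `sort_by_language`, `fit_patches`, the `language_of` mapping, and the "stop at the first patch that does not fit" policy are all assumptions made for the example.

```python
from collections import defaultdict


def count_tokens(text: str) -> int:
    """Stand-in tokenizer (assumption): counts whitespace-separated words.

    The document uses tiktoken; a real tokenizer would replace this function.
    """
    return len(text.split())


def sort_by_language(patches: dict[str, str], language_of: dict[str, str]) -> list[list[str]]:
    """Group files by language, then sort each group by token count, descending."""
    groups: dict[str, list[str]] = defaultdict(list)
    for filename in patches:
        groups[language_of[filename]].append(filename)
    return [
        sorted(files, key=lambda f: count_tokens(patches[f]), reverse=True)
        for files in groups.values()
    ]


def fit_patches(patches: dict[str, str], ordered_groups: list[list[str]],
                max_tokens: int, buffer_tokens: int) -> tuple[list[str], list[str]]:
    """Add patches in order until the budget (max minus buffer) would be exceeded.

    Assumption: once one patch does not fit, every later patch is skipped.
    """
    budget = max_tokens - buffer_tokens
    used = 0
    full = False
    included: list[str] = []
    skipped: list[str] = []
    for group in ordered_groups:
        for filename in group:
            cost = count_tokens(patches[filename])
            if not full and used + cost <= budget:
                included.append(filename)
                used += cost
            else:
                full = True
                skipped.append(filename)
    return included, skipped


# Hypothetical input mirroring the file names used in the example list above.
patches = {
    "file.py": "a b",
    "file2.py": "a b c d",
    "file3.js": "x",
    "file4.jsx": "x y z",
    "readme.md": "r",
}
language_of = {
    "file.py": "python", "file2.py": "python",
    "file3.js": "javascript", "file4.jsx": "javascript",
    "readme.md": "markdown",
}
ordered = sort_by_language(patches, language_of)
included, skipped = fit_patches(patches, ordered, max_tokens=10, buffer_tokens=2)
```

Skipped files are returned rather than dropped, so a caller could still list them by name in the prompt.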

|

||||||

|

|

||||||

### Example

|

### Example

|

||||||

|

|

||||||

|

|||||||

50

README.md


@ -23,7 +23,9 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull

|

|||||||

\

|

\

|

||||||

**Question Answering**: Answering free-text questions about the PR.

|

**Question Answering**: Answering free-text questions about the PR.

|

||||||

\

|

\

|

||||||

**Code Suggestion**: Committable code suggestions for improving the PR.

|

**Code Suggestions**: Committable code suggestions for improving the PR.

|

||||||

|

\

|

||||||

|

**Update Changelog**: Automatically updating the CHANGELOG.md file with the PR changes.

|

||||||

|

|

||||||

<h3>Example results:</h3>

|

<h3>Example results:</h3>

|

||||||

</div>

|

</div>

|

||||||

@ -63,9 +65,9 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull

|

|||||||

- [Overview](#overview)

|

- [Overview](#overview)

|

||||||

- [Try it now](#try-it-now)

|

- [Try it now](#try-it-now)

|

||||||

- [Installation](#installation)

|

- [Installation](#installation)

|

||||||

- [Usage and tools](#usage-and-tools)

|

|

||||||

- [Configuration](./CONFIGURATION.md)

|

- [Configuration](./CONFIGURATION.md)

|

||||||

- [How it works](#how-it-works)

|

- [How it works](#how-it-works)

|

||||||

|

- [Why use PR-Agent](#why-use-pr-agent)

|

||||||

- [Roadmap](#roadmap)

|

- [Roadmap](#roadmap)

|

||||||

- [Similar projects](#similar-projects)

|

- [Similar projects](#similar-projects)

|

||||||

</div>

|

</div>

|

||||||

@ -81,6 +83,7 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull

|

|||||||

| | Auto-Description | :white_check_mark: | :white_check_mark: | |

|

| | Auto-Description | :white_check_mark: | :white_check_mark: | |

|

||||||

| | Improve Code | :white_check_mark: | :white_check_mark: | |

|

| | Improve Code | :white_check_mark: | :white_check_mark: | |

|

||||||

| | Reflect and Review | :white_check_mark: | | |

|

| | Reflect and Review | :white_check_mark: | | |

|

||||||

|

| | Update CHANGELOG.md | :white_check_mark: | | |

|

||||||

| | | | | |

|

| | | | | |

|

||||||

| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

| | App / webhook | :white_check_mark: | :white_check_mark: | |

|

| | App / webhook | :white_check_mark: | :white_check_mark: | |

|

||||||

@ -90,14 +93,16 @@ CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull

|

|||||||

| CORE | PR compression | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

| CORE | PR compression | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

| | Repo language prioritization | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

| | Repo language prioritization | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

| | Adaptive and token-aware<br />file patch fitting | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

| | Adaptive and token-aware<br />file patch fitting | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

|

| | Multiple models support | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||||

| | Incremental PR Review | :white_check_mark: | | |

|

| | Incremental PR Review | :white_check_mark: | | |

|

||||||

|

|

||||||

Examples for invoking the different tools via the CLI:

|

Examples for invoking the different tools via the CLI:

|

||||||

- **Review**: python cli.py --pr-url=<pr_url> review

|

- **Review**: python cli.py --pr_url=<pr_url> review

|

||||||

- **Describe**: python cli.py --pr-url=<pr_url> describe

|

- **Describe**: python cli.py --pr_url=<pr_url> describe

|

||||||

- **Improve**: python cli.py --pr-url=<pr_url> improve

|

- **Improve**: python cli.py --pr_url=<pr_url> improve

|

||||||

- **Ask**: python cli.py --pr-url=<pr_url> ask "Write me a poem about this PR"

|

- **Ask**: python cli.py --pr_url=<pr_url> ask "Write me a poem about this PR"

|

||||||

- **Reflect**: python cli.py --pr-url=<pr_url> reflect

|

- **Reflect**: python cli.py --pr_url=<pr_url> reflect

|

||||||

|

- **Update Changelog**: python cli.py --pr_url=<pr_url> update_changelog

|

||||||

|

|

||||||

"<pr_url>" is the url of the relevant PR (for example: https://github.com/Codium-ai/pr-agent/pull/50).

|

"<pr_url>" is the url of the relevant PR (for example: https://github.com/Codium-ai/pr-agent/pull/50).

|

||||||

|

|

||||||

@ -130,36 +135,41 @@ There are several ways to use PR-Agent:

|

|||||||

- [Method 5: Run as a GitHub App](INSTALL.md#method-5-run-as-a-github-app)

|

- [Method 5: Run as a GitHub App](INSTALL.md#method-5-run-as-a-github-app)

|

||||||

- Allowing you to automate the review process on your private or public repositories

|

- Allowing you to automate the review process on your private or public repositories

|

||||||

|

|

||||||

## Usage and Tools

|

|

||||||

|

|

||||||

**PR-Agent** provides five types of interactions ("tools"): `"PR Reviewer"`, `"PR Q&A"`, `"PR Description"`, `"PR Code Sueggestions"` and `"PR Reflect and Review"`.

|

|

||||||

|

|

||||||

- The "PR Reviewer" tool automatically analyzes PRs, and provides various types of feedback.

|

|

||||||

- The "PR Q&A" tool answers free-text questions about the PR.

|

|

||||||

- The "PR Description" tool automatically sets the PR Title and body.

|

|

||||||

- The "PR Code Suggestion" tool provide inline code suggestions for the PR that can be applied and committed.

|

|

||||||

- The "PR Reflect and Review" tool initiates a dialog with the user, asks them to reflect on the PR, and then provides a more focused review.

|

|

||||||

|

|

||||||

## How it works

|

## How it works

|

||||||

|

|

||||||

|

The following diagram illustrates PR-Agent tools and their flow:

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

Check out the [PR Compression strategy](./PR_COMPRESSION.md) page for more details on how we convert a code diff to a manageable LLM prompt

|

Check out the [PR Compression strategy](./PR_COMPRESSION.md) page for more details on how we convert a code diff to a manageable LLM prompt

|

||||||

|

|

||||||

|

## Why use PR-Agent?

|

||||||

|

|

||||||

|

A reasonable question that can be asked is: `"Why use PR-Agent? What makes it stand out from existing tools?"`

|

||||||

|

|

||||||

|

Here are some advantages of PR-Agent:

|

||||||

|

|

||||||

|

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single GPT-4 call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.

|

||||||

|

- Our [PR Compression strategy](./PR_COMPRESSION.md) is a core ability that lets us effectively tackle both short and long PRs.

|

||||||

|

- Our JSON prompting strategy enables **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](./CONFIGURATION.md) file. Adding additional categories is easy and accessible.

|

||||||

|

- We support **multiple git providers** (GitHub, GitLab, Bitbucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT-4, GPT-3.5, Anthropic, Cohere, Llama2).

|

||||||

|

- We are open-source, and welcome contributions from the community.

|

||||||

|

|

||||||

|

|

||||||

## Roadmap

|

## Roadmap

|

||||||

|

|

||||||

- [ ] Support open-source models, as a replacement for OpenAI models. (Note - a minimal requirement for each open-source model is to have 8k+ context, and good support for generating JSON as an output)

|

- [x] Support additional models, as a replacement for OpenAI (see [here](https://github.com/Codium-ai/pr-agent/pull/172))

|

||||||

- [x] Support other Git providers, such as Gitlab and Bitbucket.

|

- [ ] Develop additional logic for handling large PRs

|

||||||

- [ ] Develop additional logic for handling large PRs, and compressing git patches

|

|

||||||

- [ ] Add additional context to the prompt. For example, repo (or relevant files) summarization, with tools such as [ctags](https://github.com/universal-ctags/ctags)

|

- [ ] Add additional context to the prompt. For example, repo (or relevant files) summarization, with tools such as [ctags](https://github.com/universal-ctags/ctags)

|

||||||

- [ ] Adding more tools. Possible directions:

|

- [ ] Adding more tools. Possible directions:

|

||||||

- [x] PR description

|

- [x] PR description

|

||||||

- [x] Inline code suggestions

|

- [x] Inline code suggestions

|

||||||

- [x] Reflect and review

|

- [x] Reflect and review

|

||||||

|

- [x] Rank the PR (see [here](https://github.com/Codium-ai/pr-agent/pull/89))

|

||||||

- [ ] Enforcing CONTRIBUTING.md guidelines

|

- [ ] Enforcing CONTRIBUTING.md guidelines

|

||||||

- [ ] Performance (are there any performance issues)

|

- [ ] Performance (are there any performance issues)

|

||||||

- [ ] Documentation (is the PR properly documented)

|

- [ ] Documentation (is the PR properly documented)

|

||||||

- [ ] Rank the PR importance

|

|

||||||

- [ ] ...

|

- [ ] ...

|

||||||

|

|

||||||

## Similar Projects

|

## Similar Projects

|

||||||

|

|||||||

@ -1,20 +1,24 @@

|

|||||||

FROM python:3.10 as base

|

FROM python:3.10 as base

|

||||||

|

|

||||||

WORKDIR /app

|

WORKDIR /app

|

||||||

ADD requirements.txt .

|

ADD pyproject.toml .

|

||||||

RUN pip install -r requirements.txt && rm requirements.txt

|

RUN pip install . && rm pyproject.toml

|

||||||

ENV PYTHONPATH=/app

|

ENV PYTHONPATH=/app

|

||||||

ADD pr_agent pr_agent

|

|

||||||

|

|

||||||

FROM base as github_app

|

FROM base as github_app

|

||||||

|

ADD pr_agent pr_agent

|

||||||

CMD ["python", "pr_agent/servers/github_app.py"]

|

CMD ["python", "pr_agent/servers/github_app.py"]

|

||||||

|

|

||||||

FROM base as github_polling

|

FROM base as github_polling

|

||||||

|

ADD pr_agent pr_agent

|

||||||

CMD ["python", "pr_agent/servers/github_polling.py"]

|

CMD ["python", "pr_agent/servers/github_polling.py"]

|

||||||

|

|

||||||

FROM base as test

|

FROM base as test

|

||||||

ADD requirements-dev.txt .

|

ADD requirements-dev.txt .

|

||||||

RUN pip install -r requirements-dev.txt && rm requirements-dev.txt

|

RUN pip install -r requirements-dev.txt && rm requirements-dev.txt

|

||||||

|

ADD pr_agent pr_agent

|

||||||

|

ADD tests tests

|

||||||

|

|

||||||

FROM base as cli

|

FROM base as cli

|

||||||

|

ADD pr_agent pr_agent

|

||||||

ENTRYPOINT ["python", "pr_agent/cli.py"]

|

ENTRYPOINT ["python", "pr_agent/cli.py"]

|

||||||

|

|||||||

@ -4,9 +4,9 @@ RUN yum update -y && \

|

|||||||

yum install -y gcc python3-devel && \

|

yum install -y gcc python3-devel && \

|

||||||

yum clean all

|

yum clean all

|

||||||

|

|

||||||

ADD requirements.txt .

|

ADD pyproject.toml .

|

||||||

RUN pip install -r requirements.txt && rm requirements.txt

|

RUN pip install . && rm pyproject.toml

|

||||||

RUN pip install mangum==16.0.0

|

RUN pip install mangum==0.17.0

|

||||||

COPY pr_agent/ ${LAMBDA_TASK_ROOT}/pr_agent/

|

COPY pr_agent/ ${LAMBDA_TASK_ROOT}/pr_agent/

|

||||||

|

|

||||||

CMD ["pr_agent.servers.serverless.serverless"]

|

CMD ["pr_agent.servers.serverless.serverless"]

|

||||||

|

|||||||

@ -1,33 +1,79 @@

|

|||||||

import re

|

import logging

|

||||||

|

import os

|

||||||

|

import shlex

|

||||||

|

import tempfile

|

||||||

|

|

||||||

from pr_agent.config_loader import settings

|

from pr_agent.algo.utils import update_settings_from_args

|

||||||

|

from pr_agent.config_loader import get_settings

|

||||||

|

from pr_agent.git_providers import get_git_provider

|

||||||

from pr_agent.tools.pr_code_suggestions import PRCodeSuggestions

|

from pr_agent.tools.pr_code_suggestions import PRCodeSuggestions

|

||||||

from pr_agent.tools.pr_description import PRDescription

|

from pr_agent.tools.pr_description import PRDescription

|

||||||

from pr_agent.tools.pr_information_from_user import PRInformationFromUser

|

from pr_agent.tools.pr_information_from_user import PRInformationFromUser

|

||||||

from pr_agent.tools.pr_questions import PRQuestions

|

from pr_agent.tools.pr_questions import PRQuestions

|

||||||

from pr_agent.tools.pr_reviewer import PRReviewer

|

from pr_agent.tools.pr_reviewer import PRReviewer

|

||||||

|

from pr_agent.tools.pr_update_changelog import PRUpdateChangelog

|

||||||

|

from pr_agent.tools.pr_config import PRConfig

|

||||||

|

|

||||||

|

command2class = {

|

||||||

|

"answer": PRReviewer,

|

||||||

|

"review": PRReviewer,

|

||||||

|

"review_pr": PRReviewer,

|

||||||

|

"reflect": PRInformationFromUser,

|

||||||

|

"reflect_and_review": PRInformationFromUser,

|

||||||

|

"describe": PRDescription,

|

||||||

|

"describe_pr": PRDescription,

|

||||||

|

"improve": PRCodeSuggestions,

|

||||||

|

"improve_code": PRCodeSuggestions,

|

||||||

|

"ask": PRQuestions,

|

||||||

|

"ask_question": PRQuestions,

|

||||||

|

"update_changelog": PRUpdateChangelog,

|

||||||

|

"config": PRConfig,

|

||||||

|

"settings": PRConfig,

|

||||||

|

}

|

||||||

|

|

||||||

|

commands = list(command2class.keys())

|

||||||

|

|

||||||

class PRAgent:

|

class PRAgent:

|

||||||

def __init__(self):

|

def __init__(self):

|

||||||

pass

|

pass

|

||||||

|

|

||||||

async def handle_request(self, pr_url, request) -> bool:

|

async def handle_request(self, pr_url, request, notify=None) -> bool:

|

||||||

action, *args = request.strip().split()

|

# First, apply repo-specific settings if they exist

|

||||||

if any(cmd == action for cmd in ["/answer"]):

|

if get_settings().config.use_repo_settings_file:

|

||||||

await PRReviewer(pr_url, is_answer=True).review()

|

repo_settings_file = None

|

||||||

elif any(cmd == action for cmd in ["/review", "/review_pr", "/reflect_and_review"]):

|

try:

|

||||||

if settings.pr_reviewer.ask_and_reflect or "/reflect_and_review" in request:

|

git_provider = get_git_provider()(pr_url)

|

||||||

await PRInformationFromUser(pr_url).generate_questions()

|

repo_settings = git_provider.get_repo_settings()

|

||||||

else:

|

if repo_settings:

|

||||||

await PRReviewer(pr_url, args=args).review()

|

repo_settings_file = None

|

||||||

elif any(cmd == action for cmd in ["/describe", "/describe_pr"]):

|

fd, repo_settings_file = tempfile.mkstemp(suffix='.toml')

|

||||||

await PRDescription(pr_url).describe()

|

os.write(fd, repo_settings)

|

||||||

elif any(cmd == action for cmd in ["/improve", "/improve_code"]):

|

get_settings().load_file(repo_settings_file)

|

||||||

await PRCodeSuggestions(pr_url).suggest()

|

finally:

|

||||||

elif any(cmd == action for cmd in ["/ask", "/ask_question"]):

|

if repo_settings_file:

|

||||||

await PRQuestions(pr_url, args).answer()

|

try:

|

||||||

|

os.remove(repo_settings_file)

|

||||||

|

except Exception as e:

|

||||||

|

logging.error(f"Failed to remove temporary settings file {repo_settings_file}: {e}")

|

||||||

|

|

||||||

|

# Then, apply user-specific settings if they exist

|

||||||

|

request = request.replace("'", "\\'")

|

||||||

|

lexer = shlex.shlex(request, posix=True)

|

||||||

|

lexer.whitespace_split = True

|

||||||

|

action, *args = list(lexer)

|

||||||

|

args = update_settings_from_args(args)

|

||||||

|

|

||||||

|

action = action.lstrip("/").lower()

|

||||||

|

if action == "reflect_and_review" and not get_settings().pr_reviewer.ask_and_reflect:

|

||||||

|

action = "review"

|

||||||

|

if action == "answer":

|

||||||

|

if notify:

|

||||||

|

notify()

|

||||||

|

await PRReviewer(pr_url, is_answer=True, args=args).run()

|

||||||

|

elif action in command2class:

|

||||||

|

if notify:

|

||||||

|

notify()

|

||||||

|

await command2class[action](pr_url, args=args).run()

|

||||||

else:

|

else:

|

||||||

return False

|

return False

|

||||||

|

|

||||||

return True

|

return True
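To make the new parsing path in `handle_request` easier to follow, here is a small standalone sketch of how a comment such as `/review --pr_reviewer.extra_instructions="..."` is tokenized with `shlex`. `parse_request` mirrors the lexer calls in the diff; `split_overrides` is a hypothetical stand-in for `update_settings_from_args`, whose real behavior is not shown in this hunk.

```python
import shlex


def parse_request(request: str) -> tuple[str, list[str]]:
    """Tokenize a bot command the way the new handle_request does.

    Apostrophes are escaped first so that free text like "What's" is not
    treated as the start of a quoted string.
    """
    request = request.replace("'", "\\'")
    lexer = shlex.shlex(request, posix=True)
    lexer.whitespace_split = True
    action, *args = list(lexer)  # raises ValueError on an empty request
    return action.lstrip("/").lower(), args


def split_overrides(args: list[str]) -> tuple[dict[str, str], list[str]]:
    """Hypothetical helper (assumption): separate `--section.key=value`
    arguments from the remaining positional arguments."""
    overrides: dict[str, str] = {}
    remaining: list[str] = []
    for arg in args:
        if arg.startswith("--") and "=" in arg:
            key, value = arg[2:].split("=", 1)
            overrides[key] = value
        else:
            remaining.append(arg)
    return overrides, remaining


action, args = parse_request(
    '/review --pr_reviewer.extra_instructions="focus on tests" '
    "--pr_reviewer.require_score_review=false"
)
overrides, remaining = split_overrides(args)

ask_action, ask_args = parse_request("/ask What's new in this PR?")
```

Because the lexer runs in POSIX mode, the double quotes around `focus on tests` are consumed and the value arrives as a single argument, which a plain `str.split()` would not achieve.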

|

||||||

|

|||||||

@ -7,4 +7,8 @@ MAX_TOKENS = {

|

|||||||

'gpt-4': 8000,

|

'gpt-4': 8000,

|

||||||

'gpt-4-0613': 8000,

|

'gpt-4-0613': 8000,

|

||||||

'gpt-4-32k': 32000,

|

'gpt-4-32k': 32000,

|

||||||

|

'claude-instant-1': 100000,

|

||||||

|

'claude-2': 100000,

|

||||||

|

'command-nightly': 4096,

|

||||||

|

'replicate/llama-2-70b-chat:2c1608e18606fad2812020dc541930f2d0495ce32eee50074220b87300bc16e1': 4096,

|

||||||

}

|

}

|

||||||

|
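The table above maps model names to context sizes. One plausible way such a table gets used is sketched below, under stated assumptions: `fits_in_context` is a hypothetical helper, the dictionary repeats only entries visible in this hunk, and the default buffer of 1000 mirrors the `OUTPUT_BUFFER_TOKENS_SOFT_THRESHOLD` constant that appears later in this diff. How the project actually applies the budget is not shown here.

```python
# Subset of the MAX_TOKENS entries visible in the hunk above.
MAX_TOKENS = {
    "gpt-4": 8000,
    "gpt-4-32k": 32000,
    "claude-2": 100000,
    "command-nightly": 4096,
}


def fits_in_context(model: str, prompt_tokens: int, output_buffer: int = 1000) -> bool:
    """Return True if the prompt plus a reserved output buffer fits the model."""
    # A KeyError here signals a model that has no entry in the table.
    return prompt_tokens + output_buffer < MAX_TOKENS[model]
```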

|||||||

@@ -1,12 +1,15 @@

|

|||||||

import logging

|

import logging

|

||||||

|

|

||||||

|

import litellm

|

||||||

import openai

|

import openai

|

||||||

from openai.error import APIError, Timeout, TryAgain, RateLimitError

|

from litellm import acompletion

|

||||||

|

from openai.error import APIError, RateLimitError, Timeout, TryAgain

|

||||||

from retry import retry

|

from retry import retry

|

||||||

|

|

||||||

from pr_agent.config_loader import settings

|

from pr_agent.config_loader import get_settings

|

||||||

|

|

||||||

|

OPENAI_RETRIES = 5

|

||||||

|

|

||||||

OPENAI_RETRIES=5

|

|

||||||

|

|

||||||

class AiHandler:

|

class AiHandler:

|

||||||

"""

|

"""

|

||||||

@@ -21,16 +24,26 @@ class AiHandler:

|

|||||||

Raises a ValueError if the OpenAI key is missing.

|

Raises a ValueError if the OpenAI key is missing.

|

||||||

"""

|

"""

|

||||||

try:

|

try:

|

||||||

openai.api_key = settings.openai.key

|

openai.api_key = get_settings().openai.key

|

||||||

if settings.get("OPENAI.ORG", None):

|

litellm.openai_key = get_settings().openai.key

|

||||||

openai.organization = settings.openai.org

|

self.azure = False

|

||||||

self.deployment_id = settings.get("OPENAI.DEPLOYMENT_ID", None)

|

if get_settings().get("OPENAI.ORG", None):

|

||||||

if settings.get("OPENAI.API_TYPE", None):

|

litellm.organization = get_settings().openai.org

|

||||||

openai.api_type = settings.openai.api_type

|

self.deployment_id = get_settings().get("OPENAI.DEPLOYMENT_ID", None)

|

||||||

if settings.get("OPENAI.API_VERSION", None):

|

if get_settings().get("OPENAI.API_TYPE", None):

|

||||||

openai.api_version = settings.openai.api_version

|

if get_settings().openai.api_type == "azure":

|

||||||

if settings.get("OPENAI.API_BASE", None):

|

self.azure = True

|

||||||

openai.api_base = settings.openai.api_base

|

litellm.azure_key = get_settings().openai.key

|

||||||

|

if get_settings().get("OPENAI.API_VERSION", None):

|

||||||

|

litellm.api_version = get_settings().openai.api_version

|

||||||

|

if get_settings().get("OPENAI.API_BASE", None):

|

||||||

|

litellm.api_base = get_settings().openai.api_base

|

||||||

|

if get_settings().get("ANTHROPIC.KEY", None):

|

||||||

|

litellm.anthropic_key = get_settings().anthropic.key

|

||||||

|

if get_settings().get("COHERE.KEY", None):

|

||||||

|

litellm.cohere_key = get_settings().cohere.key

|

||||||

|

if get_settings().get("REPLICATE.KEY", None):

|

||||||

|

litellm.replicate_key = get_settings().replicate.key

|

||||||

except AttributeError as e:

|

except AttributeError as e:

|

||||||

raise ValueError("OpenAI key is required") from e

|

raise ValueError("OpenAI key is required") from e

|

||||||

|

|

||||||

@@ -57,15 +70,17 @@ class AiHandler:

|

|||||||

TryAgain: If there is an attribute error during OpenAI inference.

|

TryAgain: If there is an attribute error during OpenAI inference.

|

||||||

"""

|

"""

|

||||||

try:

|

try:

|

||||||

response = await openai.ChatCompletion.acreate(

|

response = await acompletion(

|

||||||

model=model,

|

model=model,

|

||||||

deployment_id=self.deployment_id,

|

deployment_id=self.deployment_id,

|

||||||

messages=[

|

messages=[

|

||||||

{"role": "system", "content": system},

|

{"role": "system", "content": system},

|

||||||

{"role": "user", "content": user}

|

{"role": "user", "content": user}

|

||||||

],

|

],

|

||||||

temperature=temperature,

|

temperature=temperature,

|

||||||

)

|

azure=self.azure,

|

||||||

|

force_timeout=get_settings().config.ai_timeout

|

||||||

|

)

|

||||||

except (APIError, Timeout, TryAgain) as e:

|

except (APIError, Timeout, TryAgain) as e:

|

||||||

logging.error("Error during OpenAI inference: ", e)

|

logging.error("Error during OpenAI inference: ", e)

|

||||||

raise

|

raise

|

||||||

@@ -75,8 +90,9 @@ class AiHandler:

|

|||||||

except (Exception) as e:

|

except (Exception) as e:

|

||||||

logging.error("Unknown error during OpenAI inference: ", e)

|

logging.error("Unknown error during OpenAI inference: ", e)

|

||||||

raise TryAgain from e

|

raise TryAgain from e

|

||||||

if response is None or len(response.choices) == 0:

|

if response is None or len(response["choices"]) == 0:

|

||||||

raise TryAgain

|

raise TryAgain

|

||||||

resp = response.choices[0]['message']['content']

|

resp = response["choices"][0]['message']['content']

|

||||||

finish_reason = response.choices[0].finish_reason

|

finish_reason = response["choices"][0]["finish_reason"]

|

||||||

return resp, finish_reason

|

print(resp, finish_reason)

|

||||||

|

return resp, finish_reason

|

||||||

|
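The last hunk of this file switches from attribute access (`response.choices`) to key access (`response["choices"]`). The dictionary-style handling can be sketched without any network call. In the sketch below `TryAgain` is a local stand-in for `openai.error.TryAgain`, `extract_completion` is a hypothetical helper name, and the response is assumed to behave like a plain `dict`.

```python
from typing import Any, Mapping, Optional, Tuple


class TryAgain(Exception):
    """Local stand-in for openai.error.TryAgain (assumption for this sketch)."""


def extract_completion(response: Optional[Mapping[str, Any]]) -> Tuple[str, str]:
    """Return (content, finish_reason) from a dict-style chat completion."""
    if response is None or len(response["choices"]) == 0:
        raise TryAgain  # an empty result is treated as retryable
    choice = response["choices"][0]
    return choice["message"]["content"], choice["finish_reason"]
```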

|||||||

@@ -3,7 +3,7 @@ from __future__ import annotations

|

|||||||

import logging

|

import logging

|

||||||

import re

|

import re

|

||||||

|

|

||||||

from pr_agent.config_loader import settings

|

from pr_agent.config_loader import get_settings

|

||||||

|

|

||||||

|

|

||||||

def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

||||||

@@ -41,7 +41,11 @@ def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

|||||||

extended_patch_lines.extend(

|

extended_patch_lines.extend(

|

||||||

original_lines[start1 + size1 - 1:start1 + size1 - 1 + num_lines])

|

original_lines[start1 + size1 - 1:start1 + size1 - 1 + num_lines])

|

||||||

|

|

||||||

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

try:

|

||||||

|

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

||||||

|

except: # '@@ -0,0 +1 @@' case

|

||||||

|

start1, size1, size2 = map(int, match.groups()[:3])

|

||||||

|

start2 = 0

|

||||||

section_header = match.groups()[4]

|

section_header = match.groups()[4]

|

||||||

extended_start1 = max(1, start1 - num_lines)

|

extended_start1 = max(1, start1 - num_lines)

|

||||||

extended_size1 = size1 + (start1 - extended_start1) + num_lines

|

extended_size1 = size1 + (start1 - extended_start1) + num_lines

|

||||||

@@ -55,7 +59,7 @@ def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

|||||||

continue

|

continue

|

||||||

extended_patch_lines.append(line)

|

extended_patch_lines.append(line)

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

if settings.config.verbosity_level >= 2:

|

if get_settings().config.verbosity_level >= 2:

|

||||||

logging.error(f"Failed to extend patch: {e}")

|

logging.error(f"Failed to extend patch: {e}")

|

||||||

return patch_str

|

return patch_str

|

||||||

|

|

||||||

@@ -126,14 +130,14 @@ def handle_patch_deletions(patch: str, original_file_content_str: str,

|

|||||||

"""

|

"""

|

||||||

if not new_file_content_str:

|

if not new_file_content_str:

|

||||||

# logic for handling deleted files - don't show patch, just show that the file was deleted

|

# logic for handling deleted files - don't show patch, just show that the file was deleted

|

||||||

if settings.config.verbosity_level > 0:

|

if get_settings().config.verbosity_level > 0:

|

||||||

logging.info(f"Processing file: {file_name}, minimizing deletion file")

|

logging.info(f"Processing file: {file_name}, minimizing deletion file")

|

||||||

patch = None # file was deleted

|

patch = None # file was deleted

|

||||||

else:

|

else:

|

||||||

patch_lines = patch.splitlines()

|

patch_lines = patch.splitlines()

|

||||||

patch_new = omit_deletion_hunks(patch_lines)

|

patch_new = omit_deletion_hunks(patch_lines)

|

||||||

if patch != patch_new:

|

if patch != patch_new:

|

||||||

if settings.config.verbosity_level > 0:

|

if get_settings().config.verbosity_level > 0:

|

||||||

logging.info(f"Processing file: {file_name}, hunks were deleted")

|

logging.info(f"Processing file: {file_name}, hunks were deleted")

|

||||||

patch = patch_new

|

patch = patch_new

|

||||||

return patch

|

return patch

|

||||||

@@ -141,7 +145,8 @@ def handle_patch_deletions(patch: str, original_file_content_str: str,

|

|||||||

|

|

||||||

def convert_to_hunks_with_lines_numbers(patch: str, file) -> str:

|

def convert_to_hunks_with_lines_numbers(patch: str, file) -> str:

|

||||||

"""

|

"""

|

||||||

Convert a given patch string into a string with line numbers for each hunk, indicating the new and old content of the file.

|

Convert a given patch string into a string with line numbers for each hunk, indicating the new and old content of

|

||||||

|

the file.

|

||||||

|

|

||||||

Args:

|

Args:

|

||||||

patch (str): The patch string to be converted.

|

patch (str): The patch string to be converted.

|

||||||

@@ -197,7 +202,12 @@ def convert_to_hunks_with_lines_numbers(patch: str, file) -> str:

|

|||||||

patch_with_lines_str += f"{line_old}\n"

|

patch_with_lines_str += f"{line_old}\n"

|

||||||

new_content_lines = []

|

new_content_lines = []

|

||||||

old_content_lines = []

|

old_content_lines = []

|

||||||

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

try:

|

||||||

|

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

||||||

|

except: # '@@ -0,0 +1 @@' case

|

||||||

|

start1, size1, size2 = map(int, match.groups()[:3])

|

||||||

|

start2 = 0

|

||||||

|

|

||||||

elif line.startswith('+'):

|

elif line.startswith('+'):

|

||||||

new_content_lines.append(line)

|

new_content_lines.append(line)

|

||||||

elif line.startswith('-'):

|

elif line.startswith('-'):

|

||||||

|
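Both hunks in this file add a fallback for headers such as `@@ -0,0 +1 @@`, where a count is omitted. In the unified diff format an omitted count means 1. The sketch below shows one way to parse such headers without a `try`/`except`, reusing the hunk-header pattern that appears in `find_line_number_of_relevant_line_in_file` in this diff. `parse_hunk_header` is a hypothetical helper, and its handling differs from the fallback shown in the diff, which sets `start2 = 0`.

```python
import re
from typing import Tuple

RE_HUNK_HEADER = re.compile(
    r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@[ ]?(.*)")


def parse_hunk_header(line: str) -> Tuple[int, int, int, int, str]:
    """Return (start1, size1, start2, size2, section_header) for a hunk header."""
    match = RE_HUNK_HEADER.match(line)
    if match is None:
        raise ValueError(f"not a hunk header: {line!r}")
    start1, size1, start2, size2, section_header = match.groups()
    return (
        int(start1),
        int(size1) if size1 is not None else 1,  # omitted count means 1
        int(start2),
        int(size2) if size2 is not None else 1,
        section_header,
    )
```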

|||||||

@@ -1,19 +1,19 @@

|

|||||||

# Language Selection, source: https://github.com/bigcode-project/bigcode-dataset/blob/main/language_selection/programming-languages-to-file-extensions.json # noqa E501

|

# Language Selection, source: https://github.com/bigcode-project/bigcode-dataset/blob/main/language_selection/programming-languages-to-file-extensions.json # noqa E501

|

||||||

from typing import Dict

|

from typing import Dict

|

||||||

|

|

||||||

from pr_agent.config_loader import settings

|

from pr_agent.config_loader import get_settings

|

||||||

|

|

||||||

language_extension_map_org = settings.language_extension_map_org

|

language_extension_map_org = get_settings().language_extension_map_org

|

||||||

language_extension_map = {k.lower(): v for k, v in language_extension_map_org.items()}

|

language_extension_map = {k.lower(): v for k, v in language_extension_map_org.items()}

|

||||||

|

|

||||||

# Bad Extensions, source: https://github.com/EleutherAI/github-downloader/blob/345e7c4cbb9e0dc8a0615fd995a08bf9d73b3fe6/download_repo_text.py # noqa: E501

|

# Bad Extensions, source: https://github.com/EleutherAI/github-downloader/blob/345e7c4cbb9e0dc8a0615fd995a08bf9d73b3fe6/download_repo_text.py # noqa: E501

|

||||||

bad_extensions = settings.bad_extensions.default

|

bad_extensions = get_settings().bad_extensions.default

|

||||||

if settings.config.use_extra_bad_extensions:

|

if get_settings().config.use_extra_bad_extensions:

|

||||||

bad_extensions += settings.bad_extensions.extra

|

bad_extensions += get_settings().bad_extensions.extra

|

||||||

|

|

||||||

|

|

||||||

def filter_bad_extensions(files):

|

def filter_bad_extensions(files):

|

||||||

return [f for f in files if is_valid_file(f.filename)]

|

return [f for f in files if f.filename is not None and is_valid_file(f.filename)]

|

||||||

|

|

||||||

|

|

||||||

def is_valid_file(filename):

|

def is_valid_file(filename):

|

||||||

|

|||||||

@@ -1,15 +1,19 @@

|

|||||||

from __future__ import annotations

|

from __future__ import annotations

|

||||||

|

|

||||||

|

import difflib

|

||||||

import logging

|

import logging

|

||||||

from typing import Tuple, Union, Callable, List

|

import re

|

||||||

|

import traceback

|

||||||

|

from typing import Any, Callable, List, Tuple

|

||||||

|

|

||||||

|

from github import RateLimitExceededException

|

||||||

|

|

||||||

from pr_agent.algo import MAX_TOKENS

|

from pr_agent.algo import MAX_TOKENS

|

||||||

from pr_agent.algo.git_patch_processing import convert_to_hunks_with_lines_numbers, extend_patch, handle_patch_deletions

|

from pr_agent.algo.git_patch_processing import convert_to_hunks_with_lines_numbers, extend_patch, handle_patch_deletions

|

||||||

from pr_agent.algo.language_handler import sort_files_by_main_languages

|

from pr_agent.algo.language_handler import sort_files_by_main_languages

|

||||||

from pr_agent.algo.token_handler import TokenHandler

|

from pr_agent.algo.token_handler import TokenHandler, get_token_encoder

|

||||||

from pr_agent.algo.utils import load_large_diff

|

from pr_agent.config_loader import get_settings

|

||||||

from pr_agent.config_loader import settings

|

from pr_agent.git_providers.git_provider import FilePatchInfo, GitProvider

|

||||||

from pr_agent.git_providers.git_provider import GitProvider

|

|

||||||

|

|

||||||

DELETED_FILES_ = "Deleted files:\n"

|

DELETED_FILES_ = "Deleted files:\n"

|

||||||

|

|

||||||

@@ -19,18 +23,21 @@ OUTPUT_BUFFER_TOKENS_SOFT_THRESHOLD = 1000

|

|||||||

OUTPUT_BUFFER_TOKENS_HARD_THRESHOLD = 600

|

OUTPUT_BUFFER_TOKENS_HARD_THRESHOLD = 600

|

||||||

PATCH_EXTRA_LINES = 3

|

PATCH_EXTRA_LINES = 3

|

||||||

|

|

||||||

|

|

||||||

def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: str,

|

def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: str,

|

||||||

add_line_numbers_to_hunks: bool = False, disable_extra_lines: bool = False) -> str:

|

add_line_numbers_to_hunks: bool = False, disable_extra_lines: bool = False) -> str:

|

||||||

"""

|

"""

|

||||||

Returns a string with the diff of the pull request, applying diff minimization techniques if needed.

|

Returns a string with the diff of the pull request, applying diff minimization techniques if needed.

|

||||||

|

|

||||||

Args:

|

Args:

|

||||||

git_provider (GitProvider): An object of the GitProvider class representing the Git provider used for the pull request.

|

git_provider (GitProvider): An object of the GitProvider class representing the Git provider used for the pull

|

||||||

token_handler (TokenHandler): An object of the TokenHandler class used for handling tokens in the context of the pull request.

|

request.

|

||||||

|

token_handler (TokenHandler): An object of the TokenHandler class used for handling tokens in the context of the

|

||||||

|

pull request.

|

||||||

model (str): The name of the model used for tokenization.

|

model (str): The name of the model used for tokenization.

|

||||||

add_line_numbers_to_hunks (bool, optional): A boolean indicating whether to add line numbers to the hunks in the diff. Defaults to False.

|

add_line_numbers_to_hunks (bool, optional): A boolean indicating whether to add line numbers to the hunks in the

|

||||||

disable_extra_lines (bool, optional): A boolean indicating whether to disable the extension of each patch with extra lines of context. Defaults to False.

|

diff. Defaults to False.

|

||||||

|

disable_extra_lines (bool, optional): A boolean indicating whether to disable the extension of each patch with

|

||||||

|

extra lines of context. Defaults to False.

|

||||||

|

|

||||||

Returns:

|

Returns:

|

||||||

str: A string with the diff of the pull request, applying diff minimization techniques if needed.

|

str: A string with the diff of the pull request, applying diff minimization techniques if needed.

|

||||||

@@ -40,7 +47,11 @@ def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: s

|

|||||||

global PATCH_EXTRA_LINES

|

global PATCH_EXTRA_LINES

|

||||||

PATCH_EXTRA_LINES = 0

|

PATCH_EXTRA_LINES = 0

|

||||||

|

|

||||||

diff_files = list(git_provider.get_diff_files())

|

try:

|

||||||

|

diff_files = git_provider.get_diff_files()

|

||||||

|

except RateLimitExceededException as e:

|

||||||

|

logging.error(f"Rate limit exceeded for git provider API. original message {e}")

|

||||||

|

raise

|

||||||

|

|

||||||

# get pr languages

|

# get pr languages

|

||||||

pr_languages = sort_files_by_main_languages(git_provider.get_languages(), diff_files)

|

pr_languages = sort_files_by_main_languages(git_provider.get_languages(), diff_files)

|

||||||

@@ -71,10 +82,12 @@ def pr_generate_extended_diff(pr_languages: list, token_handler: TokenHandler,

|

|||||||

add_line_numbers_to_hunks: bool) -> \

|

add_line_numbers_to_hunks: bool) -> \

|

||||||

Tuple[list, int]:

|

Tuple[list, int]:

|

||||||

"""

|

"""

|

||||||

Generate a standard diff string with patch extension, while counting the number of tokens used and applying diff minimization techniques if needed.

|

Generate a standard diff string with patch extension, while counting the number of tokens used and applying diff

|

||||||

|

minimization techniques if needed.

|

||||||

|

|

||||||

Args:

|

Args:

|

||||||

- pr_languages: A list of dictionaries representing the languages used in the pull request and their corresponding files.

|

- pr_languages: A list of dictionaries representing the languages used in the pull request and their corresponding

|

||||||

|

files.

|

||||||

- token_handler: An object of the TokenHandler class used for handling tokens in the context of the pull request.

|

- token_handler: An object of the TokenHandler class used for handling tokens in the context of the pull request.

|

||||||

- add_line_numbers_to_hunks: A boolean indicating whether to add line numbers to the hunks in the diff.

|

- add_line_numbers_to_hunks: A boolean indicating whether to add line numbers to the hunks in the diff.

|

||||||

|

|

||||||

@@ -87,12 +100,7 @@ def pr_generate_extended_diff(pr_languages: list, token_handler: TokenHandler,

|

|||||||

for lang in pr_languages:

|

for lang in pr_languages:

|

||||||

for file in lang['files']:

|

for file in lang['files']:

|

||||||

original_file_content_str = file.base_file

|

original_file_content_str = file.base_file

|

||||||

new_file_content_str = file.head_file

|

|

||||||

patch = file.patch

|

patch = file.patch

|

||||||

|

|

||||||

# handle the case of large patch, that initially was not loaded

|

|

||||||

patch = load_large_diff(file, new_file_content_str, original_file_content_str, patch)

|

|

||||||

|

|

||||||

if not patch:

|

if not patch:

|

||||||

continue

|

continue

|

||||||

|

|

||||||

@@ -114,10 +122,13 @@ def pr_generate_extended_diff(pr_languages: list, token_handler: TokenHandler,

|

|||||||

def pr_generate_compressed_diff(top_langs: list, token_handler: TokenHandler, model: str,

|

def pr_generate_compressed_diff(top_langs: list, token_handler: TokenHandler, model: str,

|

||||||

convert_hunks_to_line_numbers: bool) -> Tuple[list, list, list]:

|

convert_hunks_to_line_numbers: bool) -> Tuple[list, list, list]:

|

||||||

"""

|

"""

|

||||||

Generate a compressed diff string for a pull request, using diff minimization techniques to reduce the number of tokens used.

|

Generate a compressed diff string for a pull request, using diff minimization techniques to reduce the number of

|

||||||

|

tokens used.

|

||||||

Args:

|

Args:

|

||||||

top_langs (list): A list of dictionaries representing the languages used in the pull request and their corresponding files.

|

top_langs (list): A list of dictionaries representing the languages used in the pull request and their

|

||||||

token_handler (TokenHandler): An object of the TokenHandler class used for handling tokens in the context of the pull request.

|

corresponding files.

|

||||||

|

token_handler (TokenHandler): An object of the TokenHandler class used for handling tokens in the context of the

|

||||||

|

pull request.

|

||||||

model (str): The model used for tokenization.

|

model (str): The model used for tokenization.

|

||||||

convert_hunks_to_line_numbers (bool): A boolean indicating whether to convert hunks to line numbers in the diff.

|

convert_hunks_to_line_numbers (bool): A boolean indicating whether to convert hunks to line numbers in the diff.

|

||||||

Returns:

|

Returns:

|

||||||

@@ -147,7 +158,6 @@ def pr_generate_compressed_diff(top_langs: list, token_handler: TokenHandler, mo

|

|||||||

original_file_content_str = file.base_file

|

original_file_content_str = file.base_file

|

||||||

new_file_content_str = file.head_file

|

new_file_content_str = file.head_file

|

||||||

patch = file.patch

|

patch = file.patch

|

||||||

patch = load_large_diff(file, new_file_content_str, original_file_content_str, patch)

|

|

||||||

if not patch:

|

if not patch:

|

||||||

continue

|

continue

|

||||||

|

|

||||||

@@ -176,7 +186,7 @@ def pr_generate_compressed_diff(top_langs: list, token_handler: TokenHandler, mo

|

|||||||

# Current logic is to skip the patch if it's too large

|

# Current logic is to skip the patch if it's too large

|

||||||

# TODO: Option for alternative logic to remove hunks from the patch to reduce the number of tokens

|

# TODO: Option for alternative logic to remove hunks from the patch to reduce the number of tokens

|

||||||

# until we meet the requirements

|

# until we meet the requirements

|

||||||

if settings.config.verbosity_level >= 2:

|

if get_settings().config.verbosity_level >= 2:

|

||||||

logging.warning(f"Patch too large, minimizing it, {file.filename}")

|

logging.warning(f"Patch too large, minimizing it, {file.filename}")

|

||||||

if not modified_files_list:

|

if not modified_files_list:

|

||||||

total_tokens += token_handler.count_tokens(MORE_MODIFIED_FILES_)

|

total_tokens += token_handler.count_tokens(MORE_MODIFIED_FILES_)

|

||||||

@@ -191,15 +201,15 @@ def pr_generate_compressed_diff(top_langs: list, token_handler: TokenHandler, mo

|

|||||||

patch_final = patch

|

patch_final = patch

|

||||||

patches.append(patch_final)

|

patches.append(patch_final)

|

||||||

total_tokens += token_handler.count_tokens(patch_final)

|

total_tokens += token_handler.count_tokens(patch_final)

|

||||||

if settings.config.verbosity_level >= 2:

|

if get_settings().config.verbosity_level >= 2:

|

||||||

logging.info(f"Tokens: {total_tokens}, last filename: {file.filename}")

|

logging.info(f"Tokens: {total_tokens}, last filename: {file.filename}")

|

||||||

|

|

||||||

return patches, modified_files_list, deleted_files_list

|

return patches, modified_files_list, deleted_files_list

|

||||||

|

|

||||||

|

|

||||||

async def retry_with_fallback_models(f: Callable):

|

async def retry_with_fallback_models(f: Callable):

|

||||||

model = settings.config.model

|

model = get_settings().config.model

|

||||||

fallback_models = settings.config.fallback_models

|

fallback_models = get_settings().config.fallback_models

|

||||||

if not isinstance(fallback_models, list):

|

if not isinstance(fallback_models, list):

|

||||||

fallback_models = [fallback_models]

|

fallback_models = [fallback_models]

|

||||||

all_models = [model] + fallback_models

|

all_models = [model] + fallback_models

|

||||||

@@ -207,6 +217,97 @@ async def retry_with_fallback_models(f: Callable):

|

|||||||

try:

|

try:

|

||||||

return await f(model)

|

return await f(model)

|

||||||

except Exception as e:

|

except Exception as e:

|

||||||

logging.warning(f"Failed to generate prediction with {model}: {e}")

|

logging.warning(f"Failed to generate prediction with {model}: {traceback.format_exc()}")

|

||||||

if i == len(all_models) - 1: # If it's the last iteration

|

if i == len(all_models) - 1: # If it's the last iteration

|

||||||

raise # Re-raise the last exception

|

raise # Re-raise the last exception

|

||||||
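The fallback loop shown in the rows above (try the primary model, then each fallback, re-raise only after the last one fails) can be exercised on its own. The sketch below is a simplified illustration: `retry_with_fallback` is a hypothetical name, and it takes the model names as parameters instead of reading them from `get_settings()`.

```python
import asyncio
import logging
import traceback
from typing import Any, Awaitable, Callable, List, Union


async def retry_with_fallback(f: Callable[[str], Awaitable[Any]],
                              model: str,
                              fallback_models: Union[str, List[str]]) -> Any:
    """Call f with each model in order; re-raise only if the last one fails."""
    if not isinstance(fallback_models, list):
        fallback_models = [fallback_models]
    all_models = [model] + fallback_models
    for i, candidate in enumerate(all_models):
        try:
            return await f(candidate)
        except Exception:
            # Log the full traceback, as the new side of the diff does.
            logging.warning("Failed to generate prediction with %s: %s",
                            candidate, traceback.format_exc())
            if i == len(all_models) - 1:  # last model: give up
                raise
```

A coroutine function that accepts a model name can be passed as `f`, for example `asyncio.run(retry_with_fallback(predict, "gpt-4", ["gpt-4-32k"]))`, where `predict` is any such coroutine function.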

|

|

||||||

|

|

||||||

|

def find_line_number_of_relevant_line_in_file(diff_files: List[FilePatchInfo],

|

||||||

|

relevant_file: str,

|

||||||

|

relevant_line_in_file: str) -> Tuple[int, int]:

|

||||||

|

"""

|

||||||

|

Find the line number and absolute position of a relevant line in a file.

|

||||||

|

|

||||||

|

Args:

|

||||||

|

diff_files (List[FilePatchInfo]): A list of FilePatchInfo objects representing the patches of files.

|

||||||

|

relevant_file (str): The name of the file where the relevant line is located.

|

||||||

|

relevant_line_in_file (str): The content of the relevant line.

|

||||||

|

|

||||||

|

Returns:

|

||||||

|

Tuple[int, int]: A tuple containing the line number and absolute position of the relevant line in the file.

|

||||||

|

"""

|

||||||

|

position = -1

|

||||||

|

absolute_position = -1

|

||||||

|

re_hunk_header = re.compile(

|

||||||

|

r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@[ ]?(.*)")

|

||||||

|

|

||||||

|

for file in diff_files:

|

||||||

|

if file.filename.strip() == relevant_file:

|

||||||

|

patch = file.patch

|

||||||

|

patch_lines = patch.splitlines()

|

||||||

|

|

||||||

|

# try to find the line in the patch using difflib, with some margin of error

|

||||||

|

matches_difflib: list[str | Any] = difflib.get_close_matches(relevant_line_in_file,

|

||||||

|

patch_lines, n=3, cutoff=0.93)

|

||||||

|

if len(matches_difflib) == 1 and matches_difflib[0].startswith('+'):

|

||||||

|

relevant_line_in_file = matches_difflib[0]

|

||||||

|

|

||||||

|

delta = 0

|

||||||

|

start1, size1, start2, size2 = 0, 0, 0, 0

|

||||||

|

for i, line in enumerate(patch_lines):

|

||||||

|

if line.startswith('@@'):

|

||||||

|

delta = 0

|

||||||

|

match = re_hunk_header.match(line)

|

||||||

|

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

||||||

|

elif not line.startswith('-'):

|

||||||

|

delta += 1

|

||||||

|

|

||||||

|

if relevant_line_in_file in line and line[0] != '-':

|

||||||

|

position = i

|

||||||

|

absolute_position = start2 + delta - 1

|

||||||

|

break

|

||||||

|

|

||||||

|

if position == -1 and relevant_line_in_file[0] == '+':

|

||||||

|

no_plus_line = relevant_line_in_file[1:].lstrip()

|

||||||

|

for i, line in enumerate(patch_lines):

|

||||||

|

if line.startswith('@@'):

|

||||||

|

delta = 0

|

||||||

|

match = re_hunk_header.match(line)

|

||||||

|

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

||||||

|

elif not line.startswith('-'):

|

||||||

|

delta += 1

|

||||||

|

|

||||||

|

if no_plus_line in line and line[0] != '-':

|

||||||

|

# The model might add a '+' to the beginning of the relevant_line_in_file even if originally

|

||||||

|

# it's a context line

|

||||||

|

position = i

|

||||||

|

absolute_position = start2 + delta - 1

|

||||||

|

break

|

||||||

|

return position, absolute_position

|

||||||
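The first search pass of the function above can be condensed into a small runnable sketch: fuzzy-match the line with `difflib`, then walk the patch while tracking the offset inside the current hunk. This is an illustration under assumptions, not the project's code: `find_line_in_patch` is a hypothetical name, it works on a single patch string, it skips unparsable hunk headers, and it omits the second pass that strips a leading `+`.

```python
import difflib
import re
from typing import Tuple

RE_HUNK_HEADER = re.compile(
    r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@[ ]?(.*)")


def find_line_in_patch(patch: str, relevant_line: str) -> Tuple[int, int]:
    """Return (index in patch, line number in new file), or (-1, -1)."""
    patch_lines = patch.splitlines()
    # Tolerate small differences, but only trust a unique match on an added line.
    matches = difflib.get_close_matches(relevant_line, patch_lines, n=3, cutoff=0.93)
    if len(matches) == 1 and matches[0].startswith("+"):
        relevant_line = matches[0]

    position, absolute_position = -1, -1
    delta, start2 = 0, 0
    for i, line in enumerate(patch_lines):
        if line.startswith("@@"):
            delta = 0
            match = RE_HUNK_HEADER.match(line)
            if match:
                start2 = int(match.group(3))  # first new-file line of the hunk
        elif not line.startswith("-"):
            delta += 1  # context and added lines advance the new-file counter
        if relevant_line in line and not line.startswith("-"):
            position = i
            absolute_position = start2 + delta - 1
            break
    return position, absolute_position
```

The first element indexes into the patch text, while the second is the line number in the new version of the file.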

|

|

||||||

|

|

||||||

|

def clip_tokens(text: str, max_tokens: int) -> str:

|

||||||

|

"""

|

||||||

|

Clip the number of tokens in a string to a maximum number of tokens.

|

||||||

|

|

||||||

|

Args:

|

||||||

|

text (str): The string to clip.

|

||||||

|

max_tokens (int): The maximum number of tokens allowed in the string.

|