mirror of

https://github.com/qodo-ai/pr-agent.git

synced 2025-07-21 04:50:39 +08:00

Compare commits

386 Commits

feature/me

...

ok/gitlat_

| Author | SHA1 | Date | |

|---|---|---|---|

| d23daf880f | |||

| adb3f17258 | |||

| 2c03a67312 | |||

| 55eb741965 | |||

| c9c95d60d4 | |||

| cca809e91c | |||

| 57ff46ecc1 | |||

| 3819d52eb0 | |||

| 3072325d2c | |||

| abca2fdcb7 | |||

| 4d84f76948 | |||

| dd8f6eb923 | |||

| b9c25e487a | |||

| 1bf27c38a7 | |||

| 1f987380ed | |||

| cd8bbbf889 | |||

| 8e5498ee97 | |||

| 0412d7aca0 | |||

| 1eac3245d9 | |||

| cd51bef7f7 | |||

| e8aa33fa0b | |||

| 54b021b02c | |||

| 32151e3d9a | |||

| 32358678e6 | |||

| 42e32664a1 | |||

| 1e97236a15 | |||

| 321f7bce46 | |||

| 02a1d8dbfc | |||

| e34f9d8d1c | |||

| 35dac012bd | |||

| 21ced18f50 | |||

| fca78cf395 | |||

| d1b91b0ea3 | |||

| 76e00acbdb | |||

| 2f83e7738c | |||

| f4a226b0f7 | |||

| f5e2838fc3 | |||

| bbdfd2c3d4 | |||

| 74572e1768 | |||

| f0a17b863c | |||

| 86fd84e113 | |||

| d5b9be23d3 | |||

| 057bb3932f | |||

| 05f29cc406 | |||

| 63c4c7e584 | |||

| 1ea23cab96 | |||

| e99f9fd59f | |||

| fdf6a3e833 | |||

| 79cb94b4c2 | |||

| 9adec7cc10 | |||

| 1f0df47b4d | |||

| a71a12791b | |||

| 23fa834721 | |||

| 9f67d07156 | |||

| 6731a7643e | |||

| f87fdd88ad | |||

| f825f6b90a | |||

| f5d5008a24 | |||

| 0b63d4cde5 | |||

| 2e246869d0 | |||

| 2f9546e144 | |||

| 6134c2ff61 | |||

| 3cfbba74f8 | |||

| 050bb60671 | |||

| 12a7e1ce6e | |||

| cd0438005b | |||

| 7c3188ae06 | |||

| 6cd38a37cd | |||

| 12e51bb6aa | |||

| e2a4cd6b03 | |||

| 329e228aa2 | |||

| 3d5d517f2a | |||

| a2eb2e4dac | |||

| d89792d379 | |||

| 23ed2553c4 | |||

| fe29ce2911 | |||

| df25a3ede2 | |||

| 4c36fb4df2 | |||

| 67c61e0ac8 | |||

| 0985db4e36 | |||

| ee2c00abeb | |||

| 577f24d107 | |||

| fc24b34c2b | |||

| 1e962476da | |||

| 3326327572 | |||

| 36be79ea38 | |||

| 523839be7d | |||

| d1586ddd77 | |||

| 3420853923 | |||

| 1f373d7b0a | |||

| 7fdbd6a680 | |||

| 17b40a1fa1 | |||

| c47e74c5c7 | |||

| 7abbe08ff1 | |||

| 8038b6ab99 | |||

| 6e26ad0966 | |||

| 7e2449b228 | |||

| 97bfee47a3 | |||

| 3b27c834a4 | |||

| 5bc2ef1eff | |||

| 2f558006bf | |||

| 8868c92141 | |||

| 370520df51 | |||

| e17dd66dce | |||

| fc8494d696 | |||

| f8aea909b4 | |||

| 2e832b8fb4 | |||

| ccddbeccad | |||

| a47fa342cb | |||

| f73cddcb93 | |||

| 5f36f0d753 | |||

| dc4bf13d39 | |||

| bdf7eff7cd | |||

| dc67e6a66e | |||

| 6d91f44634 | |||

| 0396e10706 | |||

| 77f243b7ab | |||

| c507785475 | |||

| 5c5015b267 | |||

| 3efe08d619 | |||

| 2e36fce4eb | |||

| d6d4427545 | |||

| 5d45632247 | |||

| 90c045e3d0 | |||

| 7f0a96d8f7 | |||

| 8fb9affef3 | |||

| 6c42a471e1 | |||

| f2b74b6970 | |||

| ffd11aeffc | |||

| 05e4e09dfc | |||

| 13092118dc | |||

| 7d108992fc | |||

| e5a8ed205e | |||

| 90f97b0226 | |||

| 9e0f5f0ccc | |||

| 87ea0176b9 | |||

| 62f08f4ec4 | |||

| fe0058f25f | |||

| 6d2673f39d | |||

| b3a1d456b2 | |||

| f77a5f6929 | |||

| fdeae9c209 | |||

| a994ec1427 | |||

| e5259e2f5c | |||

| 978348240b | |||

| 4d92e7d9c2 | |||

| 6f1b418b25 | |||

| 51e08c3c2b | |||

| 4c29ff2db1 | |||

| 5fbaa4366f | |||

| aee08ebbfe | |||

| 6ad8df6be7 | |||

| 539edcad3c | |||

| b7172df700 | |||

| 768bd40ad8 | |||

| ea27c63f13 | |||

| c866288b0a | |||

| 8ae3c60670 | |||

| f8f415eb75 | |||

| 24583b05f7 | |||

| fa421fd169 | |||

| e0ae5c945e | |||

| 865888e4e8 | |||

| 3b7cfe7bc5 | |||

| 262f9dddbc | |||

| fa706b6e96 | |||

| ff51ab0946 | |||

| 7884aa2348 | |||

| 8f3520807c | |||

| fa90b242e3 | |||

| 2dfd34bd61 | |||

| 48f569bef0 | |||

| a20fb9cc0c | |||

| c58e1f90e7 | |||

| d363f148f0 | |||

| cbf96a2e67 | |||

| 4d87c3ec6a | |||

| c13c52d733 | |||

| dbf8142fe0 | |||

| bacf6c96c2 | |||

| c9d49da8f7 | |||

| 7b22edac60 | |||

| fc309f69b9 | |||

| 7efb5cf74e | |||

| 8e200197c5 | |||

| fe98f67e08 | |||

| 0b1edd9716 | |||

| e638dc075c | |||

| 559b160886 | |||

| 571b8769ac | |||

| e4bd2148ce | |||

| 1637bd8774 | |||

| ce33582d3d | |||

| bc6b592fd9 | |||

| 24ae6b966f | |||

| f4de3d2899 | |||

| 4cacb07ec2 | |||

| 2371a9b041 | |||

| 5b7403ae80 | |||

| e979b8643d | |||

| 05b4f167a3 | |||

| 2c4245e023 | |||

| d54ee252ee | |||

| 85eec0b98c | |||

| 41a988d99a | |||

| 448da3d481 | |||

| b030299547 | |||

| 5bdbfda1e2 | |||

| 047cfb21f3 | |||

| 35a2497a38 | |||

| 99630f83c2 | |||

| 1757f2707c | |||

| 66c44d715c | |||

| 8f7855013a | |||

| e200be4e57 | |||

| d0b734bc91 | |||

| 399d5c5c5d | |||

| 1b88049cb0 | |||

| 0304bf05c1 | |||

| 94173cbb06 | |||

| 75447280e4 | |||

| 5edff8b7e4 | |||

| 487351d343 | |||

| 93311a9d9b | |||

| 704030230f | |||

| 60bce8f049 | |||

| e394cb7ddb | |||

| a0e4fb01af | |||

| eb9190efa1 | |||

| 8cc37d6f59 | |||

| 6cc9fe3d06 | |||

| 0acf423450 | |||

| 7958786b4c | |||

| 719f3a9dd8 | |||

| 71efd84113 | |||

| 25e46a99fd | |||

| 2531849b73 | |||

| 19f11f99ce | |||

| 87f978e816 | |||

| 7488eb8c9e | |||

| b3e79ed677 | |||

| 5d2fe07bf7 | |||

| 84bf95e9ab | |||

| 4f4989af8c | |||

| 0a4a604c28 | |||

| 23a249ccdb | |||

| 4a6bf4c55a | |||

| 3f75b14ba3 | |||

| ae9cedd50d | |||

| ae63833043 | |||

| da6828ad87 | |||

| ea1cd7ae45 | |||

| 1c1aad2806 | |||

| f466d79031 | |||

| e2323dfb9f | |||

| e51e443adc | |||

| f6d4a214ca | |||

| 4bb46d9faa | |||

| f337d76af6 | |||

| 4e59693c76 | |||

| 4033303c1f | |||

| 38c8d187d2 | |||

| f8ddfd2f25 | |||

| 4b4fda37a6 | |||

| 9ca6b789a7 | |||

| 0f73f5f906 | |||

| 055a8ea859 | |||

| 5742a9be1e | |||

| 914cc6639a | |||

| f34cda126a | |||

| dece20c984 | |||

| 94c1f430af | |||

| 9fadde388b | |||

| d1b6b3bc95 | |||

| f57d58ee7d | |||

| 77a451ada0 | |||

| 4b8420aa16 | |||

| 25bc69f70e | |||

| e2faf117c5 | |||

| aaff03bb60 | |||

| cd1e62ec96 | |||

| 7767cae181 | |||

| 1bc206e7b2 | |||

| 52a438b3c8 | |||

| b8a71b369d | |||

| 72af2a1f9c | |||

| fd4a2bf7ff | |||

| a3211d4958 | |||

| 86d7ed5f82 | |||

| 210d94f2aa | |||

| b2d952cafa | |||

| 6eacf4791d | |||

| 4076f67ab8 | |||

| c2639a2520 | |||

| 38db65831e | |||

| e1b856f7e6 | |||

| 5fdc9223e9 | |||

| 301622216f | |||

| 973cb2de1c | |||

| b63db6cef0 | |||

| 8fba670bda | |||

| ca47833c56 | |||

| 567475c18c | |||

| fb4badd160 | |||

| 9695d96799 | |||

| 0930f76cb7 | |||

| 365559405f | |||

| d4adcb3c22 | |||

| 75167c2700 | |||

| 78f5f58774 | |||

| 81a2e5cbe2 | |||

| e63a4f47ce | |||

| caff65613f | |||

| ee3cac9836 | |||

| 8b3ff7a632 | |||

| 7d49e080fc | |||

| 1a94079936 | |||

| 7ed12c2f8e | |||

| ed8cf27b05 | |||

| 4b786b350e | |||

| 110d987514 | |||

| cc5e01cec5 | |||

| 620bf68d25 | |||

| 86e5a30a36 | |||

| 6c10f78c31 | |||

| 46922d2842 | |||

| 55ab198bb2 | |||

| 0c7f048e58 | |||

| efc8f755d5 | |||

| aebcb3f3c6 | |||

| c8d369ee61 | |||

| 1cedd13cf3 | |||

| b7cd368cce | |||

| 6ef5843380 | |||

| c5f2abb548 | |||

| bfdff08cb8 | |||

| ffa4ce3f1e | |||

| f1380df468 | |||

| 2de83827b6 | |||

| 2c4c7c485e | |||

| f3df032f06 | |||

| 9e96fbab1f | |||

| e15559011d | |||

| 2434240f08 | |||

| d3936122ec | |||

| c75f561701 | |||

| f1ab6ec88f | |||

| f293717827 | |||

| 270912d41e | |||

| d9bd73646c | |||

| 933f2ca093 | |||

| 4331610e01 | |||

| d04c0f490c | |||

| f7c703751f | |||

| 13101df811 | |||

| 1eab6a8479 | |||

| 64cb5da821 | |||

| 6648c04799 | |||

| 24697d613b | |||

| f6f4d32edb | |||

| 938a8a7c7d | |||

| deda4baa87 | |||

| 30248c2a7b | |||

| c2e3bf7b70 | |||

| e5e90e35e5 | |||

| 3e445c7e03 | |||

| 53e7ff62bf | |||

| 1eea60c6a5 | |||

| d0c544e650 | |||

| 28249924fd | |||

| a2d8695ca4 | |||

| 259fa84eeb | |||

| ff720d32fe | |||

| 399d7b7990 | |||

| 74dfae8dbe | |||

| 71b077faf8 | |||

| b6333e7f20 | |||

| e53ae712f9 | |||

| 542c4599ba | |||

| 795f6ab8d5 | |||

| e3b2469e0f | |||

| 0ebd29d398 | |||

| 1a626fb1f3 | |||

| 0ce42e786e | |||

| 84231f99dc | |||

| 70b7acee15 |

@ -1 +1,3 @@

|

||||

venv/

|

||||

pr_agent/settings/.secrets.toml

|

||||

pics/

|

||||

16

.github/workflows/review.yaml

vendored

Normal file

16

.github/workflows/review.yaml

vendored

Normal file

@ -0,0 +1,16 @@

|

||||

on:

|

||||

pull_request:

|

||||

issue_comment:

|

||||

jobs:

|

||||

pr_agent_job:

|

||||

runs-on: ubuntu-latest

|

||||

name: Run pr agent on every pull request

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: Codium-ai/pr-agent@main

|

||||

env:

|

||||

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||

OPENAI_ORG: ${{ secrets.OPENAI_ORG }} # optional

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

|

||||

19

CONFIGURATION.md

Normal file

19

CONFIGURATION.md

Normal file

@ -0,0 +1,19 @@

|

||||

## Configuration

|

||||

|

||||

The different tools and sub-tools used by CodiumAI pr-agent are easily configurable via the configuration file: `/pr-agent/settings/configuration.toml`.

|

||||

##### Git Provider:

|

||||

You can select your git_provider with the flag `git_provider` in the `config` section

|

||||

|

||||

##### PR Reviewer:

|

||||

|

||||

You can enable/disable the different PR Reviewer abilities with the following flags (`pr_reviewer` section):

|

||||

```

|

||||

require_focused_review=true

|

||||

require_score_review=true

|

||||

require_tests_review=true

|

||||

require_security_review=true

|

||||

```

|

||||

You can contol the number of suggestions returned by the PR Reviewer with the following flag:

|

||||

```inline_code_comments=3```

|

||||

And enable/disable the inline code suggestions with the following flag:

|

||||

```inline_code_comments=true```

|

||||

10

Dockerfile.github_action

Normal file

10

Dockerfile.github_action

Normal file

@ -0,0 +1,10 @@

|

||||

FROM python:3.10 as base

|

||||

|

||||

WORKDIR /app

|

||||

ADD requirements.txt .

|

||||

RUN pip install -r requirements.txt && rm requirements.txt

|

||||

ENV PYTHONPATH=/app

|

||||

ADD pr_agent pr_agent

|

||||

ADD github_action/entrypoint.sh /

|

||||

RUN chmod +x /entrypoint.sh

|

||||

ENTRYPOINT ["/entrypoint.sh"]

|

||||

1

Dockerfile.github_action_dockerhub

Normal file

1

Dockerfile.github_action_dockerhub

Normal file

@ -0,0 +1 @@

|

||||

FROM codiumai/pr-agent:github_action

|

||||

218

INSTALL.md

Normal file

218

INSTALL.md

Normal file

@ -0,0 +1,218 @@

|

||||

|

||||

## Installation

|

||||

|

||||

---

|

||||

|

||||

#### Method 1: Use Docker image (no installation required)

|

||||

|

||||

To request a review for a PR, or ask a question about a PR, you can run directly from the Docker image. Here's how:

|

||||

|

||||

1. To request a review for a PR, run the following command:

|

||||

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr_url> review

|

||||

```

|

||||

|

||||

2. To ask a question about a PR, run the following command:

|

||||

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr_url> ask "<your question>"

|

||||

```

|

||||

|

||||

Possible questions you can ask include:

|

||||

|

||||

- What is the main theme of this PR?

|

||||

- Is the PR ready for merge?

|

||||

- What are the main changes in this PR?

|

||||

- Should this PR be split into smaller parts?

|

||||

- Can you compose a rhymed song about this PR?

|

||||

|

||||

---

|

||||

|

||||

#### Method 2: Run as a GitHub Action

|

||||

|

||||

You can use our pre-built Github Action Docker image to run PR-Agent as a Github Action.

|

||||

|

||||

1. Add the following file to your repository under `.github/workflows/pr_agent.yml`:

|

||||

|

||||

```yaml

|

||||

on:

|

||||

pull_request:

|

||||

issue_comment:

|

||||

jobs:

|

||||

pr_agent_job:

|

||||

runs-on: ubuntu-latest

|

||||

name: Run pr agent on every pull request, respond to user comments

|

||||

steps:

|

||||

- name: PR Agent action step

|

||||

id: pragent

|

||||

uses: Codium-ai/pr-agent@main

|

||||

env:

|

||||

OPENAI_KEY: ${{ secrets.OPENAI_KEY }}

|

||||

GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}

|

||||

```

|

||||

|

||||

2. Add the following secret to your repository under `Settings > Secrets`:

|

||||

|

||||

```

|

||||

OPENAI_KEY: <your key>

|

||||

```

|

||||

|

||||

The GITHUB_TOKEN secret is automatically created by GitHub.

|

||||

|

||||

3. Merge this change to your main branch.

|

||||

When you open your next PR, you should see a comment from `github-actions` bot with a review of your PR, and instructions on how to use the rest of the tools.

|

||||

|

||||

4. You may configure PR-Agent by adding environment variables under the env section corresponding to any configurable property in the [configuration](./CONFIGURATION.md) file. Some examples:

|

||||

```yaml

|

||||

env:

|

||||

# ... previous environment values

|

||||

OPENAI.ORG: "<Your organization name under your OpenAI account>"

|

||||

PR_REVIEWER.REQUIRE_TESTS_REVIEW: "false" # Disable tests review

|

||||

PR_CODE_SUGGESTIONS.NUM_CODE_SUGGESTIONS: 6 # Increase number of code suggestions

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

#### Method 3: Run from source

|

||||

|

||||

1. Clone this repository:

|

||||

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

2. Install the requirements in your favorite virtual environment:

|

||||

|

||||

```

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

3. Copy the secrets template file and fill in your OpenAI key and your GitHub user token:

|

||||

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||

# Edit .secrets.toml file

|

||||

```

|

||||

|

||||

4. Add the pr_agent folder to your PYTHONPATH, then run the cli.py script:

|

||||

|

||||

```

|

||||

export PYTHONPATH=[$PYTHONPATH:]<PATH to pr_agent folder>

|

||||

python pr_agent/cli.py --pr_url <pr_url> review

|

||||

python pr_agent/cli.py --pr_url <pr_url> ask <your question>

|

||||

python pr_agent/cli.py --pr_url <pr_url> describe

|

||||

python pr_agent/cli.py --pr_url <pr_url> improve

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

#### Method 4: Run as a polling server

|

||||

Request reviews by tagging your Github user on a PR

|

||||

|

||||

Follow steps 1-3 of method 2.

|

||||

Run the following command to start the server:

|

||||

|

||||

```

|

||||

python pr_agent/servers/github_polling.py

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

#### Method 5: Run as a GitHub App

|

||||

Allowing you to automate the review process on your private or public repositories.

|

||||

|

||||

1. Create a GitHub App from the [Github Developer Portal](https://docs.github.com/en/developers/apps/creating-a-github-app).

|

||||

|

||||

- Set the following permissions:

|

||||

- Pull requests: Read & write

|

||||

- Issue comment: Read & write

|

||||

- Metadata: Read-only

|

||||

- Set the following events:

|

||||

- Issue comment

|

||||

- Pull request

|

||||

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

|

||||

```

|

||||

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

```

|

||||

|

||||

3. Acquire the following pieces of information from your app's settings page:

|

||||

|

||||

- App private key (click "Generate a private key" and save the file)

|

||||

- App ID

|

||||

|

||||

4. Clone this repository:

|

||||

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

5. Copy the secrets template file and fill in the following:

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets.toml

|

||||

# Edit .secrets.toml file

|

||||

```

|

||||

- Your OpenAI key.

|

||||

- Copy your app's private key to the private_key field.

|

||||

- Copy your app's ID to the app_id field.

|

||||

- Copy your app's webhook secret to the webhook_secret field.

|

||||

- Set deployment_type to 'app' in [configuration.toml](./pr_agent/settings/configuration.toml)

|

||||

|

||||

> The .secrets.toml file is not copied to the Docker image by default, and is only used for local development.

|

||||

> If you want to use the .secrets.toml file in your Docker image, you can add remove it from the .dockerignore file.

|

||||

> In most production environments, you would inject the secrets file as environment variables or as mounted volumes.

|

||||

> For example, in order to inject a secrets file as a volume in a Kubernetes environment you can update your pod spec to include the following,

|

||||

> assuming you have a secret named `pr-agent-settings` with a key named `.secrets.toml`:

|

||||

```

|

||||

volumes:

|

||||

- name: settings-volume

|

||||

secret:

|

||||

secretName: pr-agent-settings

|

||||

// ...

|

||||

containers:

|

||||

// ...

|

||||

volumeMounts:

|

||||

- mountPath: /app/pr_agent/settings_prod

|

||||

name: settings-volume

|

||||

```

|

||||

|

||||

> Another option is to set the secrets as environment variables in your deployment environment, for example `OPENAI.KEY` and `GITHUB.USER_TOKEN`.

|

||||

|

||||

6. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||

|

||||

```

|

||||

docker build . -t codiumai/pr-agent:github_app --target github_app -f docker/Dockerfile

|

||||

docker push codiumai/pr-agent:github_app # Push to your Docker repository

|

||||

```

|

||||

|

||||

7. Host the app using a server, serverless function, or container environment. Alternatively, for development and

|

||||

debugging, you may use tools like smee.io to forward webhooks to your local machine.

|

||||

You can check [Deploy as a Lambda Function](#deploy-as-a-lambda-function)

|

||||

|

||||

8. Go back to your app's settings, and set the following:

|

||||

|

||||

- Webhook URL: The URL of your app's server or the URL of the smee.io channel.

|

||||

- Webhook secret: The secret you generated earlier.

|

||||

|

||||

9. Install the app by navigating to the "Install App" tab and selecting your desired repositories.

|

||||

|

||||

---

|

||||

|

||||

#### Deploy as a Lambda Function

|

||||

|

||||

1. Follow steps 1-5 of [Method 5](#method-5-run-as-a-github-app).

|

||||

2. Build a docker image that can be used as a lambda function

|

||||

```shell

|

||||

docker buildx build --platform=linux/amd64 . -t codiumai/pr-agent:serverless -f docker/Dockerfile.lambda

|

||||

```

|

||||

3. Push image to ECR

|

||||

```shell

|

||||

docker tag codiumai/pr-agent:serverless <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||

docker push <AWS_ACCOUNT>.dkr.ecr.<AWS_REGION>.amazonaws.com/codiumai/pr-agent:serverless

|

||||

```

|

||||

4. Create a lambda function that uses the uploaded image. Set the lambda timeout to be at least 3m.

|

||||

5. Configure the lambda function to have a Function URL.

|

||||

6. Go back to steps 8-9 of [Method 5](#method-5-run-as-a-github-app) with the function url as your Webhook URL.

|

||||

The Webhook URL would look like `https://<LAMBDA_FUNCTION_URL>/api/v1/github_webhooks`

|

||||

42

PR_COMPRESSION.md

Normal file

42

PR_COMPRESSION.md

Normal file

@ -0,0 +1,42 @@

|

||||

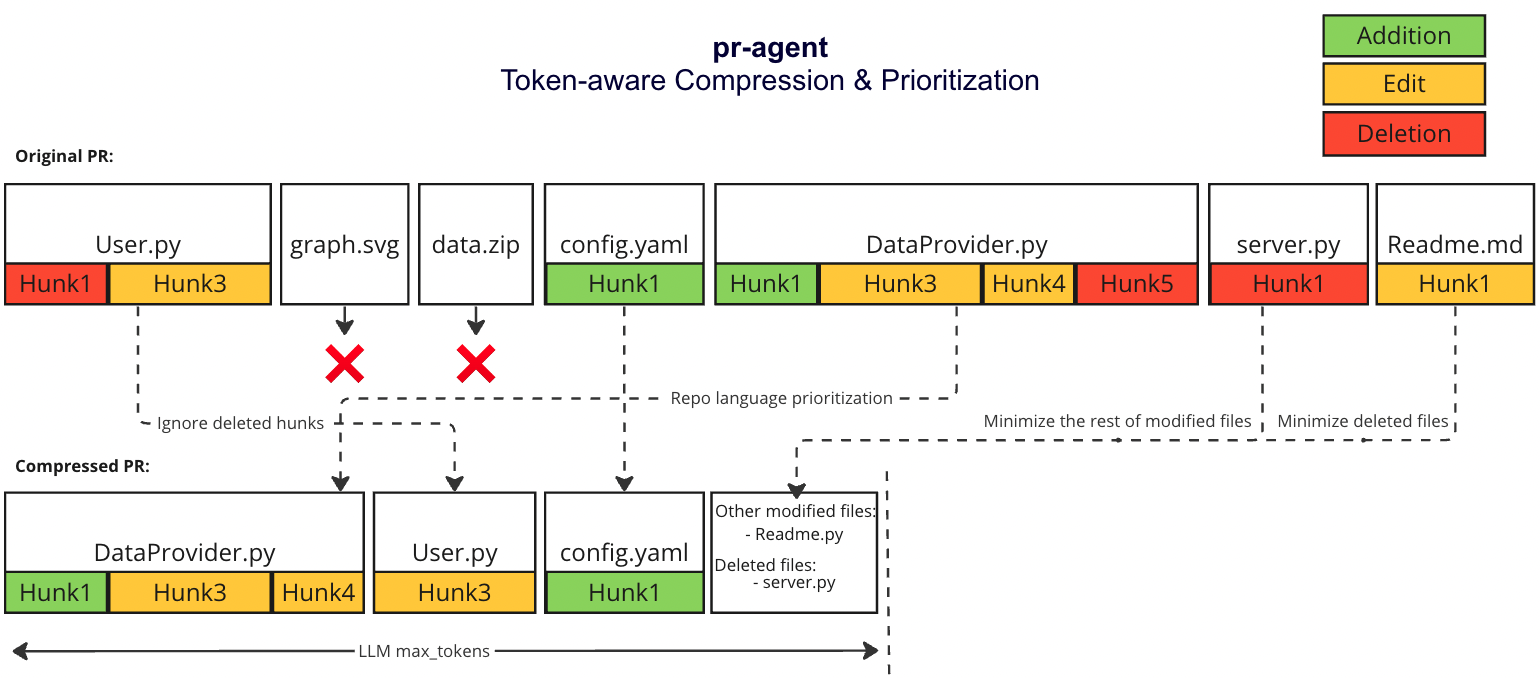

# Git Patch Logic

|

||||

There are two scenarios:

|

||||

1. The PR is small enough to fit in a single prompt (including system and user prompt)

|

||||

2. The PR is too large to fit in a single prompt (including system and user prompt)

|

||||

|

||||

For both scenarios, we first use the following strategy

|

||||

#### Repo language prioritization strategy

|

||||

|

||||

We prioritize the languages of the repo based on the following criteria:

|

||||

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

||||

2. Given the main languages used in the repo

|

||||

2. We sort the PR files by the most common languages in the repo (in descending order):

|

||||

* ```[[file.py, file2.py],[file3.js, file4.jsx],[readme.md]]```

|

||||

|

||||

|

||||

## Small PR

|

||||

In this case, we can fit the entire PR in a single prompt:

|

||||

1. Exclude binary files and non code files (e.g. images, pdfs, etc)

|

||||

2. We Expand the surrounding context of each patch to 6 lines above and below the patch

|

||||

## Large PR

|

||||

|

||||

### Motivation

|

||||

Pull Requests can be very long and contain a lot of information with varying degree of relevance to the pr-agent.

|

||||

We want to be able to pack as much information as possible in a single LMM prompt, while keeping the information relevant to the pr-agent.

|

||||

|

||||

|

||||

|

||||

#### PR compression strategy

|

||||

We prioritize additions over deletions:

|

||||

- Combine all deleted files into a single list (`deleted files`)

|

||||

- File patches are a list of hunks, remove all hunks of type deletion-only from the hunks in the file patch

|

||||

#### Adaptive and token-aware file patch fitting

|

||||

We use [tiktoken](https://github.com/openai/tiktoken) to tokenize the patches after the modifications described above, and we use the following strategy to fit the patches into the prompt:

|

||||

1. Withing each language we sort the files by the number of tokens in the file (in descending order):

|

||||

* ```[[file2.py, file.py],[file4.jsx, file3.js],[readme.md]]```

|

||||

2. Iterate through the patches in the order described above

|

||||

2. Add the patches to the prompt until the prompt reaches a certain buffer from the max token length

|

||||

3. If there are still patches left, add the remaining patches as a list called `other modified files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

||||

4. If we haven't reached the max token length, add the `deleted files` to the prompt until the prompt reaches the max token length (hard stop), skip the rest of the patches.

|

||||

|

||||

### Example

|

||||

|

||||

376

README.md

376

README.md

@ -1,277 +1,171 @@

|

||||

<div align="center">

|

||||

|

||||

# 🛡️ CodiumAI PR-Agent

|

||||

<div align="center">

|

||||

|

||||

<img src="./pics/logo-dark.png#gh-dark-mode-only" width="330"/>

|

||||

<img src="./pics/logo-light.png#gh-light-mode-only" width="330"/><br/>

|

||||

Making pull requests less painful with an AI agent

|

||||

</div>

|

||||

|

||||

[](https://github.com/Codium-ai/pr-agent/blob/main/LICENSE)

|

||||

[](https://discord.com/channels/1057273017547378788/1126104260430528613)

|

||||

<a href="https://github.com/Codium-ai/pr-agent/commits/main">

|

||||

<img alt="GitHub" src="https://img.shields.io/github/last-commit/Codium-ai/pr-agent/main?style=for-the-badge" height="20">

|

||||

</a>

|

||||

</div>

|

||||

<div style="text-align:left;">

|

||||

|

||||

CodiumAI `PR-Agent` is an open-source tool that helps developers review PRs faster and more efficiently.

|

||||

It automatically analyzes the PR, and provides feedback and suggestions, and can answer questions.

|

||||

It is powered by GPT-4, and is based on the [CodiumAI](https://github.com/Codium-ai/) platform.

|

||||

CodiumAI `PR-Agent` is an open-source tool aiming to help developers review pull requests faster and more efficiently. It automatically analyzes the pull request and can provide several types of feedback:

|

||||

|

||||

**Auto-Description**: Automatically generating PR description - title, type, summary, code walkthrough and PR labels.

|

||||

\

|

||||

**PR Review**: Adjustable feedback about the PR main theme, type, relevant tests, security issues, focus, score, and various suggestions for the PR content.

|

||||

\

|

||||

**Question Answering**: Answering free-text questions about the PR.

|

||||

\

|

||||

**Code Suggestion**: Committable code suggestions for improving the PR.

|

||||

|

||||

<h3>Example results:</h2>

|

||||

</div>

|

||||

<h4>/describe:</h4>

|

||||

<div align="center">

|

||||

<p float="center">

|

||||

<img src="https://www.codium.ai/images/describe-2.gif" width="800">

|

||||

</p>

|

||||

</div>

|

||||

<h4>/review:</h4>

|

||||

<div align="center">

|

||||

<p float="center">

|

||||

<img src="https://www.codium.ai/images/review-2.gif" width="800">

|

||||

</p>

|

||||

</div>

|

||||

<h4>/reflect_and_review:</h4>

|

||||

<div align="center">

|

||||

<p float="center">

|

||||

<img src="https://www.codium.ai/images/reflect_and_review.gif" width="800">

|

||||

</p>

|

||||

</div>

|

||||

<h4>/ask:</h4>

|

||||

<div align="center">

|

||||

<p float="center">

|

||||

<img src="https://www.codium.ai/images/ask-2.gif" width="800">

|

||||

</p>

|

||||

</div>

|

||||

<h4>/improve:</h4>

|

||||

<div align="center">

|

||||

<p float="center">

|

||||

<img src="https://www.codium.ai/images/improve-2.gif" width="800">

|

||||

</p>

|

||||

</div>

|

||||

<div align="left">

|

||||

|

||||

|

||||

- [Overview](#overview)

|

||||

- [Try it now](#try-it-now)

|

||||

- [Installation](#installation)

|

||||

- [Usage and tools](#usage-and-tools)

|

||||

- [Configuration](./CONFIGURATION.md)

|

||||

- [How it works](#how-it-works)

|

||||

- [Roadmap](#roadmap)

|

||||

- [Similar projects](#similar-projects)

|

||||

</div>

|

||||

|

||||

|

||||

## Overview

|

||||

`PR-Agent` offers extensive pull request functionalities across various git providers:

|

||||

| | | GitHub | Gitlab | Bitbucket |

|

||||

|-------|---------------------------------------------|:------:|:------:|:---------:|

|

||||

| TOOLS | Review | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||

| | ⮑ Inline review | :white_check_mark: | :white_check_mark: | |

|

||||

| | Ask | :white_check_mark: | :white_check_mark: | |

|

||||

| | Auto-Description | :white_check_mark: | :white_check_mark: | |

|

||||

| | Improve Code | :white_check_mark: | :white_check_mark: | |

|

||||

| | Reflect and Review | :white_check_mark: | | |

|

||||

| | | | | |

|

||||

| USAGE | CLI | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||

| | App / webhook | :white_check_mark: | :white_check_mark: | |

|

||||

| | Tagging bot | :white_check_mark: | | |

|

||||

| | Actions | :white_check_mark: | | |

|

||||

| | | | | |

|

||||

| CORE | PR compression | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||

| | Repo language prioritization | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||

| | Adaptive and token-aware<br />file patch fitting | :white_check_mark: | :white_check_mark: | :white_check_mark: |

|

||||

| | Incremental PR Review | :white_check_mark: | | |

|

||||

|

||||

* [Quickstart](#Quickstart)

|

||||

* [Configuration](#Configuration)

|

||||

* [Usage and Tools](#usage-and-tools)

|

||||

* [Roadmap](#roadmap)

|

||||

* [Similar projects](#similar-projects)

|

||||

Examples for invoking the different tools via the CLI:

|

||||

- **Review**: python cli.py --pr-url=<pr_url> review

|

||||

- **Describe**: python cli.py --pr-url=<pr_url> describe

|

||||

- **Improve**: python cli.py --pr-url=<pr_url> improve

|

||||

- **Ask**: python cli.py --pr-url=<pr_url> ask "Write me a poem about this PR"

|

||||

- **Reflect**: python cli.py --pr-url=<pr_url> reflect

|

||||

|

||||

"<pr_url>" is the url of the relevant PR (for example: https://github.com/Codium-ai/pr-agent/pull/50).

|

||||

|

||||

## Quickstart

|

||||

In the [configuration](./CONFIGURATION.md) file you can select your git provider (GitHub, Gitlab, Bitbucket), and further configure the different tools.

|

||||

|

||||

## Try it now

|

||||

|

||||

Try GPT-4 powered PR-Agent on your public GitHub repository for free. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment! The agent will generate a response based on your command.

|

||||

|

||||

|

||||

|

||||

To set up your own PR-Agent, see the [Installation](#installation) section

|

||||

|

||||

---

|

||||

|

||||

## Installation

|

||||

|

||||

To get started with PR-Agent quickly, you first need to acquire two tokens:

|

||||

|

||||

1. An OpenAI key from [here](https://platform.openai.com/), with access to GPT-4.

|

||||

2. A GitHub personal access token (classic) with the repo scope.

|

||||

|

||||

There are several ways to use PR-Agent. Let's start with the simplest one:

|

||||

There are several ways to use PR-Agent:

|

||||

|

||||

---

|

||||

|

||||

### Method 1: Use Docker image (no installation required)

|

||||

|

||||

To request a review for a PR, or ask a question about a PR, you can run the appropriate

|

||||

Python scripts from the scripts folder. Here's how:

|

||||

|

||||

1. To request a review for a PR, run the following command:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr url>

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

2. To ask a question about a PR, run the following command:

|

||||

```

|

||||

docker run --rm -it -e OPENAI.KEY=<your key> -e GITHUB.USER_TOKEN=<your token> codiumai/pr-agent --pr_url <pr url> --question "<your question>"

|

||||

```

|

||||

|

||||

Possible questions you can ask include:

|

||||

- What is the main theme of this PR?

|

||||

- Is the PR ready for merge?

|

||||

- What are the main changes in this PR?

|

||||

- Should this PR be split into smaller parts?

|

||||

- Can you compose a rhymed song about this PR.

|

||||

|

||||

---

|

||||

|

||||

### Method 2: Run from source

|

||||

|

||||

1. Clone this repository:

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

2. Install the requirements in your favorite virtual environment:

|

||||

```

|

||||

pip install -r requirements.txt

|

||||

```

|

||||

|

||||

3. Copy the secrets template file and fill in your OpenAI key and your GitHub user token:

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets

|

||||

# Edit .secrets file

|

||||

```

|

||||

|

||||

4. Run the appropriate Python scripts from the scripts folder:

|

||||

```

|

||||

python pr_agent/cli.py --pr_url <pr url>

|

||||

python pr_agent/cli.py --pr_url <pr url> --question "<your question>"

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### Method 3: Method 3: Run as a polling server; request reviews by tagging your Github user on a PR

|

||||

|

||||

Follow steps 1-3 of method 2.

|

||||

Run the following command to start the server:

|

||||

```

|

||||

python pr_agent/servers/github_polling.py

|

||||

```

|

||||

|

||||

---

|

||||

|

||||

### Method 4: Run as a Github App, allowing you to automate the review process on your private or public repositories.

|

||||

|

||||

1. Create a GitHub App from the [Github Developer Portal](https://docs.github.com/en/developers/apps/creating-a-github-app).

|

||||

- Set the following permissions:

|

||||

- Pull requests: Read & write

|

||||

- Issue comment: Read & write

|

||||

- Metadata: Read-only

|

||||

- Set the following events:

|

||||

- Issue comment

|

||||

- Pull request

|

||||

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

```

|

||||

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

```

|

||||

|

||||

3. Acquire the following pieces of information from your app's settings page:

|

||||

- App private key (click "Generate a private key", and save the file)

|

||||

- App ID

|

||||

|

||||

4. Clone this repository:

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

```

|

||||

|

||||

5. Copy the secrets template file and fill in the following:

|

||||

- Your OpenAI key.

|

||||

- Set deployment_type to 'app'

|

||||

- Copy your app's private key to the private_key field.

|

||||

- Copy your app's ID to the app_id field.

|

||||

- Copy your app's webhook secret to the webhook_secret field.

|

||||

```

|

||||

cp pr_agent/settings/.secrets_template.toml pr_agent/settings/.secrets

|

||||

# Edit .secrets file

|

||||

```

|

||||

|

||||

6. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||

```

|

||||

docker build . -t codiumai/pr-agent:github_app --target github_app -f docker/Dockerfile

|

||||

docker push codiumai/pr-agent:github_app # Push to your Docker repository

|

||||

```

|

||||

|

||||

7. Host the app using a server, serverless function, or container environment. Alternatively, for development and

|

||||

debugging, you may use tools like smee.io to forward webhooks to your local machine.

|

||||

|

||||

8. Go back to your app's settings, set the following:

|

||||

- Webhook URL: The URL of your app's server, or the URL of the smee.io channel.

|

||||

- Webhook secret: The secret you generated earlier.

|

||||

|

||||

9. Install the app by navigating to the "Install App" tab, and selecting your desired repositories.

|

||||

|

||||

---

|

||||

- [Method 1: Use Docker image (no installation required)](INSTALL.md#method-1-use-docker-image-no-installation-required)

|

||||

- [Method 2: Run as a GitHub Action](INSTALL.md#method-2-run-as-a-github-action)

|

||||

- [Method 3: Run from source](INSTALL.md#method-3-run-from-source)

|

||||

- [Method 4: Run as a polling server](INSTALL.md#method-4-run-as-a-polling-server)

|

||||

- Request reviews by tagging your GitHub user on a PR

|

||||

- [Method 5: Run as a GitHub App](INSTALL.md#method-5-run-as-a-github-app)

|

||||

- Allowing you to automate the review process on your private or public repositories

|

||||

|

||||

## Usage and Tools

|

||||

CodiumAI PR-Agent provides two types of interactions ("tools"): `"PR Reviewer"` and `"PR Q&A"`.

|

||||

- The "PR Reviewer" tool automatically analyzes PRs, and provides different types of feedbacks.

|

||||

|

||||

**PR-Agent** provides five types of interactions ("tools"): `"PR Reviewer"`, `"PR Q&A"`, `"PR Description"`, `"PR Code Sueggestions"` and `"PR Reflect and Review"`.

|

||||

|

||||

- The "PR Reviewer" tool automatically analyzes PRs, and provides various types of feedback.

|

||||

- The "PR Q&A" tool answers free-text questions about the PR.

|

||||

- The "PR Description" tool automatically sets the PR Title and body.

|

||||

- The "PR Code Suggestion" tool provide inline code suggestions for the PR that can be applied and committed.

|

||||

- The "PR Reflect and Review" tool initiates a dialog with the user, asks them to reflect on the PR, and then provides a more focused review.

|

||||

|

||||

### PR Reviewer

|

||||

Here is a quick overview of the different sub-tools of PR Reviewer:

|

||||

## How it works

|

||||

|

||||

- PR Analysis

|

||||

- Summarize main theme

|

||||

- PR description and title

|

||||

- PR type classification

|

||||

- Is the PR covered by relevant tests

|

||||

- Is the PR minimal and focused

|

||||

- PR Feedback

|

||||

- General PR suggestions

|

||||

- Code suggestions

|

||||

- Security concerns

|

||||

|

||||

This is how a typical output of the PR Reviewer looks like:

|

||||

|

||||

---

|

||||

#### PR Analysis

|

||||

|

||||

- 🎯 **Main theme:** Adding language extension handler and token handler

|

||||

- 🔍 **Description and title:** Yes

|

||||

- 📌 **Type of PR:** Enhancement

|

||||

- 🧪 **Relevant tests added:** No

|

||||

- ✨ **Minimal and focused:** Yes, the PR is focused on adding two new handlers for language extension and token counting.

|

||||

#### PR Feedback

|

||||

|

||||

- 💡 **General PR suggestions:** The PR is generally well-structured and the code is clean. However, it would be beneficial to add some tests to ensure the new handlers work as expected. Also, consider adding docstrings to the new functions and classes to improve code readability and maintainability.

|

||||

|

||||

- 🤖 **Code suggestions:**

|

||||

|

||||

- **suggestion 1:**

|

||||

- **relevant file:** pr_agent/algo/language_handler.py

|

||||

- **suggestion content:** Consider using a set instead of a list for 'bad_extensions' as checking membership in a set is faster than in a list. [medium]

|

||||

|

||||

- **suggestion 2:**

|

||||

- **relevant file:** pr_agent/algo/language_handler.py

|

||||

- **suggestion content:** In the 'filter_bad_extensions' function, you are splitting the filename on '.' and taking the last element to get the extension. This might not work as expected if the filename contains multiple '.' characters. Consider using 'os.path.splitext' to get the file extension more reliably. [important]

|

||||

|

||||

- 🔒 **Security concerns:** No, the PR does not introduce possible security concerns or issues.

|

||||

|

||||

---

|

||||

|

||||

|

||||

### PR Q&A

|

||||

This tool answers free-text questions about the PR. This is how a typical output of the PR Q&A looks like:

|

||||

|

||||

---

|

||||

**Question**: summarize for me the PR in 4 bullet points

|

||||

|

||||

**Answer**:

|

||||

- The PR introduces a new feature to sort files by their main languages. It uses a mapping of programming languages to their file extensions to achieve this.

|

||||

- It also introduces a filter to exclude files with certain extensions, deemed as 'bad extensions', from the sorting process.

|

||||

- The PR modifies the `get_pr_diff` function in `pr_processing.py` to use the new sorting function. It also refactors the code to move the PR pruning logic into a separate function.

|

||||

- A new `TokenHandler` class is introduced in `token_handler.py` to handle token counting operations. This class is initialized with a PR, variables, system, and user, and provides methods to get system and user tokens and to count tokens in a patch.

|

||||

|

||||

---

|

||||

|

||||

## Configuration

|

||||

The different tools and sub-tools used by CodiumAI PR-Agent are easily configurable via the configuration file: `/settings/configuration.toml`.

|

||||

#### Enabling/disabling sub-tools:

|

||||

You can enable/disable the different PR Reviewer sub-sections with the following flags:

|

||||

```

|

||||

require_minimal_and_focused_review=true

|

||||

require_tests_review=true

|

||||

require_security_review=true

|

||||

```

|

||||

#### Code Suggestions configuration:

|

||||

There are also configuration options to control different aspects of the `code suggestions` feature.

|

||||

The number of suggestions provided can be controlled by adjusting the following parameter:

|

||||

```

|

||||

num_code_suggestions=4

|

||||

```

|

||||

You can also enable more verbose and informative mode of code suggestions:

|

||||

```

|

||||

extended_code_suggestions=false

|

||||

```

|

||||

This is a comparison of the regular and extended code suggestions modes:

|

||||

|

||||

---

|

||||

Example for regular suggestion:

|

||||

|

||||

|

||||

- **suggestion 1:**

|

||||

- **relevant file:** sql.py

|

||||

- **suggestion content:** Remove hardcoded sensitive information like username and password. Use environment variables or a secure method to store these values. [important]

|

||||

---

|

||||

|

||||

Example for extended suggestion:

|

||||

|

||||

|

||||

- **suggestion 1:**

|

||||

- **relevant file:** sql.py

|

||||

- **suggestion content:** Remove hardcoded sensitive information (username and password) [important]

|

||||

- **why:** Hardcoding sensitive information is a security risk. It's better to use environment variables or a secure way to store these values.

|

||||

- **code example:**

|

||||

- **before code:**

|

||||

```

|

||||

user = "root",

|

||||

password = "Mysql@123",

|

||||

```

|

||||

- **after code:**

|

||||

```

|

||||

user = os.getenv('DB_USER'),

|

||||

password = os.getenv('DB_PASSWORD'),

|

||||

```

|

||||

---

|

||||

|

||||

|

||||

Check out the [PR Compression strategy](./PR_COMPRESSION.md) page for more details on how we convert a code diff to a manageable LLM prompt

|

||||

|

||||

## Roadmap

|

||||

- [ ] Support open-source models, as a replacement for openai models. Note that a minimal requirement for each open-source model is to have 8k+ context, and good support for generating json as an output

|

||||

- [ ] Support other Git providers, such as Gitlab and Bitbucket.

|

||||

- [ ] Develop additional logics for handling large PRs, and compressing git patches

|

||||

- [ ] Dedicated tools and sub-tools for specific programming languages (Python, Javascript, Java, C++, etc)

|

||||

|

||||

- [ ] Support open-source models, as a replacement for OpenAI models. (Note - a minimal requirement for each open-source model is to have 8k+ context, and good support for generating JSON as an output)

|

||||

- [x] Support other Git providers, such as Gitlab and Bitbucket.

|

||||

- [ ] Develop additional logic for handling large PRs, and compressing git patches

|

||||

- [ ] Add additional context to the prompt. For example, repo (or relevant files) summarization, with tools such a [ctags](https://github.com/universal-ctags/ctags)

|

||||

- [ ] Adding more tools. Possible directions:

|

||||

- [ ] Code Quality

|

||||

- [ ] Coding Style

|

||||

- [x] PR description

|

||||

- [x] Inline code suggestions

|

||||

- [x] Reflect and review

|

||||

- [ ] Enforcing CONTRIBUTING.md guidelines

|

||||

- [ ] Performance (are there any performance issues)

|

||||

- [ ] Documentation (is the PR properly documented)

|

||||

- [ ] Rank the PR importance

|

||||

- [ ] ...

|

||||

|

||||

## Similar Projects

|

||||

|

||||

- [CodiumAI - Meaningful tests for busy devs](https://github.com/Codium-ai/codiumai-vscode-release)

|

||||

- [Aider - GPT powered coding in your terminal](https://github.com/paul-gauthier/aider)

|

||||

- [GPT-Engineer](https://github.com/AntonOsika/gpt-engineer)

|

||||

- [openai-pr-reviewer](https://github.com/coderabbitai/openai-pr-reviewer)

|

||||

- [CodeReview BOT](https://github.com/anc95/ChatGPT-CodeReview)

|

||||

- [AI-Maintainer](https://github.com/merwanehamadi/AI-Maintainer)

|

||||

|

||||

8

action.yaml

Normal file

8

action.yaml

Normal file

@ -0,0 +1,8 @@

|

||||

name: 'Codium PR Agent'

|

||||

description: 'Summarize, review and suggest improvements for pull requests'

|

||||

branding:

|

||||

icon: 'award'

|

||||

color: 'green'

|

||||

runs:

|

||||

using: 'docker'

|

||||

image: 'Dockerfile.github_action_dockerhub'

|

||||

12

docker/Dockerfile.lambda

Normal file

12

docker/Dockerfile.lambda

Normal file

@ -0,0 +1,12 @@

|

||||

FROM public.ecr.aws/lambda/python:3.10

|

||||

|

||||

RUN yum update -y && \

|

||||

yum install -y gcc python3-devel && \

|

||||

yum clean all

|

||||

|

||||

ADD requirements.txt .

|

||||

RUN pip install -r requirements.txt && rm requirements.txt

|

||||

RUN pip install mangum==16.0.0

|

||||

COPY pr_agent/ ${LAMBDA_TASK_ROOT}/pr_agent/

|

||||

|

||||

CMD ["pr_agent.servers.serverless.serverless"]

|

||||

2

github_action/entrypoint.sh

Normal file

2

github_action/entrypoint.sh

Normal file

@ -0,0 +1,2 @@

|

||||

#!/bin/bash

|

||||

python /app/pr_agent/servers/github_action_runner.py

|

||||

Binary file not shown.

|

Before Width: | Height: | Size: 102 KiB |

BIN

pics/logo-dark.png

Normal file

BIN

pics/logo-dark.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 22 KiB |

BIN

pics/logo-light.png

Normal file

BIN

pics/logo-light.png

Normal file

Binary file not shown.

|

After Width: | Height: | Size: 25 KiB |

Binary file not shown.

|

Before Width: | Height: | Size: 137 KiB |

Binary file not shown.

|

Before Width: | Height: | Size: 267 KiB |

Binary file not shown.

|

Before Width: | Height: | Size: 42 KiB |

@ -1,20 +1,33 @@

|

||||

import re

|

||||

from typing import Optional

|

||||

|

||||

from pr_agent.config_loader import settings

|

||||

from pr_agent.tools.pr_code_suggestions import PRCodeSuggestions

|

||||

from pr_agent.tools.pr_description import PRDescription

|

||||

from pr_agent.tools.pr_information_from_user import PRInformationFromUser

|

||||

from pr_agent.tools.pr_questions import PRQuestions

|

||||

from pr_agent.tools.pr_reviewer import PRReviewer

|

||||

|

||||

|

||||

class PRAgent:

|

||||

def __init__(self, installation_id: Optional[int] = None):

|

||||

self.installation_id = installation_id

|

||||

def __init__(self):

|

||||

pass

|

||||

|

||||

async def handle_request(self, pr_url, request):

|

||||

if 'please review' in request.lower():

|

||||

reviewer = PRReviewer(pr_url, self.installation_id)

|

||||

await reviewer.review()

|

||||

async def handle_request(self, pr_url, request) -> bool:

|

||||

action, *args = request.strip().split()

|

||||

if any(cmd == action for cmd in ["/answer"]):

|

||||

await PRReviewer(pr_url, is_answer=True).review()

|

||||

elif any(cmd == action for cmd in ["/review", "/review_pr", "/reflect_and_review"]):

|

||||

if settings.pr_reviewer.ask_and_reflect or "/reflect_and_review" in request:

|

||||

await PRInformationFromUser(pr_url).generate_questions()

|

||||

else:

|

||||

await PRReviewer(pr_url, args=args).review()

|

||||

elif any(cmd == action for cmd in ["/describe", "/describe_pr"]):

|

||||

await PRDescription(pr_url).describe()

|

||||

elif any(cmd == action for cmd in ["/improve", "/improve_code"]):

|

||||

await PRCodeSuggestions(pr_url).suggest()

|

||||

elif any(cmd == action for cmd in ["/ask", "/ask_question"]):

|

||||

await PRQuestions(pr_url, args).answer()

|

||||

else:

|

||||

return False

|

||||

|

||||

elif 'please answer' in request.lower():

|

||||

question = re.split(r'(?i)please answer', request)[1].strip()

|

||||

answerer = PRQuestions(pr_url, question, self.installation_id)

|

||||

await answerer.answer()

|

||||

return True

|

||||

|

||||

@ -1,28 +1,65 @@

|

||||

import logging

|

||||

|

||||

import openai

|

||||

from openai.error import APIError, Timeout, TryAgain

|

||||

from openai.error import APIError, Timeout, TryAgain, RateLimitError

|

||||

from retry import retry

|

||||

|

||||

from pr_agent.config_loader import settings

|

||||

|

||||

OPENAI_RETRIES=2

|

||||

OPENAI_RETRIES=5

|

||||

|

||||

class AiHandler:

|

||||

"""

|

||||

This class handles interactions with the OpenAI API for chat completions.

|

||||

It initializes the API key and other settings from a configuration file,

|

||||

and provides a method for performing chat completions using the OpenAI ChatCompletion API.

|

||||

"""

|

||||

|

||||

def __init__(self):

|

||||

"""

|

||||

Initializes the OpenAI API key and other settings from a configuration file.

|

||||

Raises a ValueError if the OpenAI key is missing.

|

||||

"""

|

||||

try:

|

||||

openai.api_key = settings.openai.key

|

||||

if settings.get("OPENAI.ORG", None):

|

||||

openai.organization = settings.openai.org

|

||||

self.deployment_id = settings.get("OPENAI.DEPLOYMENT_ID", None)

|

||||

if settings.get("OPENAI.API_TYPE", None):

|

||||

openai.api_type = settings.openai.api_type

|

||||

if settings.get("OPENAI.API_VERSION", None):

|

||||

openai.api_version = settings.openai.api_version

|

||||

if settings.get("OPENAI.API_BASE", None):

|

||||

openai.api_base = settings.openai.api_base

|

||||

except AttributeError as e:

|

||||

raise ValueError("OpenAI key is required") from e

|

||||

|

||||

@retry(exceptions=(APIError, Timeout, TryAgain, AttributeError),

|

||||

@retry(exceptions=(APIError, Timeout, TryAgain, AttributeError, RateLimitError),

|

||||

tries=OPENAI_RETRIES, delay=2, backoff=2, jitter=(1, 3))

|

||||

async def chat_completion(self, model: str, temperature: float, system: str, user: str):

|

||||

"""

|

||||

Performs a chat completion using the OpenAI ChatCompletion API.

|

||||

Retries in case of API errors or timeouts.

|

||||

|

||||

Args:

|

||||

model (str): The model to use for chat completion.

|

||||

temperature (float): The temperature parameter for chat completion.

|

||||

system (str): The system message for chat completion.

|

||||

user (str): The user message for chat completion.

|

||||

|

||||

Returns:

|

||||

tuple: A tuple containing the response and finish reason from the API.

|

||||

|

||||

Raises:

|

||||

TryAgain: If the API response is empty or there are no choices in the response.

|

||||

APIError: If there is an error during OpenAI inference.

|

||||

Timeout: If there is a timeout during OpenAI inference.

|

||||

TryAgain: If there is an attribute error during OpenAI inference.

|

||||

"""

|

||||

try:

|

||||

response = await openai.ChatCompletion.acreate(

|

||||

model=model,

|

||||

deployment_id=self.deployment_id,

|

||||

messages=[

|

||||

{"role": "system", "content": system},

|

||||

{"role": "user", "content": user}

|

||||

@ -32,6 +69,12 @@ class AiHandler:

|

||||

except (APIError, Timeout, TryAgain) as e:

|

||||

logging.error("Error during OpenAI inference: ", e)

|

||||

raise

|

||||

except (RateLimitError) as e:

|

||||

logging.error("Rate limit error during OpenAI inference: ", e)

|

||||

raise

|

||||

except (Exception) as e:

|

||||

logging.error("Unknown error during OpenAI inference: ", e)

|

||||

raise TryAgain from e

|

||||

if response is None or len(response.choices) == 0:

|

||||

raise TryAgain

|

||||

resp = response.choices[0]['message']['content']

|

||||

|

||||

@ -8,11 +8,22 @@ from pr_agent.config_loader import settings

|

||||

|

||||

def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

||||

"""

|

||||

Extends the patch to include 'num_lines' more surrounding lines

|

||||

Extends the given patch to include a specified number of surrounding lines.

|

||||

|

||||

Args:

|

||||

original_file_str (str): The original file to which the patch will be applied.

|

||||

patch_str (str): The patch to be applied to the original file.

|

||||

num_lines (int): The number of surrounding lines to include in the extended patch.

|

||||

|

||||

Returns:

|

||||

str: The extended patch string.

|

||||

"""

|

||||

if not patch_str or num_lines == 0:

|

||||

return patch_str

|

||||

|

||||

if type(original_file_str) == bytes:

|

||||

original_file_str = original_file_str.decode('utf-8')

|

||||

|

||||

original_lines = original_file_str.splitlines()

|

||||

patch_lines = patch_str.splitlines()

|

||||

extended_patch_lines = []

|

||||

@ -58,6 +69,14 @@ def extend_patch(original_file_str, patch_str, num_lines) -> str:

|

||||

|

||||

|

||||

def omit_deletion_hunks(patch_lines) -> str:

|

||||

"""

|

||||

Omit deletion hunks from the patch and return the modified patch.

|

||||

Args:

|

||||

- patch_lines: a list of strings representing the lines of the patch

|

||||

Returns:

|

||||

- A string representing the modified patch with deletion hunks omitted

|

||||

"""

|

||||

|

||||

temp_hunk = []

|

||||

added_patched = []

|

||||

add_hunk = False

|

||||

@ -90,13 +109,26 @@ def omit_deletion_hunks(patch_lines) -> str:

|

||||

def handle_patch_deletions(patch: str, original_file_content_str: str,

|

||||

new_file_content_str: str, file_name: str) -> str:

|

||||

"""

|

||||

Handle entire file or deletion patches

|

||||

Handle entire file or deletion patches.

|

||||

|

||||

This function takes a patch, original file content, new file content, and file name as input.

|

||||

It handles entire file or deletion patches and returns the modified patch with deletion hunks omitted.

|

||||

|

||||

Args:

|

||||

patch (str): The patch to be handled.

|

||||

original_file_content_str (str): The original content of the file.

|

||||

new_file_content_str (str): The new content of the file.

|

||||

file_name (str): The name of the file.

|

||||

|

||||

Returns:

|

||||

str: The modified patch with deletion hunks omitted.

|

||||

|

||||

"""

|

||||

if not new_file_content_str:

|

||||

# logic for handling deleted files - don't show patch, just show that the file was deleted

|

||||

if settings.config.verbosity_level > 0:

|

||||

logging.info(f"Processing file: {file_name}, minimizing deletion file")

|

||||

patch = "File was deleted\n"

|

||||

patch = None # file was deleted

|

||||

else:

|

||||

patch_lines = patch.splitlines()

|

||||

patch_new = omit_deletion_hunks(patch_lines)

|

||||

@ -105,3 +137,84 @@ def handle_patch_deletions(patch: str, original_file_content_str: str,

|

||||

logging.info(f"Processing file: {file_name}, hunks were deleted")

|

||||

patch = patch_new

|

||||

return patch

|

||||

|

||||

|

||||

def convert_to_hunks_with_lines_numbers(patch: str, file) -> str:

|

||||

"""

|

||||

Convert a given patch string into a string with line numbers for each hunk, indicating the new and old content of the file.

|

||||

|

||||

Args:

|

||||

patch (str): The patch string to be converted.

|

||||

file: An object containing the filename of the file being patched.

|

||||

|

||||

Returns:

|

||||

str: A string with line numbers for each hunk, indicating the new and old content of the file.

|

||||

|

||||

example output:

|

||||

## src/file.ts

|

||||

--new hunk--

|

||||

881 line1

|

||||

882 line2

|

||||

883 line3

|

||||

887 + line4

|

||||

888 + line5

|

||||

889 line6

|

||||

890 line7

|

||||

...

|

||||

--old hunk--

|

||||

line1

|

||||

line2

|

||||

- line3

|

||||

- line4

|

||||

line5

|

||||

line6

|

||||

...

|

||||

"""

|

||||

|

||||

patch_with_lines_str = f"## {file.filename}\n"

|

||||

import re

|

||||

patch_lines = patch.splitlines()

|

||||

RE_HUNK_HEADER = re.compile(

|

||||

r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@[ ]?(.*)")

|

||||

new_content_lines = []

|

||||

old_content_lines = []

|

||||

match = None

|

||||

start1, size1, start2, size2 = -1, -1, -1, -1

|

||||

for line in patch_lines:

|

||||

if 'no newline at end of file' in line.lower():

|

||||

continue

|

||||

|

||||

if line.startswith('@@'):

|

||||

match = RE_HUNK_HEADER.match(line)

|

||||

if match and new_content_lines: # found a new hunk, split the previous lines

|

||||

if new_content_lines:

|

||||

patch_with_lines_str += '\n--new hunk--\n'

|

||||

for i, line_new in enumerate(new_content_lines):

|

||||

patch_with_lines_str += f"{start2 + i} {line_new}\n"

|

||||

if old_content_lines:

|

||||

patch_with_lines_str += '--old hunk--\n'

|

||||

for line_old in old_content_lines:

|

||||

patch_with_lines_str += f"{line_old}\n"

|

||||

new_content_lines = []

|

||||

old_content_lines = []

|

||||

start1, size1, start2, size2 = map(int, match.groups()[:4])

|

||||

elif line.startswith('+'):

|

||||

new_content_lines.append(line)

|

||||

elif line.startswith('-'):

|

||||

old_content_lines.append(line)

|

||||

else:

|

||||

new_content_lines.append(line)

|

||||

old_content_lines.append(line)

|

||||

|

||||

# finishing last hunk

|

||||

if match and new_content_lines:

|

||||

if new_content_lines:

|

||||

patch_with_lines_str += '\n--new hunk--\n'

|

||||

for i, line_new in enumerate(new_content_lines):

|

||||

patch_with_lines_str += f"{start2 + i} {line_new}\n"

|

||||

if old_content_lines:

|

||||

patch_with_lines_str += '\n--old hunk--\n'

|

||||

for line_old in old_content_lines:

|

||||

patch_with_lines_str += f"{line_old}\n"

|

||||

|

||||

return patch_with_lines_str.strip()

|

||||

|

||||

File diff suppressed because one or more lines are too long

@ -1,45 +1,86 @@

|

||||

from __future__ import annotations

|

||||

|

||||

import difflib

|

||||

import logging

|

||||

from typing import Any, Dict, Tuple

|

||||

from typing import Tuple, Union, Callable, List

|

||||

|

||||

from pr_agent.algo.git_patch_processing import extend_patch, handle_patch_deletions

|

||||

from pr_agent.algo import MAX_TOKENS

|

||||

from pr_agent.algo.git_patch_processing import convert_to_hunks_with_lines_numbers, extend_patch, handle_patch_deletions

|

||||

from pr_agent.algo.language_handler import sort_files_by_main_languages

|

||||

from pr_agent.algo.token_handler import TokenHandler

|

||||

from pr_agent.algo.utils import load_large_diff

|

||||

from pr_agent.config_loader import settings

|

||||

from pr_agent.git_providers import GithubProvider

|

||||

from pr_agent.git_providers.git_provider import GitProvider

|

||||

|

||||

OUTPUT_BUFFER_TOKENS = 800

|

||||

DELETED_FILES_ = "Deleted files:\n"

|

||||

|

||||

MORE_MODIFIED_FILES_ = "More modified files:\n"

|

||||

|

||||

OUTPUT_BUFFER_TOKENS_SOFT_THRESHOLD = 1000

|

||||

OUTPUT_BUFFER_TOKENS_HARD_THRESHOLD = 600

|

||||

PATCH_EXTRA_LINES = 3

|

||||

|

||||

|

||||

def get_pr_diff(git_provider: [GithubProvider, Any], token_handler: TokenHandler) -> str:

|

||||

def get_pr_diff(git_provider: GitProvider, token_handler: TokenHandler, model: str,

|

||||

add_line_numbers_to_hunks: bool = False, disable_extra_lines: bool = False) -> str:

|

||||

"""

|

||||

Returns a string with the diff of the PR.

|

||||

If needed, apply diff minimization techniques to reduce the number of tokens

|

||||

Returns a string with the diff of the pull request, applying diff minimization techniques if needed.

|

||||

|

||||

Args:

|

||||

git_provider (GitProvider): An object of the GitProvider class representing the Git provider used for the pull request.

|

||||

token_handler (TokenHandler): An object of the TokenHandler class used for handling tokens in the context of the pull request.

|

||||

model (str): The name of the model used for tokenization.

|

||||

add_line_numbers_to_hunks (bool, optional): A boolean indicating whether to add line numbers to the hunks in the diff. Defaults to False.

|

||||

disable_extra_lines (bool, optional): A boolean indicating whether to disable the extension of each patch with extra lines of context. Defaults to False.

|

||||

|

||||

Returns:

|

||||

str: A string with the diff of the pull request, applying diff minimization techniques if needed.

|

||||

"""

|

||||

files = list(git_provider.get_diff_files())

|

||||

|

||||

if disable_extra_lines:

|

||||

global PATCH_EXTRA_LINES

|

||||

PATCH_EXTRA_LINES = 0

|

||||

|

||||

diff_files = list(git_provider.get_diff_files())

|

||||

|

||||

# get pr languages

|

||||

pr_languages = sort_files_by_main_languages(git_provider.get_languages(), files)