Compare commits

272 Commits

add-pr-tem

...

v0.28

| SHA1 | Author | Date | |
|---|---|---|---|

| 7d57edf959 | |||

| 6950b3ca6b | |||

| e422f50cfe | |||

| 66a667d509 | |||

| 482cd7c680 | |||

| 991a866368 | |||

| fcd9416129 | |||

| 255e1d0fc1 | |||

| 7117e9fe0e | |||

| b42841fcc4 | |||

| 839b6093cb | |||

| 351b9d9115 | |||

| 3e11f07f0b | |||

| 1d57dd7443 | |||

| 7aa3d12876 | |||

| 6f6595c343 | |||

| b300cfa84d | |||

| 9a21069075 | |||

| 85f7b99dea | |||

| d6f79f486a | |||

| b0ed584821 | |||

| 05ea699a92 | |||

| 605eef64e7 | |||

| c94aa58ae4 | |||

| e20e7c138c | |||

| b161672218 | |||

| 5bc253e1d9 | |||

| 8495e4d549 | |||

| fb324d106c | |||

| a4387b5829 | |||

| 477ebf4926 | |||

| fe98779a88 | |||

| e14fc7e02d | |||

| 1bd65934df | |||

| 88a17848eb | |||

| 1aab87516e | |||

| dd80276f3f | |||

| ad17cb4d92 | |||

| 6efb694945 | |||

| dde362bd47 | |||

| a9ce909713 | |||

| e925f31ac0 | |||

| 5e7e353670 | |||

| 52d4312c9a | |||

| 8ec6067b26 | |||

| bc575e5a67 | |||

| b087458e33 | |||

| 6610921bba | |||

| 3fd15042a6 | |||

| 4ab2396be0 | |||

| a75d430751 | |||

| 737cf559d9 | |||

| fa77828db2 | |||

| f506fb1e05 | |||

| 4ea46d5b25 | |||

| ed9fcd0238 | |||

| 9d3bd7289a | |||

| 1724a65ab2 | |||

| 6883ced9e3 | |||

| 29e28056db | |||

| 507cd6e675 | |||

| dd1a0e51bb | |||

| b4e2a32543 | |||

| 677a54c5a0 | |||

| a211175fea | |||

| 64f52288a1 | |||

| 4ee1704862 | |||

| f5e381e1b2 | |||

| 2cacaf56b0 | |||

| 9a574e0caa | |||

| 0f33750035 | |||

| 4713175fcf | |||

| d16012a568 | |||

| 01f1599336 | |||

| f5bd98a3b9 | |||

| 1c86af30b6 | |||

| 0acd5193cb | |||

| ffefcb8a04 | |||

| 35bb2b31e3 | |||

| a18f9d00c9 | |||

| 20d709075c | |||

| 52c99e3f7b | |||

| 692bc449be | |||

| 884b49dd84 | |||

| 338ec5cae0 | |||

| c6e4498653 | |||

| c7c411eb63 | |||

| 222155e4f2 | |||

| f9d5e72058 | |||

| 2619ff3eb3 | |||

| 15e8167115 | |||

| d6ad511511 | |||

| 1943662946 | |||

| c1fb76abcf | |||

| 121d90a9da | |||

| a8935dece3 | |||

| fd12191fcf | |||

| 61b6d1f1a3 | |||

| 874b7c7da4 | |||

| a0d86b532f | |||

| 4f2551e0a6 | |||

| eb5f38b13b | |||

| 1753bc703e | |||

| 31de811820 | |||

| 4c0e371238 | |||

| ca286b8dc0 | |||

| 0c30f084bc | |||

| a33bee5805 | |||

| f32163d57c | |||

| bb24a6f43d | |||

| 5b267332b9 | |||

| 30bf7572b0 | |||

| b5ce49cbc0 | |||

| 440d2368a4 | |||

| 215c10cc8c | |||

| 7623e1a419 | |||

| 5e30e190b8 | |||

| 5447dd2ac6 | |||

| 214200b816 | |||

| fcb7d97640 | |||

| 224920bdb7 | |||

| d3f83f3069 | |||

| 8e6267b0e6 | |||

| 9809e2dbd8 | |||

| 7cf521c001 | |||

| e71c0f1805 | |||

| 8182a4afc0 | |||

| 3817aa2868 | |||

| 94a8606d24 | |||

| af635650f1 | |||

| 222f276959 | |||

| 9a32e94b3e | |||

| 7c56eee701 | |||

| 48b3c69c10 | |||

| 9d1c8312b5 | |||

| 64e5a87530 | |||

| 9a9acef0e8 | |||

| 3ff8f1ff11 | |||

| c7f4b87d6f | |||

| 9db44b5f5f | |||

| 70a2377ac9 | |||

| 52a68bcd44 | |||

| 0a4c02c8b3 | |||

| e253f18e7f | |||

| d6b6191f90 | |||

| de80901284 | |||

| dfbd8dad5d | |||

| d6f405dd0d | |||

| 25ba9414fe | |||

| d097266c38 | |||

| fa1eda967f | |||

| c89c0eab8c | |||

| 93e34703ab | |||

| c15ed628db | |||

| 70f47336d6 | |||

| c6a6a2f352 | |||

| 1dc3db7322 | |||

| 049fc558a8 | |||

| 2dc89d0998 | |||

| 07bbfff4ba | |||

| 328637b8be | |||

| 44d9535dbc | |||

| 7fec17f3ff | |||

| cd15f64f11 | |||

| d4ac206c46 | |||

| 444910868e | |||

| a24b06b253 | |||

| 393516f746 | |||

| 152b111ef2 | |||

| 56250f5ea8 | |||

| 7b1df82c05 | |||

| 05960f2c3f | |||

| feb306727e | |||

| 2a647709c4 | |||

| a4cd05e71c | |||

| da6ef8c80f | |||

| 775bfc74eb | |||

| ebdbde1bca | |||

| e16c6d0b27 | |||

| f0b52870a2 | |||

| 1b35f01aa1 | |||

| a0dc9deb30 | |||

| 7e32a08f00 | |||

| 84983f3e9d | |||

| 71451de156 | |||

| 0e4a1d9ab8 | |||

| e7b05732f8 | |||

| 37083ae354 | |||

| 020ef212c1 | |||

| 01cd66f4f5 | |||

| af72b45593 | |||

| 9abb212e83 | |||

| e81b0dca30 | |||

| d37732c25d | |||

| e6b6e28d6b | |||

| 5bace4ddc6 | |||

| b80d7d1189 | |||

| b2d8dee00a | |||

| ac3dbdf5fc | |||

| f143a24879 | |||

| 347af1dd99 | |||

| d91245a9d3 | |||

| bfdaac0a05 | |||

| 183d2965d0 | |||

| 86647810e0 | |||

| 56978d9793 | |||

| 6433e827f4 | |||

| c0e78ba522 | |||

| 45d776a1f7 | |||

| 6e19e77e5e | |||

| 1e98d27ab4 | |||

| a47d4032b8 | |||

| 2887d0a7ed | |||

| a07f6855cb | |||

| 237a6ffb5f | |||

| 5e1cc12df4 | |||

| 29a350b4f8 | |||

| 6efcd61087 | |||

| c7cafa720e | |||

| 9de9b397e2 | |||

| 35059cadf7 | |||

| 0317951e32 | |||

| 4edb8b89d1 | |||

| a5278bdad2 | |||

| 3fd586c9bd | |||

| 717b2fe5f1 | |||

| 262c1cbc68 | |||

| defdaa0e02 | |||

| 5ca6918943 | |||

| cfd813883b | |||

| c6d32a4c9f | |||

| ae4e99026e | |||

| 42f493f41e | |||

| 34d73feb1d | |||

| ebc94bbd44 | |||

| 4fcc7a5f3a | |||

| 41760ea333 | |||

| 13128c4c2f | |||

| da168151e8 | |||

| a132927052 | |||

| b52d0726a2 | |||

| fc411dc8bc | |||

| 22f02ac08c | |||

| 52883fb1b5 | |||

| adfc2a6b69 | |||

| c4aa13e798 | |||

| 90575e3f0d | |||

| fcbe986ec7 | |||

| 061fec0d36 | |||

| 778d00d1a0 | |||

| cc8d5a6c50 | |||

| 62c47f9cb5 | |||

| bb31b0c66b | |||

| 359c963ad1 | |||

| 130b1ff4fb | |||

| 605a4b99ad | |||

| b989f41b96 | |||

| 26168a605b | |||

| 2c37b02aa0 | |||

| a2550870c2 | |||

| 279c6ead8f | |||

| c9500cf796 | |||

| 0f63d8685f | |||

| 77204faa51 | |||

| 43fb8ff433 | |||

| cd129d8b27 | |||

| 04aff0d3b2 | |||

| be1dd4bd20 | |||

| b3b89e7138 | |||

| 9045723084 | |||

| 34e22a2c8e | |||

| 1d784c60cb |

@@ -3,7 +3,7 @@ FROM python:3.12 as base
WORKDIR /app
ADD pyproject.toml .
ADD requirements.txt .
RUN pip install . && rm pyproject.toml requirements.txt
RUN pip install --no-cache-dir . && rm pyproject.toml requirements.txt
ENV PYTHONPATH=/app
ADD docs docs
ADD pr_agent pr_agent

167

README.md

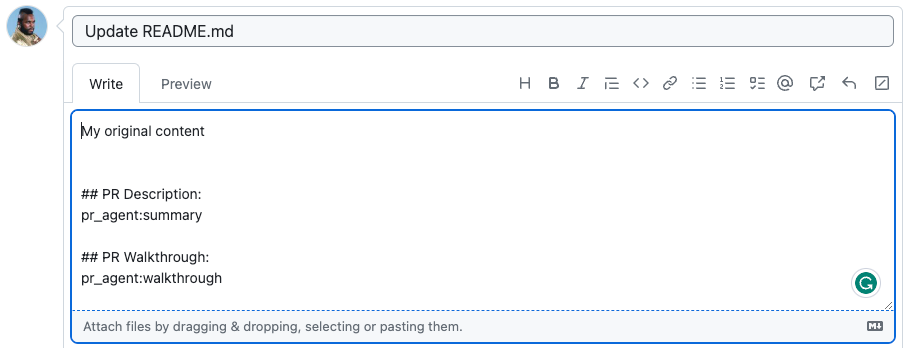

@@ -4,12 +4,18 @@


<picture>
<source media="(prefers-color-scheme: dark)" srcset="https://codium.ai/images/pr_agent/logo-dark.png" width="330">
<source media="(prefers-color-scheme: light)" srcset="https://codium.ai/images/pr_agent/logo-light.png" width="330">
<source media="(prefers-color-scheme: dark)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">
<source media="(prefers-color-scheme: light)" srcset="https://www.qodo.ai/wp-content/uploads/2025/02/PR-Agent-Purple-2.png">
<img src="https://codium.ai/images/pr_agent/logo-light.png" alt="logo" width="330">

</picture>
<br/>

[Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) |
[Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) |
[Tools Guide](https://qodo-merge-docs.qodo.ai/tools/) |
[Qodo Merge](https://qodo-merge-docs.qodo.ai/overview/pr_agent_pro/) 💎

PR-Agent aims to help efficiently review and handle pull requests, by providing AI feedback and suggestions
</div>

@@ -22,13 +28,16 @@ PR-Agent aims to help efficiently review and handle pull requests, by providing
</a>
</div>

### [Documentation](https://qodo-merge-docs.qodo.ai/)
[//]: # (### [Documentation](https://qodo-merge-docs.qodo.ai/))

- See the [Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) for instructions on installing PR-Agent on different platforms.
[//]: # ()
[//]: # (- See the [Installation Guide](https://qodo-merge-docs.qodo.ai/installation/) for instructions on installing PR-Agent on different platforms.)

- See the [Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) for instructions on running PR-Agent tools via different interfaces, such as CLI, PR Comments, or by automatically triggering them when a new PR is opened.
[//]: # ()
[//]: # (- See the [Usage Guide](https://qodo-merge-docs.qodo.ai/usage-guide/) for instructions on running PR-Agent tools via different interfaces, such as CLI, PR Comments, or by automatically triggering them when a new PR is opened.)

- See the [Tools Guide](https://qodo-merge-docs.qodo.ai/tools/) for a detailed description of the different tools, and the available configurations for each tool.
[//]: # ()
[//]: # (- See the [Tools Guide](https://qodo-merge-docs.qodo.ai/tools/) for a detailed description of the different tools, and the available configurations for each tool.)


## Table of Contents

@@ -37,12 +46,25 @@ PR-Agent aims to help efficiently review and handle pull requests, by providing
- [Overview](#overview)
- [Example results](#example-results)
- [Try it now](#try-it-now)
- [Qodo Merge 💎](https://qodo-merge-docs.qodo.ai/overview/pr_agent_pro/)
- [Qodo Merge](https://qodo-merge-docs.qodo.ai/overview/pr_agent_pro/)
- [How it works](#how-it-works)
- [Why use PR-Agent?](#why-use-pr-agent)

## News and Updates

### Feb 28, 2025
A new version, v0.27, was released. See release notes [here](https://github.com/qodo-ai/pr-agent/releases/tag/v0.27).

### Feb 27, 2025
- Updated the default model to `o3-mini` for all tools. You can still use the `gpt-4o` as the default model by setting the `model` parameter in the configuration file.
- Important updates and bug fixes for Azure DevOps, see [here](https://github.com/qodo-ai/pr-agent/pull/1583)
- Added support for adjusting the [response language](https://qodo-merge-docs.qodo.ai/usage-guide/additional_configurations/#language-settings) of the PR-Agent tools.

### Feb 6, 2025
New design for the `/improve` tool:

<kbd><img src="https://github.com/user-attachments/assets/26506430-550e-469a-adaa-af0a09b70c6d" width="512"></kbd>

### Jan 25, 2025

The open-source GitHub organization was updated:

@@ -60,49 +82,12 @@ to

New tool [/Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) (💎), which converts human code review discussions and feedback into ready-to-commit code changes.

<kbd><img src="https://www.qodo.ai/images/pr_agent/implement1.png" width="512"></kbd>
<kbd><img src="https://www.qodo.ai/images/pr_agent/implement1.png?v=2" width="512"></kbd>


### Jan 1, 2025

Update logic and [documentation](https://qodo-merge-docs.qodo.ai/usage-guide/changing_a_model/#ollama) for running local models via Ollama.

### December 30, 2024

Following feedback from the community, we have addressed two vulnerabilities identified in the open-source PR-Agent project. The fixes are now included in the newly released version (v0.26), available as of today.

### December 25, 2024

The `review` tool previously included a legacy feature for providing code suggestions (controlled by '--pr_reviewer.num_code_suggestion'). This functionality has been deprecated. Use instead the [`improve`](https://qodo-merge-docs.qodo.ai/tools/improve/) tool, which offers higher quality and more actionable code suggestions.

### December 2, 2024

Open-source repositories can now freely use Qodo Merge, and enjoy easy one-click installation using a marketplace [app](https://github.com/apps/qodo-merge-pro-for-open-source).

<kbd><img src="https://github.com/user-attachments/assets/b0838724-87b9-43b0-ab62-73739a3a855c" width="512"></kbd>

See [here](https://qodo-merge-docs.qodo.ai/installation/pr_agent_pro/) for more details about installing Qodo Merge for private repositories.


### November 18, 2024

A new mode was enabled by default for code suggestions - `--pr_code_suggestions.focus_only_on_problems=true`:

- This option reduces the number of code suggestions received
- The suggestions will focus more on identifying and fixing code problems, rather than style considerations like best practices, maintainability, or readability.
- The suggestions will be categorized into just two groups: "Possible Issues" and "General".

Still, if you prefer the previous mode, you can set `--pr_code_suggestions.focus_only_on_problems=false` in the [configuration file](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/).

**Example results:**

Original mode

<kbd><img src="https://qodo.ai/images/pr_agent/code_suggestions_original_mode.png" width="512"></kbd>

Focused mode

<kbd><img src="https://qodo.ai/images/pr_agent/code_suggestions_focused_mode.png" width="512"></kbd>
Following feedback from the community, we have addressed two vulnerabilities identified in the open-source PR-Agent project. The [fixes](https://github.com/qodo-ai/pr-agent/pull/1425) are now included in the newly released version (v0.26), available as of today.


## Overview

@@ -110,42 +95,44 @@ Focused mode

Supported commands per platform:

| | | GitHub | GitLab | Bitbucket | Azure DevOps |
|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:--------------------:|:------------:|
| TOOLS | [Review](https://qodo-merge-docs.qodo.ai/tools/review/) | ✅ | ✅ | ✅ | ✅ |
| | [Describe](https://qodo-merge-docs.qodo.ai/tools/describe/) | ✅ | ✅ | ✅ | ✅ |
| | [Improve](https://qodo-merge-docs.qodo.ai/tools/improve/) | ✅ | ✅ | ✅ | ✅ |
| | [Ask](https://qodo-merge-docs.qodo.ai/tools/ask/) | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Ask on code lines](https://qodo-merge-docs.qodo.ai/tools/ask/#ask-lines) | ✅ | ✅ | | |
| | [Update CHANGELOG](https://qodo-merge-docs.qodo.ai/tools/update_changelog/) | ✅ | ✅ | ✅ | ✅ |
| | [Ticket Context](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/) 💎 | ✅ | ✅ | ✅ | |
| | [Utilizing Best Practices](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) 💎 | ✅ | ✅ | ✅ | |
| | [PR Chat](https://qodo-merge-docs.qodo.ai/chrome-extension/features/#pr-chat) 💎 | ✅ | | | |
| | [Suggestion Tracking](https://qodo-merge-docs.qodo.ai/tools/improve/#suggestion-tracking) 💎 | ✅ | ✅ | | |
| | [CI Feedback](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) 💎 | ✅ | | | |
| | [PR Documentation](https://qodo-merge-docs.qodo.ai/tools/documentation/) 💎 | ✅ | ✅ | | |
| | [Custom Labels](https://qodo-merge-docs.qodo.ai/tools/custom_labels/) 💎 | ✅ | ✅ | | |
| | [Analyze](https://qodo-merge-docs.qodo.ai/tools/analyze/) 💎 | ✅ | ✅ | | |
| | [Similar Code](https://qodo-merge-docs.qodo.ai/tools/similar_code/) 💎 | ✅ | | | |
| | [Custom Prompt](https://qodo-merge-docs.qodo.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | |
| | [Test](https://qodo-merge-docs.qodo.ai/tools/test/) 💎 | ✅ | ✅ | | |
| | [Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | |
| | | | | | |
| USAGE | [CLI](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli) | ✅ | ✅ | ✅ | ✅ |
| | [App / webhook](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#github-app) | ✅ | ✅ | ✅ | ✅ |
| | [Tagging bot](https://github.com/Codium-ai/pr-agent#try-it-now) | ✅ | | | |
| | [Actions](https://qodo-merge-docs.qodo.ai/installation/github/#run-as-a-github-action) | ✅ |✅| ✅ |✅|
| | | | | | |
| CORE | [PR compression](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/) | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | [Multiple models support](https://qodo-merge-docs.qodo.ai/usage-guide/changing_a_model/) | ✅ | ✅ | ✅ | ✅ |
| | [Local and global metadata](https://qodo-merge-docs.qodo.ai/core-abilities/metadata/) | ✅ | ✅ | ✅ | ✅ |
| | [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/) | ✅ | ✅ | ✅ | ✅ |
| | [Self reflection](https://qodo-merge-docs.qodo.ai/core-abilities/self_reflection/) | ✅ | ✅ | ✅ | ✅ |
| | [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/) 💎 | ✅ | ✅ | ✅ | |
| | [Global and wiki configurations](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | |
| | [PR interactive actions](https://www.qodo.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | ✅ | | |
| | [Impact Evaluation](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) 💎 | ✅ | ✅ | | |
| | | GitHub | GitLab | Bitbucket | Azure DevOps |
|-------|---------------------------------------------------------------------------------------------------------|:--------------------:|:--------------------:|:---------:|:------------:|
| TOOLS | [Review](https://qodo-merge-docs.qodo.ai/tools/review/) | ✅ | ✅ | ✅ | ✅ |
| | [Describe](https://qodo-merge-docs.qodo.ai/tools/describe/) | ✅ | ✅ | ✅ | ✅ |
| | [Improve](https://qodo-merge-docs.qodo.ai/tools/improve/) | ✅ | ✅ | ✅ | ✅ |
| | [Ask](https://qodo-merge-docs.qodo.ai/tools/ask/) | ✅ | ✅ | ✅ | ✅ |
| | ⮑ [Ask on code lines](https://qodo-merge-docs.qodo.ai/tools/ask/#ask-lines) | ✅ | ✅ | | |
| | [Update CHANGELOG](https://qodo-merge-docs.qodo.ai/tools/update_changelog/) | ✅ | ✅ | ✅ | ✅ |
| | [Help Docs](https://qodo-merge-docs.qodo.ai/tools/help_docs/?h=auto#auto-approval) | ✅ | ✅ | ✅ | |
| | [Ticket Context](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/) 💎 | ✅ | ✅ | ✅ | |
| | [Utilizing Best Practices](https://qodo-merge-docs.qodo.ai/tools/improve/#best-practices) 💎 | ✅ | ✅ | ✅ | |
| | [PR Chat](https://qodo-merge-docs.qodo.ai/chrome-extension/features/#pr-chat) 💎 | ✅ | | | |
| | [Suggestion Tracking](https://qodo-merge-docs.qodo.ai/tools/improve/#suggestion-tracking) 💎 | ✅ | ✅ | | |
| | [CI Feedback](https://qodo-merge-docs.qodo.ai/tools/ci_feedback/) 💎 | ✅ | | | |
| | [PR Documentation](https://qodo-merge-docs.qodo.ai/tools/documentation/) 💎 | ✅ | ✅ | | |
| | [Custom Labels](https://qodo-merge-docs.qodo.ai/tools/custom_labels/) 💎 | ✅ | ✅ | | |
| | [Analyze](https://qodo-merge-docs.qodo.ai/tools/analyze/) 💎 | ✅ | ✅ | | |
| | [Similar Code](https://qodo-merge-docs.qodo.ai/tools/similar_code/) 💎 | ✅ | | | |
| | [Custom Prompt](https://qodo-merge-docs.qodo.ai/tools/custom_prompt/) 💎 | ✅ | ✅ | ✅ | |
| | [Test](https://qodo-merge-docs.qodo.ai/tools/test/) 💎 | ✅ | ✅ | | |
| | [Implement](https://qodo-merge-docs.qodo.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | |
| | [Auto-Approve](https://qodo-merge-docs.qodo.ai/tools/improve/?h=auto#auto-approval) 💎 | ✅ | ✅ | ✅ | |
| | | | | | |
| USAGE | [CLI](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#local-repo-cli) | ✅ | ✅ | ✅ | ✅ |
| | [App / webhook](https://qodo-merge-docs.qodo.ai/usage-guide/automations_and_usage/#github-app) | ✅ | ✅ | ✅ | ✅ |
| | [Tagging bot](https://github.com/Codium-ai/pr-agent#try-it-now) | ✅ | | | |
| | [Actions](https://qodo-merge-docs.qodo.ai/installation/github/#run-as-a-github-action) | ✅ |✅| ✅ |✅|
| | | | | | |
| CORE | [PR compression](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/) | ✅ | ✅ | ✅ | ✅ |
| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |
| | [Multiple models support](https://qodo-merge-docs.qodo.ai/usage-guide/changing_a_model/) | ✅ | ✅ | ✅ | ✅ |
| | [Local and global metadata](https://qodo-merge-docs.qodo.ai/core-abilities/metadata/) | ✅ | ✅ | ✅ | ✅ |
| | [Dynamic context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/) | ✅ | ✅ | ✅ | ✅ |
| | [Self reflection](https://qodo-merge-docs.qodo.ai/core-abilities/self_reflection/) | ✅ | ✅ | ✅ | ✅ |
| | [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/) 💎 | ✅ | ✅ | | |
| | [Global and wiki configurations](https://qodo-merge-docs.qodo.ai/usage-guide/configuration_options/) 💎 | ✅ | ✅ | ✅ | |
| | [PR interactive actions](https://www.qodo.ai/images/pr_agent/pr-actions.mp4) 💎 | ✅ | ✅ | | |
| | [Impact Evaluation](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/) 💎 | ✅ | ✅ | | |
- 💎 means this feature is available only in [Qodo-Merge](https://www.qodo.ai/pricing/)

[//]: # (- Support for additional git providers is described in [here](./docs/Full_environments.md))

@@ -161,7 +148,7 @@ ___
\
‣ **Update Changelog ([`/update_changelog`](https://qodo-merge-docs.qodo.ai/tools/update_changelog/))**: Automatically updating the CHANGELOG.md file with the PR changes.
\
‣ **Find Similar Issue ([`/similar_issue`](https://qodo-merge-docs.qodo.ai/tools/similar_issues/))**: Automatically retrieves and presents similar issues.
‣ **Help Docs ([`/help_docs`](https://qodo-merge-docs.qodo.ai/tools/help_docs/))**: Answers a question on any repository by utilizing given documentation.
\
‣ **Add Documentation 💎 ([`/add_docs`](https://qodo-merge-docs.qodo.ai/tools/documentation/))**: Generates documentation to methods/functions/classes that changed in the PR.
\

@@ -221,7 +208,7 @@ ___

## Try it now

Try the GPT-4 powered PR-Agent instantly on _your public GitHub repository_. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment. The agent will generate a response based on your command.
Try the Claude Sonnet powered PR-Agent instantly on _your public GitHub repository_. Just mention `@CodiumAI-Agent` and add the desired command in any PR comment. The agent will generate a response based on your command.
For example, add a comment to any pull request with the following text:

```

@CodiumAI-Agent /review


@@ -232,12 +219,6 @@ Note that this is a promotional bot, suitable only for initial experimentation.
It does not have 'edit' access to your repo, for example, so it cannot update the PR description or add labels (`@CodiumAI-Agent /describe` will publish PR description as a comment). In addition, the bot cannot be used on private repositories, as it does not have access to the files there.





To set up your own PR-Agent, see the [Installation](https://qodo-merge-docs.qodo.ai/installation/) section below.
Note that when you set your own PR-Agent or use Qodo hosted PR-Agent, there is no need to mention `@CodiumAI-Agent ...`. Instead, directly start with the command, e.g., `/ask ...`.

---


@@ -265,10 +246,10 @@ A reasonable question that can be asked is: `"Why use PR-Agent? What makes it st

Here are some advantages of PR-Agent:

- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single GPT-4 call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.
- We emphasize **real-life practical usage**. Each tool (review, improve, ask, ...) has a single LLM call, no more. We feel that this is critical for realistic team usage - obtaining an answer quickly (~30 seconds) and affordably.
- Our [PR Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/#pr-compression-strategy) is a core ability that enables to effectively tackle both short and long PRs.
- Our JSON prompting strategy enables to have **modular, customizable tools**. For example, the '/review' tool categories can be controlled via the [configuration](pr_agent/settings/configuration.toml) file. Adding additional categories is easy and accessible.
- We support **multiple git providers** (GitHub, Gitlab, Bitbucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT-4, GPT-3.5, Anthropic, Cohere, Llama2).
- We support **multiple git providers** (GitHub, Gitlab, Bitbucket), **multiple ways** to use the tool (CLI, GitHub Action, GitHub App, Docker, ...), and **multiple models** (GPT, Claude, Deepseek, ...)


## Data privacy

@@ -292,8 +273,6 @@ https://openai.com/enterprise-privacy

## Links

[](https://discord.gg/kG35uSHDBc)

- Discord community: https://discord.gg/kG35uSHDBc
- Qodo site: https://www.qodo.ai/
- Blog: https://www.qodo.ai/blog/

315

docs/docs/ai_search/index.md

Normal file

@@ -0,0 +1,315 @@
<div class="search-section">
    <h1>AI Docs Search</h1>
    <p class="search-description">
        Search through our documentation using AI-powered natural language queries.
    </p>
    <div class="search-container">
        <input
            type="text"
            id="searchInput"
            class="search-input"
            placeholder="Enter your search term..."
        >
        <button id="searchButton" class="search-button">Search</button>
    </div>
    <div id="spinner" class="spinner-container" style="display: none;">
        <div class="spinner"></div>
    </div>
    <div id="results" class="results-container"></div>
</div>

<style>
Untitled
.search-section {
    max-width: 800px;
    margin: 0 auto;
    padding: 0 1rem 2rem;
}

h1 {
    color: #666;
    font-size: 2.125rem;
    font-weight: normal;
    margin-bottom: 1rem;
}

.search-description {
    color: #666;
    font-size: 1rem;
    line-height: 1.5;
    margin-bottom: 2rem;
    max-width: 800px;
}

.search-container {
    display: flex;
    gap: 1rem;
    max-width: 800px;
    margin: 0; /* Changed from auto to 0 to align left */
}

.search-input {
    flex: 1;
    padding: 0 0.875rem;
    border: 1px solid #ddd;
    border-radius: 4px;
    font-size: 0.9375rem;
    outline: none;
    height: 40px; /* Explicit height */
}

.search-input:focus {
    border-color: #6c63ff;
}

.search-button {
    padding: 0 1.25rem;
    background-color: #2196F3;
    color: white;
    border: none;
    border-radius: 4px;
    cursor: pointer;
    font-size: 0.875rem;
    transition: background-color 0.2s;
    height: 40px; /* Match the height of search input */
    display: flex;
    align-items: center;
    justify-content: center;
}

.search-button:hover {
    background-color: #1976D2;
}

.spinner-container {
    display: flex;
    justify-content: center;
    margin-top: 2rem;
}

.spinner {
    width: 40px;
    height: 40px;
    border: 4px solid #f3f3f3;
    border-top: 4px solid #2196F3;
    border-radius: 50%;
    animation: spin 1s linear infinite;
}

@keyframes spin {
    0% { transform: rotate(0deg); }
    100% { transform: rotate(360deg); }
}

.results-container {
    margin-top: 2rem;
    max-width: 800px;
}

.result-item {
    padding: 1rem;
    border: 1px solid #ddd;
    border-radius: 4px;
    margin-bottom: 1rem;
}

.result-title {
    font-size: 1.2rem;
    color: #2196F3;
    margin-bottom: 0.5rem;
}

.result-description {
    color: #666;
}

.error-message {
    color: #dc3545;
    padding: 1rem;
    border: 1px solid #dc3545;
    border-radius: 4px;
    margin-top: 1rem;
}

.markdown-content {
    line-height: 1.6;
    color: var(--md-typeset-color);
    background: var(--md-default-bg-color);
    border: 1px solid var(--md-default-fg-color--lightest);
    border-radius: 12px;
    padding: 1.5rem;
    box-shadow: 0 2px 4px rgba(0,0,0,0.05);
    position: relative;
    margin-top: 2rem;
}

.markdown-content::before {
    content: '';
    position: absolute;
    top: -8px;
    left: 24px;
    width: 16px;
    height: 16px;
    background: var(--md-default-bg-color);
    border-left: 1px solid var(--md-default-fg-color--lightest);
    border-top: 1px solid var(--md-default-fg-color--lightest);
    transform: rotate(45deg);
}

.markdown-content > *:first-child {
    margin-top: 0;
    padding-top: 0;
}

.markdown-content p {
    margin-bottom: 1rem;
}

.markdown-content p:last-child {
    margin-bottom: 0;
}

.markdown-content code {
    background: var(--md-code-bg-color);
    color: var(--md-code-fg-color);
    padding: 0.2em 0.4em;
    border-radius: 3px;
    font-size: 0.9em;
    font-family: ui-monospace, SFMono-Regular, SF Mono, Menlo, Consolas, Liberation Mono, monospace;
}

.markdown-content pre {
    background: var(--md-code-bg-color);
    padding: 1rem;
    border-radius: 6px;
    overflow-x: auto;
    margin: 1rem 0;
}

.markdown-content pre code {
    background: none;
    padding: 0;
    font-size: 0.9em;
}

[data-md-color-scheme="slate"] .markdown-content {
    box-shadow: 0 2px 4px rgba(0,0,0,0.1);
}

</style>

<script src="https://cdnjs.cloudflare.com/ajax/libs/marked/9.1.6/marked.min.js"></script>

<script>
window.addEventListener('load', function() {
    function displayResults(responseText) {
        const resultsContainer = document.getElementById('results');
        const spinner = document.getElementById('spinner');
        const searchContainer = document.querySelector('.search-container');

        // Hide spinner
        spinner.style.display = 'none';

        // Scroll to search bar
        searchContainer.scrollIntoView({ behavior: 'smooth', block: 'start' });

        try {
            const results = JSON.parse(responseText);

            marked.setOptions({
                breaks: true,
                gfm: true,
                headerIds: false,
                sanitize: false
            });

            const htmlContent = marked.parse(results.message);

            resultsContainer.className = 'markdown-content';
            resultsContainer.innerHTML = htmlContent;

            // Scroll after content is rendered
            setTimeout(() => {
                const searchContainer = document.querySelector('.search-container');
                const offset = 55; // Offset from top in pixels
                const elementPosition = searchContainer.getBoundingClientRect().top;
                const offsetPosition = elementPosition + window.pageYOffset - offset;

                window.scrollTo({
                    top: offsetPosition,
                    behavior: 'smooth'
                });
            }, 100);
        } catch (error) {
            console.error('Error parsing results:', error);
            resultsContainer.innerHTML = '<div class="error-message">Error processing results</div>';
        }
    }

    async function performSearch() {
        const searchInput = document.getElementById('searchInput');
        const resultsContainer = document.getElementById('results');
        const spinner = document.getElementById('spinner');
        const searchTerm = searchInput.value.trim();

        if (!searchTerm) {
            resultsContainer.innerHTML = '<div class="error-message">Please enter a search term</div>';
            return;
        }

        // Show spinner, clear results
        spinner.style.display = 'flex';
        resultsContainer.innerHTML = '';

        try {
            const data = {
                "query": searchTerm
            };

            const options = {
                method: 'POST',
                headers: {
                    'accept': 'text/plain',
                    'content-type': 'application/json',
                },
                body: JSON.stringify(data)
            };

            // const API_ENDPOINT = 'http://0.0.0.0:3000/api/v1/docs_help';

|

||||

const API_ENDPOINT = 'https://help.merge.qodo.ai/api/v1/docs_help';

|

||||

|

||||

const response = await fetch(API_ENDPOINT, options);

|

||||

|

||||

if (!response.ok) {

|

||||

throw new Error(`HTTP error! status: ${response.status}`);

|

||||

}

|

||||

|

||||

const responseText = await response.text();

|

||||

displayResults(responseText);

|

||||

} catch (error) {

|

||||

spinner.style.display = 'none';

|

||||

resultsContainer.innerHTML = `

|

||||

<div class="error-message">

|

||||

An error occurred while searching. Please try again later.

|

||||

</div>

|

||||

`;

|

||||

}

|

||||

}

|

||||

|

||||

// Add event listeners

|

||||

const searchButton = document.getElementById('searchButton');

|

||||

const searchInput = document.getElementById('searchInput');

|

||||

|

||||

if (searchButton) {

|

||||

searchButton.addEventListener('click', performSearch);

|

||||

}

|

||||

|

||||

if (searchInput) {

|

||||

searchInput.addEventListener('keypress', function(e) {

|

||||

if (e.key === 'Enter') {

|

||||

performSearch();

|

||||

}

|

||||

});

|

||||

}

|

||||

});

|

||||

</script>

|

||||

|


@ -2,7 +2,7 @@

|

||||

|

||||

With a single-click installation you will gain access to a context-aware chat on your pull requests code, a toolbar extension with multiple AI feedbacks, Qodo Merge filters, and additional abilities.

|

||||

|

||||

The extension is powered by top code models like Claude 3.5 Sonnet and GPT4. All the extension's features are free to use on public repositories.

|

||||

The extension is powered by top code models like Claude 3.7 Sonnet and o3-mini. All the extension's features are free to use on public repositories.

|

||||

|

||||

For private repositories, you will need to install [Qodo Merge](https://github.com/apps/qodo-merge-pro){:target="_blank"} in addition to the extension (Quick GitHub app setup with a 14-day free trial. No credit card needed).

|

||||

For a demonstration of how to install Qodo Merge and use it with the Chrome extension, please refer to the tutorial video at the provided [link](https://codium.ai/images/pr_agent/private_repos.mp4){:target="_blank"}.

|

||||

|

||||

@ -1,2 +0,0 @@

|

||||

## Overview

|

||||

TBD

|

||||

57

docs/docs/core-abilities/company_codebase.md

Normal file

@ -0,0 +1,57 @@

|

||||

# Company Codebase 💎

|

||||

`Supported Git Platforms: GitHub`

|

||||

|

||||

|

||||

## Overview

|

||||

|

||||

### What is Company Codebase?

|

||||

|

||||

An organized, semantic database that aggregates all your company’s source code into one searchable repository, enabling efficient code discovery and analysis.

|

||||

|

||||

### How does Company Codebase work?

|

||||

|

||||

By indexing your company's code and using Retrieval-Augmented Generation (RAG), it retrieves contextual code segments on demand, improving pull request (PR) insights and accelerating review accuracy.

|

||||

|

||||

|

||||

## Getting started

|

||||

|

||||

!!! info "Prerequisites"

|

||||

- Database setup and codebase indexing must be completed before proceeding. [Contact support](https://www.qodo.ai/contact/) for assistance.

|

||||

|

||||

### Configuration options

|

||||

|

||||

In order to enable the RAG feature, add the following lines to your configuration file:

|

||||

``` toml

|

||||

[rag_arguments]

|

||||

enable_rag=true

|

||||

```

|

||||

|

||||

!!! example "RAG Arguments Options"

|

||||

|

||||

<table>

|

||||

<tr>

|

||||

<td><b>enable_rag</b></td>

|

||||

<td>If set to true, codebase enrichment using RAG will be enabled. Default is false.</td>

|

||||

</tr>

|

||||

<tr>

|

||||

<td><b>rag_repo_list</b></td>

|

||||

<td>A list of repositories that will be used by the semantic search for RAG. Use `['all']` to consider the entire codebase, or a select list of repositories, for example: ['my-org/my-repo', ...]. Default: the repository from which the PR was opened.</td>

|

||||

</tr>

|

||||

</table>
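The three cases described for `rag_repo_list` can be sketched as a small function. This is only an illustration of the documented behavior, not Qodo Merge's implementation: the function name `resolve_rag_repos` and the `indexed_repos` argument (the set of repositories assumed to be already indexed) are hypothetical.

```python
def resolve_rag_repos(rag_repo_list, pr_repo, indexed_repos):
    """Return the repositories a semantic search would cover (illustrative only)."""
    if not rag_repo_list:
        # Option not set: default to the repository the PR was opened from.
        return [pr_repo]
    if list(rag_repo_list) == ["all"]:
        # ['all'] means the entire indexed codebase.
        return sorted(indexed_repos)
    # Otherwise use the explicitly selected repositories, in the given order.
    return list(rag_repo_list)


indexed = {"my-org/my-repo", "my-org/shared-lib", "my-org/infra"}
print(resolve_rag_repos(None, "my-org/my-repo", indexed))
print(resolve_rag_repos(["all"], "my-org/my-repo", indexed))
print(resolve_rag_repos(["my-org/shared-lib"], "my-org/my-repo", indexed))
```

Keeping the default scoped to the PR repository matches the recommendation to avoid searching the whole codebase unless it is needed.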

|

||||

|

||||

|

||||

References from the codebase will be shown in a collapsible bookmark, allowing you to easily access relevant code snippets:

|

||||

|

||||

{width=640}

|

||||

|

||||

## Limitations

|

||||

|

||||

### Querying the codebase presents significant challenges:

|

||||

- **Search Method**: RAG uses natural language queries to find semantically relevant code sections

|

||||

- **Result Quality**: No guarantee that RAG results will be useful for all queries

|

||||

- **Scope Recommendation**: To reduce noise, avoid using the whole codebase; focus on the PR repository instead

|

||||

|

||||

### This feature has several requirements and restrictions:

|

||||

- **Codebase**: Must be properly indexed for search functionality

|

||||

- **Security**: Requires secure and private indexed codebase implementation

|

||||

- **Deployment**: Only available for the Qodo Merge Enterprise plan using a single-tenant or on-premises setup

|

||||

@ -2,14 +2,15 @@

|

||||

`Supported Git Platforms: GitHub, GitLab, Bitbucket`

|

||||

|

||||

## Overview

|

||||

Qodo Merge PR Agent streamlines code review workflows by seamlessly connecting with multiple ticket management systems.

|

||||

Qodo Merge streamlines code review workflows by seamlessly connecting with multiple ticket management systems.

|

||||

This integration enriches the review process by automatically surfacing relevant ticket information and context alongside code changes.

|

||||

|

||||

## Ticket systems supported

|

||||

**Ticket systems supported**:

|

||||

|

||||

- GitHub

|

||||

- Jira (💎)

|

||||

|

||||

Ticket data fetched:

|

||||

**Ticket data fetched:**

|

||||

|

||||

1. Ticket Title

|

||||

2. Ticket Description

|

||||

@ -26,7 +27,7 @@ Ticket Recognition Requirements:

|

||||

- For Jira tickets, you should follow the instructions in [Jira Integration](https://qodo-merge-docs.qodo.ai/core-abilities/fetching_ticket_context/#jira-integration) in order to authenticate with Jira.

|

||||

|

||||

### Describe tool

|

||||

Qodo Merge PR Agent will recognize the ticket and use the ticket content (title, description, labels) to provide additional context for the code changes.

|

||||

Qodo Merge will recognize the ticket and use the ticket content (title, description, labels) to provide additional context for the code changes.

|

||||

By understanding the reasoning and intent behind modifications, the LLM can offer more insightful and relevant code analysis.

|

||||

|

||||

### Review tool

|

||||

@ -46,41 +47,22 @@ If you want to disable this feedback, add the following line to your configurati

|

||||

require_ticket_analysis_review=false

|

||||

```

|

||||

|

||||

## Providers

|

||||

## GitHub Issues Integration

|

||||

|

||||

### Github Issues Integration

|

||||

|

||||

Qodo Merge PR Agent will automatically recognize Github issues mentioned in the PR description and fetch the issue content.

|

||||

Qodo Merge will automatically recognize GitHub issues mentioned in the PR description and fetch the issue content.

|

||||

Examples of valid GitHub issue references:

|

||||

|

||||

- `https://github.com/<ORG_NAME>/<REPO_NAME>/issues/<ISSUE_NUMBER>`

|

||||

- `#<ISSUE_NUMBER>`

|

||||

- `<ORG_NAME>/<REPO_NAME>#<ISSUE_NUMBER>`

|

||||

|

||||

Since Qodo Merge PR Agent is integrated with GitHub, it doesn't require any additional configuration to fetch GitHub issues.

|

||||

Since Qodo Merge is integrated with GitHub, it doesn't require any additional configuration to fetch GitHub issues.
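To make the three reference formats listed above concrete, here is a minimal sketch of how they could be recognized in a PR description. The regular expressions and the function `find_issue_refs` are assumptions made for illustration; they are not taken from Qodo Merge's source and may be stricter or looser than the real matching.

```python
import re

# Hypothetical patterns for the three documented reference styles.
FULL_URL = re.compile(r"https://github\.com/([\w.-]+)/([\w.-]+)/issues/(\d+)")
CROSS_REPO = re.compile(r"(?<![\w/.-])([\w.-]+)/([\w.-]+)#(\d+)")
SHORT = re.compile(r"(?<![\w/])#(\d+)")


def find_issue_refs(description, default_org, default_repo):
    """Return a sorted list of unique (org, repo, issue_number) tuples."""
    refs = set()
    for org, repo, num in FULL_URL.findall(description):
        refs.add((org, repo, int(num)))
    # Blank out matched URLs so the shorter patterns do not match inside them.
    remaining = FULL_URL.sub(" ", description)
    for org, repo, num in CROSS_REPO.findall(remaining):
        refs.add((org, repo, int(num)))
    remaining = CROSS_REPO.sub(" ", remaining)
    # A bare "#<ISSUE_NUMBER>" refers to the repository the PR belongs to.
    for num in SHORT.findall(remaining):
        refs.add((default_org, default_repo, int(num)))
    return sorted(refs)


text = "Fixes #12, relates to acme/widgets#7 and https://github.com/acme/tools/issues/3"
print(find_issue_refs(text, "my-org", "my-repo"))
```

Matching the longest format first and removing each match before trying the next avoids counting one reference twice.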

|

||||

|

||||

### Jira Integration 💎

|

||||

## Jira Integration 💎

|

||||

|

||||

We support both Jira Cloud and Jira Server/Data Center.

|

||||

To integrate with Jira, you can link your PR to a ticket using either of these methods:

|

||||

|

||||

**Method 1: Description Reference:**

|

||||

|

||||

Include a ticket reference in your PR description using either the complete URL format https://<JIRA_ORG>.atlassian.net/browse/ISSUE-123 or the shortened ticket ID ISSUE-123.

|

||||

|

||||

**Method 2: Branch Name Detection:**

|

||||

|

||||

Name your branch with the ticket ID as a prefix (e.g., `ISSUE-123-feature-description` or `ISSUE-123/feature-description`).

|

||||

|

||||

!!! note "Jira Base URL"

|

||||

For shortened ticket IDs or branch detection (method 2), you must configure the Jira base URL in your configuration file under the [jira] section:

|

||||

|

||||

```toml

|

||||

[jira]

|

||||

jira_base_url = "https://<JIRA_ORG>.atlassian.net"

|

||||

```

|

||||

|

||||

#### Jira Cloud 💎

|

||||

### Jira Cloud

|

||||

There are two ways to authenticate with Jira Cloud:

|

||||

|

||||

**1) Jira App Authentication**

|

||||

@ -95,7 +77,7 @@ Installation steps:

|

||||

2. After installing the app, you will be redirected to the Qodo Merge registration page, and you will see a success message.<br>

|

||||

{width=384}

|

||||

|

||||

3. Now you can use the Jira integration in Qodo Merge PR Agent.

|

||||

3. Now Qodo Merge will be able to fetch Jira ticket context for your PRs.

|

||||

|

||||

**2) Email/Token Authentication**

|

||||

|

||||

@ -120,45 +102,70 @@ jira_api_email = "YOUR_EMAIL"

|

||||

```

|

||||

|

||||

|

||||

#### Jira Data Center/Server 💎

|

||||

### Jira Data Center/Server

|

||||

|

||||

##### Local App Authentication (For Qodo Merge On-Premise Customers)

|

||||

[//]: # ()

|

||||

[//]: # (##### Local App Authentication (For Qodo Merge On-Premise Customers))

|

||||

|

||||

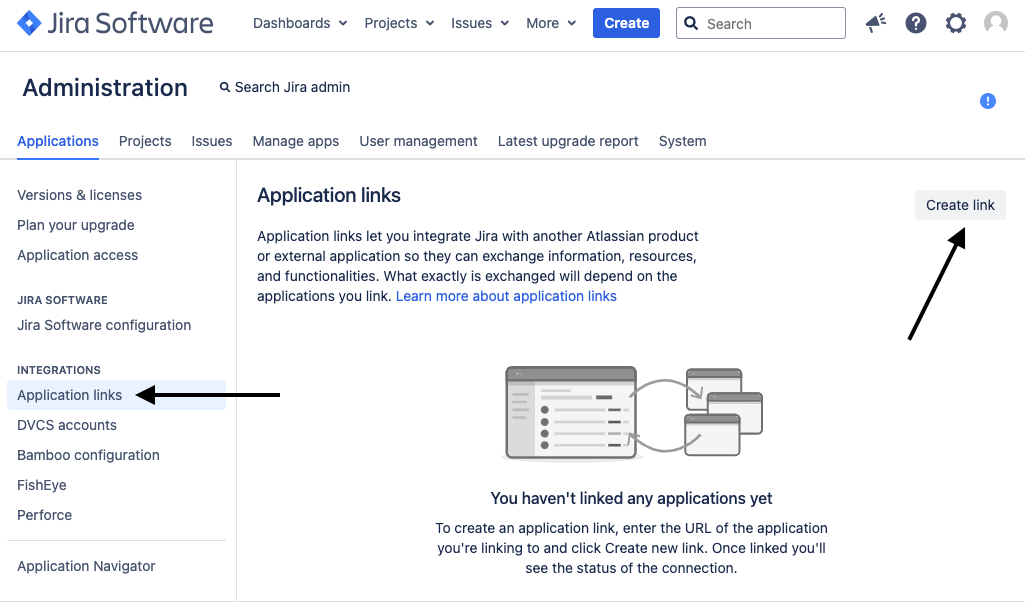

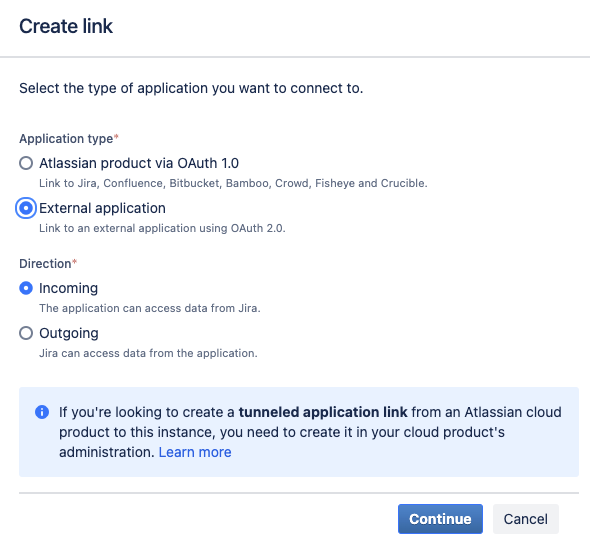

##### 1. Step 1: Set up an application link in Jira Data Center/Server

|

||||

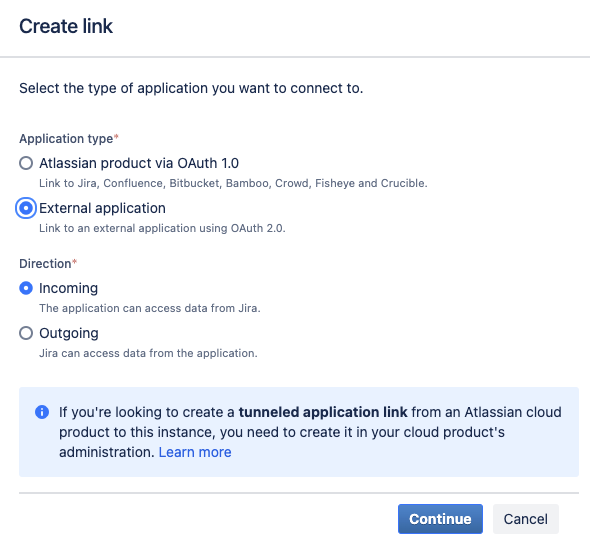

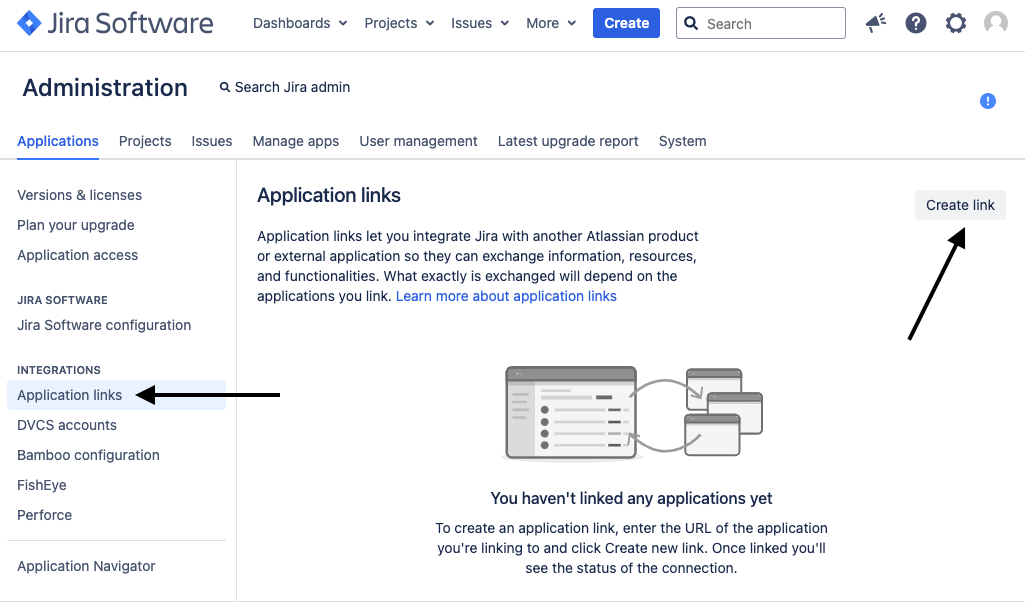

* Go to Jira Administration > Applications > Application Links > Click on `Create link`

|

||||

[//]: # ()

|

||||

[//]: # (##### 1. Step 1: Set up an application link in Jira Data Center/Server)

|

||||

|

||||

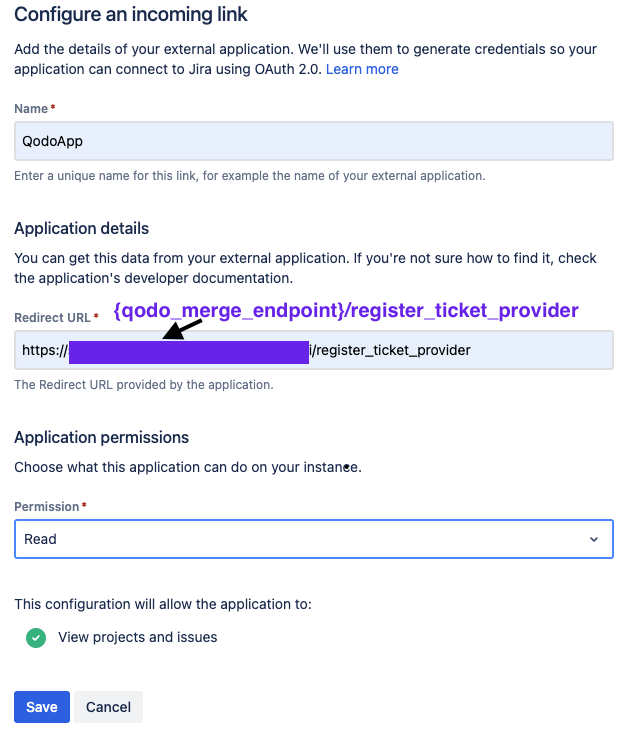

{width=384}

|

||||

* Choose `External application` and set the direction to `Incoming` and then click `Continue`

|

||||

[//]: # (* Go to Jira Administration > Applications > Application Links > Click on `Create link`)

|

||||

|

||||

{width=256}

|

||||

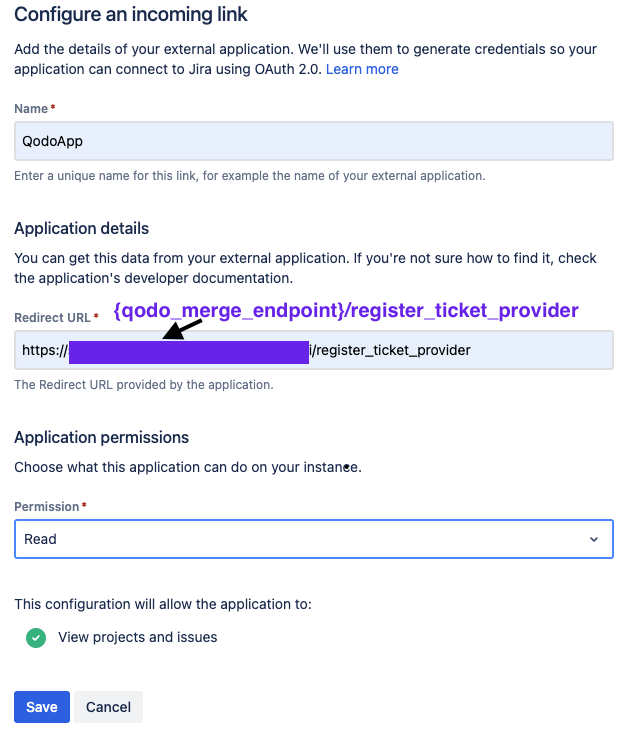

* In the following screen, enter the following details:

|

||||

* Name: `Qodo Merge`

|

||||

* Redirect URL: Enter your Qodo Merge URL followed `https://{QODO_MERGE_ENDPOINT}/register_ticket_provider`

|

||||

* Permission: Select `Read`

|

||||

* Click `Save`

|

||||

[//]: # ()

|

||||

[//]: # ({width=384})

|

||||

|

||||

{width=384}

|

||||

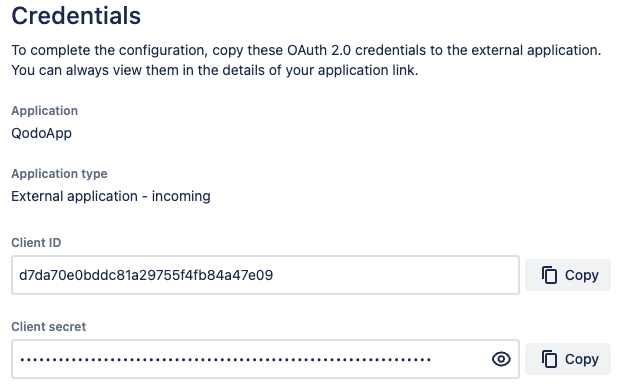

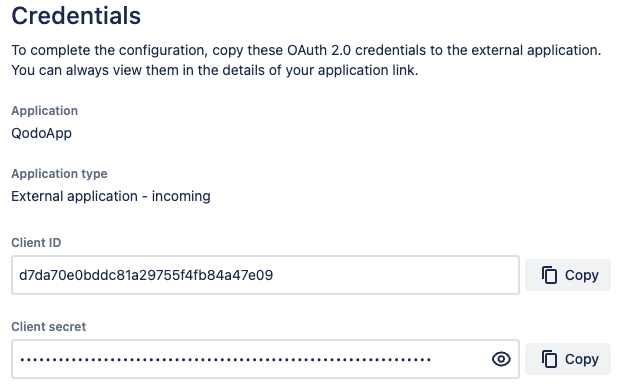

* Copy the `Client ID` and `Client secret` and set them in your `.secrets` file:

|

||||

[//]: # (* Choose `External application` and set the direction to `Incoming` and then click `Continue`)

|

||||

|

||||

{width=256}

|

||||

```toml

|

||||

[jira]

|

||||

jira_app_secret = "..."

|

||||

jira_client_id = "..."

|

||||

```

|

||||

[//]: # ()

|

||||

[//]: # ({width=256})

|

||||

|

||||

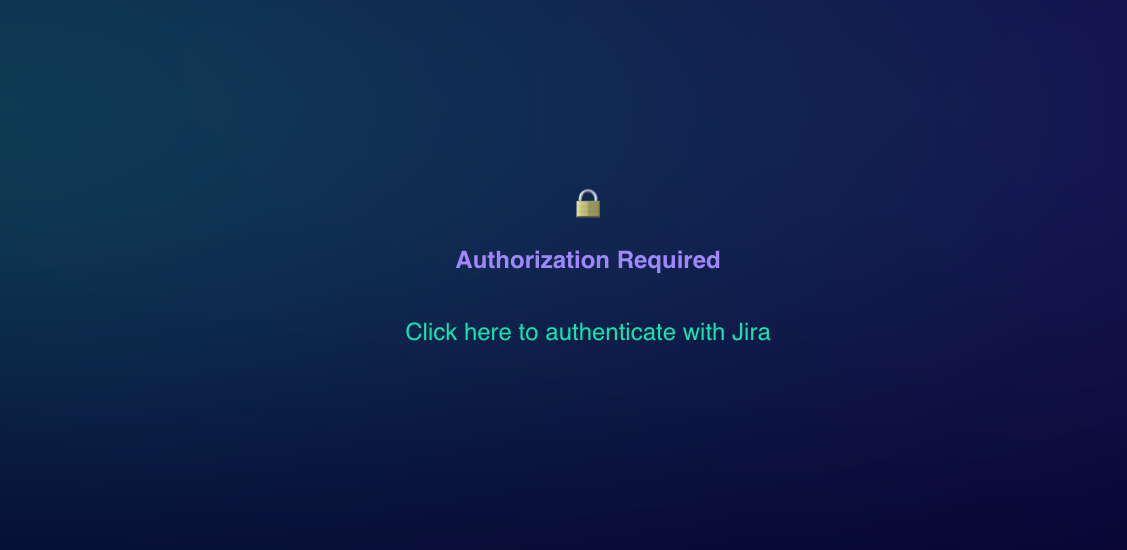

##### 2. Step 2: Authenticate with Jira Data Center/Server

|

||||

* Open this URL in your browser: `https://{QODO_MERGE_ENDPOINT}/jira_auth`

|

||||

* Click on link

|

||||

[//]: # (* In the following screen, enter the following details:)

|

||||

|

||||

{width=384}

|

||||

[//]: # ( * Name: `Qodo Merge`)

|

||||

|

||||

* You will be redirected to Jira Data Center/Server, click `Allow`

|

||||

* You will be redirected back to Qodo Merge PR Agent and you will see a success message.

|

||||

[//]: # ( * Redirect URL: Enter your Qodo Merge URL followed `https://{QODO_MERGE_ENDPOINT}/register_ticket_provider`)

|

||||

|

||||

[//]: # ( * Permission: Select `Read`)

|

||||

|

||||

[//]: # ( * Click `Save`)

|

||||

|

||||

[//]: # ()

|

||||

[//]: # ({width=384})

|

||||

|

||||

[//]: # (* Copy the `Client ID` and `Client secret` and set them in your `.secrets` file:)

|

||||

|

||||

[//]: # ()

|

||||

[//]: # ({width=256})

|

||||

|

||||

[//]: # (```toml)

|

||||

|

||||

[//]: # ([jira])

|

||||

|

||||

[//]: # (jira_app_secret = "...")

|

||||

|

||||

[//]: # (jira_client_id = "...")

|

||||

|

||||

[//]: # (```)

|

||||

|

||||

[//]: # ()

|

||||

[//]: # (##### 2. Step 2: Authenticate with Jira Data Center/Server)

|

||||

|

||||

[//]: # (* Open this URL in your browser: `https://{QODO_MERGE_ENDPOINT}/jira_auth`)

|

||||

|

||||

[//]: # (* Click on link)

|

||||

|

||||

[//]: # ()

|

||||

[//]: # ({width=384})

|

||||

|

||||

[//]: # ()

|

||||

[//]: # (* You will be redirected to Jira Data Center/Server, click `Allow`)

|

||||

|

||||

[//]: # (* You will be redirected back to Qodo Merge and you will see a success message.)

|

||||

|

||||

|

||||

##### Personal Access Token (PAT) Authentication

|

||||

We also support Personal Access Token (PAT) Authentication method.

|

||||

[//]: # (Personal Access Token (PAT) Authentication)

|

||||

Currently, Jira integration for Data Center/Server is available via the Personal Access Token (PAT) authentication method.

|

||||

|

||||

1. Create a [Personal Access Token (PAT)](https://confluence.atlassian.com/enterprise/using-personal-access-tokens-1026032365.html) in your Jira account

|

||||

2. In your Configuration file/Environment variables/Secrets file, add the following lines:

|

||||

@ -168,3 +175,23 @@ We also support Personal Access Token (PAT) Authentication method.

|

||||

jira_base_url = "YOUR_JIRA_BASE_URL" # e.g. https://jira.example.com

|

||||

jira_api_token = "YOUR_API_TOKEN"

|

||||

```

|

||||

|

||||

### How to link a PR to a Jira ticket

|

||||

|

||||

To integrate with Jira, you can link your PR to a ticket using either of these methods:

|

||||

|

||||

**Method 1: Description Reference:**

|

||||

|

||||

Include a ticket reference in your PR description using either the complete URL format https://<JIRA_ORG>.atlassian.net/browse/ISSUE-123 or the shortened ticket ID ISSUE-123.

|

||||

|

||||

**Method 2: Branch Name Detection:**

|

||||

|

||||

Name your branch with the ticket ID as a prefix (e.g., `ISSUE-123-feature-description` or `ISSUE-123/feature-description`).

|

||||

|

||||

!!! note "Jira Base URL"

|

||||

For shortened ticket IDs or branch detection (method 2 for Jira Cloud), you must configure the Jira base URL in your configuration file under the [jira] section:

|

||||

|

||||

```toml

|

||||

[jira]

|

||||

jira_base_url = "https://<JIRA_ORG>.atlassian.net"

|

||||

```
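The two linking methods can be illustrated with a short sketch. It assumes ticket keys have the shape shown in the examples (an uppercase project key, a hyphen, then digits); the helper names `ticket_from_branch`, `tickets_from_description`, and `ticket_url` are hypothetical and do not describe Qodo Merge's internal logic.

```python
import re

# Assumed key shape, e.g. ISSUE-123: uppercase project key, hyphen, digits.
KEY = r"[A-Z][A-Z0-9]+-\d+"


def ticket_from_branch(branch):
    """Method 2: return the ticket key if the branch name starts with one."""
    m = re.match(rf"({KEY})(?=$|[-/_])", branch)
    return m.group(1) if m else None


def tickets_from_description(text):
    """Method 1: return unique ticket keys (from URLs or short IDs) in order."""
    seen = []
    for key in re.findall(rf"\b{KEY}\b", text):
        if key not in seen:
            seen.append(key)
    return seen


def ticket_url(jira_base_url, key):
    """Build the browse URL for a short ticket ID from the configured base URL."""
    return f"{jira_base_url.rstrip('/')}/browse/{key}"


print(ticket_from_branch("ISSUE-123-feature-description"))
print(ticket_from_branch("ISSUE-123/feature-description"))
print(tickets_from_description("Implements ISSUE-123, see also PROJ-9"))
print(ticket_url("https://acme.atlassian.net", "ISSUE-123"))
```

The last helper shows why the base URL is needed: a short ID or a branch prefix carries only the ticket key, so the host has to come from configuration.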

|

||||

|

||||

@ -9,7 +9,7 @@ Qodo Merge utilizes a variety of core abilities to provide a comprehensive and e

|

||||

- [Impact evaluation](https://qodo-merge-docs.qodo.ai/core-abilities/impact_evaluation/)

|

||||

- [Interactivity](https://qodo-merge-docs.qodo.ai/core-abilities/interactivity/)

|

||||

- [Compression strategy](https://qodo-merge-docs.qodo.ai/core-abilities/compression_strategy/)

|

||||

- [Code-oriented YAML](https://qodo-merge-docs.qodo.ai/core-abilities/code_oriented_yaml/)

|

||||

- [Company Codebase](https://qodo-merge-docs.qodo.ai/core-abilities/company_codebase/)

|

||||

- [Static code analysis](https://qodo-merge-docs.qodo.ai/core-abilities/static_code_analysis/)

|

||||

- [Code fine-tuning benchmark](https://qodo-merge-docs.qodo.ai/finetuning_benchmark/)

|

||||

|

||||

|

||||

@ -1,5 +1,5 @@

|

||||

## Local and global metadata injection with multi-stage analysis

|

||||

(1)

|

||||

1\.

|

||||

Qodo Merge initially retrieves for each PR the following data:

|

||||

|

||||

- PR title and branch name

|

||||

@ -11,7 +11,7 @@ Qodo Merge initially retrieves for each PR the following data:

|

||||

!!! tip "Tip: Organization-level metadata"

|

||||

In addition to the inputs above, Qodo Merge can incorporate supplementary preferences provided by the user, like [`extra_instructions` and `organization best practices`](https://qodo-merge-docs.qodo.ai/tools/improve/#extra-instructions-and-best-practices). This information can be used to enhance the PR analysis.

|

||||

|

||||

(2)

|

||||

2\.

|

||||

By default, the first command that Qodo Merge executes is [`describe`](https://qodo-merge-docs.qodo.ai/tools/describe/), which generates three types of outputs:

|

||||

|

||||

- PR Type (e.g. bug fix, feature, refactor, etc)

|

||||

@ -49,8 +49,8 @@ __old hunk__

|

||||

...

|

||||

```

|

||||

|

||||

(3) The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)).

|

||||

3\. The entire PR files that were retrieved are also used to expand and enhance the PR context (see [Dynamic Context](https://qodo-merge-docs.qodo.ai/core-abilities/dynamic_context/)).

|

||||

|

||||

|

||||

(4) All the metadata described above represents several level of cumulative analysis - ranging from hunk level, to file level, to PR level, to organization level.

|

||||

4\. All the metadata described above represents several levels of cumulative analysis - ranging from hunk level, to file level, to PR level, to organization level.

|

||||

This comprehensive approach enables Qodo Merge AI models to generate more precise and contextually relevant suggestions and feedback.

|

||||

|

||||

@ -26,7 +26,7 @@ ___

|

||||

|

||||

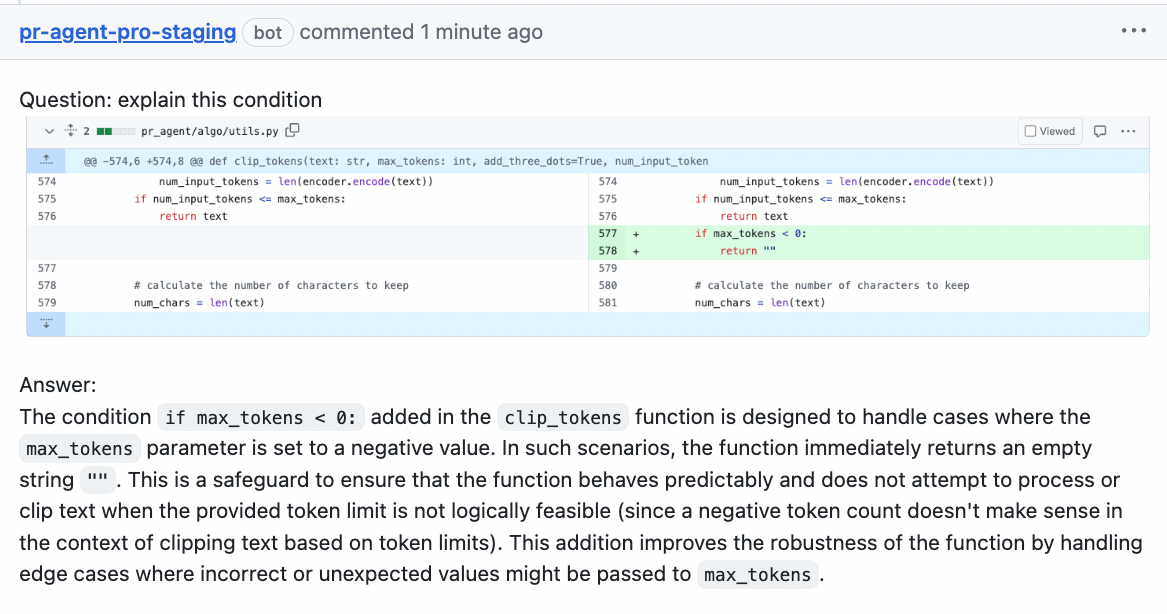

#### Answer:<span style="display:none;">2</span>

|

||||

|

||||

- Modern AI models, like Claude 3.5 Sonnet and GPT-4, are improving rapidly but remain imperfect. Users should critically evaluate all suggestions rather than accepting them automatically.

|

||||

- Modern AI models, like Claude Sonnet and GPT-4, are improving rapidly but remain imperfect. Users should critically evaluate all suggestions rather than accepting them automatically.

|

||||

- AI errors are rare, but possible. A main value from reviewing the code suggestions lies in their high probability of catching **mistakes or bugs made by the PR author**. We believe it's worth spending 30-60 seconds reviewing suggestions, even if some aren't relevant, as this practice can enhance code quality and prevent bugs in production.

|

||||

|

||||

|

||||

|

||||

@ -1,6 +1,6 @@

|

||||

# Qodo Merge Code Fine-tuning Benchmark

|

||||

|

||||

On coding tasks, the gap between open-source models and top closed-source models such as GPT4 is significant.

|

||||

On coding tasks, the gap between open-source models and top closed-source models such as GPT-4o is significant.

|

||||

<br>

|

||||

In practice, open-source models are unsuitable for most real-world code tasks, and require further fine-tuning to produce acceptable results.

|

||||

|

||||

@ -68,7 +68,7 @@ Here are the prompts, and example outputs, used as input-output pairs to fine-tu

|

||||

|

||||

### Evaluation dataset

|

||||

|

||||

- For each tool, we aggregated 100 additional examples to be used for evaluation. These examples were not used in the training dataset, and were manually selected to represent diverse real-world use-cases.

|

||||

- For each tool, we aggregated 200 additional examples to be used for evaluation. These examples were not used in the training dataset, and were manually selected to represent diverse real-world use-cases.

|

||||

- For each test example, we generated two responses: one from the fine-tuned model, and one from the best code model in the world, `gpt-4-turbo-2024-04-09`.

|

||||

|

||||

- We used a third LLM to judge which response better answers the prompt, and will likely be perceived by a human as a better response.

|

||||

|

||||

@ -28,34 +28,34 @@ Qodo Merge offers extensive pull request functionalities across various git prov

|

||||

|

||||

| | | GitHub | Gitlab | Bitbucket | Azure DevOps |

|

||||

|-------|-----------------------------------------------------------------------------------------------------------------------|:------:|:------:|:---------:|:------------:|

|

||||

| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Incremental | ✅ | | | |

|

||||

| | Ask | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Describe | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ [Inline file summary](https://qodo-merge-docs.qodo.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | Improve | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Custom Prompt](./tools/custom_prompt.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Update CHANGELOG.md | ✅ | ✅ | ✅ | ️ |

|

||||

| | Find Similar Issue | ✅ | | | ️ |

|

||||

| | [Add PR Documentation](./tools/documentation.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Generate Custom Labels](./tools/describe.md#handle-custom-labels-from-the-repos-labels-page-💎){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Analyze PR Components](./tools/analyze.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Test](https://pr-agent-docs.codium.ai/tools/test/) 💎 | ✅ | ✅ | | |

|

||||

| | [Implement](https://pr-agent-docs.codium.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | | | | | ️ |

|

||||

| USAGE | CLI | ✅ | ✅ | ✅ | ✅ |

|

||||

| | App / webhook | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Actions | ✅ | | | ️ |

|

||||

| | | | | |

|

||||

| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Multiple models support | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Incremental PR review | ✅ | | | |

|

||||

| | [Static code analysis](./tools/analyze.md/){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Multiple configuration options](./usage-guide/configuration_options.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| TOOLS | Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Incremental | ✅ | | | |

|

||||

| | Ask | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Describe | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ [Inline file summary](https://qodo-merge-docs.qodo.ai/tools/describe/#inline-file-summary){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | Improve | ✅ | ✅ | ✅ | ✅ |

|

||||

| | ⮑ Extended | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Auto-Approve](https://qodo-merge-docs.qodo.ai/tools/improve/#auto-approval) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | [Custom Prompt](./tools/custom_prompt.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Reflect and Review | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Update CHANGELOG.md | ✅ | ✅ | ✅ | ️ |

|

||||

| | Find Similar Issue | ✅ | | | ️ |

|

||||

| | [Add PR Documentation](./tools/documentation.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Generate Custom Labels](./tools/describe.md#handle-custom-labels-from-the-repos-labels-page-💎){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Analyze PR Components](./tools/analyze.md){:target="_blank"} 💎 | ✅ | ✅ | | ✅ |

|

||||

| | [Test](https://pr-agent-docs.codium.ai/tools/test/) 💎 | ✅ | ✅ | | |

|

||||

| | [Implement](https://pr-agent-docs.codium.ai/tools/implement/) 💎 | ✅ | ✅ | ✅ | |

|

||||

| | | | | | ️ |

|

||||

| USAGE | CLI | ✅ | ✅ | ✅ | ✅ |

|

||||

| | App / webhook | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Actions | ✅ | | | ️ |

|

||||

| | | | | |

|

||||

| CORE | PR compression | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Repo language prioritization | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Adaptive and token-aware file patch fitting | ✅ | ✅ | ✅ | ✅ |

|

||||

| | Multiple models support | ✅ | ✅ | ✅ | ✅ |

|

||||

| | [Static code analysis](./core-abilities/static_code_analysis/){:target="_blank"} 💎 | ✅ | ✅ | | |

|

||||

| | [Multiple configuration options](./usage-guide/configuration_options.md){:target="_blank"} 💎 | ✅ | ✅ | ✅ | ✅ |

|

||||

|

||||

💎 marks a feature available only in [Qodo Merge](https://www.codium.ai/pricing/){:target="_blank"}, and not in the open-source version.

|

||||

|

||||

|

||||

@ -1,7 +1,7 @@

|

||||

## Run as a Bitbucket Pipeline

|

||||

|

||||

|

||||

You can use the Bitbucket Pipeline system to run Qodo Merge on every pull request open or update.

|

||||

You can use the Bitbucket Pipeline system to run PR-Agent on every pull request open or update.

|

||||

|

||||

1. Add the following file in your repository bitbucket-pipelines.yml

|

||||

|

||||

@ -11,7 +11,7 @@ pipelines:

|

||||

'**':

|

||||

- step:

|

||||

name: PR Agent Review

|

||||

image: python:3.10

|

||||

image: python:3.12

|

||||

services:

|

||||

- docker

|

||||

script:

|

||||

@ -54,7 +54,7 @@ python cli.py --pr_url https://git.onpreminstanceofbitbucket.com/projects/PROJEC

|

||||

|

||||

### Run it as service

|

||||

|

||||

To run Qodo Merge as webhook, build the docker image:

|

||||

To run PR-Agent as webhook, build the docker image:

|

||||

```

|

||||

docker build . -t codiumai/pr-agent:bitbucket_server_webhook --target bitbucket_server_webhook -f docker/Dockerfile

|

||||

docker push codiumai/pr-agent:bitbucket_server_webhook # Push to your Docker repository

|

||||

|

||||

@ -43,36 +43,47 @@ Note that if your base branches are not protected, don't set the variables as `p

|

||||

|

||||

## Run a GitLab webhook server

|

||||

|

||||

1. From the GitLab workspace or group, create an access token with "Reporter" role ("Developer" if using Pro version of the agent) and "api" scope.

|

||||

1. In GitLab, create a new user and give it the "Reporter" role ("Developer" if using the Pro version of the agent) for the intended group or project.

|

||||

|

||||

2. Generate a random secret for your app, and save it for later. For example, you can use:

|

||||

2. For the user from step 1, generate a `personal_access_token` with `api` access.

|

||||

|

||||

3. Generate a random secret for your app, and save it for later (`shared_secret`). For example, you can use:

|

||||

|

||||

```

|

||||

WEBHOOK_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

SHARED_SECRET=$(python -c "import secrets; print(secrets.token_hex(10))")

|

||||

```

|

||||

|

||||

3. Clone this repository:

|

||||

4. Clone this repository:

|

||||

|

||||

```

|

||||

git clone https://github.com/Codium-ai/pr-agent.git

|

||||

git clone https://github.com/qodo-ai/pr-agent.git

|

||||

```

|

||||

|

||||

4. Prepare variables and secrets. Skip this step if you plan on settings these as environment variables when running the agent:

|

||||

5. Prepare variables and secrets. Skip this step if you plan on setting these as environment variables when running the agent:

|

||||

1. In the configuration file/variables:

|

||||

- Set `deployment_type` to "gitlab"

|

||||

- Set `config.git_provider` to "gitlab"

|

||||

|

||||

2. In the secrets file/variables:

|

||||

- Set your AI model key in the respective section

|

||||

- In the [gitlab] section, set `personal_access_token` (with token from step 1) and `shared_secret` (with secret from step 2)

|

||||

- In the [gitlab] section, set `personal_access_token` (with token from step 2) and `shared_secret` (with secret from step 3)

|

||||

|

||||

|

||||

5. Build a Docker image for the app and optionally push it to a Docker repository. We'll use Dockerhub as an example:

|

||||